2024-04-04 13:40:11

Using the Arc Browser is one of the strongest possible signals today that the user sees nothing of value, or worth respecting, in the open Internet. A tool based on stripping everything it finds to give you an expensive answer that's probably worse than what a normal web search would have given ya. An egocentric product that sees everything outside of the user as mere content.

2024-06-04 09:07:09

This https://arxiv.org/abs/2405.15461 has been replaced.

initial toot: https://mastoxiv.page/@arXiv_csCE_…

2024-03-28 21:34:14

2024 Dallas Cowboys Mock Draft: Fixing Problem Areas https://www.yardbarker.com/nfl/articles/2024_dallas_cowboys_mock_draft_fixing_problem_areas/s1_16880_40169173

2024-04-01 05:41:53

#Turkey’s main opposition party retained its control over key cities and made huge gains elsewhere in Sunday’s #local #elections, in a major upset to President Recep Tayyip Erdogan, who had set his sights on retaking control of those ur…

2024-06-03 07:33:33

Dimension formulas for period spaces via motives and species

Annette Huber, Martin Kalck

https://arxiv.org/abs/2405.21053 https://arx…

2024-05-01 06:48:48

Can Large Language Models put 2 and 2 together? Probing for Entailed Arithmetical Relationships

D. Panas, S. Seth, V. Belle

https://arxiv.org/abs/2404.19432 https://arxiv.org/pdf/2404.19432

arXiv:2404.19432v1 Announce Type: new

Abstract: Two major areas of interest in the era of Large Language Models regard questions of what do LLMs know, and if and how they may be able to reason (or rather, approximately reason). Since to date these lines of work progressed largely in parallel (with notable exceptions), we are interested in investigating the intersection: probing for reasoning about the implicitly-held knowledge. Suspecting the performance to be lacking in this area, we use a very simple set-up of comparisons between cardinalities associated with elements of various subjects (e.g. the number of legs a bird has versus the number of wheels on a tricycle). We empirically demonstrate that although LLMs make steady progress in knowledge acquisition and (pseudo)reasoning with each new GPT release, their capabilities are limited to statistical inference only. It is difficult to argue that pure statistical learning can cope with the combinatorial explosion inherent in many commonsense reasoning tasks, especially once arithmetical notions are involved. Further, we argue that bigger is not always better and chasing purely statistical improvements is flawed at the core, since it only exacerbates the dangerous conflation of the production of correct answers with genuine reasoning ability.

2024-05-02 07:18:06

Explainable Automatic Grading with Neural Additive Models

Aubrey Condor, Zachary Pardos

https://arxiv.org/abs/2405.00489 https://arxi…

2024-03-26 16:02:05

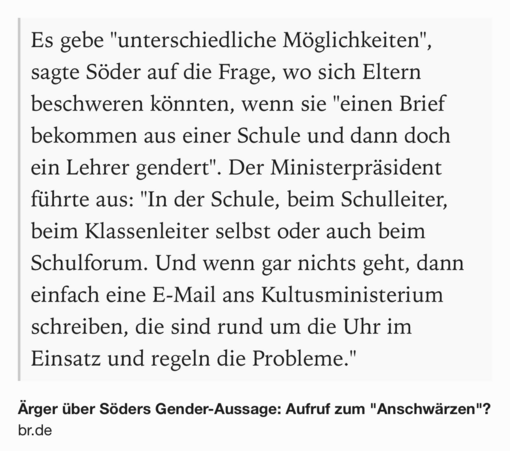

Söder calls for a witch hunt against schools and teachers

https://www.br.de/nachrichten/bayern/aerger-ueber-soeders-gender-aussage-aufruf-zum-anschwaerzen,U87J1Bc

2024-05-03 06:56:33

Finite symmetric groups are strongly verbally closed

Olga K. Karimova, Anton A. Klyachko

https://arxiv.org/abs/2405.01179 https://arx…
