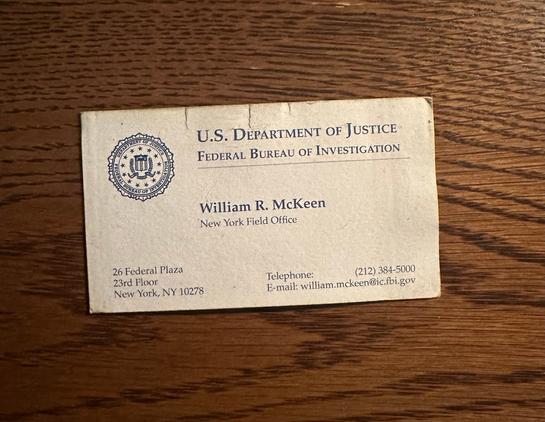

2026-02-26 07:46:00

A reporter writes about a visit from the FBI in 2020, following his story about a hack, and the long-term personal impact, along with eroding press freedoms (Zack Whittaker/~this week in security~)

https://this.weekinsecurity.com/fbi-ag

2026-01-27 03:20:40

The Trump administration appeared to acknowledge on Monday that its investigation into

the killing of a Veterans Affairs nurse, Alex Pretti, by federal agents this weekend

was limited to a “use of force” review meant to establish whether government employees had violated training standards.

Such a move, disclosed in court filings, would represent a much narrower inquiry

-- focused on tactics and conduct

-- than one that would examine whether federal agents shoul…

2026-04-26 20:32:19

Ich hab keine Ahnung, wie der Phishing-Angriff auf Signal-Accounts im Detail aussah. Und die einfache Reaktion ist: „die sind alle zu dumm.“

Letztlich heißt das: die Menschen, die da Signal (oder andere Messenger) nutzen, haben kein gutes mentales Modell, das ihnen intuitiv erlaubt zu merken, wenn da irgendwas seltsam ist. Wir sind einfach nicht gut darin, Systeme zu bauen, die diesbezüglich von ihren Usern so gut verstanden werden- und Banken, die Newsletter von Domains Dritter versc…

2026-04-21 11:42:00

2026-03-25 23:02:08

In Agent Running in the Field, Le Carré gave himself a variety of characters through which he could vent his anger at Brexit, Trump, oligarchs, the state of the world & its intelligence services: Ed, Florence, Prue.

The main character, as ever, remains more circumspect but has clear sympathy for the other characters' views #Bookstodon #LeCarré #AmReading

2026-03-22 18:22:50

2026-02-09 06:05:13

»Hunderte verseuchte Skills — Angreifer schleusen Trojaner in KI-Agent OpenClaw ein:

Hunderte Skills für den KI-Agenten OpenClaw enthielten Trojaner und Datendiebe. OpenClaw und VirusTotal reagieren mit einer Partnerschaft. Doch das grundlegende Sicherheitsproblem von KI-Agenten bleibt.«

Ich behaupte mal, die KI an sich ist ein Sicherheitsproblem in der IT, denn das ist der Virus.

🦠

2026-03-26 19:19:43

The sales analyst leans back and types a question into her company’s shiny new AI data agent: “Which leads from last quarter should we follow up on?”

Simple enough. The kind of question that used to eat up half a junior analyst’s afternoon. The agent spins for a few seconds. Then it either returns a confidently wrong answer, crashes without a word, or does the thing I find most telling of all: it gives up before it even tries.

https://levelup.gitconnected.com/why-sql-agents-fail-62-of-the-time-on-real-enterprise-queries-5da9eeb48ede

2026-02-22 16:01:21

Turkey launches a review of how social media platforms handle children's data as it prepares new rules that include identity verification and age restrictions (Turkish Minute)

https://www.turkishminute.com/2026/02/21/turkey…

2026-02-17 16:51:00