2026-05-08 13:53:55

I’m not sure what sets us up to have better LMSes, but I don’t think institutions building them in-house is a viable answer.

One long-successful direction I like that has been gaining ground of late is course-specific web sites. I’m cheering the rise of static site generators, and hoping they continue to creep outside the confines of the techiest among us. A course site is not an LMS, but moving course •content• mostly out of the LMS simplifies the problem considerably!

/end

2026-06-07 21:14:29

The California city of Whittier swung to Democrats this spring, spurred by ICE raids and by anger toward election rules that long dampened turnout among Latinos and kept them out of city government.

https://boltsmag.org/california-immigrant-organiz…

2026-05-08 01:51:27

Wow. Admittedly I still teach this, but I didn't think remembering how to do long division would be all that difficult. This has got me curious: do you remember how to do long division? (No answers from current students/teachers, please.) #poll Boosts appreciated. Try 100÷4 if you want to try it out and see if it comes back to you.

2026-04-08 01:40:00

Was just made aware of this pretty good but entirely predictable study:

#LLMs

2026-05-05 05:15:56

A few more of the #News items shared most often here recently:

Linux vulnerability "Copy Fail" is already under attack

https://www.

2026-06-07 10:00:05

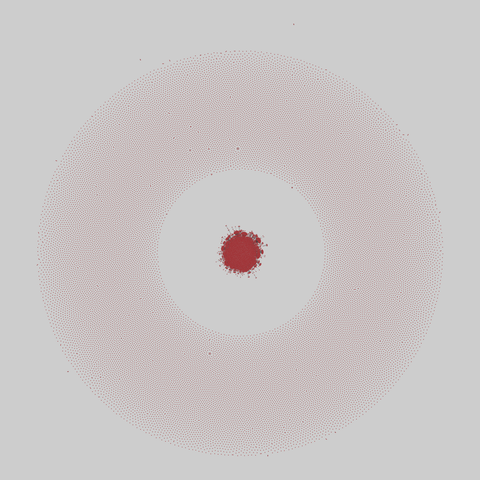

inploid: Inploid: an online social Q&A platform

Inploid is a Turkish-language social question-and-answer website. Users can follow others and see their questions and answers on the main page. Each user has a reputability score, which is influenced by others' feedback on that user's questions and answers. Each user can also specify topics of interest. The data was crawled in June 2017 and consists of 39,749 nodes and 57,276 directed links between them. In addition, for …

2026-03-08 23:06:30

Stanford University ↔️ ends ivy frustration #KarmaManager #anagram

2026-06-07 21:00:05

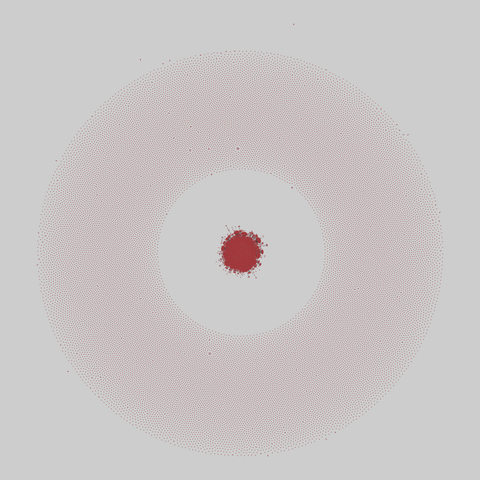

inploid: Inploid: an online social Q&A platform

Inploid is a social question & answer website in Turkish. Users can follow others and see their questions and answers on the main page. Each user is associated with a reputability score which is influenced by feedback of others about questions and answers of the user. Each user can also specify interest in topics. The data is crawled in June 2017 and consist of 39,749 nodes and 57,276 directed links between them. In addition, for …

2026-03-08 19:55:35

A pet dragon ↔️ Entrap a God! #KarmaManager #anagram

2026-03-08 22:23:47

Richard M. Nixon ↔️ rancid horn mix #KarmaManager #anagram