2026-03-26 14:30:55

Cents, which makes operating and payments software for laundromats, raised a $110M Series C, following a $40M Series B in 2024 (Lucinda Shen/Axios)

https://www.axios.com/pro/all-deals/2026/03/26/laundromat-software-cents-tender-offer

2026-04-25 22:00:04

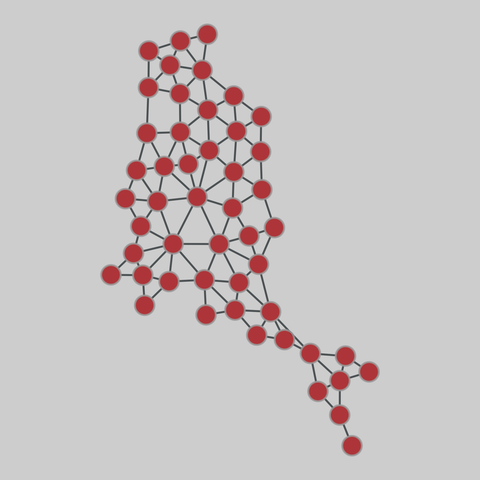

contiguous_usa: Contiguous states (USA)

A network of contiguous states in the USA, in which each state is a node and two nodes are connected if they share a land-based geographic border. The dataset includes the lower 48 states and the District of Columbia.

This network has 49 nodes and 107 edges.

Tags: Transportation, Roads, Unweighted
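The node-and-edge construction described above can be sketched in a few lines of Python. This is only an illustration: the `BORDERS` list is a small hand-picked subset of state borders, not the dataset's actual edge list, and the helper names `build_graph` and `count_edges` are hypothetical.

```python
from collections import defaultdict

# Hypothetical subset of land borders for illustration only;
# this is NOT the edge list shipped with the contiguous_usa dataset.
BORDERS = [
    ("WA", "OR"),
    ("WA", "ID"),
    ("OR", "ID"),
    ("OR", "CA"),
    ("OR", "NV"),
    ("CA", "NV"),
    ("ID", "NV"),
]

def build_graph(pairs):
    """Return an undirected adjacency map: node -> set of neighbouring nodes."""
    adj = defaultdict(set)
    for a, b in pairs:
        adj[a].add(b)
        adj[b].add(a)
    return dict(adj)

def count_edges(adj):
    """Count undirected edges; each edge appears twice in the adjacency map."""
    return sum(len(neighbours) for neighbours in adj.values()) // 2

graph = build_graph(BORDERS)
print(len(graph), "nodes,", count_edges(graph), "edges")
```

Run against the full edge list, the same two counts should reproduce the node and edge totals quoted in the post.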

2026-04-26 02:11:04

Mike Vrabel not in contact with Patriots, team says, during Day 3 of 2026 NFL Draft https://www.nytimes.com/athletic/7230620/2026/04/25/mike-vrabel-patriots-nfl-draft-day-3-contact/

2026-03-26 04:15:58

More US Counties See Population Drops Under Trump's Immigration Crackdown (Bloomberg)

https://www.bloomberg.com/news/articles/2026-03-26/us-census-shows-more-counties-see-population-drops-in-immigration-crackdown

http://www.memeorandum.com/260326/p1#a260326p1

2026-02-25 10:45:11

Untied Ulysses: Memory-Efficient Context Parallelism via Headwise Chunking

Ravi Ghadia, Maksim Abraham, Sergei Vorobyov, Max Ryabinin

https://arxiv.org/abs/2602.21196 https://arxiv.org/pdf/2602.21196 https://arxiv.org/html/2602.21196

arXiv:2602.21196v1 Announce Type: new

Abstract: Efficiently processing long sequences with Transformer models usually requires splitting the computations across accelerators via context parallelism. The dominant approaches in this family of methods, such as Ring Attention or DeepSpeed Ulysses, enable scaling over the context dimension but do not focus on memory efficiency, which limits the sequence lengths they can support. More advanced techniques, such as Fully Pipelined Distributed Transformer or activation offloading, can further extend the possible context length at the cost of training throughput. In this paper, we present UPipe, a simple yet effective context parallelism technique that performs fine-grained chunking at the attention head level. This technique significantly reduces the activation memory usage of self-attention, breaking the activation memory barrier and unlocking much longer context lengths. Our approach reduces intermediate tensor memory usage in the attention layer by as much as 87.5% for 32B Transformers, while matching previous context parallelism techniques in terms of training speed. UPipe can support the context length of 5M tokens when training Llama3-8B on a single 8×H100 node, improving upon prior methods by over 25%.


2026-04-23 19:03:17

The Most AI-Exposed Counties in America Are Not Where You Think

https://jakeprokopets.substack.com/p/why-the-most-ai-exposed-counties

Here is a county-level AI displacement model across all 3,204 US counties.

The Top 5 most exposed counties are a…

2026-03-25 21:26:06

Singapore-based Startale, developer of the Strium blockchain for tokenized securities and JPYSC and USDSC stablecoins, raised a $63M Series A from SBI and Sony (Francisco Rodrigues/CoinDesk)

https://www.coindesk.com/business/2026/03/…

2026-02-25 00:00:05

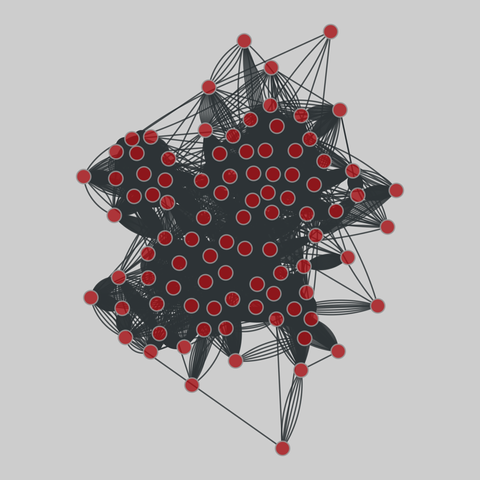

sp_office: Workplace contacts (2013)

A temporal network of contacts between individuals, measured in an office building in France, from June 24 to July 3, 2013.

This network has 92 nodes and 9827 edges.

Tags: Social, Offline, Weighted, Temporal, Metadata

https://networks.skewed.de/net/sp_offi

2026-02-26 19:00:04

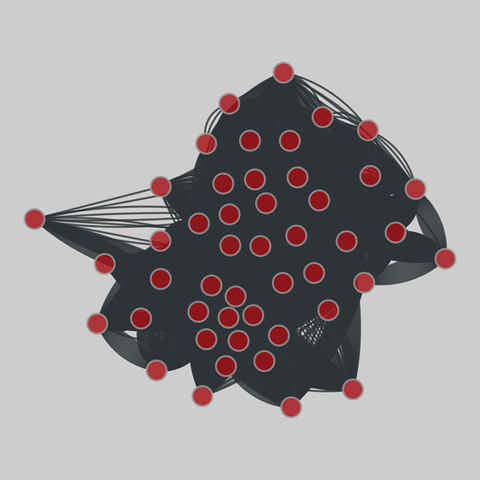

sp_kenyan_households: Kenyan households contacts (2012)

A network of proximity contacts measured between members of 5 households of rural Kenya, between April 24 and May 12, 2012.

This network has 47 nodes and 32643 edges.

Tags: Social, Offline, Unweighted, Temporal, Metadata

https://networks.skewed.…

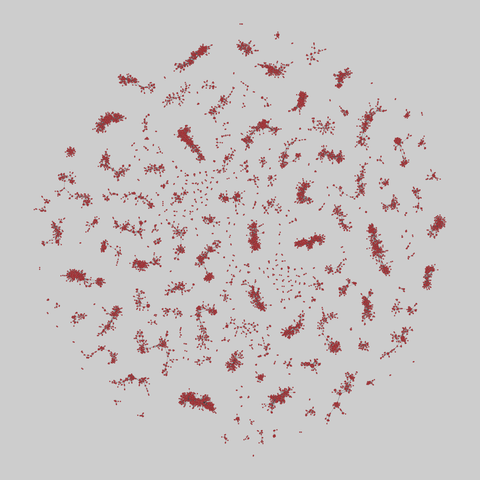

2026-04-26 00:00:04

sp_infectious: Art exhibit dynamic contacts (2011)

This dataset contains the daily dynamic contact networks collected during the Infectious SocioPatterns event that took place at the Science Gallery in Dublin, Ireland, during the artscience exhibition INFECTIOUS: STAY AWAY. Each file in the downloadable package contains a tab-separated list representing the active contacts during 20-second intervals of one day of data collection. Each line has the form “t i j”, where i and j are the a…
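A minimal sketch of reading the tab-separated "t i j" lines mentioned above, under stated assumptions: the description is truncated, so this treats t as an integer time value and i and j as integer identifiers, which is a guess. The `SAMPLE` data is made up for illustration, and `parse_contacts` and `group_by_time` are hypothetical helper names.

```python
from collections import defaultdict

def parse_contacts(text):
    """Parse tab-separated 't i j' lines into (t, i, j) tuples.

    Assumption: all three fields are integers.
    """
    contacts = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        t, i, j = line.split("\t")
        contacts.append((int(t), int(i), int(j)))
    return contacts

def group_by_time(contacts):
    """Group contact pairs by their t value: t -> list of (i, j)."""
    groups = defaultdict(list)
    for t, i, j in contacts:
        groups[t].append((i, j))
    return dict(groups)

# Made-up sample data, not taken from the dataset.
SAMPLE = "20\t1\t2\n20\t2\t3\n40\t1\t2\n"

contacts = parse_contacts(SAMPLE)
snapshots = group_by_time(contacts)
print(snapshots)
```

Grouping by t gives one list of active pairs per interval, which is a convenient starting point for building per-interval snapshots of the contact network.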