2026-03-13 19:31:22

Claude Opus 4.6 and Sonnet 4.6 now offer a 1M context window at standard pricing; it is the default for Claude Code Max, Team, and Enterprise users on Opus 4.6 (Anthropic)

https://claude.com/blog/1m-context-ga

2026-02-14 22:00:04

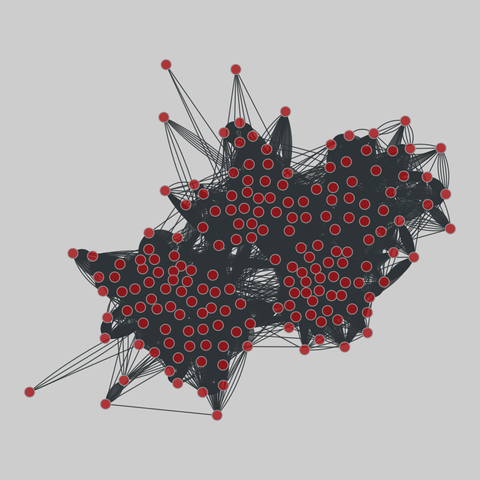

sp_high_school_new: High school dynamic contacts (2011-2012)

These datasets contain the temporal network of contacts between students in a high school in Marseilles, France. The first dataset gives the contacts of the students of three classes during 4 days in Dec. 2011, and the second corresponds to the contacts of the students of 5 classes during 7 days (from a Monday to the Tuesday of the following week) in Nov. 2012.

This network has 180 nodes and 45047 edges.

Tags: Soc…

2026-03-14 16:38:57

I created a "starter pack" of Portuguese accounts.

https://fedidevs.com/s/OTQ1/

Good idea? Bad idea? Should I expand it? Should I delete it?

2026-04-14 14:22:42

So to follow up on this, I've caught it in action. Models, when quantized a bit, just do a bit more poorly with short contexts. Even going from f32 (as trained) to bf16 (as usually run) to q8 tends to do okay for "normal" context windows. And at q4 you start feeling like "this model is a little stupid and gets stuck sometimes" (it is! It's just that it's still mostly careening about in the space of "plausible" most of the time. Not good guesswork, but still in the zone). With long contexts, the probability of parameters collapsing to zero is higher, so the more context, the more likely you are to see brokenness.

And then at Q2 (2 bits per parameter) or Q1, the model falls apart completely. Parameters collapse to zero easily. You start seeing "all work and no play makes jack a dull boy" sorts of behavior, with intense and unscrutinized repetition, followed by a hard stop when it just stops working.
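A toy sketch of that "collapse to zero" effect, not how any real quantizer works: it assumes plain symmetric round-to-nearest quantization with a single scale for the whole tensor, and Gaussian stand-in weights. The names `quantize` and `zero_fraction` are made up for illustration. It only shows that fewer bits push more values onto the zero level; it says nothing about context length.

```python
import random

def quantize(weights, bits):
    """Toy symmetric quantizer: snap each weight to a signed integer level, then map back."""
    levels = 2 ** (bits - 1) - 1          # 127 levels per side at 8 bits, 1 at 2 bits
    scale = max(abs(w) for w in weights) / levels
    return [round(w / scale) * scale for w in weights]

def zero_fraction(values):
    """Share of values that ended up exactly zero."""
    return sum(1 for v in values if v == 0.0) / len(values)

random.seed(0)
# Assumption: stand-in weights drawn from a unit Gaussian, not a real model.
weights = [random.gauss(0.0, 1.0) for _ in range(10_000)]

for bits in (8, 4, 2):
    print(f"{bits}-bit: {zero_fraction(quantize(weights, bits)):.1%} of weights are zero")
```

Real schemes use per-block scales and smarter rounding precisely to soften this, so treat the numbers as a direction, not a measurement.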

And quantization is a knob that a model vendor can turn relatively easily. (They have to regenerate the model from the base with more quantization, but it's a data transformation on the order of running a terabyte through a straightforward and fast process, not like training.)

If you have 1000 customers and enough equipment to handle the requests of 700, going from bf16 to q8 is a no-brainer. Suddenly you can handle the load and have a little spare capacity. The customers get worse results and probably pay the same per token (or they're on a subscription that hides the cost anyway, so you are even freer to make trade-offs. There's a reason that subscription products are kinda poorly described.)
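Back-of-the-envelope version of that arithmetic, under one loud assumption: that serving capacity is limited purely by the memory the weights take up, so it scales inversely with bits per parameter. Real capacity also depends on KV cache, compute, and batching, so this is an upper-bound sketch. The function name is hypothetical.

```python
def capacity_after_requantizing(current_capacity, current_bits, new_bits):
    """Assumes capacity scales inversely with bits per parameter (memory-bound serving)."""
    return current_capacity * current_bits / new_bits

# bf16 is 16 bits per parameter, q8 is 8.
print(capacity_after_requantizing(700, 16, 8))  # 1400.0
```

Under that assumption, equipment sized for 700 customers at bf16 covers 1000 at q8 with room to spare.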

It's also possible for them to vary this across a day: use the model during quieter periods? Maybe you get an instance running at bf16. Use it during a high-use period? You get a Q4 model.

Or intelligent routing is possible. No idea if anyone is doing this, but if they monitor what you send a bit, and you generally shoot for an expensive model for simple requests? They could totally substitute a highly quantized version of the model to answer the question.
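Purely hypothetical sketch of what load-based and request-based routing could look like. Nothing here reflects any vendor's actual system; the function name, the thresholds, and the variant labels are all invented for illustration.

```python
def pick_variant(load, prompt_tokens, busy_load=0.8, simple_prompt_tokens=200):
    """Hypothetical router: choose a quantization level from cluster load and request size.

    load is the fraction of capacity in use (0.0 to 1.0); both thresholds are made up.
    """
    if load >= busy_load:
        return "q4"      # peak hours: everyone gets the cheapest variant
    if prompt_tokens <= simple_prompt_tokens:
        return "q8"      # short, simple-looking request: quietly downgrade
    return "bf16"        # quiet period and a demanding request: full precision

print(pick_variant(load=0.9, prompt_tokens=5000))
print(pick_variant(load=0.3, prompt_tokens=50))
print(pick_variant(load=0.3, prompt_tokens=5000))
```

From the outside, all three calls would look like the same model name answering.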

There are *so many tricks* that can be pulled here. Some of them are very reasonable, some of them tread into outright misleading or fraudulent territory, and it's weirdly hard to draw the line between them.

2026-02-15 04:25:04

I keep meaning to try the fancy expensive vegan cookies at Christine's. Anyone been over there?

https://cometochristines.com/

2026-04-14 15:55:51

A malicious Ledger Live app clone available via Apple's App Store appears to have drained about $9.5M from over 50 victims between April 7 and April 13 (Oliver Knight/CoinDesk)

https://www.coindesk.com/business/2026/04/

2026-03-14 22:00:04

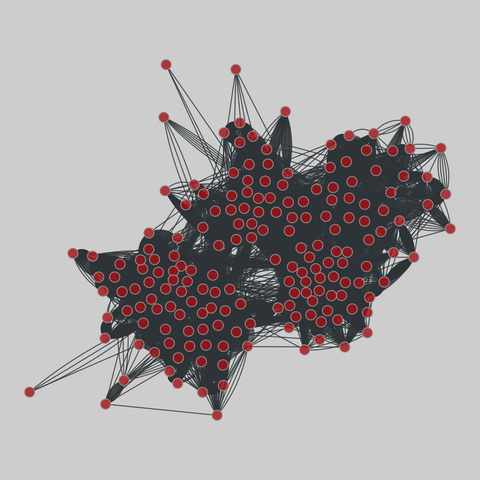

sp_high_school_new: High school dynamic contacts (2011-2012)

These datasets contain the temporal network of contacts between students in a high school in Marseilles, France. The first dataset gives the contacts of the students of three classes during 4 days in Dec. 2011, and the second corresponds to the contacts of the students of 5 classes during 7 days (from a Monday to the Tuesday of the following week) in Nov. 2012.

This network has 180 nodes and 45047 edges.

Tags: Soc…

2026-04-14 21:56:07

Meta and Broadcom announce an expanded partnership to co-develop multiple generations of Meta's MTIA chips; Broadcom CEO Hock Tan plans to leave Meta's board (CNBC)

https://www.cnbc.com/2026/04/14/meta-commi

2026-04-14 16:00:05

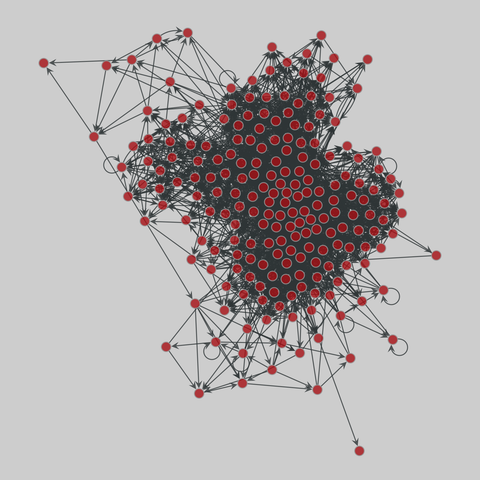

cintestinalis: Tadpole larva brain (C. intestinalis)

Entire connectivity matrix for the complete brain of a larva of Ciona intestinalis. Each directed edge represents a synaptic connection from pre-synaptic cell i to post-synaptic cell j (may not be a neuron). Edge weights represent the cumulative depth of presynaptic contacts in µm.

This network has 205 nodes and 2903 edges.

Tags: Biological, Connectome, Weighted

2026-02-15 10:00:03

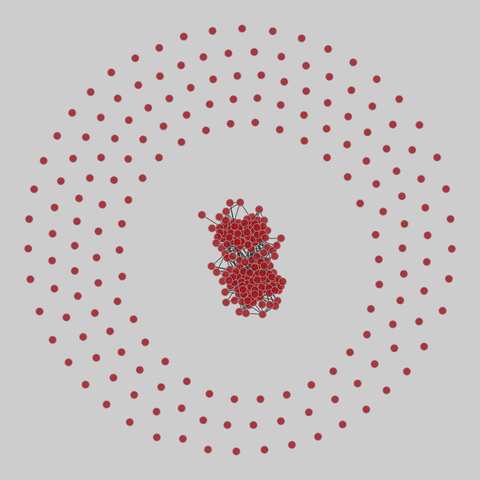

sp_high_school: High school temporal contacts (2013)

These data sets correspond to the contacts and friendship relations between students in a high school in Marseilles, France, in December 2013, as measured through several techniques.

This network has 329 nodes and 1437 edges.

Tags: Social, Offline, Unweighted, Weighted, Temporal, Metadata