2026-04-14 14:22:42

So to follow up on this, I've caught it in action. Models, when quantized a bit, just do a bit more poorly with short contexts. Even going from f32 (as trained) to bf16 (as usually run) to q8 tends to do okay for "normal" context windows. And at q4 you start feeling like "this model is a little stupid and gets stuck sometimes" (it is! It's just that it's still mostly careening about in the space of "plausible" most of the time. Not good guesswork, but still in the zone). With long contexts, the probability of parameters collapsing to zero is higher, so the more context you have, the more likely you are to see brokenness.

And then at Q2 (2 bits per parameter) or Q1, the model falls apart completely. Parameters collapse to zero easily. You start seeing "all work and no play makes jack a dull boy" sorts of behavior, with intense and unscrutinized repetition, followed by a hard stop when it just stops working.
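
A rough way to see the "collapse to zero" effect is to round some random weights onto coarser and coarser grids and count how many land exactly on zero. This is only a toy sketch: it assumes plain symmetric round-to-nearest quantization with a single scale for the whole tensor, and Gaussian weights. Real quantization schemes are generally more careful than this (per-block scales, for example), so treat the output as illustrative. The names `quantize` and `zero_fraction` are made up for this example.

```python
import random

def quantize(weights, bits):
    """Toy symmetric round-to-nearest quantization onto a signed `bits`-bit grid."""
    levels = 2 ** (bits - 1) - 1                       # 127 for 8 bits, 7 for 4, 1 for 2
    scale = max(abs(w) for w in weights) / levels      # one scale for the whole tensor (assumption)
    return [round(w / scale) * scale for w in weights]

def zero_fraction(weights, bits):
    """Fraction of weights that become exactly zero after quantization."""
    q = quantize(weights, bits)
    return sum(1 for v in q if v == 0.0) / len(q)

rng = random.Random(0)
weights = [rng.gauss(0.0, 1.0) for _ in range(10_000)]  # stand-in for real model weights

for bits in (8, 4, 2):
    print(f"q{bits}: {zero_fraction(weights, bits):.1%} of weights rounded to zero")
```

The fewer bits there are, the wider the "rounds to zero" band around the origin gets, so more small weights get wiped out.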

And quantization is a knob that a model vendor can turn relatively easily. (They have to regenerate the model from the base with more quantization, but it's a data transformation on the order of running a terabyte through a straightforward and fast process, not like training.)

If you have 1000 customers and enough equipment to handle the requests of 700, going from bf16 to q8 is a no-brainer. Suddenly you can handle the load and have a little spare capacity. They get worse results, probably pay the same per token (or they're on a subscription that hides the cost anyway so you are even freer to make trade-offs. There's a reason that subscription products are kinda poorly described.)
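
The back-of-the-envelope version of that, as a sketch. The big assumption (mine, and an optimistic one) is that serving capacity scales inversely with bits per parameter; in practice the gain is likely smaller. `capacity_after` is a hypothetical helper, not anything a vendor publishes.

```python
def capacity_after(current_capacity, old_bits, new_bits):
    """Estimated capacity after requantizing.

    Assumption: capacity scales inversely with bits per parameter,
    which is an idealized upper bound rather than a measured figure.
    """
    return current_capacity * old_bits / new_bits

customers = 1000   # from the example above
capacity = 700     # requests the current equipment can handle at bf16

new_capacity = capacity_after(capacity, old_bits=16, new_bits=8)
print(f"capacity at q8: {new_capacity:.0f}, spare: {new_capacity - customers:.0f}")
```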

It's also possible for them to vary this across a day: use a model during quieter periods? Maybe you get an instance running at bf16. Use it during a high-use period? You get a Q4 model.

Or intelligent routing is possible. No idea if anyone is doing this, but if they monitor what you send a bit, and you generally shoot for an expensive model for simple requests? They could totally substitute a highly quantized version of the model to answer the question.
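
To make the idea concrete, here is a purely hypothetical sketch of what such a router could look like. Nothing here is based on any vendor's actual system: the function name, the `load` and `looks_simple` inputs, and the thresholds are all invented for illustration.

```python
def pick_variant(load: float, looks_simple: bool) -> str:
    """Pick which quantization of a model serves a request (hypothetical).

    load:         fraction of serving capacity currently in use, 0.0 to 1.0
    looks_simple: whether some upstream check judged the request to be easy
    """
    if load > 0.9:                    # made-up threshold: peak hours, cheapest variant
        return "q4"
    if looks_simple or load > 0.6:    # made-up threshold: busy, or an easy request
        return "q8"
    return "bf16"                     # quiet period and a hard request

print(pick_variant(0.2, looks_simple=False))
print(pick_variant(0.2, looks_simple=True))
print(pick_variant(0.95, looks_simple=False))
```

From the outside, all three responses would come back under the same model name.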

There are •so many tricks• that can be pulled here. Some of them very reasonable to make, some of them treading into outright misleading or fraudulent, and it's weirdly hard to draw the line between them.

2026-03-10 14:04:11

My father requested medical assistance in dying. It's happening today.

The nurse is here to insert a catheter.

It's a strange feeling...

2026-02-25 20:07:07

As the "last falangista*" dies, fascism is again on the rise.

Just an example from Manchester.

In the nearby parliamentary constituency, a candidate with connections to race pseudoscience organisations, with Nazi pedigree, could win a crucial by-election.

*Falange = Franco's fascist party.

Coup plotter Antonio Tejero, the enforcer of 23F, dies at 93

2026-03-27 15:26:18

Trying to geocode some of my old holiday pictures with #immich

Pages and pages of log messages like this:

"LOG [Microservices:MapRepository] Empty response from database for city reverse geocoding lat: 64.978553, lon: -21.063319. Likely cause: no nearby large populated place (500 within 25km). Falling back to country boundaries."

Clearly, the queue has reached my picture…

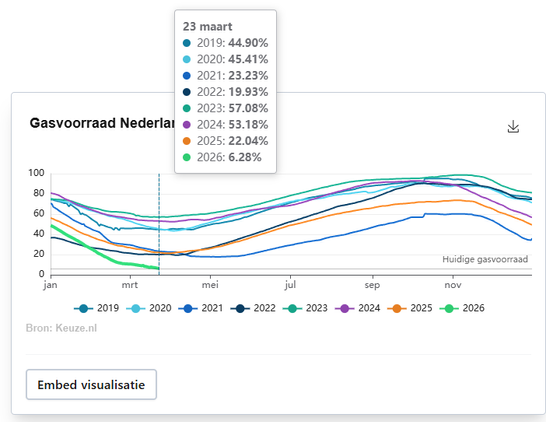

2026-03-23 20:02:54

2026-01-27 13:26:47

Filing: Pinterest plans to lay off less than 15% of its workforce by Q3 and cut back on office space, saying it is "reallocating resources" to AI teams (Annie Palmer/CNBC)

https://www.cnbc.com/2026/01/27/pinterest-layoffs-stock-ai.html

2026-01-21 01:57:37

Twenty years ago, I thought 480p was a great resolution for videos and that only very specific films (with exceptional cinematography) justified using 720p, which took up a lot of space on the HD/CD. But just now I was watching a Loreena McKennitt music video in 480p on my 42-inch TV and could barely make out the images.

Part of this is because our brains have become very used to high-resolution images. But mainly, …

2026-02-04 07:32:45

Don't forget:

Today is National Sheep-Tormenting Day

#Sirenentest #Schweiz

2026-01-18 11:01:04

India's largest retailer Reliance Retail says its daily quick commerce orders peaked at 1.6M in Q4 2025; market leader Blinkit averaged 2.4M daily orders in Q3 (Manish Singh/India Dispatch)

https://indiadispatch.com/p/the-mystery-of-reliance-quick-commerce-growt…

![immich_server | [Nest] 7 - 03/27/2026, 3:20:35 PM LOG [Microservices:MapRepository] Empty response from database for city reverse geocoding lat: 64.978553, lon: -21.063319. Likely cause: no nearby large populated place (500+ within 25km). Falling back to country boundaries.

repeated several times](https://assets.chaos.social/media_attachments/files/116/301/822/099/590/279/small/545e1967e3137124.png)