2026-03-21 00:20:57

Filing: Anthropic says it cannot manipulate Claude once the military has deployed it, denying DOD accusations that Anthropic could tamper with models during war (Paresh Dave/Wired)

https://www.wired.com/story/anthropic-denies-sabotage-ai-tools-war-claude/

2026-04-18 00:51:56

2026-04-19 09:15:45

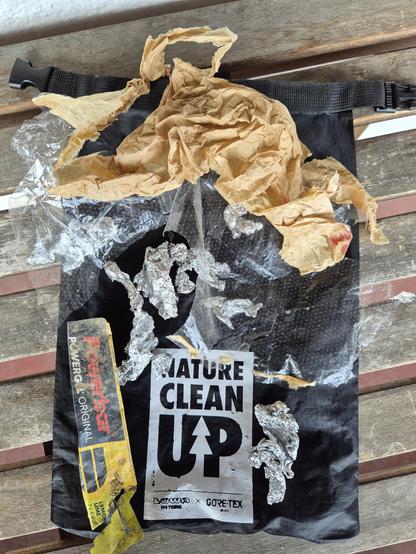

Oh wow, I feel quite sore today. Maybe I didn't do "enough" during winter to keep my muscles in shape.

But it was worth it! (Pictures and video are yet to come.) Now that the snow has mostly melted, it's time again to clean up all kinds of litter.

At one point I heard a metallic *pling* and knew: "new microspikes needed now." But since it was the metal plate that broke and not just a chain, I hope the shop might replace it. I mean... broken metal after just ~1 year? Keep fi…

2026-04-20 20:30:53

Microsoft pauses new GitHub Copilot signups for Pro, Pro+, and Student tiers, tightens usage limits, removes Opus models from Pro, and limits Opus 4.7 to Pro+ (The GitHub Blog)

https://github.blog/changelog/2026-04-20-changes-to-github-copilot-plans-…

2026-03-12 22:45:25

Nvidia will spend $26 billion to build open-weight AI models, filings show https://www.wired.com/story/nvidia-investing-26-billion-open-source-models/ "The move could position the AI infrastructure powerhouse to quickly compete with OpenAI, Anthropic, an…

2026-04-14 14:22:42

So, to follow up on this: I've caught it in action. Models that are quantized a little do only slightly worse with short contexts. Even going from f32 (as trained) to bf16 (as usually run) to Q8 tends to be okay for "normal" context windows. At Q4 you start feeling like "this model is a little stupid and gets stuck sometimes" (it is! It's just that it's still careening about in the space of "plausible" most of the time. Not good guesswork, but still in the zone). With long contexts, the small errors introduced by rounding (small parameters collapsing to zero among them) get more chances to compound, so the more context, the more likely you are to see brokenness.

And then at Q2 (2 bits per parameter) or Q1, the model falls apart completely. Parameters collapse to zero easily. You start seeing "all work and no play makes Jack a dull boy" sorts of behavior: intense, unscrutinized repetition, followed by a hard stop when it just stops working.
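A minimal sketch of why low bit widths hurt, assuming the crudest possible scheme (one symmetric absmax scale for the whole tensor) and made-up Gaussian weights standing in for a real layer. Real quantizers use per-block scales and smarter rounding, so treat the numbers as illustrative only. The code also says nothing about context length; it only counts how many weights get rounded to exactly zero at each bit width.

```python
import random

def quantize_dequantize(weights: list[float], bits: int) -> list[float]:
    """Symmetric absmax quantization: round each weight to one of the signed
    integer levels -qmax..qmax, then scale it back to a float."""
    qmax = 2 ** (bits - 1) - 1  # 127 at 8 bits, 7 at 4 bits, 1 at 2 bits
    scale = max(abs(w) for w in weights) / qmax
    return [round(w / scale) * scale for w in weights]

def zero_fraction_and_error(weights: list[float], bits: int) -> tuple[float, float]:
    """Fraction of weights that became exactly 0, and mean absolute rounding error."""
    deq = quantize_dequantize(weights, bits)
    zero_frac = sum(1 for d in deq if d == 0.0) / len(weights)
    mean_abs_err = sum(abs(w - d) for w, d in zip(weights, deq)) / len(weights)
    return zero_frac, mean_abs_err

random.seed(0)
# Hypothetical stand-in for one weight tensor: small values clustered around zero.
weights = [random.gauss(0.0, 0.02) for _ in range(10_000)]

for bits in (8, 4, 2):
    zero_frac, err = zero_fraction_and_error(weights, bits)
    print(f"{bits}-bit: {zero_frac:.1%} of weights rounded to 0, mean |error| {err:.5f}")
```

With only the levels -1, 0 and 1 available at 2 bits, most of these synthetic weights land on 0, which is the "parameters collapse to zero easily" effect in its simplest form.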

Quantization is also a knob that a model vendor can turn relatively easily. (They have to regenerate the model from the full-precision weights at the new quantization level, but that's a data transformation on the order of running a terabyte through a straightforward, fast process, nothing like training.)
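To make the "straightforward and fast process" point concrete, here's a sketch of re-quantizing weights in one streaming pass, assuming a simple block-wise absmax scheme where every hypothetical `BLOCK` of 32 weights gets its own scale. It is not any real file format, but it shows the shape of the work: read each weight once, divide, round, write. No gradients, no optimization loop.

```python
from collections.abc import Iterable, Iterator

BLOCK = 32  # hypothetical block size: every 32 weights share one scale

def _encode(block: list[float], qmax: int) -> tuple[float, list[int]]:
    """Pick a scale so the block's largest |weight| maps to qmax, then round."""
    absmax = max(abs(w) for w in block)
    scale = absmax / qmax if absmax > 0 else 1.0  # all-zero block: any scale works
    return scale, [round(w / scale) for w in block]

def quantize_stream(weights: Iterable[float], bits: int = 8) -> Iterator[tuple[float, list[int]]]:
    """One linear pass over full-precision weights, yielding (scale, codes) per block."""
    qmax = 2 ** (bits - 1) - 1
    block: list[float] = []
    for w in weights:
        block.append(w)
        if len(block) == BLOCK:
            yield _encode(block, qmax)
            block = []
    if block:  # trailing partial block
        yield _encode(block, qmax)

def dequantize(blocks: Iterable[tuple[float, list[int]]]) -> list[float]:
    """Turn (scale, codes) blocks back into approximate floats."""
    return [scale * q for scale, codes in blocks for q in codes]

# Usage with made-up weights in [-0.1, 0.1):
weights = [0.001 * ((i * 37) % 200 - 100) for i in range(100)]
blocks = list(quantize_stream(weights, bits=8))
restored = dequantize(blocks)
print(len(blocks), max(abs(a - b) for a, b in zip(weights, restored)))
```

Because it's a generator, the same loop works on weights streamed from disk chunk by chunk, which is why the cost scales with the size of the model file rather than with anything like training compute.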

If you have 1000 customers and enough equipment to handle the requests of 700, going from bf16 to Q8 is a no-brainer. Suddenly you can handle the load and have a little spare capacity. Your customers get worse results and probably pay the same per token (or they're on a subscription that hides the cost anyway, so you're even freer to make trade-offs; there's a reason subscription products are kinda poorly described).
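The arithmetic behind "no-brainer", as a sketch. The big assumption, stated here and in the code, is that token generation is memory-bandwidth bound, so halving the bits per weight could at best double throughput. Real-world gains are smaller and depend on hardware and kernels.

```python
def required_speedup(customers: int, capacity: int) -> float:
    """How much more throughput the fleet needs to serve everyone."""
    return customers / capacity

def ideal_speedup(bits_before: int, bits_after: int) -> float:
    """ASSUMPTION: throughput scales inversely with bits read per weight
    (memory-bandwidth-bound decoding). This is a best case, not a measurement."""
    return bits_before / bits_after

need = required_speedup(1000, 700)  # about 1.43x
best = ideal_speedup(16, 8)         # bf16 -> Q8: at most 2x under the assumption
print(f"need {need:.2f}x, best case {best:.2f}x, fits: {best >= need}")
```

Even if the real gain falls well short of 2x, anything above roughly 1.43x covers the shortfall, which is why the trade is so tempting.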

It's also possible for them to vary this over the course of a day. Using a model during a quiet period? Maybe you get an instance running bf16. Using it during a high-use period? You get a Q4 model.
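A hypothetical load-based policy could be as simple as: serve the highest-precision variant whose capacity covers the current load. The variant names and capacity numbers below are made up for illustration; nothing here describes what any vendor actually does.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Variant:
    name: str        # e.g. "bf16", "Q8", "Q4"
    capacity: float  # hypothetical requests/sec the fleet can serve with this variant

# Hypothetical numbers, ordered from best quality to most aggressively quantized.
VARIANTS = [
    Variant("bf16", 700.0),
    Variant("Q8", 1200.0),
    Variant("Q4", 2000.0),
]

def pick_variant(current_load: float) -> Variant:
    """Serve the highest-quality variant whose capacity covers the current load;
    fall back to the most quantized one if nothing does."""
    for v in VARIANTS:
        if current_load <= v.capacity:
            return v
    return VARIANTS[-1]

for load in (400, 900, 1800, 2500):
    print(load, "->", pick_variant(load).name)
```

Run against a daily load curve, this quietly hands quiet-hour users bf16 and peak-hour users Q4, with no change visible in the API.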

Intelligent routing is possible too. No idea if anyone is doing this, but if they monitor what you send a bit and see that you generally reach for an expensive model for simple requests, they could totally substitute a highly quantized version of that model to answer them.
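A sketch of what that kind of routing could look like, purely hypothetical: a crude "is this prompt simple?" heuristic, a per-user history of recent requests, and a made-up `-q4` suffix naming the quantized deployment. A real router, if one exists, would presumably use something much smarter than word counts.

```python
from collections import defaultdict, deque

WINDOW = 20             # hypothetical: look at a user's last 20 requests
SIMPLE_THRESHOLD = 0.8  # hypothetical: "mostly simple" means at least 80%

# Per-user record of whether each recent request looked simple.
history: defaultdict[str, deque[bool]] = defaultdict(lambda: deque(maxlen=WINDOW))

def looks_simple(prompt: str) -> bool:
    """Crude heuristic: short, single-line prompts count as simple."""
    return len(prompt.split()) < 40 and "\n" not in prompt

def route(user: str, prompt: str, requested_model: str) -> str:
    """Return the name of the deployment that would actually serve this request."""
    simple = looks_simple(prompt)
    past = history[user]
    past.append(simple)
    mostly_simple = sum(past) / len(past) >= SIMPLE_THRESHOLD
    if simple and mostly_simple:
        return requested_model + "-q4"  # made-up name for a quantized variant
    return requested_model

print(route("alice", "What's the capital of France?", "big-model"))
print(route("bob", "Refactor this module:\n" + "code " * 100, "big-model"))
print(route("bob", "Thanks!", "big-model"))
```

The history check is what makes this "monitoring what you send": a user whose recent requests were mostly hard keeps getting the full model even for an occasional easy question.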

There are *so many tricks* that can be pulled here. Some are very reasonable trade-offs to make, some tread into outright misleading or fraudulent territory, and it's weirdly hard to draw the line between them.

2026-02-14 19:38:18

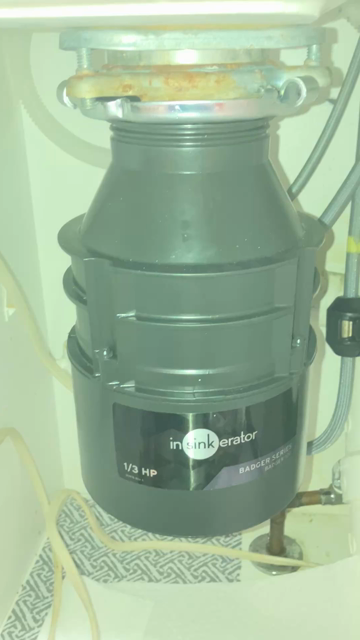

Happily, it was a straightforward swap of identical models, so I didn't have to replace the sink flange. It was an awkward business hefting it up to get it aligned and locked into the flange, but it took only a mild amount of swearing to finally get it right. 🤬

No leaks. Orders have been given to unfurl the mission accomplished banner.

(The wiring under this sink could use some taming. Whoever did it was certainly not paid by the hour. 🙄 )

2026-02-12 18:53:51

The risk of a hothouse Earth trajectory: #Climate May Go from Greenhouse to Hothouse: https://eos.org/articles/earths-climate-may-go-from-greenhouse-to-hothouse - uncertainty in climate models could mean Earth systems are perilously close to their tipping points, scientists warn.

2026-03-11 21:51:06

Nvidia debuts Nemotron 3 Super, a 120B-parameter hybrid MoE open-weight model; filing: Nvidia plans to spend $26B over the next five years to build open models (Will Knight/Wired)

https://www.wired.com/story/nvidia-investing-26-billion-open-source-models/…

2026-03-18 01:32:07

Filing: the DOD said it designated Anthropic a supply chain risk over concerns the AI company could disable its tech if the Pentagon crossed its "red lines" (Paresh Dave/Wired)

https://www.wired.com/story/department-of-defense-responds-to-anthropic-…