2026-04-19 09:15:45

Oh wow, I feel quite sore today. Maybe I didn't do "enough" during winter to keep my muscles in shape.

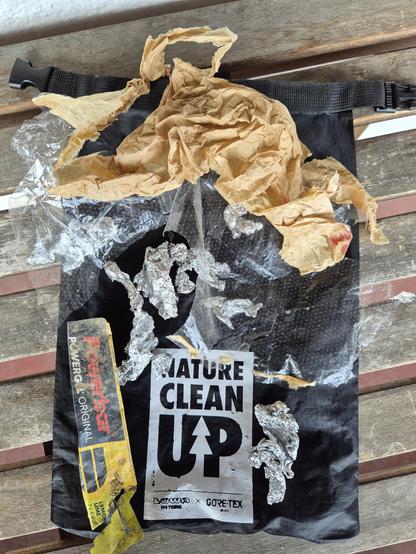

But it was worth it! (Pictures and video are yet to come). After the snow has mostly melted, it's time again to clean up all kinds of litter.

I once heard a metallic *pling* and knew: "New microspikes needed now..." But since the metal plate broke, and not just a chain, I hope the shop might replace them. I mean... broken metal after just ~1 year? Keep fi…

2026-02-18 19:30:52

Maybe it isn't a very ladylike pose, and that neck looks broken, but Harley is totally relaxed about the heavy snow falling outside. She knows Spring is close despite the current weather conditions.

#DogsOfMastadon #NapTime

2026-04-14 14:22:42

So, to follow up on this: I've caught it in action. Models that are quantized a little do only slightly worse with short contexts. Even going from f32 (as trained) to bf16 (as usually run) to q8 tends to do okay for "normal" context windows. At q4 you start feeling like "this model is a little stupid and gets stuck sometimes" (it is! It's just that it's still careening about in the space of "plausible" most of the time. Not good guesswork, but still in the zone). With long contexts, the probability of parameters collapsing to zero is higher, so the more context, the more likely you are to see brokenness.

And then at Q2 (2 bits per parameter) or Q1, the model falls apart completely. Parameters collapse to zero easily. You start seeing "All work and no play makes Jack a dull boy" sorts of behavior, with intense and unscrutinized repetition, followed by a hard stop when it just stops working.
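To make "parameters collapse to zero" concrete, here is a minimal Python sketch of round-to-nearest symmetric quantization with a single absmax scale for the whole tensor. The scheme, the Gaussian stand-in weights (σ = 0.02) and the function names are all illustrative assumptions. They do not reflect how any particular vendor or file format quantizes; real formats typically use per-block scales. The sketch only shows that fewer bits means more small weights round to exactly zero. It does not model the long-context effect described above.

```python
import random


def quantize_symmetric(weights, bits):
    """Round-to-nearest symmetric quantization with one absmax scale (illustrative)."""
    # Usable positive levels: 127 at 8 bits, 7 at 4 bits, 1 at 2 bits.
    # This simple scheme has no levels at 1 bit, so bits must be >= 2.
    levels = 2 ** (bits - 1) - 1
    scale = max(abs(w) for w in weights) / levels
    if scale == 0:
        return list(weights)  # all-zero tensor: nothing to quantize
    # Map each weight to the nearest integer level, then back to a float.
    return [round(w / scale) * scale for w in weights]


def zero_fraction(weights, bits):
    """Share of weights that end up exactly 0.0 after quantization."""
    q = quantize_symmetric(weights, bits)
    return sum(1 for x in q if x == 0.0) / len(q)


random.seed(0)
# Hypothetical stand-in for one weight tensor: small values clustered around 0.
weights = [random.gauss(0.0, 0.02) for _ in range(100_000)]

for bits in (8, 4, 2):
    print(f"{bits}-bit: {zero_fraction(weights, bits):.1%} of weights rounded to zero")
```

Under these assumptions the zero fraction climbs from roughly one percent at 8 bits to a large majority at 2 bits. Exact figures depend on the seed and on the weight distribution.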

And quantization is a knob that a model vendor can turn relatively easily. (They have to regenerate the model from the base weights with more quantization, but that's a data transformation on the order of running a terabyte through a straightforward, fast process, not anything like training.)

If you have 1000 customers and enough equipment to handle the requests of 700, going from bf16 to q8 is a no-brainer. Suddenly you can handle the load and have a little spare capacity. Your customers get worse results and probably pay the same per token (or they're on a subscription that hides the cost anyway, so you are even freer to make trade-offs; there's a reason subscription products are kinda poorly described).
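The capacity arithmetic can be sketched the same way. This sketch assumes a hypothetical 70-billion-parameter model and counts only the memory taken by the weights. It also treats serving capacity as scaling inversely with bits per weight. That is a simplifying assumption, since KV cache, activations and compute also limit real throughput.

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Memory taken by the weights alone, in gigabytes (10**9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


N_PARAMS = 70e9  # hypothetical model size, chosen only for illustration

for name, bits in (("bf16", 16), ("q8", 8), ("q4", 4)):
    print(f"{name}: {weight_memory_gb(N_PARAMS, bits):.0f} GB of weights")

# Numbers from the post: 1000 customers, hardware sized for 700 at bf16.
demand, capacity_bf16 = 1000, 700
# Simplifying assumption: capacity scales with 16 / bits, i.e. with how many
# replicas of the weights fit into the same memory.
capacity_q8 = capacity_bf16 * 16 // 8
print(f"q8 capacity {capacity_q8} vs demand {demand}: fits = {capacity_q8 >= demand}")
```

Under that assumption, halving the bits per weight turns capacity for 700 customers into capacity for about 1400, which is the "little spare capacity" and then some.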

It's also possible for them to vary this across the day. Using the model during a quiet period? Maybe you get an instance running at bf16. Using it during a high-traffic period? You get a Q4 model.

Or intelligent routing is possible. No idea if anyone is doing this, but if they monitor what you send a bit and see that you generally reach for an expensive model for simple requests, they could totally substitute a highly quantized version of the model to answer them.

There are *so many tricks* that can be pulled here. Some of them are very reasonable, some of them tread into outright misleading or fraudulent territory, and it's weirdly hard to draw the line between them.

2026-03-09 17:05:07

Capitalists are the reason people become socialists.

https://www.ign.com/articles/ea-lays-off-staff-across-all-battlefield-studios-following-record-breaking-battlefield-6-launch

2026-03-16 08:51:40

one reason recommendation systems seem terrible to me: I used to go to bookstores basically to read the bookshelves, not really looking for a book to read. similarly, I often open up netflix with a vague feeling of "maybe I'll watch something", but really what interests me is the browsing, and I'm unsatisfied by the weird browsing experience. sometimes I do hunt and want something to pounce on, but most of the time I'm just exploring

2026-03-12 19:12:11

Nice here in the fediverse.

But have you ever been to Ankh-Morpork?

City of a thousand surprises

#GNUPterry #gnuterrypratchett #GNUSirPterry

2026-03-02 13:41:48

Rebuilding public trust in AI requires meaningful citizen engagement, transparent governance, and robust legislation. Technology itself is not the problem. The issue is that few people trust institutions to deploy it wisely and for their benefit. So the first step is to answer the question: What's in it for me?

2026-01-24 14:47:26

Weekend #Plankton Factoid 🦠🦐

The polar vortex has caused a cold snap here, and the Great Lakes are rapidly forming ice. Under this thick ice, algae are growing. These are normal diatom phytoplankton, which change to form thick chains, attaching to the underside of the ice where light is optimal. We clearly see this brown ice and water in the wake of our icebreakers. This occurs near the pole…

2026-02-09 07:55:17

If you're following my #publiicvoit blog via Atom feed:

I might have found a fix for my broken feed format: https://github.com/novoid/lazyblorg/issues/24

Please report back a…

2026-03-07 22:32:04

No, really, my feeling for people who believe that they are not engaged in promoting Fascism when using LLMs like ChatGPT and Claude and Grok is pure pity. I can't even be angry with them anymore when it is clear that if those LLMs are good for anything, it is breaking people's connections to reality.