2026-03-14 18:48:10

There are some changes for the 2026 tax filing season that people who are 65 years of age and older should be aware of.

The most recent is the enhanced deduction for seniors.

https://www.irs.gov/newsroom/2026-filing-season-updates-and-resources-for-…

2026-03-14 15:49:00

Here's another short story that reflects on our extolling of technology...

The Flying Machine – Ray Bradbury

https://xpressenglish.com/our-stories/flying-machine/

2026-04-14 18:44:17

Ginn returns to Aviators sideline after DWI arrest https://www.espn.com/united-football-league/story/_/id/48485633/ted-ginn-jr-resumes-coaching-aviators-following-dwi-arrest

2026-03-14 08:33:46

2026-02-14 12:00:06

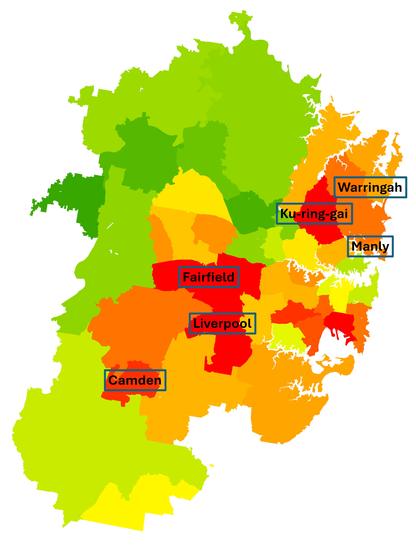

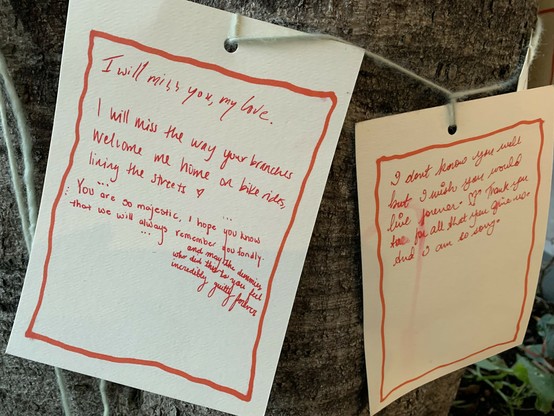

"Tree planting can combat urban heat, but some neighborhoods are falling behind"

#Trees #Environment

https://

2026-04-16 00:44:16

🇺🇦 #NowPlaying on KEXP's #DriveTime

Flyying Colours:

🎵 Goodtimes

#FlyyingColours

https://flyyingcolours.bandcamp.com/track/goodtimes-3

https://open.spotify.com/track/5OK5Ayd5kjcfXO7bM8HVYI

2026-04-14 14:22:42

So to follow up on this, I've caught it in action. Models, when quantized a bit, just do a bit more poorly with short contexts. Even going from f32 (as trained) to bf16 (as usually run) to q8 tends to do okay for "normal" context windows. And at q4 you start feeling like "this model is a little stupid and gets stuck sometimes" (it is! It's just that it's still careening about in the space of "plausible" most of the time. Not good guesswork, but still in the zone). With long contexts, the probability of parameters collapsing to zero is higher, so the more context you have, the more likely you are to see brokenness.

And then at Q2 (2 bits per parameter) or Q1, the model falls apart completely. Parameters collapse to zero easily. You start seeing "all work and no play makes Jack a dull boy" sorts of behavior, with intense and unscrutinized repetition, followed by a hard stop when it just stops working.
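To make the "collapse to zero" idea concrete, here's a toy sketch. It assumes the simplest possible scheme (one scale for the whole tensor, symmetric round-to-nearest) and random Gaussian "weights", neither of which is what a real model or a real quantizer uses, so treat the numbers as illustrative only. The names `quantize` and `zero_fraction` are made up for this example.

```python
import random

def quantize(weights, bits):
    """Toy symmetric round-to-nearest quantization, then dequantize.

    Assumption: a single scale for the whole list. Real quantizers
    typically use per-block scales and smarter rounding.
    """
    levels = 2 ** (bits - 1) - 1          # 127 for 8 bits, 7 for 4, 1 for 2
    scale = max(abs(w) for w in weights) / levels
    return [round(w / scale) * scale for w in weights]

def zero_fraction(weights):
    """Share of weights that ended up exactly zero."""
    return sum(1 for w in weights if w == 0.0) / len(weights)

rng = random.Random(0)
weights = [rng.gauss(0.0, 1.0) for _ in range(10_000)]  # stand-in for real weights

results = {}
for bits in (8, 4, 2):
    q = quantize(weights, bits)
    mse = sum((a - b) ** 2 for a, b in zip(weights, q)) / len(weights)
    results[bits] = (mse, zero_fraction(q))
    print(f"{bits}-bit: mean squared error {mse:.5f}, "
          f"zeroed weights {results[bits][1]:.1%}")
```

With fewer bits the grid gets coarser, so more small weights round to exactly zero and the reconstruction error grows. This says nothing about context length; it only shows the per-weight effect.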

And quantization is a knob that a model vendor can turn relatively easily. (They have to regenerate the model from the base with more quantization, but it's a data transformation on the order of running a terabyte through a straightforward and fast process, nothing like training.)

If you have 1000 customers and enough equipment to handle the requests of 700, going from bf16 to q8 is a no-brainer. Suddenly you can handle the load and have a little spare capacity. Customers get worse results and probably pay the same per token (or they're on a subscription that hides the cost anyway, so you are even freer to make trade-offs. There's a reason that subscription products are kinda poorly described).
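The arithmetic behind that can be sketched as below. The big assumption (mine, not measured) is that serving capacity scales inversely with bytes per parameter, which is an idealized upper bound; real gains depend on the hardware and serving stack. `idealized_capacity` is a hypothetical helper for this example.

```python
# Bytes per parameter for each format (q8 and q4 ignore scale/metadata overhead).
BYTES_PER_PARAM = {"f32": 4.0, "bf16": 2.0, "q8": 1.0, "q4": 0.5}

def idealized_capacity(base_capacity, base_fmt, new_fmt):
    """Capacity after switching formats, assuming capacity ~ 1 / bytes per param.

    This is an optimistic upper bound, not a prediction.
    """
    return base_capacity * BYTES_PER_PARAM[base_fmt] / BYTES_PER_PARAM[new_fmt]

customers = 1000
capacity_bf16 = 700

# How much more throughput is needed just to cover everyone.
required_factor = customers / capacity_bf16

capacity_q8 = idealized_capacity(capacity_bf16, "bf16", "q8")

print(f"needed speedup: {required_factor:.2f}x")
print(f"idealized q8 capacity: {capacity_q8:.0f} customers")
```

The vendor only needs about a 1.43x improvement to cover the load, so even if q8 delivers well under the idealized 2x, the gap can close.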

It's also possible for them to vary this across a day. Use models during quieter periods? Maybe you get an instance running at bf16. Use it during a high-use period? You get a Q4 model.

Or intelligent routing is possible. No idea if anyone is doing this, but if they monitor what you send a bit, and you generally shoot for an expensive model for simple requests? They could totally substitute a highly quantized version of the model to answer the question.
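Purely as a thought experiment, load-based and request-based substitution could look something like this. Everything here is hypothetical: the `pick_variant` function, the load metric, and the thresholds are invented for illustration, and nothing suggests any vendor actually does it this way.

```python
def pick_variant(load, request_is_simple):
    """Choose which quantization of a model serves a request.

    load: fraction of serving capacity in use, 0.0 to 1.0 (hypothetical metric).
    request_is_simple: result of some upstream guess about the request
        (hypothetical; how that guess would be made is out of scope).
    Thresholds are made up for the sketch.
    """
    if request_is_simple:
        return "q4"      # cheap answer for a request judged to be easy
    if load < 0.5:
        return "bf16"    # quiet period: serve the full-precision variant
    if load < 0.85:
        return "q8"      # moderate load: mild quantization
    return "q4"          # peak load: heavy quantization

print(pick_variant(0.2, False))   # quiet period, hard request
print(pick_variant(0.95, False))  # peak period, hard request
print(pick_variant(0.2, True))    # quiet period, simple request
```

From the outside, the customer sees the same model name in every case, which is what makes this hard to detect.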

There are •so many tricks• that can be pulled here. Some of them are very reasonable to make, some of them tread into outright misleading or fraudulent territory, and it's weirdly hard to draw the line between them.

2026-01-16 01:21:28

2026-03-15 13:36:37

Why are we having fewer children?

(Interview with Berkay Ozcan, Professor at LSE)

- Couple formation happens at later age

- Women are choosing "careers" and not just "jobs"

- More people choose not to have kids at all

What else is going on? Short answer: we don't know yet

Even in countries providing a lot of support to parents, the fertility rate has still declined

Immigration is no silver bullet. It's part of the solution, not th…

2026-03-16 01:54:12

The entire machinery of online discourse around building and creating has been so thoroughly captured by entrepreneurial "logic"

that we've lost the language to describe what it feels like to simply make a thing that helps someone,

give it away, and move on with your life.

I've been feeling this for a while now, and I suspect a lot of folks who have the itch to build feel it too, even if they haven't articulated it.