2026-05-11 16:36:44

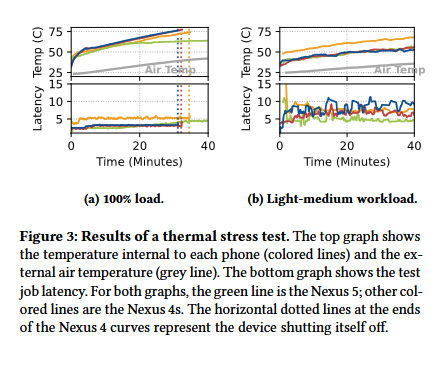

🚯 Junkyard Computing: Repurposing Discarded Smartphones to Minimize Carbon

#computing

2026-04-11 10:09:53

RE: https://mastodon.social/@ispreview/116377243959305777

The high position of the UK in the Spamhaus C2 list represents a big failure of the UK's (now decade-long) investment in cyber security, and a small win for the reliability of its networks.

2026-02-27 12:22:08

When DHS, USCIS, and DOGE announced the overhaul of the SAVE program, they explicitly linked the effort to a campaign to combat the “taint” of noncitizen voter fraud, a long-standing claim tied to the lie that the 2020 presidential election was stolen. By all appearances, the administration is poised to use the SAVE program to prop up these false claims, and sympathetic local officials may deploy SAVE to concoct evidence.

This move poses serious risks to vo…

2026-04-27 07:15:23

“In this work, we conduct a large-scale simulation of how users might delegate work to LLMs across 52 professional domains. We find that current LLMs are unreliable delegates: even frontier models corrupt an average of 25% of document content over long workflows, with sparse but severe errors that silently compound over time.”

Good to see the issue addressed explicitly, even though the results aren’t surprising—why would anyone expect LLMs to be reliable!?

2026-03-24 12:22:12

RE: https://toot.cat/@plexus/116283016837715719

It should also be noted that, beyond the ethical, political and environmental issues, this simply doesn't work:

1. On average, there is no mid- to long-term productivity gain in actual real-world software development, as opposed to a "wow, see what it can do" demo. (Multiple studies have shown that by now.)

2. It won't help with 90% of the work of professionally making software, which, believe it or not, isn't coding. That 90% is designing and planning the software, which happens both upfront and during development.

Maybe you have seen that Microsoft recently rolled back features it had added to Windows?

E.g. Copilot in Notepad. What they did was essentially outsource project management to developers, who then outsourced it to LLMs. But an LLM can't plan and design software, and can arguably barely even generate code that works (as in reliable and performant). So now they have a buggy mess of features no one wants, and they're rolling it back.

There are no silver bullets in software development.

2026-04-30 13:32:37

PSA: that Linux root exploit is significant. Nobody cares about the number of bytes in the exploit. It's about as meaningless as the also popular "this attack takes only xx seconds / xx minutes". If attackers want to become root, they really don't care whether it takes 700 or 7000 or 70000 bytes, or whether it takes 2 seconds or 5 minutes. These are irrelevant metrics for impact.

Relevant Qs are: how reliable the exploit is, how widespread the vulnerability, etc.

2026-05-01 20:42:01

from my link log —

What SRE is not.

https://blog.relyabilit.ie/what-sre-is-not/

saved 2021-11-29 https://dotat.at/:/T0UG8.html

2026-04-27 19:27:59

We did a few small things to polish the #LinuxBoot channel, if you're interested! 🥳