2025-08-12 03:13:07

First! First?

Good morning. But I'm going back to bed, partly because the group a few tents over only switched off their culturally diverse Hudigäggeler an hour ago.

#pastpuzzle 95

🟩🟩🟥🟥 (-14)

🟩🟩🟩🟩 (0)

▪️▪️▪️▪️

▪️▪️▪️▪️

2/4 🥈

2025-08-11 12:55:26

Imagine this: you walk into a room and are recognized immediately, without cameras, without sensors, simply through the Wi-Fi signals around you. 😳 What sounds like science fiction has now become reality!

Read the article: https://heise.de/-10515620?wt_mc=sm.re

2025-10-11 22:54:19

2025-10-11 21:03:07

Appreciate the nod that spatial data viz is hard, @…!

The motivation part about expensive GIS tools is a little off though 💸 :qgis:

https://m…

2025-10-12 16:18:07

📖 I'm afraid of #Groupies. The screaming and tugging is really awful. So no #Buchmesse.🤭

That's why I'm presenting to you illustrious #Nerds (according to Sascha Lobo 😁) one of my

2025-08-13 07:33:57

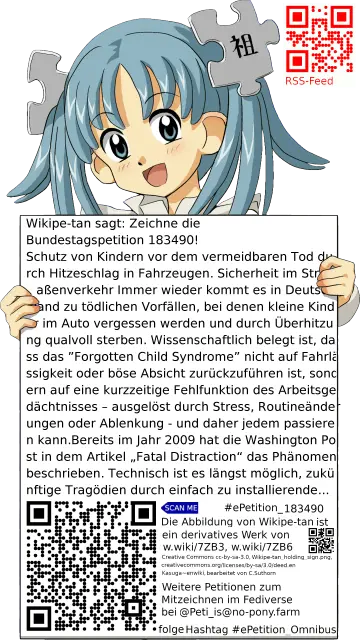

ePetition 183490 to the Bundestag, in the area of road traffic safety, titled

Protecting children from preventable death by heatstroke in vehicles

can be co-signed until Wednesday, 24 September 2025, 01:00 UTC and reads: With this petition, statutory r…

2025-10-12 16:13:28

Read back | How the anti-immigration protest in Amsterdam unfolded - NU.nl

https://www.nu.nl/binnenland/6372144/teruglezen-zo-verliep-het-anti-immigratieprotest-in-amsterdam.html

Perhaps the most important news of today: ⬇️ 😎😎

-Refugee organizations received a hundred thousand euros through campaign against hate-

Link to the campaign mentioned in the article:

https://www.degoedezaak.org/sponsorloop-draai-dit-extreemrechtse-protest-om-in-steun-voor-het-goede-doel/

Donations are still welcome!

(Via @… )

2025-10-11 16:41:00

Airline association chief calls for drones to be shot down

The head of the German airline association is annoyed by politicians' hesitancy, and has bad news for passengers.

https://www.

2025-08-13 07:33:00

Pixel 10 Fold: Google shows its new foldable

Google offers a first look at the new Pixel 10 Pro Fold ahead of the official unveiling on 20 August.

https://www.heise.de/news/Pi…

2025-09-11 16:09:00