2026-02-28 10:20:01

As salty as I am about it, there's also another way to think about this. For anyone who still has connections to folks on the right (which is perhaps unlikely for anyone on this server, I digress), the cult that has consumed them thrives on isolation and grievance.

The words "you were right" have the potential to cut through the programming and open up an opportunity for reconnection. The modern conspiratorial cult of the Right has been built partially around people who were told they were wrong or were crazy. In the vast majority of cases, they were wrong and even when they were right they completely misunderstood why, but we'll skip that for now. Liberals making fun of them (even the times when they definitely earned it) has pushed them further and further into their ideological hole.

The thing about those words, "you were right," in this context is that the way they offer reconnection also requires them to take one little step of betraying their ideology to accept them. So they must choose between maintaining allegiance to a pedophile or finally getting to feel superior after years of living in an illusion of persecution.

Under the ideology of the Right, admitting one is wrong is a weakness. It is admitting defeat. They have to "own the libs" by saying things, things that they know aren't true, in order to feel dominant. But these things are often so absurd that they end up being made fun of, feeling even more weak and pathetic, reinforcing their fear and alienation.

Offering what they're looking for can offer a way out, but only if they're willing to start to recognize the thing they've supported for what it is.

And they were right about some things. They were right that Bill Gates was a terrible person. I've had plenty of liberals defend him based on his philanthropy washing, but he's awful and always has been. The Epstein links make that blatant. They intuitively recognized him and didn't trust him, even if they were wildly off base about *how and why* he shouldn't be trusted... Even if their correct mistrust was leveraged into one of the most destructive conspiracy theories ever (vaccine denial and COVID vaccine avoidance).

They were right about Bill Clinton. He was always shady as fuck. Sure, the people who attacked him at the time turned out to be even more shady but that's not the point right now. He was connected to Epstein and that was always creepy as fuck.

And the Epstein thing was an open secret that liberals ignored for a long time. It was seen as some weird thing that right wing nutjobs believed about the Clintons. But it was true. Not all of it, and there has always been an antisemitic element to the right wing interpretation of the Epstein stuff, but his whole pedophile conspiracy was always kind of real.

The whole "Illuminati"/deep state thing is a vast oversimplification, an attempt to make comprehensible an incredibly complex set of interlocking and emergent behaviors. But Epstein did very much want to remake the world, to create a new world order, and he absolutely played a part in it.

The Right wing nutjobs talked about global authoritarianism, Blackhawks flying over American cities, masked men with guns disarming and executing legal gun owners in the streets. That's all happening right now.

The "FEMA concentration camps" are not actually that far off. ICE and FEMA are sister agencies, both under DHS. I'd be more than happy to call that one "close enough" in order to hear some MAGA admit that ICE is, in fact, building concentration camps.

There was always a huge millennialist element to these things. They tended to be connected to "the antichrist." It was absurd, especially for me as someone who no longer identifies as a Christian. But I'll even acquiesce to that to a degree. The "number of the Beast" is 666. That's just the sum of the letter values in the Hebrew spelling of "Nero Caesar." Revelation focuses a lot on Nero coming back to life after his death. His death that involved a head wound, thus the line from Revelation 13:3:

> And I saw one of his heads as if it had been mortally wounded, and his deadly wound was healed. And all the world marveled and followed the beast.

The parallels between Trump and Nero are easy to draw, and Trump's ear wound feels pretty on-the-nose for this. I don't believe in "prophecy" in this way. I think that there are patterns, and useful patterns can become encoded in belief systems. But I will, again, happily call this one "close enough" for anyone on that side willing to also acknowledge it. I'm happy to meet on that common ground, because anyone who accepts it must recognize that their duty is to fight against it.

A lot of these correct nuggets are embedded in a framework of religious extremism and antisemitism. The vast majority of the beliefs holding these together are wildly wrong and incredibly toxic. But giving them some room to feel validated, listened to, and understood can give them some room to admit the things they got wrong.

Cult de-programming starts with an opening. People have to talk through their own thoughts, hear their own inconsistencies. Guiding questions can help them untangle these things for themselves. And it all starts by having enough room to feel safe, to not feel cornered, to not feel stupid. Admitting mistakes means being vulnerable, and the MAGA cult is built on fear. It's built on exploiting vulnerability and locking it away.

De-programming takes a long time. It's not easy. It takes patience. But every person who comes out does so with a powerful perspective, a deep understanding, that can be turned back against it. The best people at getting people out of cults are former members. Some of the most dedicated antifa are former fascists who understood their mistakes and dedicate their lives to fixing them.

2026-01-26 22:10:47

A group of YouTubers with a combined 6.2M subscribers adds Snap to a class action lawsuit, alleging the company trained its AI systems on their video content (Sarah Perez/TechCrunch)

https://techcrunch.com/2026/01/26/youtuber…

2026-02-26 16:35:04

Seeking a shortcut to becoming exceptional is the best guarantee of remaining mid forever.

“The organizations believe that through a combination of online learning and AI tutoring, average performers can become exceptional in a compressed amount of time.”

https://www.…

2026-01-27 09:10:39

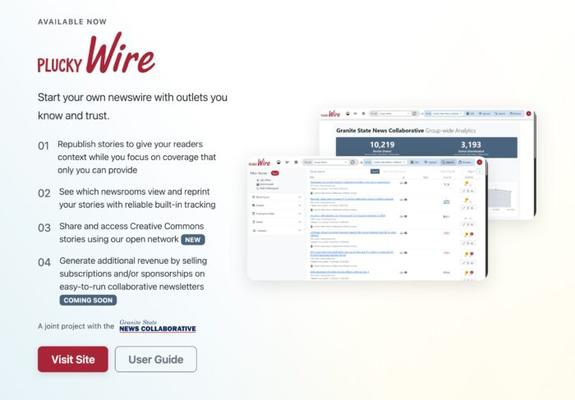

A look at Plucky Wire, a website for local newsrooms to find and share stories with each other for republication; it is now being used by over 200 publishers (Sarah Scire/Nieman Lab)

https://www.niemanlab.org/2026/01/a-scrappy-story-shar…

2026-02-25 16:08:08

Replaced article(s) found for cs.LG. https://arxiv.org/list/cs.LG/new

[4/6]:

- Neural Proposals, Symbolic Guarantees: Neuro-Symbolic Graph Generation with Hard Constraints

Chuqin Geng, Li Zhang, Mark Zhang, Haolin Ye, Ziyu Zhao, Xujie Si

https://arxiv.org/abs/2602.16954 https://mastoxiv.page/@arXiv_csLG_bot/116102434757760085

- Multi-Probe Zero Collision Hash (MPZCH): Mitigating Embedding Collisions and Enhancing Model Fres...

Ziliang Zhao, et al.

https://arxiv.org/abs/2602.17050 https://mastoxiv.page/@arXiv_csLG_bot/116102517335590034

- MASPO: Unifying Gradient Utilization, Probability Mass, and Signal Reliability for Robust and Sam...

Fu, Lin, Fang, Zheng, Hu, Shao, Qin, Pan, Zeng, Cai

https://arxiv.org/abs/2602.17550 https://mastoxiv.page/@arXiv_csLG_bot/116102581561441103

- A Theoretical Framework for Modular Learning of Robust Generative Models

Corinna Cortes, Mehryar Mohri, Yutao Zhong

https://arxiv.org/abs/2602.17554 https://mastoxiv.page/@arXiv_csLG_bot/116102582216715527

- Multi-Round Human-AI Collaboration with User-Specified Requirements

Sima Noorani, Shayan Kiyani, Hamed Hassani, George Pappas

https://arxiv.org/abs/2602.17646 https://mastoxiv.page/@arXiv_csLG_bot/116102592047544971

- NEXUS: A compact neural architecture for high-resolution spatiotemporal air quality forecasting i...

Rampunit Kumar, Aditya Maheshwari

https://arxiv.org/abs/2602.19654 https://mastoxiv.page/@arXiv_csLG_bot/116125610403473755

- Augmenting Lateral Thinking in Language Models with Humor and Riddle Data for the BRAINTEASER Task

Mina Ghashami, Soumya Smruti Mishra

https://arxiv.org/abs/2405.10385 https://mastoxiv.page/@arXiv_csCL_bot/112472190479013167

- Watermarking Language Models with Error Correcting Codes

Patrick Chao, Yan Sun, Edgar Dobriban, Hamed Hassani

https://arxiv.org/abs/2406.10281 https://mastoxiv.page/@arXiv_csCR_bot/112636307340218522

- Learning to Control Unknown Strongly Monotone Games

Siddharth Chandak, Ilai Bistritz, Nicholas Bambos

https://arxiv.org/abs/2407.00575 https://mastoxiv.page/@arXiv_csMA_bot/112715733875586837

- Classification and reconstruction for single-pixel imaging with classical and quantum neural netw...

Sofya Manko, Dmitry Frolovtsev

https://arxiv.org/abs/2407.12506 https://mastoxiv.page/@arXiv_quantph_bot/112806295477530195

- Statistical Inference for Temporal Difference Learning with Linear Function Approximation

Weichen Wu, Gen Li, Yuting Wei, Alessandro Rinaldo

https://arxiv.org/abs/2410.16106 https://mastoxiv.page/@arXiv_statML_bot/113350611306532443

- Big data approach to Kazhdan-Lusztig polynomials

Abel Lacabanne, Daniel Tubbenhauer, Pedro Vaz

https://arxiv.org/abs/2412.01283 https://mastoxiv.page/@arXiv_mathRT_bot/113587812663608119

- MoEMba: A Mamba-based Mixture of Experts for High-Density EMG-based Hand Gesture Recognition

Mehran Shabanpour, Kasra Rad, Sadaf Khademi, Arash Mohammadi

https://arxiv.org/abs/2502.17457 https://mastoxiv.page/@arXiv_eessSP_bot/114069047434302054

- Tightening Optimality gap with confidence through conformal prediction

Miao Li, Michael Klamkin, Russell Bent, Pascal Van Hentenryck

https://arxiv.org/abs/2503.04071 https://mastoxiv.page/@arXiv_statML_bot/114120074927291283

- SEED: Towards More Accurate Semantic Evaluation for Visual Brain Decoding

Juhyeon Park, Peter Yongho Kim, Jiook Cha, Shinjae Yoo, Taesup Moon

https://arxiv.org/abs/2503.06437 https://mastoxiv.page/@arXiv_csCV_bot/114142690988862508

- How much does context affect the accuracy of AI health advice?

Prashant Garg, Thiemo Fetzer

https://arxiv.org/abs/2504.18310 https://mastoxiv.page/@arXiv_econGN_bot/114414380916957986

- Reproducing and Improving CheXNet: Deep Learning for Chest X-ray Disease Classification

Daniel J. Strick, Carlos Garcia, Anthony Huang, Thomas Gardos

https://arxiv.org/abs/2505.06646 https://mastoxiv.page/@arXiv_eessIV_bot/114499319986528625

- Sharp Gaussian approximations for Decentralized Federated Learning

Soham Bonnerjee, Sayar Karmakar, Wei Biao Wu

https://arxiv.org/abs/2505.08125 https://mastoxiv.page/@arXiv_statML_bot/114505047719395949

- HoloLLM: Multisensory Foundation Model for Language-Grounded Human Sensing and Reasoning

Chuhao Zhou, Jianfei Yang

https://arxiv.org/abs/2505.17645 https://mastoxiv.page/@arXiv_csCV_bot/114572928659057348

- A Copula Based Supervised Filter for Feature Selection in Diabetes Risk Prediction Using Machine ...

Agnideep Aich, Md Monzur Murshed, Sameera Hewage, Amanda Mayeaux

https://arxiv.org/abs/2505.22554 https://mastoxiv.page/@arXiv_statML_bot/114589983451462525

- Synthesis of discrete-continuous quantum circuits with multimodal diffusion models

Florian Fürrutter, Zohim Chandani, Ikko Hamamura, Hans J. Briegel, Gorka Muñoz-Gil

https://arxiv.org/abs/2506.01666 https://mastoxiv.page/@arXiv_quantph_bot/114618420761346125

toXiv_bot_toot

2026-03-28 01:16:19

Four weeks into a war that was going to take four days,

and that has so far cost the US about $30-40bn

and Israel $300m a day,

Washington is further away from a diplomatic agreement with Iran than it was in May 2025.

Not only has the war failed to persuade Iran to agree to dismantle its nuclear programme in the comprehensive and irreversible way the US demanded in a 15-point paper that it tabled on 23 May last year,

Washington is now having to negotiate to reopen…

2026-02-27 14:33:34

Replaced article(s) found for math.DG. https://arxiv.org/list/math.DG/new

[1/1]:

- On the modified $J$-equation

Ryosuke Takahashi

https://arxiv.org/abs/2207.04953

- Surfaces with flat normal connection in 4-dimensional space forms

Naoya Ando, Ryusei Hatanaka

https://arxiv.org/abs/2501.15780

- Regularized $\zeta_{\Delta}(1)$ for Polyhedra

Alexey Yu. Kokotov, Dmitrii V. Korikov

https://arxiv.org/abs/2502.03351 https://mastoxiv.page/@arXiv_mathDG_bot/113955669526276293

- General Chen-Ricci inequalities for Riemannian submersions and Riemannian maps

Ravindra Singh, Kiran Meena, Kapish Chand Meena

https://arxiv.org/abs/2509.15281 https://mastoxiv.page/@arXiv_mathDG_bot/115246828823405190

- Some configuration results for area-minimizing cones

Yongsheng Zhang

https://arxiv.org/abs/2510.17240 https://mastoxiv.page/@arXiv_mathDG_bot/115411416287934120

- Real Bers embedding on the line: Fisher-Rao linearization, Schwarzian curvature, and scattering c...

Hy Lam

https://arxiv.org/abs/2602.07373 https://mastoxiv.page/@arXiv_mathDG_bot/116045447030638429

- Explicit Hamiltonian representations of meromorphic connections and duality from different perspe...

Mohamad Alameddine, Olivier Marchal

https://arxiv.org/abs/2406.19187 https://mastoxiv.page/@arXiv_mathph_bot/112692974532066693

- An alternative solvability criterion for the Dirichlet problem for the minimal surface equation a...

Ari J. Aiolfi, Giovanni da Silva Nunes, Jaime Ripoll, Lisandra Sauer, Rodrigo Soares

https://arxiv.org/abs/2508.09806 https://mastoxiv.page/@arXiv_mathAP_bot/115026282591071982

- Gromov's Compactness Theorem for the Intrinsic Timed-Hausdorff Distance

Mauricio Che, Raquel Perales, Christina Sormani

https://arxiv.org/abs/2510.13069 https://mastoxiv.page/@arXiv_mathMG_bot/115382835673596437

- Nearly optimal spectral gaps for random Belyi surfaces

Yang Shen, Yunhui Wu

https://arxiv.org/abs/2511.02517 https://mastoxiv.page/@arXiv_mathSP_bot/115496082425305449

toXiv_bot_toot

2026-01-27 16:25:54

The Allen Institute for AI launches SERA, open-source coding agents including 32B- and 8B-parameter models designed to adapt to private codebases (Kyt Dotson/SiliconANGLE)

https://siliconangle.com/2026/01/27/ai2-launches-family-open-sou…

2026-02-25 16:07:47

Replaced article(s) found for cs.LG. https://arxiv.org/list/cs.LG/new

[2/6]:

- Performance Asymmetry in Model-Based Reinforcement Learning

Jing Yu Lim, Rushi Shah, Zarif Ikram, Samson Yu, Haozhe Ma, Tze-Yun Leong, Dianbo Liu

https://arxiv.org/abs/2505.19698 https://mastoxiv.page/@arXiv_csLG_bot/114578810521008766

- Towards Robust Real-World Multivariate Time Series Forecasting: A Unified Framework for Dependenc...

Jinkwan Jang, Hyungjin Park, Jinmyeong Choi, Taesup Kim

https://arxiv.org/abs/2506.08660 https://mastoxiv.page/@arXiv_csLG_bot/114664238967892509

- Wasserstein Barycenter Soft Actor-Critic

Zahra Shahrooei, Ali Baheri

https://arxiv.org/abs/2506.10167 https://mastoxiv.page/@arXiv_csLG_bot/114675175949432731

- Foundation Models for Causal Inference via Prior-Data Fitted Networks

Yuchen Ma, Dennis Frauen, Emil Javurek, Stefan Feuerriegel

https://arxiv.org/abs/2506.10914 https://mastoxiv.page/@arXiv_csLG_bot/114675529854402158

- FREQuency ATTribution: benchmarking frequency-based occlusion for time series data

Dominique Mercier, Andreas Dengel, Sheraz Ahmed

https://arxiv.org/abs/2506.18481 https://mastoxiv.page/@arXiv_csLG_bot/114738421450807709

- Complexity-aware fine-tuning

Andrey Goncharov, Daniil Vyazhev, Petr Sychev, Edvard Khalafyan, Alexey Zaytsev

https://arxiv.org/abs/2506.21220 https://mastoxiv.page/@arXiv_csLG_bot/114754764750730849

- Transfer Learning in Infinite Width Feature Learning Networks

Clarissa Lauditi, Blake Bordelon, Cengiz Pehlevan

https://arxiv.org/abs/2507.04448 https://mastoxiv.page/@arXiv_csLG_bot/114818005803079705

- A hierarchy tree data structure for behavior-based user segment representation

Liu, Kang, Iyer, Malik, Li, Wang, Lu, Zhao, Wang, Liu, Liu, Liang, Yu

https://arxiv.org/abs/2508.01115 https://mastoxiv.page/@arXiv_csLG_bot/114975999992144374

- One-Step Flow Q-Learning: Addressing the Diffusion Policy Bottleneck in Offline Reinforcement Lea...

Thanh Nguyen, Chang D. Yoo

https://arxiv.org/abs/2508.13904 https://mastoxiv.page/@arXiv_csLG_bot/115060568241390847

- Uncertainty Propagation Networks for Neural Ordinary Differential Equations

Hadi Jahanshahi, Zheng H. Zhu

https://arxiv.org/abs/2508.16815 https://mastoxiv.page/@arXiv_csLG_bot/115094785677272005

- Learning Unified Representations from Heterogeneous Data for Robust Heart Rate Modeling

Zhengdong Huang, Zicheng Xie, Wentao Tian, Jingyu Liu, Lunhong Dong, Peng Yang

https://arxiv.org/abs/2508.21785 https://mastoxiv.page/@arXiv_csLG_bot/115128450608548173

- Monte Carlo Tree Diffusion with Multiple Experts for Protein Design

Liu, Cao, Jiang, Luo, Duan, Wang, Sosnick, Xu, Stevens

https://arxiv.org/abs/2509.15796 https://mastoxiv.page/@arXiv_csLG_bot/115247429156900905

- From Samples to Scenarios: A New Paradigm for Probabilistic Forecasting

Xilin Dai, Zhijian Xu, Wanxu Cai, Qiang Xu

https://arxiv.org/abs/2509.19975 https://mastoxiv.page/@arXiv_csLG_bot/115264498084813952

- Why High-rank Neural Networks Generalize?: An Algebraic Framework with RKHSs

Yuka Hashimoto, Sho Sonoda, Isao Ishikawa, Masahiro Ikeda

https://arxiv.org/abs/2509.21895 https://mastoxiv.page/@arXiv_csLG_bot/115287261047939306

- From Parameters to Behaviors: Unsupervised Compression of the Policy Space

Davide Tenedini, Riccardo Zamboni, Mirco Mutti, Marcello Restelli

https://arxiv.org/abs/2509.22566 https://mastoxiv.page/@arXiv_csLG_bot/115287379672141023

- RHYTHM: Reasoning with Hierarchical Temporal Tokenization for Human Mobility

Haoyu He, Haozheng Luo, Yan Chen, Qi R. Wang

https://arxiv.org/abs/2509.23115 https://mastoxiv.page/@arXiv_csLG_bot/115293273559547106

- Polychromic Objectives for Reinforcement Learning

Jubayer Ibn Hamid, Ifdita Hasan Orney, Ellen Xu, Chelsea Finn, Dorsa Sadigh

https://arxiv.org/abs/2509.25424 https://mastoxiv.page/@arXiv_csLG_bot/115298579764580635

- Recursive Self-Aggregation Unlocks Deep Thinking in Large Language Models

Siddarth Venkatraman, et al.

https://arxiv.org/abs/2509.26626 https://mastoxiv.page/@arXiv_csLG_bot/115298789487177431

- Cautious Weight Decay

Chen, Li, Liang, Su, Xie, Pierse, Liang, Lao, Liu

https://arxiv.org/abs/2510.12402 https://mastoxiv.page/@arXiv_csLG_bot/115377759317818093

- TeamFormer: Shallow Parallel Transformers with Progressive Approximation

Wei Wang, Xiao-Yong Wei, Qing Li

https://arxiv.org/abs/2510.15425 https://mastoxiv.page/@arXiv_csLG_bot/115405933861293858

- Latent-Augmented Discrete Diffusion Models

Dario Shariatian, Alain Durmus, Umut Simsekli, Stefano Peluchetti

https://arxiv.org/abs/2510.18114 https://mastoxiv.page/@arXiv_csLG_bot/115417332500265972

- Predicting Metabolic Dysfunction-Associated Steatotic Liver Disease using Machine Learning Method...

Mary E. An, Paul Griffin, Jonathan G. Stine, Ramakrishna Balakrishnan, Soundar Kumara

https://arxiv.org/abs/2510.22293 https://mastoxiv.page/@arXiv_csLG_bot/115451746201804373

toXiv_bot_toot
