2026-01-18 23:17:10

In case anyone was wondering about the relevance of #LandBack in the current moment, a CrimethInc. article on the Minneapolis resistance states:

"""

The Whipple, a federal building in Fort Snelling on the outskirts of Minneapolis and St. Paul, has long been a regional headquarters for ICE, having previously housed other federal agencies. The complex is located across the street from a National Guard base, down the road from a military base, and next to the preserved fort itself. The fort sits on the sacred site of the convergence of two rivers. It was one of the earliest sites of colonization in the area; at one time, it was a concentration camp holding native Dakota people.

"""

If at any point in the past you felt that maybe Native sovereignty was a niche issue, or so far from being realized that other causes were more important or relevant, things like this are a good reminder that that cause, overturning the colonial order, is the *same* cause as any meaningful change from the fascist status quo. Things like a "return to democracy" aren't necessarily bad, but the rot runs to the root of this nation, and any intervention that doesn't go that deep is going to leave us right back in this situation again later on.

The fact that ICE is detaining Native Americans is not at all a mistake given their white supremacist aims.

Article link: #ICE #LandBack

2025-12-19 13:02:42

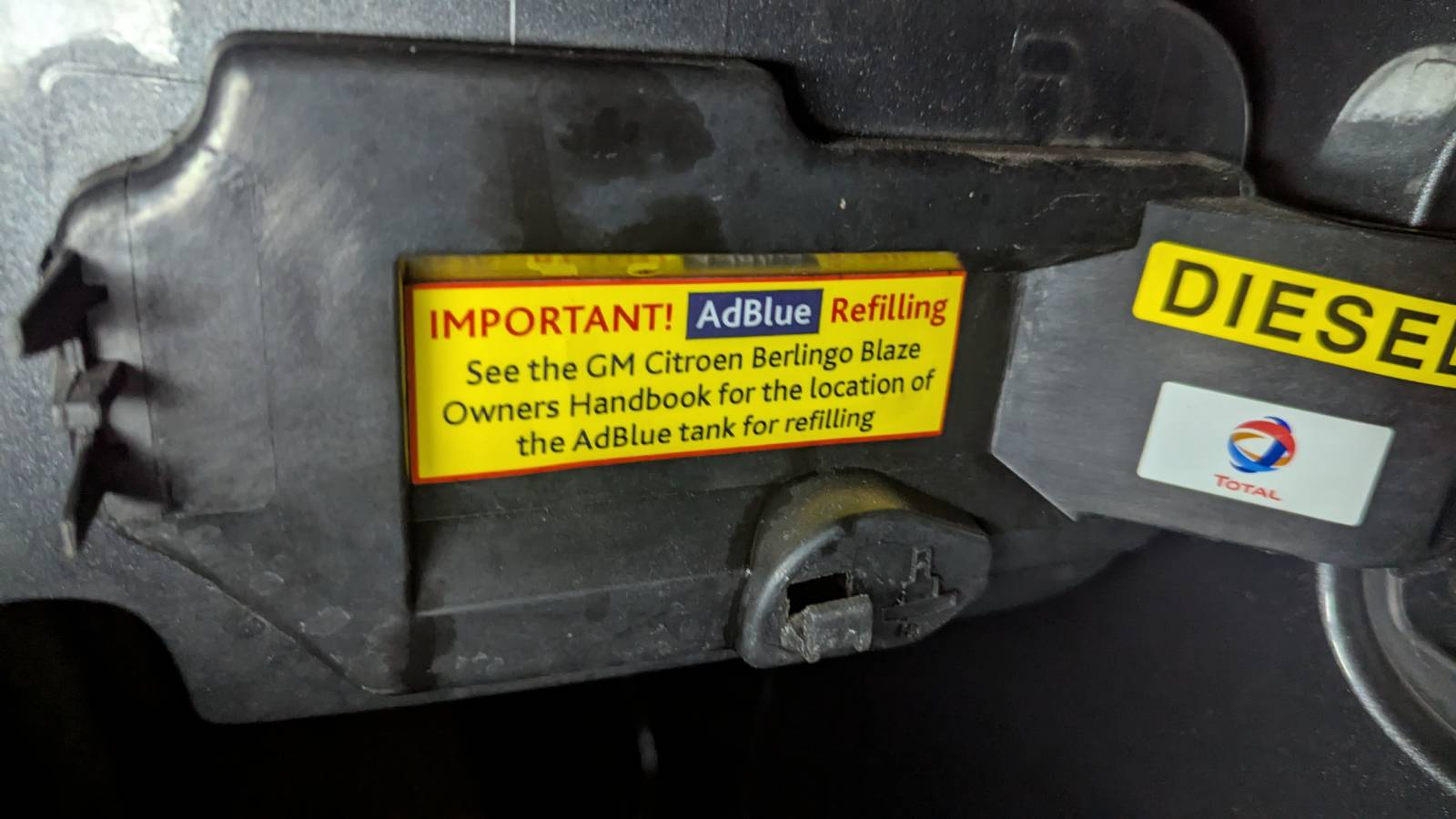

So my car has been complaining I should put some exhaust emissions neutralizing fluid in it. A thing they call AdBlue I believe.

Bought a big 10l drum of the stuff ready to fill up.

But when I look where I expect to find the hole for filling it, I just find a capped-off tube and a warning sticker saying "See the GM Citroën Berlingo Blaze manual" for the AdBlue refilling hole.

Only user manual I have is the one telling me to expect it there.

The car was converted for wheelchair access at some point in its life. I think they are referring to the wheelchair-adaption manual, which the seller did not give me.

🤔

Have been looking around the car as much as I can for a couple of hours this morning, to no avail. Where have they hidden the hole for filling up the AdBlue?

Maybe it's under the engine or something now and you have to put the thing on stilts to find it?

🤷

Asked my mechanic about it and he says to bring it in on Monday. Gonna be a pain if I have to rip up the floorboards or something every year to refill that.

#mechanic #car #diesel

2026-01-19 00:40:02

US CHILDREN 🧒

"Us children" (original Chinese caption: 我們這些孩童) 🧒

📷 Zeiss IKON Super Ikonta 533/16

🎞️ Lucky SHD 400

#filmphotography #Photography #blackandwhite

2026-01-19 14:46:33

Right, that's the record player in place and running! Any suggestions on the right (non-poncy) way to clean records and keep it happy? Some, like this one, have been in jackets for a long time but are relatively clean; but I also have a big box of singles that probably need more careful attention.

Now, the only bit of hi-fi equipment I need to look at is the reel-to-reel player (which there's no longer room in that cabinet for).

2025-12-19 03:20:54

Q&A with Sam Altman on OpenAI's "code red" call, enterprise strategy, product ambitions, IPO plans, ChatGPT's personalization plans, and more (Alex Kantrowitz/Big Technology)

https://www.bigtechnology.com/p/sam-altman-on-openais-plan-to-win…

2025-11-18 16:06:22

I recently researched the etymology of two interesting German words:

- "nonchalant" (informal, relaxed, casual, carefree, easy-going): I found that interesting because it's obviously a negation and I have never read the non-negated form "chalant". It turns out that the non-negated form goes back to Latin "calēre" (to be warm, to be hot, to be alarmed, to be fired up).

- "verschollen" (lost, missing; nothing has been known about the whereabouts of sth. or sb. for a long time): I found it weird because I couldn't make any sense of "schollen". This might be related to "verschallen" (to stop making noise) and might go back to Old High German "skellan" (which is also related to German "Schelle", a small bell). So "verschollen" can be seen as a euphemistic expression, because "to stop making noise" is used to refer to being lost (and maybe dead).

#etymology #linguistics #German

2025-11-19 06:07:23

Part of why #Trump has always been so hard to pin down politically is that he was always representing highly conflicting interests. Now, as that eats him alive, the GOP is fracturing into two main groups: the Pinochet/Franco wing and the Hitler wing.

The Pinochet/Franco wing (let's call them PF) is led by Vance. PF is also a coalition with some competing interests, but basically it's evangelical leaders, Opus Dei (fascist Catholics), tech fascists (Yarvinites), pharma, and the other normal big Republican donors. They support Israel, some because apartheid is extremely profitable and some because they support the genocide of Palestinians in order to bring about the end of the world. They are split between extremely antisemitic evangelicals and Zionists, wanting similar things for completely different reasons. PF wants strong immigration enforcement because it lets them exploit immigrants; they don't want actual ethnic cleansing (just the constant threat). They want H1B visas because they want a precarious tech workforce. They want to end tariffs because they support free trade and don't actually care about things being made here.

The Hitler wing is led by Nick Fuentes. I think they're a more unified group, but they're going to try to pull together a coalition that I don't think can really work. They're against Israel because they believe in some batshit antisemitic conspiracy theory (which they are trying to inject alongside legitimate criticism of Israel). They are focused on the release of the #EpsteinFiles because they believe that it shows that Epstein worked for Mossad. They don't think that the ICE raids are going far enough, and they oppose H1Bs because they are racists. They want a full ethnic cleansing of the US where everyone who isn't "white" is either enslaved for menial labor, deported, or dead. But they're also critical of big business (partially because of conspiracy theories, but also) because they think their best option is to push for a white socialism (red/brown alliance).

Both of them want to sink Trump because they see him as standing in the way of their objectives. Both see #Epstein as an opportunity. Both of them have absolutely terrifying visions of authoritarian dictatorships, but they're different dictatorships with opposing interests. Even within each wing there may be opportunities to fracture them further.

While these fractures decrease the likelihood of either group getting enough people together, each group's vision is clearer and thus more likely to succeed if they can make that happen. Now is absolutely *not* the time to just enjoy the collapse; we need to keep up or accelerate anti-fascist efforts to avoid repeating some of the mistakes of history.

Edit:

I should note that this isn't *totally* original analysis. I'll link a video later when I have time to find it.

Here it is:

#USPol

2025-11-17 16:20:58

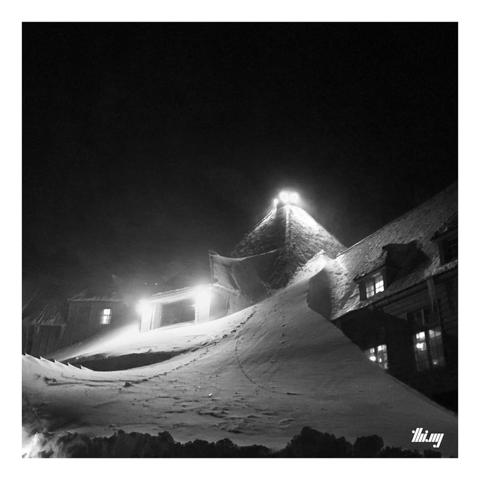

Monday night dinner at The Shining...

(Literally... a snowed-in Timberline Lodge in winter of 2018, the drive up there very much like the scene in the movie, 2 meters of snow on either side of the road and a huge snowdrift reaching up to the top of the roof of the lodge...)

#MonochromeMonday

2025-12-18 17:10:51

Sources describe tensions between OpenAI's research and ChatGPT product groups, leading to the "code red"; OpenAI is set to beat its 2025 revenue goal of $13B (The Information)

https://www.theinformation.com/articles/openais-organizational-p…

2025-11-18 23:01:50

Amazon's Zoox announces that users in San Francisco can now sign up for its Zoox Explorers program to take free rides in its robotaxis in select neighborhoods (Annie Palmer/CNBC)

https://www.cnbc.com/2025/11/18/zoox-begins-offering-robotaxi-rid…