2026-05-09 07:10:29

Beautifully designed scrolly story about the need to work with communities as economies de-carbonise - ABC (Australia) News https://www.abc.net.au/news/2026-05-09/australia-is-ditching-coal-but-theres-a-cost/106616676

2026-03-31 11:12:48

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[2/5]:

- POTSA: A Cross-Lingual Speech Alignment Framework for Speech-to-Text Translation

Li, Cui, Wang, Ge, Huang, Li, Peng, Lu, Tashi, Wang, Dang

https://arxiv.org/abs/2511.09232 https://mastoxiv.page/@arXiv_csCL_bot/115541846907664054

- Beyond Elicitation: Provision-based Prompt Optimization for Knowledge-Intensive Tasks

Yunzhe Xu, Zhuosheng Zhang, Zhe Liu

https://arxiv.org/abs/2511.10465 https://mastoxiv.page/@arXiv_csCL_bot/115547607561282911

- π-Attention: Periodic Sparse Transformers for Efficient Long-Context Modeling

Dong Liu, Yanxuan Yu

https://arxiv.org/abs/2511.10696 https://mastoxiv.page/@arXiv_csCL_bot/115564418836654965

- Based on Data Balancing and Model Improvement for Multi-Label Sentiment Classification Performanc...

Zijin Su, Huanzhu Lyu, Yuren Niu, Yiming Liu

https://arxiv.org/abs/2511.14073 https://mastoxiv.page/@arXiv_csCL_bot/115575715073023141

- HEAD-QA v2: Expanding a Healthcare Benchmark for Reasoning

Alexis Correa-Guillén, Carlos Gómez-Rodríguez, David Vilares

https://arxiv.org/abs/2511.15355 https://mastoxiv.page/@arXiv_csCL_bot/115581410328165116

- Towards Hyper-Efficient RAG Systems in VecDBs: Distributed Parallel Multi-Resolution Vector Search

Dong Liu, Yanxuan Yu

https://arxiv.org/abs/2511.16681 https://mastoxiv.page/@arXiv_csCL_bot/115603508442305146

- Estonian WinoGrande Dataset: Comparative Analysis of LLM Performance on Human and Machine Transla...

Marii Ojastu, Hele-Andra Kuulmets, Aleksei Dorkin, Marika Borovikova, Dage Särg, Kairit Sirts

https://arxiv.org/abs/2511.17290 https://mastoxiv.page/@arXiv_csCL_bot/115604083224487885

- A Systematic Study of In-the-Wild Model Merging for Large Language Models

Oğuz Kağan Hitit, Leander Girrbach, Zeynep Akata

https://arxiv.org/abs/2511.21437 https://mastoxiv.page/@arXiv_csCL_bot/115621178703846052

- CREST: Universal Safety Guardrails Through Cluster-Guided Cross-Lingual Transfer

Lavish Bansal, Naman Mishra

https://arxiv.org/abs/2512.02711 https://mastoxiv.page/@arXiv_csCL_bot/115655090475535157

- Multilingual Medical Reasoning for Question Answering with Large Language Models

Pietro Ferrazzi, Aitor Soroa, Rodrigo Agerri

https://arxiv.org/abs/2512.05658 https://mastoxiv.page/@arXiv_csCL_bot/115683267711014189

- OnCoCo 1.0: A Public Dataset for Fine-Grained Message Classification in Online Counseling Convers...

Albrecht, Lehmann, Poltermann, Rudolph, Steigerwald, Stieler

https://arxiv.org/abs/2512.09804 https://mastoxiv.page/@arXiv_csCL_bot/115700409397020978

- Does Tone Change the Answer? Evaluating Prompt Politeness Effects on Modern LLMs: GPT, Gemini, an...

Hanyu Cai, Binqi Shen, Lier Jin, Lan Hu, Xiaojing Fan

https://arxiv.org/abs/2512.12812 https://mastoxiv.page/@arXiv_csCL_bot/115729149622659403

- Beg to Differ: Understanding Reasoning-Answer Misalignment Across Languages

Ovalle, Ross, Ruder, Williams, Ullrich, Ibrahim, Sagun

https://arxiv.org/abs/2512.22712 https://mastoxiv.page/@arXiv_csCL_bot/115808161882146194

- Activation Steering for Masked Diffusion Language Models

Adi Shnaidman, Erin Feiglin, Osher Yaari, Efrat Mentel, Amit Levi, Raz Lapid

https://arxiv.org/abs/2512.24143 https://mastoxiv.page/@arXiv_csCL_bot/115819533211103315

- JMedEthicBench: A Multi-Turn Conversational Benchmark for Evaluating Medical Safety in Japanese L...

Liu, Li, Niu, Zhang, Xun, Hou, Wang, Iwasawa, Matsuo, Hatakeyama-Sato

https://arxiv.org/abs/2601.01627 https://mastoxiv.page/@arXiv_csCL_bot/115847901607405421

- FACTUM: Mechanistic Detection of Citation Hallucination in Long-Form RAG

Dassen, Kotula, Murray, Yates, Lawrie, Kayi, Mayfield, Duh

https://arxiv.org/abs/2601.05866 https://mastoxiv.page/@arXiv_csCL_bot/115881545684182376

- †DAGGER: Distractor-Aware Graph Generation for Executable Reasoning in Math Problems

Zabir Al Nazi, Shubhashis Roy Dipta, Sudipta Kar

https://arxiv.org/abs/2601.06853 https://mastoxiv.page/@arXiv_csCL_bot/115887753245730019

- Symphonym: Universal Phonetic Embeddings for Cross-Script Name Matching

Stephen Gadd

https://arxiv.org/abs/2601.06932 https://mastoxiv.page/@arXiv_csCL_bot/115887767008671765

- LLMs versus the Halting Problem: Revisiting Program Termination Prediction

Sultan, Armengol-Estape, Kesseli, Vanegue, Shahaf, Adi, O'Hearn

https://arxiv.org/abs/2601.18987 https://mastoxiv.page/@arXiv_csCL_bot/115972010510378715

- MuVaC: A Variational Causal Framework for Multimodal Sarcasm Understanding in Dialogues

Diandian Guo, Fangfang Yuan, Cong Cao, Xixun Lin, Chuan Zhou, Hao Peng, Yanan Cao, Yanbing Liu

https://arxiv.org/abs/2601.20451 https://mastoxiv.page/@arXiv_csCL_bot/115977891530875024

toXiv_bot_toot

2026-05-08 06:50:23

Mapping Post-Truth across Disciplines

https://ift.tt/PZBVmS8

updated: Thursday, May 7, 2026 - 1:33pm
full name / name of organization: University of…

via Input 4 RELCFP https://

2026-04-06 15:04:31

A man used AI to call 3,000 Irish bartenders to track the cost of Guinness. Now pubs are lowering their prices to compete

https://fortune.com/2026/03/30/guinness-beer-prices-ireland-anthropic-claude-ai/

Result:

2026-03-31 19:06:03

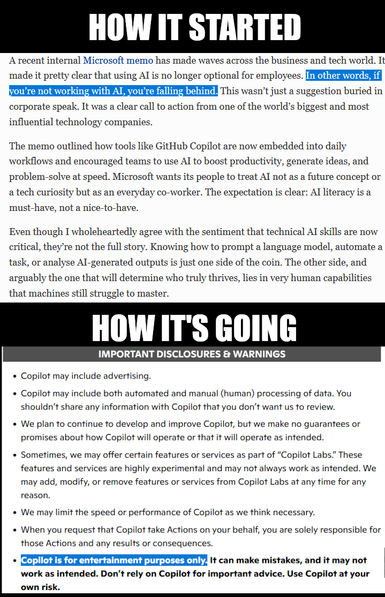

I find this . . . entertaining

Ref:

Forbes article on AI coding at Microsoft

https://www.forbes.com/sites/bernardmarr/2025/07/08/microsoft-makes-ai-mandatory-for-employees-what-it-means-for-your-career/…

2026-04-03 08:04:47

Shall we guess that Trump is lying there chewing the carpet right now, while he has set Hegseth to preparing an invasion of Norway? #trump #nobelPris #nobelPrize

2026-04-29 04:33:00

Well this is some nice news.

https://www.theguardian.com/film/2026/apr/29/sam-neill-cancer-free-clinical-trial

2026-04-24 17:00:23

"A 58-day protest campaign just convinced Etsy to ban fur"

#Etsy #Animals

https://www.

2026-02-25 16:08:08

Replaced article(s) found for cs.LG. https://arxiv.org/list/cs.LG/new

[4/6]:

- Neural Proposals, Symbolic Guarantees: Neuro-Symbolic Graph Generation with Hard Constraints

Chuqin Geng, Li Zhang, Mark Zhang, Haolin Ye, Ziyu Zhao, Xujie Si

https://arxiv.org/abs/2602.16954 https://mastoxiv.page/@arXiv_csLG_bot/116102434757760085

- Multi-Probe Zero Collision Hash (MPZCH): Mitigating Embedding Collisions and Enhancing Model Fres...

Ziliang Zhao, et al.

https://arxiv.org/abs/2602.17050 https://mastoxiv.page/@arXiv_csLG_bot/116102517335590034

- MASPO: Unifying Gradient Utilization, Probability Mass, and Signal Reliability for Robust and Sam...

Fu, Lin, Fang, Zheng, Hu, Shao, Qin, Pan, Zeng, Cai

https://arxiv.org/abs/2602.17550 https://mastoxiv.page/@arXiv_csLG_bot/116102581561441103

- A Theoretical Framework for Modular Learning of Robust Generative Models

Corinna Cortes, Mehryar Mohri, Yutao Zhong

https://arxiv.org/abs/2602.17554 https://mastoxiv.page/@arXiv_csLG_bot/116102582216715527

- Multi-Round Human-AI Collaboration with User-Specified Requirements

Sima Noorani, Shayan Kiyani, Hamed Hassani, George Pappas

https://arxiv.org/abs/2602.17646 https://mastoxiv.page/@arXiv_csLG_bot/116102592047544971

- NEXUS: A compact neural architecture for high-resolution spatiotemporal air quality forecasting i...

Rampunit Kumar, Aditya Maheshwari

https://arxiv.org/abs/2602.19654 https://mastoxiv.page/@arXiv_csLG_bot/116125610403473755

- Augmenting Lateral Thinking in Language Models with Humor and Riddle Data for the BRAINTEASER Task

Mina Ghashami, Soumya Smruti Mishra

https://arxiv.org/abs/2405.10385 https://mastoxiv.page/@arXiv_csCL_bot/112472190479013167

- Watermarking Language Models with Error Correcting Codes

Patrick Chao, Yan Sun, Edgar Dobriban, Hamed Hassani

https://arxiv.org/abs/2406.10281 https://mastoxiv.page/@arXiv_csCR_bot/112636307340218522

- Learning to Control Unknown Strongly Monotone Games

Siddharth Chandak, Ilai Bistritz, Nicholas Bambos

https://arxiv.org/abs/2407.00575 https://mastoxiv.page/@arXiv_csMA_bot/112715733875586837

- Classification and reconstruction for single-pixel imaging with classical and quantum neural netw...

Sofya Manko, Dmitry Frolovtsev

https://arxiv.org/abs/2407.12506 https://mastoxiv.page/@arXiv_quantph_bot/112806295477530195

- Statistical Inference for Temporal Difference Learning with Linear Function Approximation

Weichen Wu, Gen Li, Yuting Wei, Alessandro Rinaldo

https://arxiv.org/abs/2410.16106 https://mastoxiv.page/@arXiv_statML_bot/113350611306532443

- Big data approach to Kazhdan-Lusztig polynomials

Abel Lacabanne, Daniel Tubbenhauer, Pedro Vaz

https://arxiv.org/abs/2412.01283 https://mastoxiv.page/@arXiv_mathRT_bot/113587812663608119

- MoEMba: A Mamba-based Mixture of Experts for High-Density EMG-based Hand Gesture Recognition

Mehran Shabanpour, Kasra Rad, Sadaf Khademi, Arash Mohammadi

https://arxiv.org/abs/2502.17457 https://mastoxiv.page/@arXiv_eessSP_bot/114069047434302054

- Tightening Optimality gap with confidence through conformal prediction

Miao Li, Michael Klamkin, Russell Bent, Pascal Van Hentenryck

https://arxiv.org/abs/2503.04071 https://mastoxiv.page/@arXiv_statML_bot/114120074927291283

- SEED: Towards More Accurate Semantic Evaluation for Visual Brain Decoding

Juhyeon Park, Peter Yongho Kim, Jiook Cha, Shinjae Yoo, Taesup Moon

https://arxiv.org/abs/2503.06437 https://mastoxiv.page/@arXiv_csCV_bot/114142690988862508

- How much does context affect the accuracy of AI health advice?

Prashant Garg, Thiemo Fetzer

https://arxiv.org/abs/2504.18310 https://mastoxiv.page/@arXiv_econGN_bot/114414380916957986

- Reproducing and Improving CheXNet: Deep Learning for Chest X-ray Disease Classification

Daniel J. Strick, Carlos Garcia, Anthony Huang, Thomas Gardos

https://arxiv.org/abs/2505.06646 https://mastoxiv.page/@arXiv_eessIV_bot/114499319986528625

- Sharp Gaussian approximations for Decentralized Federated Learning

Soham Bonnerjee, Sayar Karmakar, Wei Biao Wu

https://arxiv.org/abs/2505.08125 https://mastoxiv.page/@arXiv_statML_bot/114505047719395949

- HoloLLM: Multisensory Foundation Model for Language-Grounded Human Sensing and Reasoning

Chuhao Zhou, Jianfei Yang

https://arxiv.org/abs/2505.17645 https://mastoxiv.page/@arXiv_csCV_bot/114572928659057348

- A Copula Based Supervised Filter for Feature Selection in Diabetes Risk Prediction Using Machine ...

Agnideep Aich, Md Monzur Murshed, Sameera Hewage, Amanda Mayeaux

https://arxiv.org/abs/2505.22554 https://mastoxiv.page/@arXiv_statML_bot/114589983451462525

- Synthesis of discrete-continuous quantum circuits with multimodal diffusion models

Florian Fürrutter, Zohim Chandani, Ikko Hamamura, Hans J. Briegel, Gorka Muñoz-Gil

https://arxiv.org/abs/2506.01666 https://mastoxiv.page/@arXiv_quantph_bot/114618420761346125


2026-03-31 11:13:08

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[5/5]:

- AppellateGen: A Benchmark for Appellate Legal Judgment Generation

Yang, Wang, Fan, Hu, Wang, Liu, Zeng, Fu, Gong, Zhang, Li, Zheng, Xu

https://arxiv.org/abs/2601.01331 https://mastoxiv.page/@arXiv_csCY_bot/115847038572575387

- Vision-Language Agents for Interactive Forest Change Analysis

James Brock, Ce Zhang, Nantheera Anantrasirichai

https://arxiv.org/abs/2601.04497 https://mastoxiv.page/@arXiv_csCV_bot/115864542639529766

- FigEx2: Visual-Conditioned Panel Detection and Captioning for Scientific Compound Figures

Jifeng Song, Arun Das, Pan Wang, Hui Ji, Kun Zhao, Yufei Huang

https://arxiv.org/abs/2601.08026 https://mastoxiv.page/@arXiv_csCV_bot/115892719657942341

- Sparse-RL: Breaking the Memory Wall in LLM Reinforcement Learning via Stable Sparse Rollouts

Luo, Zhang, Hu, Zhang, Wang, Su, Sun, Liang, Zhang

https://arxiv.org/abs/2601.10079 https://mastoxiv.page/@arXiv_csLG_bot/115904206341755873

- Compounding Disadvantage: Auditing Intersectional Bias in LLM-Generated Explanations Across India...

Amogh Gupta, Niharika Patil, Sourojit Ghosh, SnehalKumar (Neil) S Gaikwad

https://arxiv.org/abs/2601.14506 https://mastoxiv.page/@arXiv_csCY_bot/115937624654783353

- Measuring Complexity at the Requirements Stage: Spectral Metrics as Development Effort Predictors

Vierlboeck, Pugliese, Nilchian, Grogan, Babu

https://arxiv.org/abs/2602.07182 https://mastoxiv.page/@arXiv_csSE_bot/116045826365214235

- CoPE-VideoLM: Leveraging Codec Primitives For Efficient Video Language Modeling

Sarkar, Pautrat, Miksik, Pollefeys, Armeni, Rad, Dusmanu

https://arxiv.org/abs/2602.13191 https://mastoxiv.page/@arXiv_csCV_bot/116079824094529198

- MoD-DPO: Towards Mitigating Cross-modal Hallucinations in Omni LLMs using Modality Decoupled Pref...

Ashutosh Chaubey, Jiacheng Pang, Mohammad Soleymani

https://arxiv.org/abs/2603.03192 https://mastoxiv.page/@arXiv_csCV_bot/116170511143131333

- Image Generation Models: A Technical History

Rouzbeh Shirvani

https://arxiv.org/abs/2603.07455 https://mastoxiv.page/@arXiv_csCV_bot/116204960613280699

- Rethinking Attention Output Projection: Structured Hadamard Transforms for Efficient Transformers

Shubham Aggarwal, Lokendra Kumar

https://arxiv.org/abs/2603.08343 https://mastoxiv.page/@arXiv_csLG_bot/116205064359384079

- FGTR: Fine-Grained Multi-Table Retrieval via Hierarchical LLM Reasoning

Chaojie Sun, Bin Cao, Tiantian Li, Chenyu Hou, Ruizhe Li, Jing Fan

https://arxiv.org/abs/2603.12702 https://mastoxiv.page/@arXiv_csIR_bot/116237827836520478

- CausalEvolve: Towards Open-Ended Discovery with Causal Scratchpad

Yongqiang Chen, Chenxi Liu, Zhenhao Chen, Tongliang Liu, Bo Han, Kun Zhang

https://arxiv.org/abs/2603.14575 https://mastoxiv.page/@arXiv_csLG_bot/116243782215605653

- Silicon Bureaucracy and AI Test-Oriented Education: Contamination Sensitivity and Score Confidenc...

Yiliang Song, Hongjun An, Jiangan Chen, Xuanchen Yan, Huan Song, Jiawei Shao, Xuelong Li

https://arxiv.org/abs/2603.21636 https://mastoxiv.page/@arXiv_csAI_bot/116283590092117172

- Problems with Chinchilla Approach 2: Systematic Biases in IsoFLOP Parabola Fits

Eric Czech, Zhiwei Xu, Yael Elmatad, Yixin Wang, William Held

https://arxiv.org/abs/2603.22339 https://mastoxiv.page/@arXiv_csLG_bot/116288991182888131

- X-OPD: Cross-Modal On-Policy Distillation for Capability Alignment in Speech LLMs

Di Cao, Dongjie Fu, Hai Yu, Siqi Zheng, Xu Tan, Tao Jin

https://arxiv.org/abs/2603.24596 https://mastoxiv.page/@arXiv_eessAS_bot/116300009464853696
