2025-09-26 08:02:21

Periodicity of point processes in abelian groups without lattices

Nachi Avraham-Re'em, Michael Björklund, Rickard Cullman

https://arxiv.org/abs/2509.20847 https://

2025-10-24 12:01:22

Moving on to an excellent keynote by Ana Persic from @… , reiterating that we are not doing #OpenScience for the sake of open science - we have a global agenda aiming to achieve the Sustainable Development Goals, which needs Open Science to succeed. Science is also a human right, and to share science is thus a moral obligation. We are not there yet - there is a rise in mistrust of science, misinformation, and actions from non-state actors such as the private sector.

2025-11-25 10:08:13

Roadmap: Emerging Platforms and Applications of Optical Frequency Combs and Dissipative Solitons

Dmitry Skryabin, Arne Kordts, Richard Zeltner, Ronald Holzwarth, Victor Torres-Company, Tobias Herr, Fuchuan Lei, Qi-Fan Yang, Camille-Sophie Brès, John F. Donegan, Hai-Zhong Weng, Delphine Marris-Morini, Adel Bousseksou, Markku Vainio, Thomas Bunel, Matteo Conforti, Arnaud Mussot, Erwan Lucas, Julien Fatome, Yuk Shan Cheng, Derryck T. Reid, Alessia Pasquazi, Marco Peccianti, M. Giudici, M. Marconi, A. Bartolo, N. Vigne, B. Chomet, A. Garnache, G. Beaudoin, I. Sagnes, Richard Burguete, Sarah Hammer, Jonathan Silver

https://arxiv.org/abs/2511.18231 https://arxiv.org/pdf/2511.18231 https://arxiv.org/html/2511.18231

arXiv:2511.18231v1 Announce Type: new

Abstract: The discovery of optical frequency combs (OFCs) has revolutionised science and technology by bridging electronics and photonics, driving major advances in precision measurements, atomic clocks, spectroscopy, telecommunications, and astronomy. However, current OFC systems still require further development to enable broader adoption in fields such as communication, aerospace, defence, and healthcare. There is a growing need for compact, portable OFCs that deliver high output power, robust self-referencing, and application-specific spectral coverage. On the conceptual side, progress toward such systems is hindered by an incomplete understanding of the fundamental principles governing OFC generation in emerging devices and materials, as well as evolving insights into the interplay between soliton and mode-locking effects. This roadmap presents the vision of a diverse group of academic and industry researchers and educators from Europe, along with their collaborators, on the current status and future directions of OFC science. It highlights a multidisciplinary approach that integrates novel physics, engineering innovation, and advanced researcher training. Topics include advances in soliton science as it relates to OFCs, the extension of OFC spectra into the visible and mid-infrared ranges, metrology applications and noise performance of integrated OFC sources, new fibre-based OFC modules, OFC lasers and OFC applications in astronomy.


2025-10-02 07:17:11

2025-12-14 14:58:37

Yesterday was my last day of teaching Communication at the School of Engineering this term. So it's time to say goodbye to the little fellow living in TH547 and attending all lectures! He was there even on days when none of the 33 students showed up.

#AcademicChatter #TeachingElephant

2025-10-01 13:12:53

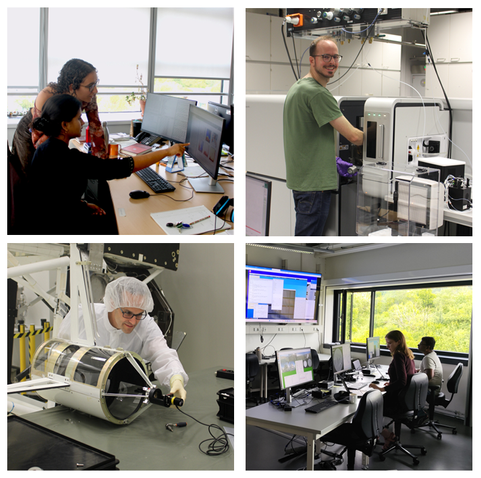

The International Max Planck Research School for Solar System Science becomes a permanent institution in Göttingen and Braunschweig

The Solar System School, the joint graduate school of the Max Planck Institute for Solar System Research, the University of Göttingen, and the Technical University of Braunschweig, is now a permanent fixture in doctoral training in Göttingen and Braunschweig. The Max Planck Society will continue the International Max Planck Research School for Solar Syste…

2025-11-17 10:15:14

I feel as though I should illustrate the difference that this one single constraint can make with two examples.

The rules of Simon Says are maximally authoritarian. You must perform any action ordered, with the only restriction that the authority must say "Simon says" first. Were you forced to stay in this system, it would be the most despotic autocracy possible. But it's not. It's a silly game because you can leave at any time.

Let's flip this and imagine a room. During a specific period of time you will have absolute control over everything in this room. In this room you have total freedom. This is not even the limited freedom, the coordinated freedom, the compromising freedom of civil society. You could, without consequence, perform any action you wish in this room. You could say anything, destroy or steal any object, order any individual to perform any action, kill any person in the room with you and take anything they own. This is the sovereign freedom, the absolute freedom, of dictators and kings. The only restriction is that you are not allowed to leave the room while you have this freedom. In fact, you really only have this level of freedom because the room is actually empty apart from you. I am, of course, talking about a form of torture still common in the US: solitary confinement.

2025-10-13 08:04:20

Robust autobidding for noisy conversion prediction models

Andrey Pudovikov, Alexandra Khirianova, Ekaterina Solodneva, Gleb Molodtsov, Aleksandr Katrutsa, Yuriy Dorn, Egor Samosvat

https://arxiv.org/abs/2510.08788

2025-10-13 10:55:45

"Getting Hired to Work on FOSS - The Do-s, Don't-s and Pitfalls for Everyone Involved" is what my friend Till Adam talked about at #Akademy2025. He also has a short section on why Free Software is worth more compared to proprietary software, so you should take higher rates and principals should be willing to pay more. And he draws from experience of contracts that
