2026-01-22 04:59:09

Dripping to Destruction - Exploring Salt-driven Viscous Surface Convergence in Europa’s Icy Shell: #Europa

2026-04-21 10:51:37

Prisco's 'What teams should do' mock draft: Giants take Dexter Lawrence replacement, Cowboys add to defense

https://www.cbssports.com/nfl/draft/news/priscos-what-teams…

2026-02-21 20:06:20

President Donald J. Trump Approves Emergency Declaration for the District of Columbia (FEMA.gov)

https://www.fema.gov/press-release/20260221/president-donald-j-trump-approves-emergency-declaration-district-columbia

http://www.memeorandum.com/260221/p40#a260221p40

2026-03-20 21:07:35

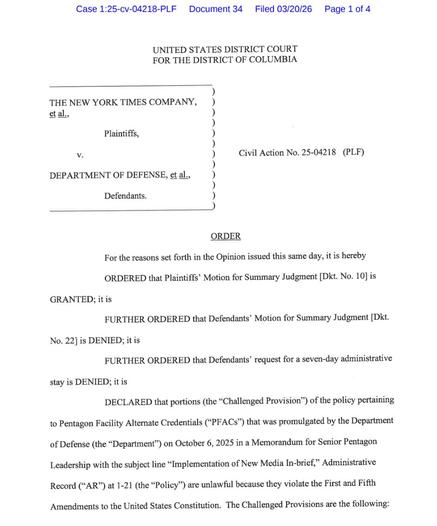

A district court judge rules in favor of the NYT in its suit challenging rules that limited press access to the Pentagon, citing the First and Fifth amendments (Scott Nover/@scottnover)

https://x.com/scottnover/status/2035098180489023807

2026-03-21 05:34:45

"Federal sex-crimes prosecutor Marie Villafaña repeatedly urged her boss, Alexander Acosta, then U.S. Attorney for the Southern District of Florida, to pursue a 60-count indictment against Jeffrey Epstein in 2007, but Acosta dismissed her requests."

Ex-Trump official once shut down 60-count federal indictment against Epstein: report - Raw Story

https://www.rawstory.com/epstein-2676467249/

2026-03-20 22:55:57

Pentagon press policy ruled unconstitutional in case brought by N.Y. Times

A federal judge in Washington, D.C., struck down the Defense Department’s controversial press policy as unconstitutional Friday, ruling in favor of the New York Times and one of its reporters, Julian E. Barnes.

Senior U.S. District Judge Paul L. Friedman said in his ruling that the ongoing war with Iran made it “more important than ever that the public have access to information from a variety…

2026-04-22 01:20:44

Virginia voters approve redistricting plan that could boost Democrats' seats in Congress (David A. Lieb/Associated Press)

https://apnews.com/article/virginia-redistricting-election-congress-trump-78e0e68100119011b1b439634f6b6fa1

http://www.memeorandum.com/260421/p138#a260421p138

2026-02-20 18:01:46

How Alan Dershowitz's petition to seek review of his CNN libel suit threatens NYT v. Sullivan, which Dershowitz argues should only apply to government officials (Adam Liptak/New York Times)

https://www.nytimes.com/2026/02/19/us/politics/thedocket-dershow…

2026-04-20 20:29:40

Texas Medical Board Disciplines Doctors in Porsha Ngumezi and Nevaeh Crain Cases — ProPublica

https://www.propublica.org/article/tmb-disciplines-doctors-ngumezi-crain-cases

2026-04-21 14:25:42

Human Rights Campaign targets battleground districts during broader reckoning over LGBTQ rights (Matt Brown/Associated Press)

https://apnews.com/article/human-rights-campaign-midterm-elections-lgbtq-55147b73698ef63d13068abe1a109747

http://www.memeorandum.com/260421/p43#a260421p43