2026-01-25 11:00:00

[BLOG] BitBastelei #678 – Linux on an old embedded system https://www.adlerweb.info/blog/2026/01/25/bitbastelei-678-linux-auf-altem-embedded-system/

2026-02-25 00:34:41

Bills GM says WR Coleman will have 'every chance' https://www.espn.com/nfl/story/_/id/48026260/bills-gm-says-embattled-wr-coleman-every-chance

2026-03-25 20:55:59

America's Bookend Wars for Oil (emptywheel)

https://emptywheel.net/2026/03/25/americas-bookend-wars-for-oil/

http://www.memeorandum.com/260325/p103#a260325p103

2026-03-24 19:51:47

2026-02-26 10:17:16

Organisations are racing to embed AI into everyday life. But people don’t trust institutions and private enterprise to do this in a way that benefits them, and that’s a problem.

https://www.computing.co.uk/feature…

2026-02-24 16:39:49

I can’t believe I’m having to kludge a CSS filter onto their iframe to invert the embedded checkout form of a company that carries out *trillions of dollars* of transactions every year because they don’t have dark mode support.

My feeble mind clearly cannot understand such high capitalist craft.

2026-02-24 18:26:49

2026-02-25 04:16:05

Koah, which embeds sponsored ads directly into AI chatbots and aims to build an AdSense for AI, raised a $20.5M Series A led by Theory Ventures (Trishla Ostwal/Adweek)

https://www.adweek.com/media/koah-adsense-for-chatgpt-series-a/

2026-03-24 23:37:02

Direct spectroscopic confirmation of the young embedded #ProtoPlanet WISPIT 2c: https://arxiv.org/abs/2603.22085 -> A Solar System in the making? Two planets spotted forming in disc around young star: https://www.eso.org/public/news/eso2604/

2026-01-25 14:30:07

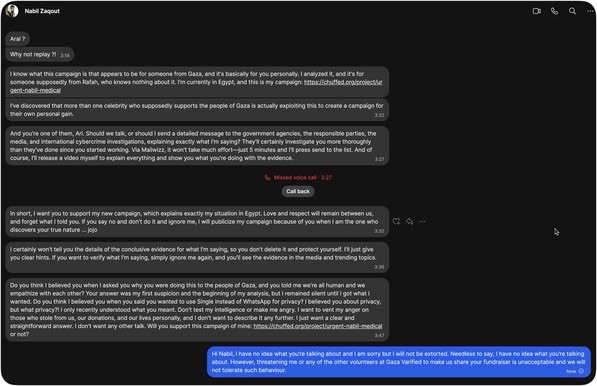

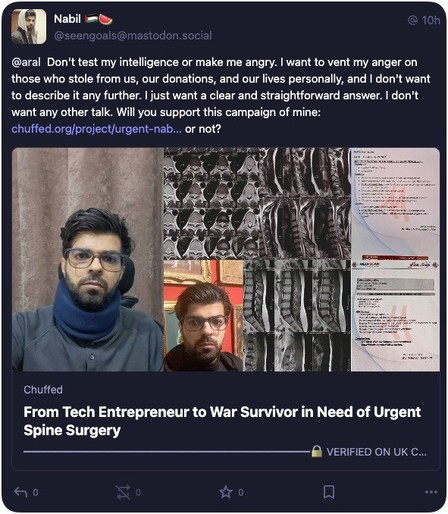

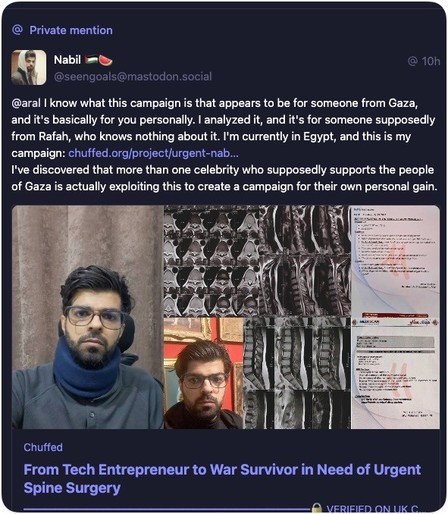

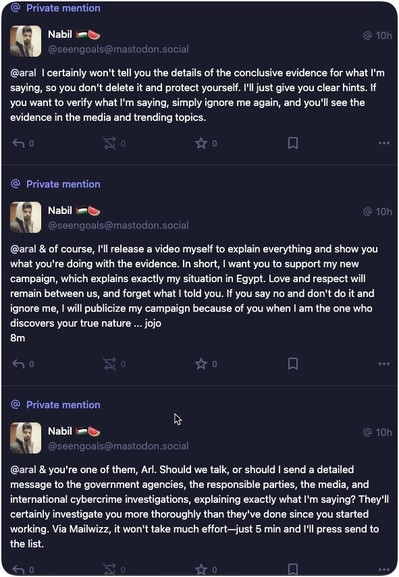

Just got these messages from @seengoals@mastodon.social, one of the members of Gaza Verified, basically accusing me of running a fundraiser for Gaza and keeping the proceeds (I have no fundraiser on any fundraising site anywhere) and extorting me into sharing his fundraiser, or else he’ll apparently go public with it.

So here’s what’s going to happen instead: Nabil has been removed from Gaza Verified (