2026-01-29 02:01:38

Internal docs: Paramount is planning to add a heavy dose of short-form video to Paramount+, initially from existing content, and host user-generated content (James Faris/Business Insider)

https://www.businessinsider.com/paramount-short-fo…

2026-01-29 09:10:28

Rule of thumb: End-to-end encryption is meaningless unless you know and control exactly who the ends are. (Let’s call this the Waltz principle.)

Same goes unless the context your encrypted messenger is running in is secure. Signal can encrypt your messages all day long but you’re still screwed if the custom keyboard app you installed on your phone is sending them off to someone else before they even reach Signal.

2026-01-29 01:26:24

The Raiders’ Klint Kubiak Window is real but so is the margin for error https://raiderramble.com/2026/01/28/the-raiders-klint-kubiak-window-is-real-but-so-is-the-margin-for-error/

2026-02-28 01:23:39

2026-02-28 22:37:01

2026-03-27 07:18:07

The West Bank is a kind of shooting range, but with live targets. Former prime minister Olmert is calling on the ICC to prosecute the perpetrators because Israel is perfectly fine with all of it.

https://www.trouw.nl/buitenland/voor-1100-

2026-04-28 18:48:38

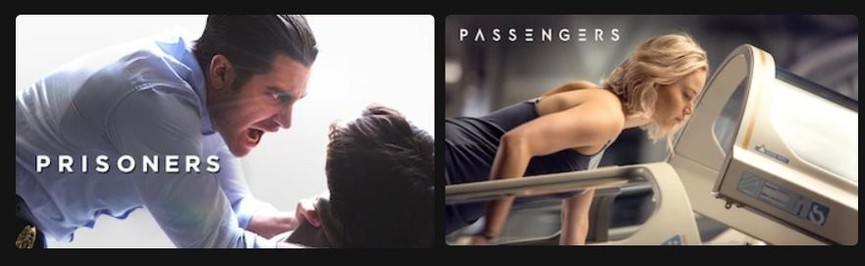

This is the weirdest combination of thumbnails I have ever seen on Netflix 😁

#netflix

2026-03-27 17:07:43

A homeowner in Maryland allegedly waited until immigrant workers had arrived to start a project on her house before calling U.S. Immigration and Customs Enforcement (ICE) on them.

The moment in Cambridge was captured on video and shared on social media by a co-worker identified as Bryan Polanco.

"Seeing it is not the same as experiencing it,"
Polanco could be heard saying in Spanish in the video reviewed by Newsweek.

"I’ve seen many videos, and sadly t…