2026-01-25 18:51:52

#dogcontent meets #librarycontent: "Aktive Lernpause mit Pfötchen" ("Active study break with paws") at SLUB Dresden! 📚🐶🐾📚

2026-01-16 09:09:00

Rhineland-Palatinate: learning apps such as Anton and bettermarks to help students catch up

In Rhineland-Palatinate, learning apps such as bettermarks are meant to turn problem subjects into favorite subjects. The state licenses have been extended, and teachers are required to complete training.

2025-12-25 13:56:53

First progress update on my start learning Linux:

> Installed Linux Mint Cinnamon -> not fast, but it runs

> Explored the basic settings and file management

> Set up Thunderbird (with a new IMAP email address)

> Explored LibreOffice and installed Base separately

This is fun!

#Linux

2026-01-25 18:38:59

🇺🇦 #NowPlaying on KEXP's #Roadhouse

The Sadies:

🎵 Walking Boss

#TheSadies

https://thesadies.bandcamp.com/track/walking-boss

https://open.spotify.com/track/2nxBjIW6lrEMvcuQHAqPVH

2025-11-24 14:56:12

The closing event of our specialist group's project will take place on 26 June 2026, and our planning has begun. There will be talks by and with our #Fachgruppe, insights into how it made the learning platform @… more accessible, and just maybe e…

2025-11-01 18:42:02

from my link log —

Search and replace tricks with ripgrep.

https://learnbyexample.github.io/substitution-with-ripgrep/

saved 2020-09-17 ht…

2026-01-17 02:09:06

🇺🇦 #NowPlaying on KEXP's #DriveTime

Lauren Mayberry:

🎵 Something in the Air

#LaurenMayberry

https://open.spotify.com/track/0Y1hlDyHfsQXBrPWNLCveD

2025-12-22 10:32:50

Spatially-informed transformers: Injecting geostatistical covariance biases into self-attention for spatio-temporal forecasting

Yuri Calleo

https://arxiv.org/abs/2512.17696 https://arxiv.org/pdf/2512.17696 https://arxiv.org/html/2512.17696

arXiv:2512.17696v1 Announce Type: new

Abstract: The modeling of high-dimensional spatio-temporal processes presents a fundamental dichotomy between the probabilistic rigor of classical geostatistics and the flexible, high-capacity representations of deep learning. While Gaussian processes offer theoretical consistency and exact uncertainty quantification, their prohibitive computational scaling renders them impractical for massive sensor networks. Conversely, modern transformer architectures excel at sequence modeling but inherently lack a geometric inductive bias, treating spatial sensors as permutation-invariant tokens without a native understanding of distance. In this work, we propose a spatially-informed transformer, a hybrid architecture that injects a geostatistical inductive bias directly into the self-attention mechanism via a learnable covariance kernel. By formally decomposing the attention structure into a stationary physical prior and a non-stationary data-driven residual, we impose a soft topological constraint that favors spatially proximal interactions while retaining the capacity to model complex dynamics. We demonstrate the phenomenon of "Deep Variography", where the network successfully recovers the true spatial decay parameters of the underlying process end-to-end via backpropagation. Extensive experiments on synthetic Gaussian random fields and real-world traffic benchmarks confirm that our method outperforms state-of-the-art graph neural networks. Furthermore, rigorous statistical validation confirms that the proposed method delivers not only superior predictive accuracy but also well-calibrated probabilistic forecasts, effectively bridging the gap between physics-aware modeling and data-driven learning.
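
A minimal Python sketch of the general idea, not the author's implementation: it assumes the geostatistical prior is a stationary exponential covariance C(h) = sill * exp(-h / range) that is added to the attention logits as log C(h), so spatially close sensors receive more weight, while the scaled dot product q.k / sqrt(d) stands in for the data-driven term. The kernel choice, the log-bias form, and all function names (`log_exponential_covariance`, `spatial_attention_weights`, `attend`) are hypothetical, and the range is a fixed argument here rather than a learned parameter.

```python
import math
from typing import List, Sequence, Tuple

Matrix = List[List[float]]


def log_exponential_covariance(dist: float, sill: float, range_: float) -> float:
    """log C(h) for the assumed exponential kernel C(h) = sill * exp(-h / range_).

    Computed directly in log space so large distances never underflow to log(0).
    """
    return math.log(sill) - dist / range_


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def spatial_attention_weights(
    queries: Matrix,
    keys: Matrix,
    coords: Sequence[Tuple[float, float]],
    sill: float = 1.0,
    range_: float = 1.0,
) -> Matrix:
    """Hypothetical sketch: softmax(q.k / sqrt(d) + log C(dist)) for each query sensor.

    The dot-product term is the content (data-driven) part; the log-covariance
    term is the stationary spatial prior based on sensor distance.
    """
    d_k = len(queries[0])
    weights = []
    for i, q in enumerate(queries):
        logits = []
        for j, k in enumerate(keys):
            content = sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k)
            dist = math.dist(coords[i], coords[j])
            logits.append(content + log_exponential_covariance(dist, sill, range_))
        weights.append(softmax(logits))
    return weights


def attend(weights: Matrix, values: Matrix) -> Matrix:
    """Mix the value vectors with the attention weights (one output row per query)."""
    dim = len(values[0])
    return [
        [sum(w * v[c] for w, v in zip(row, values)) for c in range(dim)]
        for row in weights
    ]


if __name__ == "__main__":
    # Three sensors on a line; zero query/key vectors isolate the spatial prior.
    coords = [(0.0, 0.0), (1.0, 0.0), (5.0, 0.0)]
    zeros = [[0.0, 0.0]] * 3
    w = spatial_attention_weights(zeros, zeros, coords, range_=1.0)
    print([round(x, 3) for x in w[0]])  # nearer sensors get larger weights
    print(attend(w, [[1.0], [2.0], [3.0]])[0])
```

Because softmax ignores constant shifts, the sill cancels out in this sketch and only the range changes the weights; a trainable variant would make `range_` a parameter of an autodiff framework so it can be fitted by backpropagation.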

toXiv_bot_toot

2026-01-16 18:42:16

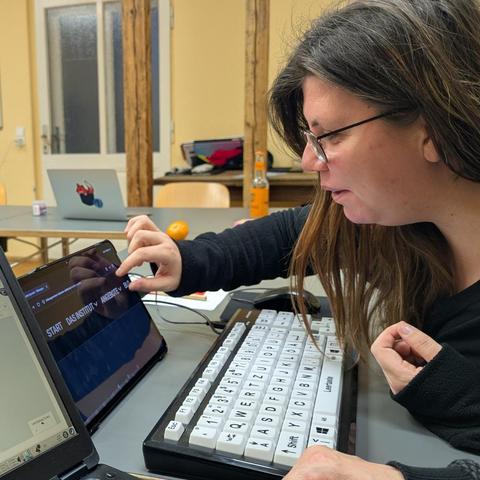

Yesterday our #Fachgruppe jumped in at the deep end and, for the first time, advised on a digital service other than our in-house learning platform @…. Our visitors were Julika and Pauline from the Bildungsinstitut für inklusive Vielfalt, who wanted to know how …

2025-12-22 13:54:35

Replaced article(s) found for cs.LG. https://arxiv.org/list/cs.LG/new

[2/5]:

- The Diffusion Duality

Sahoo, Deschenaux, Gokaslan, Wang, Chiu, Kuleshov

https://arxiv.org/abs/2506.10892 https://mastoxiv.page/@arXiv_csLG_bot/114675526577078472

- Multimodal Representation Learning and Fusion

Jin, Ge, Xie, Luo, Song, Bi, Liang, Guan, Yeong, Song, Hao

https://arxiv.org/abs/2506.20494 https://mastoxiv.page/@arXiv_csLG_bot/114749113025183688

- The kernel of graph indices for vector search

Mariano Tepper, Ted Willke

https://arxiv.org/abs/2506.20584 https://mastoxiv.page/@arXiv_csLG_bot/114749118923266356

- OptScale: Probabilistic Optimality for Inference-time Scaling

Youkang Wang, Jian Wang, Rubing Chen, Xiao-Yong Wei

https://arxiv.org/abs/2506.22376 https://mastoxiv.page/@arXiv_csLG_bot/114771735361664528

- Boosting Revisited: Benchmarking and Advancing LP-Based Ensemble Methods

Fabian Akkerman, Julien Ferry, Christian Artigues, Emmanuel Hebrard, Thibaut Vidal

https://arxiv.org/abs/2507.18242 https://mastoxiv.page/@arXiv_csLG_bot/114913322736512937

- MolMark: Safeguarding Molecular Structures through Learnable Atom-Level Watermarking

Runwen Hu, Peilin Chen, Keyan Ding, Shiqi Wang

https://arxiv.org/abs/2508.17702 https://mastoxiv.page/@arXiv_csLG_bot/115095014405732247

- Dual-Distilled Heterogeneous Federated Learning with Adaptive Margins for Trainable Global Protot...

Fatema Siddika, Md Anwar Hossen, Wensheng Zhang, Anuj Sharma, Juan Pablo Muñoz, Ali Jannesari

https://arxiv.org/abs/2508.19009 https://mastoxiv.page/@arXiv_csLG_bot/115100269482762688

- STDiff: A State Transition Diffusion Framework for Time Series Imputation in Industrial Systems

Gary Simethy, Daniel Ortiz-Arroyo, Petar Durdevic

https://arxiv.org/abs/2508.19011 https://mastoxiv.page/@arXiv_csLG_bot/115100270137397046

- EEGDM: Learning EEG Representation with Latent Diffusion Model

Shaocong Wang, Tong Liu, Yihan Li, Ming Li, Kairui Wen, Pei Yang, Wenqi Ji, Minjing Yu, Yong-Jin Liu

https://arxiv.org/abs/2508.20705 https://mastoxiv.page/@arXiv_csLG_bot/115111565155687451

- Data-Free Continual Learning of Server Models in Model-Heterogeneous Cloud-Device Collaboration

Xiao Zhang, Zengzhe Chen, Yuan Yuan, Yifei Zou, Fuzhen Zhuang, Wenyu Jiao, Yuke Wang, Dongxiao Yu

https://arxiv.org/abs/2509.25977 https://mastoxiv.page/@arXiv_csLG_bot/115298721327100391

- Fine-Tuning Masked Diffusion for Provable Self-Correction

Jaeyeon Kim, Seunggeun Kim, Taekyun Lee, David Z. Pan, Hyeji Kim, Sham Kakade, Sitan Chen

https://arxiv.org/abs/2510.01384 https://mastoxiv.page/@arXiv_csLG_bot/115309690976554356

- A Generic Machine Learning Framework for Radio Frequency Fingerprinting

Alex Hiles, Bashar I. Ahmad

https://arxiv.org/abs/2510.09775 https://mastoxiv.page/@arXiv_csLG_bot/115372387779061015

- A Second-Order SpikingSSM for Wearables

Kartikay Agrawal, Abhijeet Vikram, Vedant Sharma, Vaishnavi Nagabhushana, Ayon Borthakur

https://arxiv.org/abs/2510.14386 https://mastoxiv.page/@arXiv_csLG_bot/115389079527543821

- Utility-Diversity Aware Online Batch Selection for LLM Supervised Fine-tuning

Heming Zou, Yixiu Mao, Yun Qu, Qi Wang, Xiangyang Ji

https://arxiv.org/abs/2510.16882 https://mastoxiv.page/@arXiv_csLG_bot/115412243355962887

- Seeing Structural Failure Before it Happens: An Image-Based Physics-Informed Neural Network (PINN...

Omer Jauhar Khan, Sudais Khan, Hafeez Anwar, Shahzeb Khan, Shams Ul Arifeen

https://arxiv.org/abs/2510.23117 https://mastoxiv.page/@arXiv_csLG_bot/115451891042176876

- Training Deep Physics-Informed Kolmogorov-Arnold Networks

Spyros Rigas, Fotios Anagnostopoulos, Michalis Papachristou, Georgios Alexandridis

https://arxiv.org/abs/2510.23501 https://mastoxiv.page/@arXiv_csLG_bot/115451942159737549

- Semi-Supervised Preference Optimization with Limited Feedback

Seonggyun Lee, Sungjun Lim, Seojin Park, Soeun Cheon, Kyungwoo Song

https://arxiv.org/abs/2511.00040 https://mastoxiv.page/@arXiv_csLG_bot/115490555013124989

- Towards Causal Market Simulators

Dennis Thumm, Luis Ontaneda Mijares

https://arxiv.org/abs/2511.04469 https://mastoxiv.page/@arXiv_csLG_bot/115507943827841017

- Incremental Generation is Necessary and Sufficient for Universality in Flow-Based Modelling

Hossein Rouhvarzi, Anastasis Kratsios

https://arxiv.org/abs/2511.09902 https://mastoxiv.page/@arXiv_csLG_bot/115547587245365920

- Optimizing Mixture of Block Attention

Guangxuan Xiao, Junxian Guo, Kasra Mazaheri, Song Han

https://arxiv.org/abs/2511.11571 https://mastoxiv.page/@arXiv_csLG_bot/115564541392410174

- Assessing Automated Fact-Checking for Medical LLM Responses with Knowledge Graphs

Shasha Zhou, Mingyu Huang, Jack Cole, Charles Britton, Ming Yin, Jan Wolber, Ke Li

https://arxiv.org/abs/2511.12817 https://mastoxiv.page/@arXiv_csLG_bot/115570877730326947
