2026-03-08 18:56:49

The planets #Venus and #Saturn in #conjunction half an hour ago over Bochum, Germany - easily caught with a hand-held bridge camera at 1/4 and 1/3.2 second, f/2.8 and ISO 800, but marginal even in 11x70 binoculars: bad sky transparency, caused mostly by Sahara dust AFAIK, took its toll. In these images with the best *photographic* contrast the Sun was 8 1/2° below the horizon while Saturn and Venus were 3 1/4° and 3 3/4° high, with the latter 83 times brighter.
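
For scale, the brightness ratio quoted above can be turned into a magnitude difference with Pogson's relation, Δm = 2.5 · log10(ratio). This is a minimal sketch, not part of the original post; the function name `magnitude_difference` is my own, and the only input is the factor of 83 given above.

```python
import math


def magnitude_difference(flux_ratio: float) -> float:
    """Magnitude difference for a given brightness (flux) ratio, via Pogson's relation."""
    if flux_ratio <= 0:
        raise ValueError("flux ratio must be positive")
    return 2.5 * math.log10(flux_ratio)


# Venus was quoted as 83 times brighter than Saturn.
delta_m = magnitude_difference(83)
print(f"{delta_m:.1f} mag")  # prints "4.8 mag"
```

So a factor of 83 corresponds to roughly 4.8 magnitudes between the two planets.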

2026-03-06 09:42:01

from my link log —

Measuring C++ std::unordered_map badness.

https://artificial-mind.net/blog/2021/10/09/unordered-map-badness

saved 2021-10-24

2026-04-02 16:30:55

📣 To everyone #neuhier:

A warm welcome to the #Fediverse! :mastolove:

For everyone interested in community-supported agriculture (Solidarische Landwirtschaft) / #solawi and

organizations of community-supported…

2026-04-26 20:26:26

The 'thinking' from a local Gemma 4 #llm reading bad handwriting is fascinating - I told it not to interpret stuff, it mostly didn't; but look at this thinking!

'Actually, looking at the 'e' in "the", it's a loop. The 'x' in "co-ax" is a cross. The letter in "axial" is a loop and a stroke. This is a very messy 'x' or a v…

2026-02-11 09:35:11

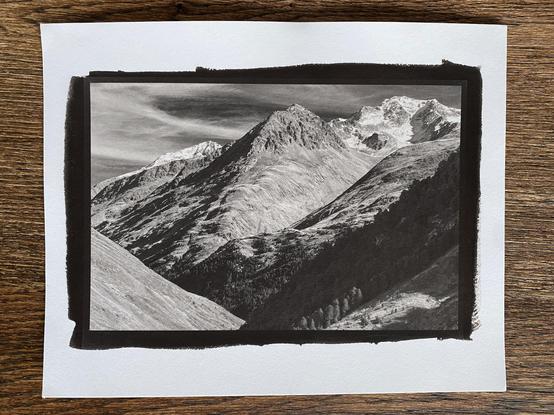

Latest 8x6" toned kallitype print, fresh from the tray (well, from the drying line)... 🤩

(Kallitype is quite challenging when it comes to balancing deep blacks against detail, so this scene took quite some tweaking to retain the details in the band of dark shadowy forest, but I'm happy with how it turned out...)

#AltProcess

2026-03-12 06:56:05

Apple's MacBook Neo validates a vision that began with Windows on ARM, which to this day is still held back by Microsoft's commitment to x86 compatibility (Steven Sinofsky/Hardcore Software)

https://hardcoresoftware.learningbyshipping.com/…

2026-03-10 23:41:56

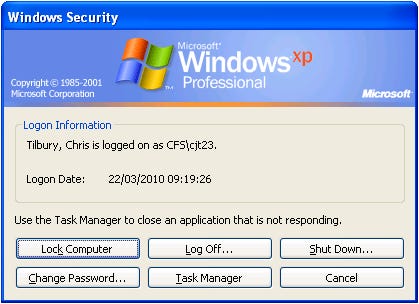

Back in the bad-old-days (2009-11?) when MS Internet Explorer was mostly gone, but a few companies with laggard user bases insisted on supporting it, staff at my company, Egressive, *hated* working on any compatibility as it required nasty regressive hacks, breaking open web standards. It made them feel dirty to do it. We instituted double-time 'hazard pay' for staff subjected to the indignity, and we charged 2x for IE-related compatibility work. Customers paid it, too.

2026-04-17 18:32:59

Some of those Georgie & Mandy's First Marriage episodes (2x16, Alpha Males and the Power of Prayer) are so uncomfortable to watch, I love it 😄

#TV #GeorgieAndMandysFirstMarriage

2026-03-12 22:29:16

Makes me feel old that I remember having to tweak the `/etc/X11/xorg.conf` to get my display working. Nowadays, I mostly don't have to think about any of that anymore b/c Wayland "Just Works" (TM), at least for the cases that I use (simple laptop/desktop setup). I lost AwesomeWM and Xmonad of course, but Sway still scratches a similar itch. If I'm masochistic enough, I might try doing something on top of wlroots with config in Haskell 😂

2026-04-13 14:42:03

from my link log —

Bring back idiomatic design.

https://essays.johnloeber.com/p/4-bring-back-idiomatic-design

saved 2026-04-12 https://