2025-11-22 14:19:48

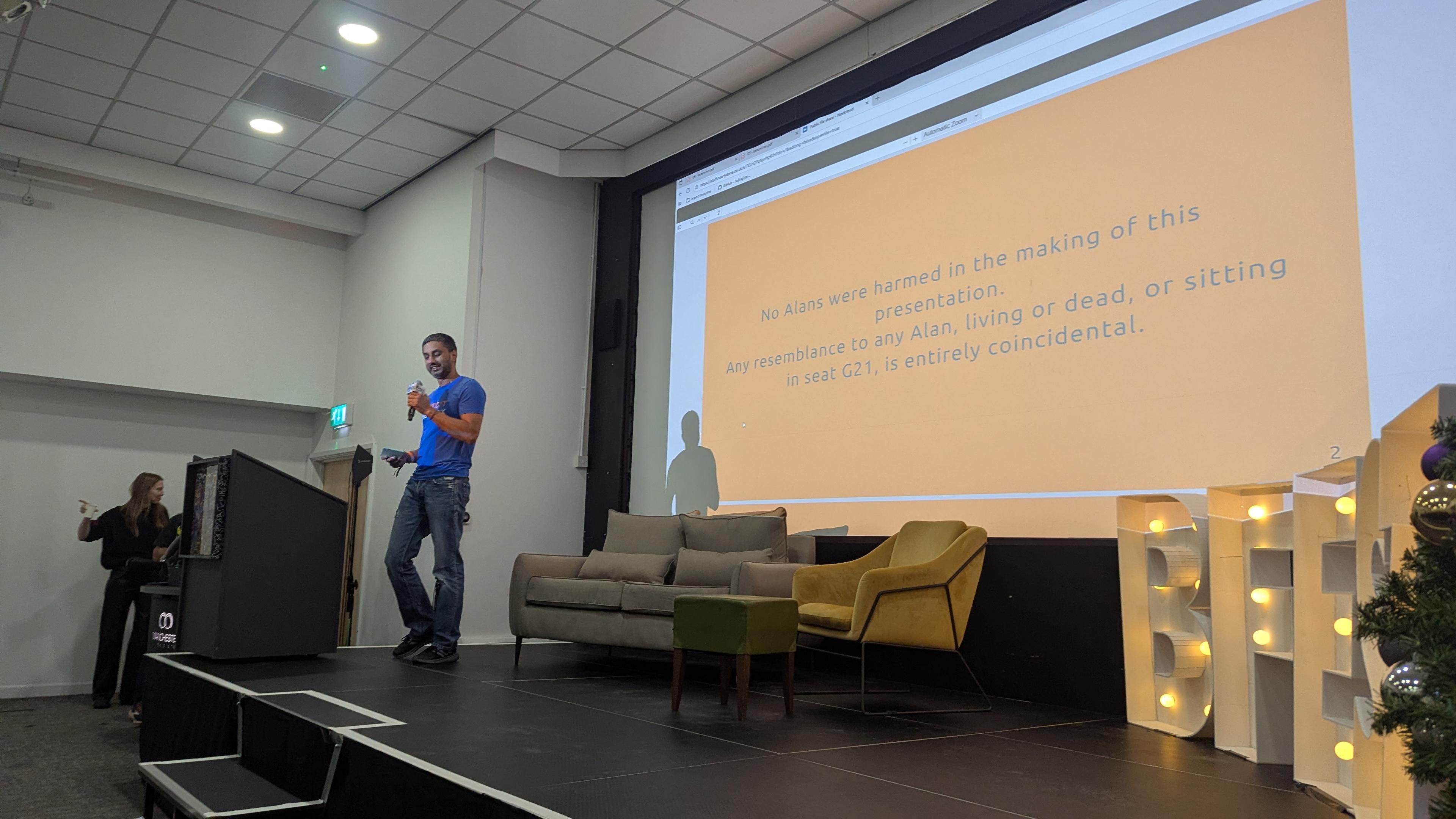

Prem Ghinde thinks that Alan is killing bitcoin.

Alan is paid in government money, and saves in bitcoin. He's an imaginary straw man.

Alan doesn't plan to spend his bitcoin though. Just stack it until he sells it. And this doesn't build the bitcoin network.

Without transactions, when the block rewards run out, there will be no money for miners. Miners will need fees, which means transactions.

Since he's paying in bank money, he's funding bankers instead of miners. He's encouraging retailers to accept bank money instead of supporting miners and lightning liquidity providers.

Unlike Alan, Prem lives on the bitcoin standard. All in. Spending sats because he has no bank money to spend. It can be done, he insists. Today. Mostly by using gift vouchers bought with bitcoin.

He's sad that people here are buying drinks from the hotel with bank cards instead of lightning.

Stop watching the price, he says, it's only a measure of government money's collapse. Change your yardstick. Account in bitcoin. Dollars aren't even money, they are currency. If you must measure, do it against gold.

Since moving to El Salvador he has learned Spanish, to the point that he even dreams in Spanish. Try to dream in bitcoin.

Every transaction is a vote, so stop voting for bank money.

I think the main trouble with this is the tax event in every purchase, and the fact that my employer won't set a wage in bitcoin even if they would convert to bitcoin to pay me.

#bitcoin #bitfest

2025-09-22 07:38:36

There is a giant mountain in the US carved with the faces of a couple of slavers, and two guys who tried to stop slavery. Now most Americans will stop right there and say, "wait, two? Lincoln did that though..." They'll say that because Americans don't know anything about their own history, including the fact that the practice of slavery remained central to the southern economy well through Roosevelt's administration. If this is not familiar to you (because, maybe, you were taught history in the US) and you'd like to actually learn about that, you might want to read "Slavery by Another Name."

But let's talk about half-slaver mountain for a minute. This mountain is functionally a sacred site for Americans, but it's literally a sacred site for Black Hills Sioux. Speaking of stolen land, did you know that JBLM (a military base in Washington state) is built on land promised the Puyallup in the Treaty of Medicine Creek before being stolen in 1918? I remember being taught that all the land was stolen a long time ago and now there's nothing we can do. Yeah, does anyone remember that DAPL was under Obama? In fact, unused federal lands are supposed to be returned to the tribes from which the land was taken but there's a whole site to auction off federal property... That's a whole section of the government dedicated to violating the Treaty of Fort Laramie.

They could just comply with the treaty, as they are legally obligated to do. These violations are ongoing. Slavery, again, is still legal. Slaves are still used by major corporations today, they just have to be tricked into confessing to a crime first. The sins that this country is built on remain fully active today... Because the system was built to preserve white supremacist patriarchy. How could the founding of the US not lead *directly* to Trump? How could this have been different, from the beginning?

But, please, tell me, how, exactly, are you going to fix that by voting harder in the midterms? How?

2025-11-20 22:27:26

After #Trump finally crashes and burns (I'm still saying I don't think he makes it to the midterms, and I think it's more than possible he won't make it to the end of the year) we'll hear a lot of people say, "the system worked!" Today people are already talking about "saving democracy" by fighting back. This will become a big rallying cry to vote (for Democrats, specifically), and the complete failure of the system will be held up as the best evidence for even greater investment in it.

I just want to point out that American democracy gave nuclear weapons to a pedophile, who, before being elected was already a well known sexual predator, and who made the campaign promise to commit genocide. He then proceeded to commit genocide. And like, I don't care that he's "only" kidnapped and disappeared a few thousand brown people. That's still genocide. Even if you don't kill every member of a targeted group, any attempt to do so is still "committing genocide." Trump said he would commit genocide, then he hired all the "let's go do a race war" guys he could find and *paid* them to go do a race war. And, even now as this deranged monster is crashing out, he is still authorized to use the world's largest nuclear arsenal.

He committed genocide during his first term when his administration separated migrant parents and children, then adopted those children out to other parents. That's technically genocide. The point was to destroy the very people he's been sending right-wing terror squads after.

There was a peaceful handover of power to a known Russian asset *twice*, and the second time he'd already committed *at least one* act of genocide *and* destroyed cultural heritage sites (oh yeah, he also destroyed indigenous grave sites, in case you forgot, during his first term).

All of this was allowed because the system is set up to protect exactly these types of people, because *exactly* these types of people are *the entire power structure*.

Going back to that system means going back to exactly the system that gave nuclear weapons to a pedophile *TWICE*.

I'm already seeing the attempts to pull people back, the congratulations as we enter the final phase, the belief that getting Trump out will let us all get back to normal. Normal. The normal that led here in the first place. I can already see the brunch reservations being made. When Trump is over, we will be told we won. We will be told that it's time to go back to sleep.

When they tell you everything worked, everything is better, that we can stop because we won, tell them "fuck you! Never again means never again." Destroy every system that ever gave these people power, that ever protected them from consequences, that ever let them hide what they were doing.

These Democrats funded a genocide abroad and laid the groundwork for genocide at home. They protected these predators, for years. The whole power structure is guilty. As these files implicate so many powerful people, they're trying to shove everything back in the box. After all the suffering, after we've finally made it clear that we are the ones with the power, only now they're willing to sacrifice Trump to calm us all down.

No, that's a good start but it can't be the end.

Winning can't be enough to quench that rage. Keep it burning. When this is over, let victory fan that anger until every institution that made this possible lies in ashes. Burn it all down and salt the earth. Taking down Trump is a great start, but it's not time to give up until this isn't possible again.

#USPol

2025-11-16 19:10:58

PSA about food labeling in the US

We have a gluten detection service dog because many things that should be gluten free/say they’re gluten free are not actually gluten free.

Stuff gets contaminated when growing (e.g. next to wheat field), by shared equipment, in factories, from packaging, during transport and in-store.

Every US consumer should know:

1. The list of ingredients on food isn't exhaustive

2. Allergen labeling:

a) limited to just some allergens

b) manufacturers don't actually have to test

c) "certified" foods are tested—but not continuously

d) testing only works with enough contamination

Some certifications may require batch-testing, but usually they don't.

A "certified gluten free" product may e.g. contain oats which sometimes are contaminated with gluten—but as not every batch is tested it's impossible to know unless you test yourself (hence the service dog).

Even if the product is properly batch-tested, you might get a part of the product that has the allergen in it, whereas the tested part didn't.

Or the threshold was too low (our dog can detect gluten better than any available lab testing equipment; yes, dogs are amazing).

Food products also contain ingredients that do not have to be included on the label when they're "incidental" (included in another ingredient) or if they're considered part of the manufacturing process but not of the final product (e.g. various coatings on factory equipment).

Don't need to list flavors or specific spices either. ¯\_(ツ)_/¯

As for allergens, only those responsible for ~90% of food allergies* have to be specifically declared, and they're not tested for as it's simply based on the ingredients list.

Good luck if you have other allergies.

*milk, egg, fish, Crustacean shellfish, tree nuts, wheat, peanuts, soybeans

2025-10-19 08:56:21

The other day my wife and I were talking about Charli XCX, how she's so popular. My wife hadn't heard her stuff. I had, last year, when everyone was raving about it. It's very popular. No shade to her fans.

So for fun, a little later, I put on just the first couple minutes of Charli's 'Brat' (that I found to be unlistenable) and I was like, hey listen to this.

My wife was like, what the FUCK is THAT?!? 😂😂

2025-11-15 20:41:44

Fuck the United States of America.

And Israel, of course.

Thank you for coming to my TED talk. https://norden.social/@stephie_hamburg/115555340426223090

2025-10-16 22:20:45

I’m using Claude-code to get stuff done that I couldn’t have done before. I just spent a non-frustrating hour or so successfully changing a system written mostly in TypeScript (which I’ve never used) to do a bunch of things differently. I’m telling it what I want the system to do (with BDD tests where possible) and it’s figuring out the code changes. Several days’ work for a human, weeks if I had to find someone to do it for me. Like this:

2025-10-14 22:01:22

There's no point in having fuck-you money in the bank if you never say "fuck you"!

https://www.anildash.com/2025/09/09/how-tim-cook-sold-out-steve-jobs/

2025-10-20 08:05:15

Some leftists have criticized #NoKingsDay2 as useless. Though it was the largest protest in US history, it didn't change anything. I would go further to say that protests like these generally won't change anything. Dictators aren't forced to step down by 2% of the population coming out for one day. If they're forced to step down by protests, those protests are sustained. They are every single day. They are accompanied by general strikes.

We've been watching that happen all over the world. Portland in 2020 gave us a taste of that in the US. The George Floyd Rebellion was the type of resistance that actually brings down dictators like Trump. Occasional protests, no matter how large, can simply be ignored. That is precisely the reason the US developed a militarized police force in the first place. You need more, more than the largest protests in US history, more than Occupy, more than the resistance of the 60's and 70's, more than, and different from, anything we've seen in our lives.

And yet... Each protest has grown, and grown bolder. Some have grown more persistent. If you think of protest as the path to achieve change, you will lose. It is not. But it is a path to escalate. Some people, some otherwise comfortable white folks, came out for their first time. Some people got pepper sprayed for the first time. Some people questioned authority, stood up for the first time, and have had an experience that will radicalize them for the rest of their lives.

Protest is not useful in and of itself. It is training. It's making connections. Authoritarian regimes rely on the illusion of compliance, so visible resistance does actually undermine their power.

Liberals like to teach that non-violence is all about staying peaceful no matter what, that there's some way that morality simply overwhelms an enemy. I remember reading Langston Hughes' A Dream Deferred in high school. I said it was a threat. My teacher said, "you're wrong, he was a pacifist." Pacifism is a threat. If you can spit at me, beat me, shoot me, and I will not move, if I have the strength to absorb violence without flinching, without even rising to violence, what will happen when you push me too far?

What happens to a dream deferred?

Does it dry up

like a raisin in the sun?

Or fester like a sore—

And then run?

Does it stink like rotten meat?

Or crust and sugar over—

like a syrupy sweet?

Maybe it just sags

like a heavy load.

Or does it explode?

For peaceful resistance to work, there must be ambiguity. It must not be clear if or when the resistance will stop being peaceful. Peaceful resistance with no possibility of escalation is just cowardice.

My critique then is not so harsh as that of some other anarchists. If you think that protest alone will work, you're probably going to lose. If you are prepared to escalate, if you are prepared to absorb violence without flinching, then it could be possible for protest alone to topple the dictator. The cracks are already beginning to show.

And then what?

The problems that led to the George Floyd uprising were never resolved. The problems that led to Occupy were never resolved. The DAPL was built, protesters were maimed, it leaked multiple times (exactly as predicted). Segregation never went away, it only changed forms. The fact that immigrants have different courts and different rights means that anyone can be arbitrarily kidnapped and renditioned to an arbitrary country. We never did anything about the torture black site. FFS, people can still be stripped of their voting rights and slavery is still legal in the US. The people who control both parties in the US are killing our children and grandchildren with oil wars and climate change.

Toppling the dictator does nothing to resolve all of the problems that existed before him.

No, #NoKingsDay was absolutely not useless. #NoKings and related protests are extremely useful, but they aren't sufficient. And I think we still need to challenge the movement on two points:

How do you escalate after you're ignored or brutalized?

What do you demand after you win?

#USPol