If you are an anarchist and you speak only one language, learn another one. Learn the language of your land, the indigenous language where you live. Learn the language of your ancestors, especially those languages that the state has tried to murder away. Learn an international language other than English; those, too, have been targets of state repression.

Prioritize languages that you have some connection with (because it's hard to learn a language you don't care about) and that the state has tried to erase (because there are *reasons* those languages were targeted). Language (which can't be fully separated from culture) adds complexity. The state often tries to murder away language because (among other reasons) hierarchy is threatened by complexity. Every language adds some surveillance overhead, some more than others.

Flock of pelicans flying over the beach. Moonstone Beach, Cambria, California, USA. May, 2023. OM System OM-1 M.Zuiko 12-40 F2.8. #moonstonebeach #pelican #birds

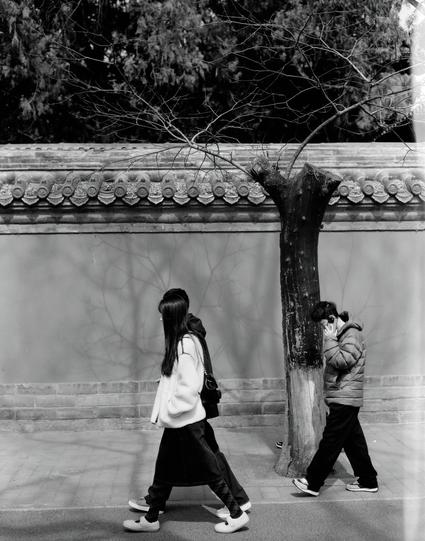

Life Runners 🪖

Life Warriors 🪖

📷 Nikon FE

🎞️ Ilford HP5 Plus 400, expired 1993

If you like my work, buy me a coffee from PayPal #filmphotography

RE: https://mastodon.online/@sherold/116481485878550299

If laptop stickers say something about the owner's attitude, then I have absolutely no idea what this is supposed to say about me. I guess it doesn't matter.

Towards Efficient Data Structures for Approximate Search with Range Queries

Ladan Kian, Dariusz R. Kowalski

https://arxiv.org/abs/2602.06860 https://arxiv.org/pdf/2602.06860 https://arxiv.org/html/2602.06860

arXiv:2602.06860v1 Announce Type: new

Abstract: Range queries are simple and popular types of queries used in data retrieval. However, extracting exact and complete information using range queries is costly. As a remedy, some previous work proposed a faster principle, *approximate* search with range queries, also called single range cover (SRC) search. It can, however, produce some false positives. In this work we introduce a new SRC search structure, a $c$-DAG (Directed Acyclic Graph), which provably decreases the average number of false positives by a logarithmic factor while keeping asymptotically the same time and memory complexities as a classic tree structure. A $c$-DAG is a tunable augmentation of the 1D-Tree with denser overlapping branches ($c \geq 3$ children per node). We perform a competitive analysis of a $c$-DAG with respect to the 1D-Tree and derive an additive constant time overhead and a multiplicative logarithmic improvement of the false positives ratio, on average. We also provide a generic framework to extend our results to empirical distributions of queries, and demonstrate its effectiveness on the Gowalla dataset. Finally, we quantify and discuss security and privacy aspects of SRC search on $c$-DAG vs 1D-Tree, mainly mitigation of structural leakage, which makes $c$-DAG a good data structure candidate for deployment in privacy-preserving systems (e.g., searchable encryption) and multimedia retrieval.
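Here is a minimal Python sketch of single range cover (SRC) search on the classic 1D-Tree baseline. It assumes integer keys in a domain of size `2**height`. The query [a, b] is answered by the single smallest tree node that contains it, and every other key in that node is a false positive. The second function is a hypothetical overlapping layout in which windows also start at half-size offsets. It only illustrates why denser, overlapping branches can cut false positives and is not the paper's c-DAG construction.

```python
def src_cover_tree(a, b, height):
    """1D-Tree baseline: smallest dyadic node over [0, 2**height) that contains [a, b]."""
    if not 0 <= a <= b < 2 ** height:
        raise ValueError("query outside domain")
    level = 0
    # Climb until both endpoints fall into the same node at this level.
    while (a >> level) != (b >> level):
        level += 1
    lo = (a >> level) << level
    return lo, lo + (1 << level) - 1


def src_cover_overlapping(a, b, height):
    """Hypothetical overlapping layout (NOT the paper's c-DAG): at each level,
    windows of size 2**level also start at multiples of half that size."""
    if not 0 <= a <= b < 2 ** height:
        raise ValueError("query outside domain")
    for level in range(height + 1):
        size = 1 << level
        step = max(1, size // 2)
        for start in range(0, 2 ** height - size + 1, step):
            if start <= a and b < start + size:
                return start, start + size - 1
    # Unreachable: the root window (start 0, size 2**height) always matches.
    raise AssertionError("no cover found")


def false_positives(a, b, cover):
    """Keys returned by the cover that lie outside the requested range."""
    lo, hi = cover
    return (hi - lo + 1) - (b - a + 1)
```

Consider the query [3, 4] over eight leaves. The tree must fall back to the root node (0, 7), which gives 6 false positives. The overlapping layout finds the exact window (3, 4).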

toXiv_bot_toot

Heading home from Easter holiday in sunny Italy and using @… to track the cyclone that just passed overhead in Denmark, looks like it will be cloudy and cool but the worst will have passed by the time we get home...

#EUMETSAT

As we’re landing, crew asks everyone to stay seated so paramedics can get into the back of the plane. Seatbelt sign goes off, 5 people in my section promptly stand up and start yanking luggage from the overhead bins. Some bins left open when crew scolds people back to their seats.

Sickly Red III ⭕️

📷 Pentax MX

🎞️ CineStill 800T

If you like my work, buy me a coffee from PayPal #filmphotography

latest petty airline complaint: "place your bag vertically in the overhead compartment as you would a book on a shelf" while actually being instructed to place the bag horizontally, as if placing a book with the spine facing up. guess airline safety folks aren't really readers.

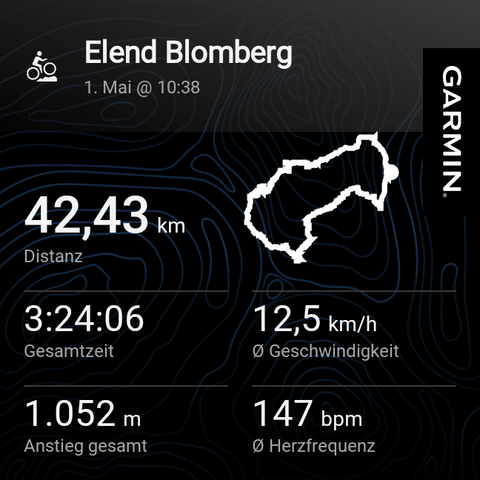

This was a nice ride. Some sections involved pushing the bike, and some steep parts came as a bit of a surprise. But overall it was nice, with some quite challenging sections. It was a loop I had wanted to try for quite a while.

And it's a new elevation record since I got the Garmin 🎉

Except now my #garmin suddenly refuses to connect to my phone. 😵💫 Factory reset, all suggestions fro…

Bird View 🐦

📷 Nikon FE

🎞️ Ilford HP5 Plus 400, expired 1993

If you like my work, buy me a coffee from PayPal #filmphotography

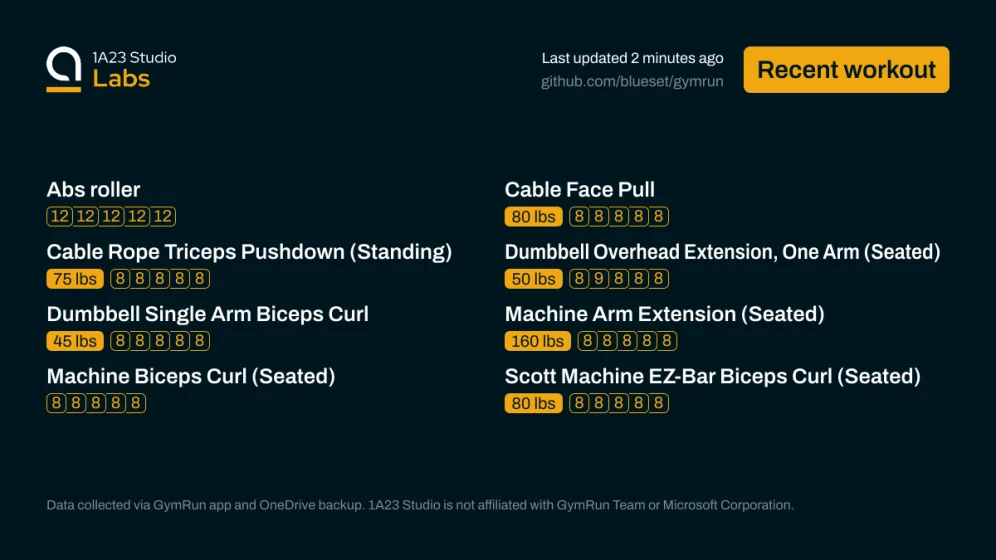

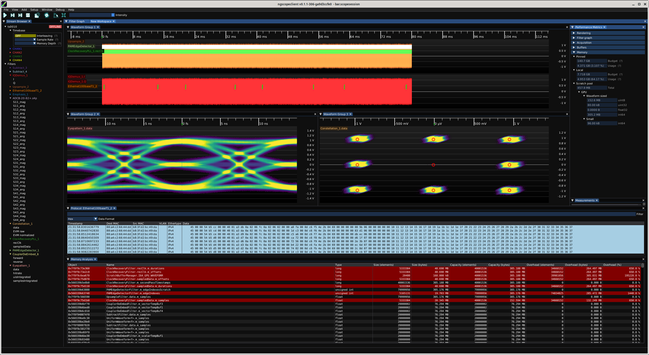

As part of the ongoing effort to reduce VRAM bloat in ngscopeclient I've added some nice debug tools (currently accessible by Window | Memory Analysis but that will probably get moved to the debug menu at some point) to show you the full list of AcceleratorBuffer objects along with a bunch of metadata.

Haven't figured out how to right-align the column titles, but this is a debug visualization, so it's not a huge priority.

Rows with more than 10% overhead (capacity > 1.1*size)…
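The 10% rule above comes down to a one-line filter. Here is a minimal sketch that assumes a hypothetical `BufferRow` record with `size` and `capacity` fields. It is not ngscopeclient's actual `AcceleratorBuffer` interface, and the units (elements) are also an assumption.

```python
from dataclasses import dataclass


@dataclass
class BufferRow:
    """Hypothetical stand-in for one row of the memory-analysis table."""
    name: str
    size: int      # elements actually in use (assumed unit)
    capacity: int  # elements allocated (assumed unit)


def flag_overhead(rows, threshold=0.10):
    """Rows whose capacity exceeds size by more than `threshold` (capacity > 1.1*size by default)."""
    flagged = [r for r in rows if r.capacity > (1 + threshold) * r.size]
    # Largest absolute waste first, since that is what bloats VRAM most.
    return sorted(flagged, key=lambda r: r.capacity - r.size, reverse=True)
```

Sorting by absolute waste, not by ratio, keeps a huge buffer with 20% slack ahead of a tiny buffer with 10x slack.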

SysOM-AI: Continuous Cross-Layer Performance Diagnosis for Production AI Training

Yusheng Zheng, Wenan Mao, Shuyi Cheng, Fuqiu Feng, Guangshui Li, Zhaoyan Liao, Yongzhuo Huang, Zhenwei Xiao, Yuqing Li, Andi Quinn, Tao Ma

https://arxiv.org/abs/2603.29235 https://arxiv.org/pdf/2603.29235 https://arxiv.org/html/2603.29235

arXiv:2603.29235v1 Announce Type: new

Abstract: Performance diagnosis in production-scale AI training is challenging because subtle OS-level issues can trigger cascading GPU delays and network slowdowns, degrading training efficiency across thousands of GPUs. Existing profiling tools are limited to single system layers, incur prohibitive overhead (10–30%), or lack continuous deployment capabilities, resulting in manual analyses spanning days. We argue that continuous, cross-layer observability enabled by OS-level instrumentation and layered differential diagnosis is necessary to address this gap. We introduce SysOM-AI, a production observability system that continuously integrates CPU stack profiling, GPU kernel tracing, and NCCL event instrumentation via adaptive hybrid stack unwinding and eBPF-based tracing, incurring less than 0.4% overhead. Deployed at Alibaba across over 80,000 GPUs for more than one year, SysOM-AI helped diagnose 94 confirmed production issues, reducing median diagnosis time from days to approximately 10 minutes.
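One way to read "layered differential diagnosis" is this: compare each rank's time in each layer against its peers, then attribute the slowdown to the lowest layer that shows an outlier, because OS-level problems cascade upward into GPU and network delays. The sketch below is a hypothetical illustration of that idea. The layer names, the per-rank timing input, and the 1.5x-median threshold are all assumptions, not SysOM-AI's algorithm.

```python
from statistics import median

# Hypothetical layer order, lowest (OS/CPU) first; SysOM-AI's real layering may differ.
LAYERS = ("cpu", "gpu_kernel", "nccl")


def layered_diagnosis(per_rank, threshold=1.5):
    """per_rank maps rank -> {layer: seconds}. Returns (layer, outlier_ranks) for the
    lowest layer where some rank exceeds `threshold` x the cross-rank median, else None."""
    for layer in LAYERS:
        baseline = median(times[layer] for times in per_rank.values())
        outliers = sorted(rank for rank, times in per_rank.items()
                          if times[layer] > threshold * baseline)
        if outliers:
            # Anomalies in higher layers are treated as downstream symptoms.
            return layer, outliers
    return None
```

Stopping at the first anomalous layer is the design choice that separates a root cause (a slow CPU path on one rank) from its symptoms (that rank's slow NCCL collectives).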

One nice thing Linux does better is let me use different pixel scaling on two screens. So now if I have a window straddling two screens with different PPI it looks alright.

Another is it just seems faster. So much less bloat! Also less overhead: it looks like running LLMs locally may be 40% faster.

It's Friday the 13ᵗʰ, post your sleep paralysis demons.

Mine is kinda cute :3

Sickly Red IV ⭕️

📷 Pentax MX

🎞️ CineStill 800T

If you like my work, buy me a coffee from PayPal #filmphotography …

Given that it's Sir Terry Pratchett day, I thought I'd dig this post up from my archive. If you're having fun on the #IndieWeb, #SmolWeb, or similar, why not join the Overhead as well 😉

#GNUTerry
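"The Overhead" refers to the #GNUTerry tradition of sending an `X-Clacks-Overhead: GNU Terry Pratchett` HTTP header from your site. Below is a minimal sketch that uses only Python's standard-library `http.server`. The port (8000) and the response body are arbitrary choices.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer


class ClacksHandler(BaseHTTPRequestHandler):
    """Serves a plain-text page and keeps the name moving in the Overhead."""

    def do_GET(self):
        body = b"Hello from the Overhead\n"
        self.send_response(200)
        self.send_header("X-Clacks-Overhead", "GNU Terry Pratchett")
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the console quiet


if __name__ == "__main__":
    HTTPServer(("127.0.0.1", 8000), ClacksHandler).serve_forever()
```

Most real sites set the same header in their web server or static-host configuration instead.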

TransLink outlines future transit improvements in Vancouver, Burnaby, New Westminster

Burrard Peninsula Area Transport Plan will guide improvements to bus service, active transportation, and goods movement

TransLink has released the Burrard Peninsula Area Transport Plan, outlining recommended transit and transportation improvements across Vancouver, Burnaby, and New Westminster, as well as UBC and the University Endowment Lands, which are part of Electoral Area A.

Scaling Vision Transformers: Evaluating DeepSpeed for Image-Centric Workloads

Huy Trinh, Rebecca Ma, Zeqi Yu, Tahsin Reza

https://arxiv.org/abs/2602.21081 https://arxiv.org/pdf/2602.21081 https://arxiv.org/html/2602.21081

arXiv:2602.21081v1 Announce Type: new

Abstract: Vision Transformers (ViTs) have demonstrated remarkable potential in image processing tasks by utilizing self-attention mechanisms to capture global relationships within data. However, their scalability is hindered by significant computational and memory demands, especially for large-scale models with many parameters. This study aims to leverage DeepSpeed, a highly efficient distributed training framework that is commonly used for language models, to enhance the scalability and performance of ViTs. We evaluate intra- and inter-node training efficiency across multiple GPU configurations on various datasets like CIFAR-10 and CIFAR-100, exploring the impact of distributed data parallelism on training speed, communication overhead, and overall scalability (strong and weak scaling). By systematically varying software parameters, such as batch size and gradient accumulation, we identify key factors influencing performance of distributed training. The experiments in this study provide a foundational basis for applying DeepSpeed to image-related tasks. Future work will extend these investigations to deepen our understanding of DeepSpeed's limitations and explore strategies for optimizing distributed training pipelines for Vision Transformers.
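The batch-size and scaling knobs in the abstract reduce to a few lines of arithmetic. Here is a minimal sketch. It assumes DeepSpeed's documented relation train_batch_size = train_micro_batch_size_per_gpu * gradient_accumulation_steps * number of GPUs, plus the usual textbook definitions of strong- and weak-scaling efficiency. The function names are hypothetical.

```python
def effective_batch_size(micro_batch_per_gpu, grad_accum_steps, num_gpus):
    """Global batch per optimizer step (DeepSpeed's train_batch_size)."""
    return micro_batch_per_gpu * grad_accum_steps * num_gpus


def micro_batch_for(train_batch_size, grad_accum_steps, num_gpus):
    """Per-GPU micro-batch needed to hit a target global batch; must divide evenly."""
    per_step = grad_accum_steps * num_gpus
    if train_batch_size % per_step:
        raise ValueError("global batch must be divisible by accumulation steps * GPUs")
    return train_batch_size // per_step


def strong_scaling_efficiency(t1, tn, n):
    """Fixed total problem: the ideal time on n GPUs is t1 / n."""
    return t1 / (n * tn)


def weak_scaling_efficiency(t1, tn):
    """Problem grows with n: the ideal time stays at t1."""
    return t1 / tn
```

For example, a micro-batch of 32 images per GPU with 4 accumulation steps on 8 GPUs gives a global batch of 1024. Raising accumulation steps grows the global batch without extra memory per GPU, at the cost of fewer optimizer steps per epoch.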

Urban Demons X 👻

Urban Ghosts X 👻

📷 Nikon F4E

🎞️ ERA 100, expired 1993

If you like my work, buy me a coffee from PayPal #filmphotography

Oh wow, I feel quite sore today. Maybe I didn't do "enough" during winter to keep my muscles in shape.

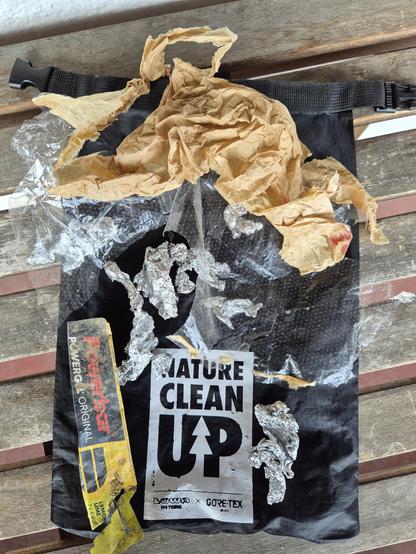

But it was worth it! (Pictures and video are yet to come). After the snow has mostly melted, it's time again to clean up all kinds of litter.

I once heard a metallic *pling* and knew: "new microspikes needed now." But since the metal plate broke, and not just a chain, I hope the shop might replace them. I mean... broken metal after just ~1 year? Keep fi…

Set the Unsettled II 🧘

The Dust Settles II 🧘

📷 Pentax MX

🎞️ Lucky SHD 400

If you like my work, buy me a coffee from PayPal #filmphotography

This is probably the worst joke I've seen, and I am crying.

#memes #funny #comic

HALO: A Fine-Grained Resource Sharing Quantum Operating System

John Zhuoyang Ye, Jiyuan Wang, Yifan Qiao, Jens Palsberg

https://arxiv.org/abs/2602.07191 https://arxiv.org/pdf/2602.07191 https://arxiv.org/html/2602.07191

arXiv:2602.07191v1 Announce Type: new

Abstract: As quantum computing enters the cloud era, thousands of users must share access to a small number of quantum processors. Users need to wait minutes to days to start jobs that take only a few seconds to execute. Current quantum cloud platforms employ a fair-share scheduler, as there is no way to multiplex a quantum computer among multiple programs at the same time, leaving many qubits idle and significantly under-utilizing the hardware. This imbalance between high user demand and scarce quantum resources has become a key barrier to scalable and cost-effective quantum computing.

We present HALO, the first quantum operating system design that supports fine-grained resource-sharing. HALO introduces two complementary mechanisms. First, a hardware-aware qubit-sharing algorithm that places shared helper qubits on regions of the quantum computer that minimize routing overhead and avoid cross-talk noise between different users' processes. Second, a shot-adaptive scheduler that allocates execution windows according to each job's sampling requirements, improving throughput and reducing latency. Together, these mechanisms transform the way quantum hardware is scheduled and achieve more fine-grained parallelism.

We evaluate HALO on the IBM Torino quantum computer using helper-qubit-intensive benchmarks. Compared to state-of-the-art systems such as HyperQ, HALO improves overall hardware utilization by up to 2.44x, increases throughput by 4.44x, and keeps fidelity loss within 33%, demonstrating the practicality of resource-sharing in quantum computing.
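A toy model shows why co-scheduling helps utilization. The sketch below is a hypothetical first-fit packer, not HALO's qubit-sharing algorithm or its shot-adaptive scheduler. It places jobs with similar shot counts on one device whenever their qubits fit. It also assumes each batch holds the device for as many shots as its longest job and ignores routing, cross-talk, and fidelity.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    qubits: int
    shots: int


def co_schedule(jobs, device_qubits):
    """First-fit by descending shot count, so jobs with similar shots tend to share a batch."""
    batches = []
    for job in sorted(jobs, key=lambda j: j.shots, reverse=True):
        if job.qubits > device_qubits:
            raise ValueError(f"{job.name} does not fit on the device")
        for batch in batches:
            if sum(j.qubits for j in batch) + job.qubits <= device_qubits:
                batch.append(job)
                break
        else:
            batches.append([job])
    return batches


def utilization(batches, device_qubits):
    """Qubit-shots used divided by qubit-shots available across all batch windows."""
    used = sum(j.qubits * j.shots for batch in batches for j in batch)
    window = sum(max(j.shots for j in batch) for batch in batches)
    return used / (device_qubits * window)
```

Take a 10-qubit device and jobs A (5 qubits, 1000 shots), B (5, 1000), and C (4, 100). Running one job at a time gives about 50% utilization in this toy model, while packing A and B together raises it to about 95%.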

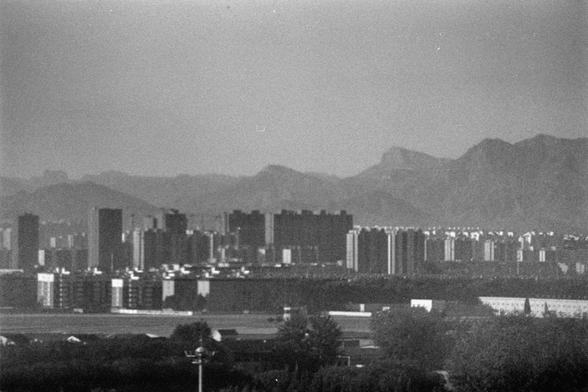

Urban Illusions and Fallacies II 🏙️

📷 Pentax 6x7

🎞️ Kentmere Pan 200 (6x7)

If you like my work, support me by buying me a coffee or a roll of film via PayPal https://paypal.com/paypalme/ydcdingsite

Prune, Don't Rebuild: Efficiently Tuning $\alpha$-Reachable Graphs for Nearest Neighbor Search

Tian Zhang, Ashwin Padaki, Jiaming Liang, Zack Ives, Erik Waingarten

https://arxiv.org/abs/2602.08097 https://arxiv.org/pdf/2602.08097 https://arxiv.org/html/2602.08097

arXiv:2602.08097v1 Announce Type: new

Abstract: Vector similarity search is an essential primitive in modern AI and ML applications. Most vector databases adopt graph-based approximate nearest neighbor (ANN) search algorithms, such as DiskANN (Subramanya et al., 2019), which have demonstrated state-of-the-art empirical performance. DiskANN's graph construction is governed by a reachability parameter $\alpha$, which gives a trade-off between construction time, query time, and accuracy. However, adaptively tuning this trade-off typically requires rebuilding the index for different $\alpha$ values, which is prohibitive at scale. In this work, we propose RP-Tuning, an efficient post-hoc routine, based on DiskANN's pruning step, to adjust the $\alpha$ parameter without reconstructing the full index. Within the $\alpha$-reachability framework of prior theoretical works (Indyk and Xu, 2023; Gollapudi et al., 2025), we prove that pruning an initially $\alpha$-reachable graph with RP-Tuning preserves worst-case reachability guarantees in general metrics and improved guarantees in Euclidean metrics. Empirically, we show that RP-Tuning accelerates DiskANN tuning on four public datasets by up to $43\times$ with negligible overhead.
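DiskANN's pruning step (RobustPrune in the Vamana paper) drops a remaining candidate p' whenever a chosen neighbor p* satisfies α·d(p*, p') ≤ d(p, p'), so a smaller α drops more edges. Here is a minimal sketch. It assumes RP-Tuning amounts to re-running that prune on each node's existing neighbor list with a new α; the paper's actual routine may differ. `retune_alpha` and the dictionary graph format are hypothetical.

```python
import math


def robust_prune(p, candidates, points, alpha, max_degree):
    """Vamana/DiskANN-style RobustPrune over Euclidean points (dict: id -> tuple)."""
    remaining = sorted(set(candidates) - {p},
                       key=lambda q: math.dist(points[p], points[q]))
    neighbors = []
    while remaining:
        best = remaining.pop(0)  # closest surviving candidate
        neighbors.append(best)
        if len(neighbors) == max_degree:
            break
        # Keep q only if `best` does not alpha-dominate it.
        remaining = [q for q in remaining
                     if alpha * math.dist(points[best], points[q]) > math.dist(points[p], points[q])]
    return neighbors


def retune_alpha(graph, points, new_alpha, max_degree):
    """Hypothetical post-hoc tuning: re-prune every adjacency list in place of a full rebuild."""
    return {p: robust_prune(p, nbrs, points, new_alpha, max_degree)
            for p, nbrs in graph.items()}
```

In the test's four collinear points, α = 3 keeps all three neighbors of point 0. Re-pruning with α = 1 leaves only the nearest one, and no other part of the index has to be rebuilt.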

Fork, Explore, Commit: OS Primitives for Agentic Exploration

Cong Wang, Yusheng Zheng

https://arxiv.org/abs/2602.08199 https://arxiv.org/pdf/2602.08199 https://arxiv.org/html/2602.08199

arXiv:2602.08199v1 Announce Type: new

Abstract: AI agents increasingly perform agentic exploration: pursuing multiple solution paths in parallel and committing only the successful one. Because each exploration path may modify files and spawn processes, agents require isolated environments with atomic commit and rollback semantics for both filesystem state and process state. We introduce the branch context, a new OS abstraction that provides: (1) copy-on-write state isolation with independent filesystem views and process groups, (2) a structured lifecycle of fork, explore, and commit/abort, (3) first-commit-wins resolution that automatically invalidates sibling branches, and (4) nestable contexts for hierarchical exploration. We realize branch contexts in Linux through two complementary components. First, BranchFS is a FUSE-based filesystem that gives each branch context an isolated copy-on-write workspace, with O(1) creation, atomic commit to the parent, and automatic sibling invalidation, all without root privileges. BranchFS is open source at https://github.com/multikernel/branchfs. Second, branch() is a proposed Linux syscall that spawns processes into branch contexts with reliable termination, kernel-enforced sibling isolation, and first-commit-wins coordination. Preliminary evaluation of BranchFS shows sub-350 µs branch creation independent of base filesystem size, and modification-proportional commit overhead (under 1 ms for small changes).
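The fork/explore/commit lifecycle and the first-commit-wins rule are easy to model in memory. The sketch below is a hypothetical toy; `BranchContext` is not the API of BranchFS or branch(). Each context keeps a copy-on-write overlay of file contents, and reads fall through to the parent. A commit merges the overlay into the parent and invalidates every sibling along with its nested children. Process state and real atomicity are out of scope.

```python
class BranchContext:
    """Hypothetical in-memory model of a branch context (files only, single-threaded)."""

    def __init__(self, files=None, parent=None):
        self.parent = parent
        self.writes = dict(files or {})  # root: base files; child: copy-on-write overlay
        self.children = []
        self.invalid = False

    def _check(self):
        if self.invalid:
            raise RuntimeError("branch context is no longer valid")

    def fork(self):
        self._check()
        child = BranchContext(parent=self)
        self.children.append(child)
        return child

    def read(self, path):
        self._check()
        ctx = self
        while ctx is not None:  # fall through to ancestors
            if path in ctx.writes:
                return ctx.writes[path]
            ctx = ctx.parent
        raise FileNotFoundError(path)

    def write(self, path, data):
        self._check()
        self.writes[path] = data  # the parent's view stays untouched

    def _invalidate(self):
        self.invalid = True
        for child in self.children:  # nested contexts die with their parent
            child._invalidate()

    def commit(self):
        self._check()
        if self.parent is None:
            raise RuntimeError("the root context cannot commit")
        self.parent.writes.update(self.writes)
        for sibling in self.parent.children:
            if sibling is not self:
                sibling._invalidate()  # first commit wins
        self.parent.children.clear()
        self._invalidate()  # a committed branch is finished

    def abort(self):
        self._invalidate()
```

Once one explorer commits, a slower sibling's later `commit()` raises instead of silently overwriting the winner's result.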

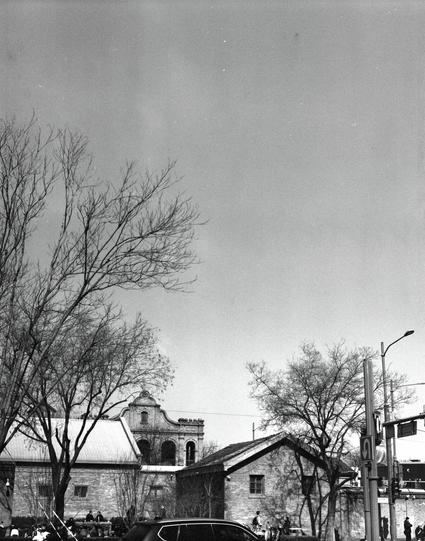

City Silhouettes III 🏙️

📷 Pentax 6x7

🎞️ Lucky SHD 400 (6x7)

If you like my work, support me by buying me a coffee or a roll of film via PayPal https://www.paypal.com/paypalme/ydcdingsite

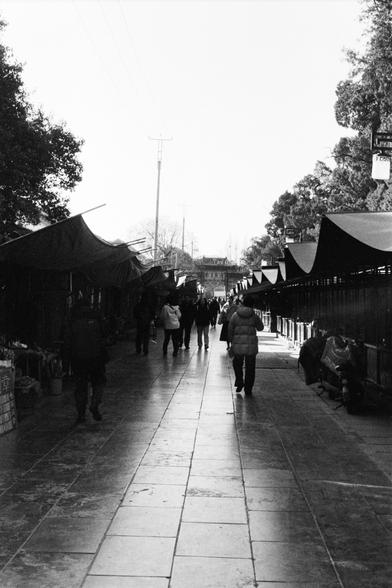

Different Corners XI ▶️

📷 Nikon F4E

🎞️ Rollei RPX 400

#filmphotography #Photography #blackandwhite

Different Corners IX ▶️

📷 Nikon F4E

🎞️ Rollei RPX 400

#filmphotography #Photography #blackandwhite

Rare Colours Blues IV 🔷🔷

📷 Pentax MX

🎞️ Harman Phoenix 200 II (FF)

#filmphotography #Photography #Art

Some Snow ❄️

📷 Minolta Hi-Matic AF

🎞️ Ilford FP4 Plus 125, expired 1993

If you like my work, buy me a coffee from PayPal #filmphotography