2026-02-26 15:22:31

So a Replyguy just replied with "linking directly to the article to avoid Bluesky shit" to my post.

Dude, the "Bluesky shit" is the thread by a scientist I was linking to specifically because it destroyed the stupid lies in the article you linked to.

Maybe try reading the "Bluesky shit" first before hitting the reply button, idk.

2026-01-25 23:08:22

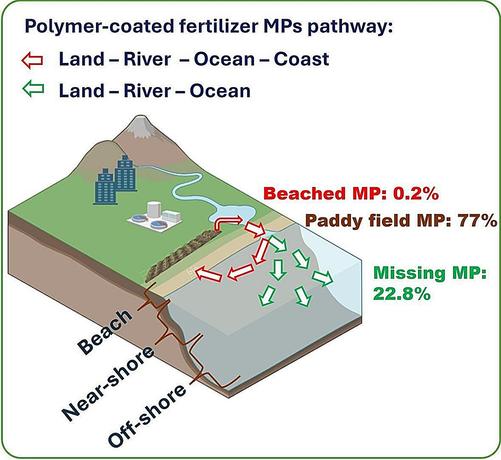

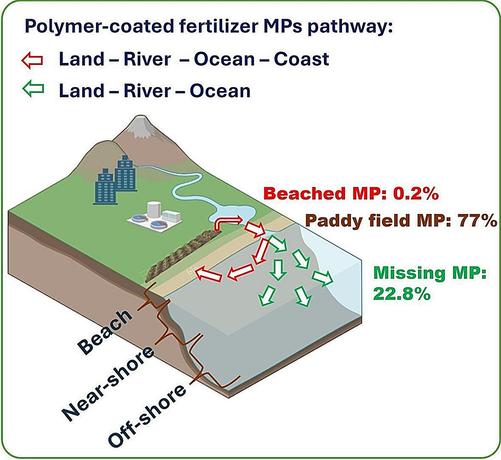

Scientists trace microplastics in fertilizer from fields to the beach #environment

2026-01-24 12:00:36

"Scientists trace microplastics in fertilizer from fields to the beach"

#Plastic #Plastics #Microplastics

2026-02-24 09:40:49

Philip Ball:

"There’s a broader issue at stake here too that pertains to the place of AI in scientific research. Too often now, we hear claims of how AI will replace human scientists and, in doing so, will solve our most pressing problems: curing all disease, solving climate change, figuring out how to conduct nuclear fusion. Such claims are never supported by experts in those fields—and that’s not because they want to convince us that they are indispensable after all. Rather, it i…

2026-03-25 20:21:39

Top climate scientist leaves NASA because telling the truth there has become difficult. Sad.

Looking forward to hearing from you in a new position, Kate!

https://www.nytimes.com/2026/03/25/climate/kate-marvel-nasa-resign.html

2026-03-25 22:51:44

Ohio State Pro Day: Will the Buckeyes have four players taken in the top 10 of NFL Draft?

https://www.cbssports.com/nfl/news/ohio-state-pro-day-buckeyes-top-10-players-nfl-draft…

2026-02-25 09:05:46

Q&A with Bostopia's Evan George on his TikTok and Instagram videos under @bostopianews, summarizing Boston local news while offering his own opinions (Hanaa' Tameez/Nieman Lab)

https://www.niemanlab.org/2026/02/bostopias-…

2026-02-25 19:54:33

🧬 Giant DNA viruses encode their own eukaryote-like translation machinery, researchers discover

#virus

2026-01-27 01:23:00

🇺🇦 #NowPlaying on #BBC6Music's #6MusicArtistCollection

The Smiths:

🎵 Reel Around The Fountain (Radio 1 John Peel Session, 18 May 1983)

#TheSmiths

https://akadungeonmaster.bandcamp.com/track/the-smiths-reel-around-the-fountain

https://open.spotify.com/track/5bSASu4W0HJx6CuG8rbRcA