2026-05-25 13:17:28

🇺🇦 #NowPlaying on KEXP's #Early

The Black Angels:

🎵 Young Men Dead

#TheBlackAngels

https://blackangels.bandcamp.com/track/young-men-dead

https://open.spotify.com/track/1cukr241u1vwUP36AmUfhO

2026-04-17 13:53:26

2026-04-14 17:25:43

2026-03-03 16:44:17

Technological dependence on American software and cloud services: an assessment of the economic consequences in Europe - Cigref

https://www.cigref.fr/technological-dependence-on-american-software-and-…

2026-04-01 20:44:01

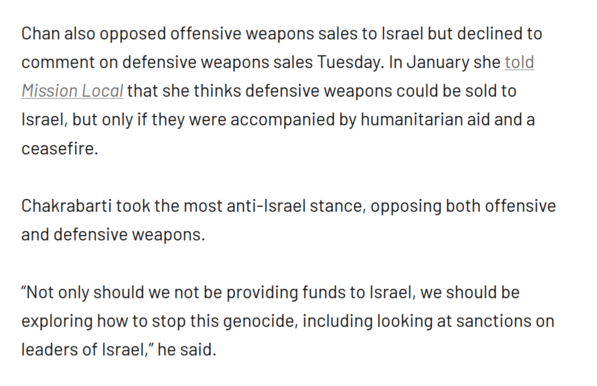

Not April Fool: okay, I think that clinches my vote for Chakrabarti over Chan unless there are major surprises. You can't be mealy-mouthed on enabling a genocidal regime. (Of course, Wiener is much worse on this issue, and I will vote for Chan in the general if it's those two who advance.)

https://

2026-05-21 07:51:39

🇺🇦 #NowPlaying on KEXP's #VarietyMix

Units:

🎵 Mission

#Units

https://open.spotify.com/track/36CecRFKByj5LQpDAZX2JG

2026-03-06 09:11:48

"The figures show this: a child born this morning in Britain can expect to be in good health only until they are 61. The last 20 years of their life will be blighted by illness: dodgy hearts, painful joints, an inability to get about. Our healthy life expectancy has been dropping for years; it is now the lowest since 2011, when records began."

This is a life and death story for the UK – so why is it being brushed under the carpet? | Aditya Chakrabortty

2026-05-03 17:38:40

34 🇲🇳 First TRACKS in MONGOLIA, TRULY INCREDIBLE ⁉️ - YouTube

#voyage

2026-03-18 02:24:28