2026-04-09 12:04:00

Xeno Arena: “No Man’s Sky” gets “Pokémon”-style battles

Collect creatures and then duel with them: “No Man’s Sky” is getting a new game mechanic.

https://www.heise.de/news/Xeno-A…

2026-03-10 04:03:57

#Rant

Rob Shaw is solidifying his position as the new Dean of Right Wing, anti-Worker Legislative Reporters in British Columbia.

With these gems, it's incredible that he has an education or goes to a doctor at all…

"The BCTF is typically one of the most militant unions, and quickly prone to job action."

BCTF Strikes since 2000:

2005

2014

phew! almost got to three!

Remember that this was at the time of a union-busting BC Liberal government that had to go to the Supreme Court of Canada to get told that they ripped up union contracts unconstitutionally and were *forced* to compensate many years later.

"The ratification is a win for a New Democrat government. And extraordinarily expensive for taxpayers, too."

“Extraordinarily expensive”? Really? How is a wage increase that is *barely* in line with inflation, after literally decades of below-inflation increases, “extraordinary”? I'll wait.

"Teachers can thank the BCGEU for turning what was an initial 3.5 per cent wage offer over two years by government, into a more than 12 per cent increase over four that is now forming the baseline for all other union deals."

Indeed! For those who can do math, that means 3% in each of 4 years instead of 3.5% over two. But thanks, Rob, for making it seem like 4 times more!

Thanks, BCGEU members, for your solidarity and perseverance! I have been on strike. It sucks HARD. But it was worth it and it works.

"The ratification by the BCTF means roughly half of the 450,000 public sector employees now have deals of some sort with the province. Two majors left on the table are nurses and doctors."

Oh no! Let’s not pay doctors and nurses! Surely they'll stay regardless in our incredibly overworked and under-resourced healthcare system!

Like, how does Mr. Shaw believe we are to stay competitive or attract people? Or is he just not worried about getting sick…

"The skyrocketing deficit has the NDP government inking sweetheart deals with organized labour on the one hand, while pledging to cut public sector jobs with the other."

Ya, we could have kept those public sector jobs if it weren't for fools like you who demanded governments cut taxes over the past 20 years instead of reasonable rises to... again…keep up with inflation and retain service!

It is a crappy balancing act that the NDP is doing and I do not like a lot of it. At the same time as Mr. Shaw complains about "sweetheart deals" for people in post-secondary, I am seeing historic cuts in that same sector. It's a bloodbath, actually. So the potential wage increases are going to be welcome, but feel pretty hollow as so many colleagues have left.

Rob Shaw would have had us all lose our jobs and take a pay cut at the next one for good measure.

Thanks but no thanks Rob, your world view sucks.

https://www.nsnews.com/economy-law-politics/rob-shaw-ndp-deal-with-bc-teachers-sets-another-costly-precedent-for-public-sector-talks-11966874

2026-06-08 20:57:39

The major rumors surrounding Apple's announcements at WWDC 2026:

The event's tagline, "All Systems Glow," is widely seen as a hint at Siri's new design.

Bloomberg's Mark Gurman has reported that Apple is rebuilding Siri as a full chatbot to compete with ChatGPT, Claude, and Gemini, complete with a dedicated app, Dynamic Island integration, and a new system-wide search interface wrapped in a dark, glowing aesthetic that matches the…

2026-03-10 14:01:08

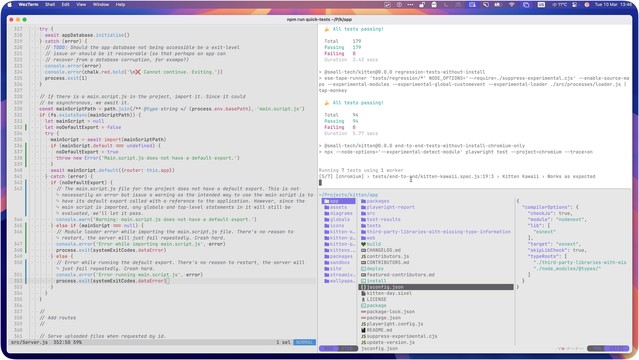

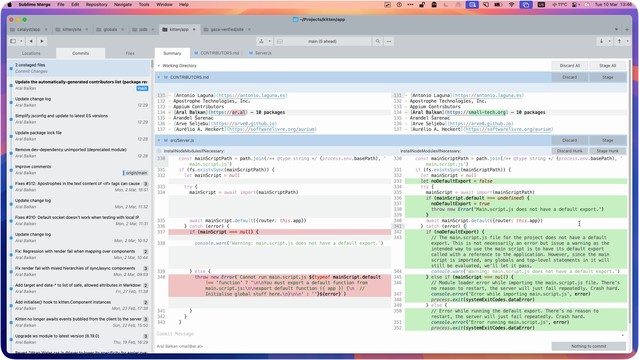

There’s life beyond VSCode… thought I’d share my dev setup:

• Main monitor: WezTerm¹ running in a three (sometimes four)-way split with Helix Editor² as my main editor, a terminal pane for general commands while working, and Yazi³ usually running in another for working with files/directories in a project.

• Other monitor: Sublime Merge⁴ always running full-screen so I can immediately see exactly what I’ve changed (in real time) as I’m working.

Others (not shown): Br…

2026-06-09 16:17:58

FYI, I strongly recommend calling even if you know your House rep already agrees.

My rep is Ilhan Omar, and I still called her office! It was a friendly call: “I know she’s already on the right side of this and there’s only so much she can do, but now is the time to fight like hell, use every tool she has….”

It’s important to pressure elected allies, too. The pressure •helps• them:

https://hachyderm.io/@inthehands/115867053108521520

2026-04-10 12:47:09

2026-04-10 08:13:00

2026-03-10 15:07:16

An asteroid that NASA used for target practice a few years ago was nudged into a slightly different route around the sun, findings that could help divert a future incoming killer space rock, scientists reported Friday.

It’s the first time that a celestial body’s orbit around the sun was deliberately changed.

The asteroid that NASA’s #Dart spacecraft slammed into was never a threat to Ea…

2026-03-10 18:04:45

Everywhere you look, the news isn’t merely bad. It’s terrible.

We’ve seen numerous examples in these last 13 months of Trump’s mendacity and malevolence.

Unfortunately, a lot of Americans will never see him that way.

There are those who adore him unconditionally, but beyond these dead-enders, there are others who know he’s not a good person but aren’t all that bothered by it.

That’s hard for millions of us to accept.

But I hope to God that these people are final…

2026-06-09 17:11:14

The S&P 500 tech index shed almost 1.7%.

Heavyweight Nvidia fell 1.2%, while Apple and Microsoft lost 3% and 1.1%, respectively.

AI stocks saw a sharp sell-off on Friday, after Broadcom's disappointing forecast fueled concerns about high valuations in the sector, particularly in chipmakers, which have rallied strongly this year.

Shares of chipmakers Intel, Broadcom and Micron Technology dropped between 1.7% and 2%.

The Philadelphia SE Semiconductor index fe…