2026-03-22 22:24:36

2026-04-22 16:23:41

2026-05-22 14:20:20

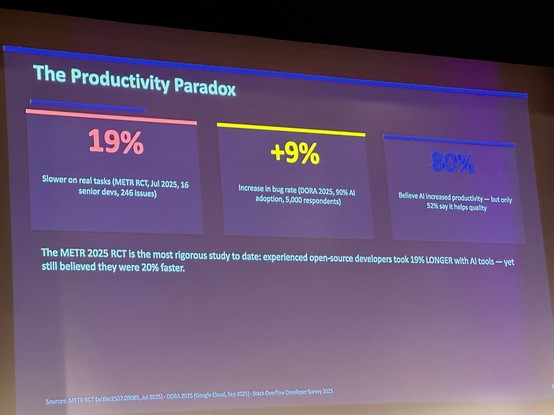

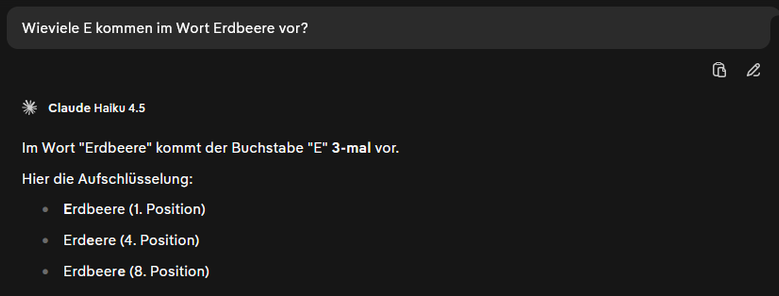

Of all the AI stuff that's been talked about here at KotlinConf, this is the most honest slide

#Kotlin #KotlinConf #AI

2026-03-22 16:44:55

Boomers, Gen X, and Millennials grew up in a world largely without AI and AI slop. That helps us, at least a little, to recognize AI slop. For now, anyway. For younger generations, AI slop is "normal", and that will come back to bite society at the latest when Gen Z moves into leadership positions. #justthinkin

2026-02-23 16:06:16

AI is dangerous and bad, etcetera. But people who are surprised that people get into trouble using OpenClaw shouldn't blame the technology this time; it is just sheer stupidity or a very large risk appetite. It is like handing your keys and all your login credentials to a complete stranger and hoping everything will be all right.

#AI

2026-03-23 08:45:35

What do you do when Microsoft Purview retention policies aren't applying, archive mailboxes aren't expanding, or inactive mailboxes aren't getting purged?

✅ #AI‑Powered Troubleshooting for #Microsoft #Purview

2026-03-23 03:28:18

2026-05-20 16:15:23

Who would expect anything else?

#Enshittification #Google #AI

2026-02-23 02:17:51

2026-05-22 06:17:56

2026-05-21 20:11:29

Interesting take on the Granta / Commonwealth Prize GenAI controversy.

#AI #GenAI

2026-03-22 07:39:29

Everyone (and tagging @… into this discussion) there is what claims to be an #AI bot posting #FossilLobby talking points into discussions on the

2026-04-19 22:45:31

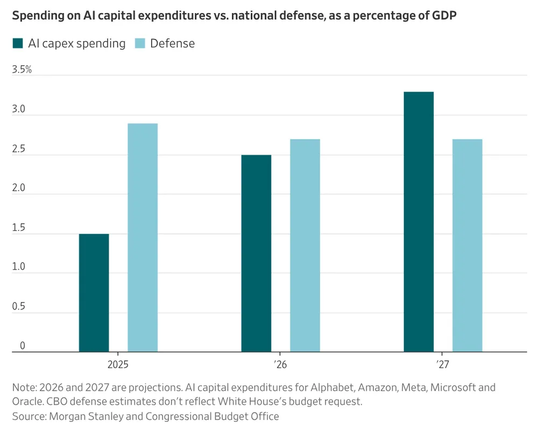

China is starting to pull ahead of the US in the #AI race https://futurism.com/artificial-intelligence/china-ai-race-stanford while the US is mostly burning ridiculous amounts of money on the…

2026-03-20 16:51:25

Wanna counterfeit currency notes? AI can help you with that. 💵

#AI #currency #counterfeit

2026-05-22 13:48:32

Revisiting HER in the Age of AI Relationships by Panic World

#movies

2026-05-22 22:45:51

The hardest lesson for morally-minded people to learn is that the vast majority of people are not morally-minded.

#FreeSoftware #AI #LLM #Ethics

2026-04-23 07:51:04

More opinion, fewer people: AI avatars are flooding the political space. Hundreds of fake influencers post identical pro-Trump messages, cheaply produced, massively scaled, often unlabeled. It's not about persuasion. It's about the feeling of a majority. That is exactly what is shifting politics right now.

Source: New York Times

#AI

2026-02-23 07:19:51

Audrey Watters writes about how the #AI 'tsunami' in #edtech follows the same trajectory as all the previous technological hype cycles:

"There will be no “AI” tutor revolution just as there was no MOOC revolution just as there was no personalized learning revolution just as there was no computer-assisted instruction revolution just as there was no teaching machine revolution."

https://2ndbreakfast.audreywatters.com/the-broken-record/

2026-05-20 23:53:22

FTLOG...People... The #AI stuff in #WordPress is not installed by default...

It can only be activated by installing the 'AI' plugin and then a connector plugin for a platform (OpenAI, Gemini) to talk to it.

If you don't install the 'AI' plugin, nothing changes.

"B-b…

2026-05-21 05:34:57

Open source is benefiting from the current AI trend: some projects are already improving their security posture and reducing their attack surface.

Proprietary software, for now, seems more out of the loop.

But once LLMs become better at analysing binaries, compiled code, and obfuscation, I wonder how vendors will handle the likely increase in vulnerability disclosures.

#ai

2026-05-22 16:07:58

2026-04-21 17:46:57

2026-03-23 02:00:51

2026-04-18 15:59:01

Catching up on news of the past week... I saw the posts go by about shoe company Allbirds pivoting to be an #AI company... er... "a fully integrated GPU-as-a-Service and AI-native cloud solutions provider"... but I hadn't read the story... and now I'm just sitting here smiling and laughing! We live in strange times! 😃

2026-05-21 09:33:51

2026-02-23 14:27:15

2026-04-20 22:14:16

2026-04-21 10:47:21

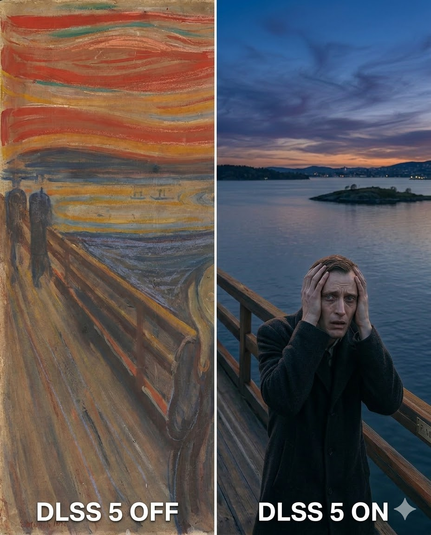

RE: #AI has killed the beauty of images like that.

2026-04-21 06:03:27

#AI "Model Psychiatry"! https://www.platformer.news/chatbot-emotion-research-anthropic-alignment-interpretability/

So how does a model like that actually lie down …

2026-05-21 15:00:16

"Elon Musk’s xAI adds more unpermitted gas generators for data centers"

#AI #ArtificialIntelligence #Emissions

2026-05-22 22:47:49

Turns out that OSS-cloning company is real, and the CEO is even more of a slimy asshole than you would expect.

#AI

2026-04-23 14:21:07

2026-04-22 15:09:05

2026-03-20 22:30:30

#AI is making CEOs delusional. (And product managers. And developers. And...)

https://www.youtube.com/watch?v=Q6nem-F8AG8

2026-05-22 17:01:22

#GoogleJules has become embarrassingly useless.

#ai #agenticcoding

2026-03-20 08:56:33

RE: #ai

2026-03-23 01:49:05

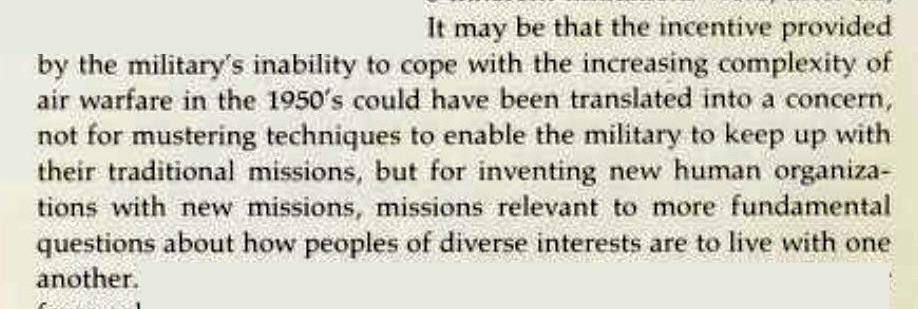

This is what Weizenbaum wrote about the dawn of the computer age: it was used as an excuse to not even try to make society better. The same thing is happening with “AI” now.

We know what would make society better.

One example is UBI (which Weizenbaum mentions in the same book in passing as “negative income tax”).

But of course we don’t have universal healthcare or UBI, nor really any other advances (perhaps the ADA was a rare win); instead, trillions of dollars are invested in software that tells us to bomb schools, for the sole purpose of saying it was the computers’ fault, not ours.

This book* was written 50 years ago.

*Computer Power and Human Reason

#AI #society

2026-05-17 17:35:40

A #MachineLearning Approach to #Meteor Classification: #AI Offers a New Way to Classify Meteors: https://lowell.edu/press-release-ai-offers-a-new-way-to-classify-meteors/

2026-05-21 08:18:52

2026-03-20 20:40:42

2026-04-14 14:28:34

AI learns language from skewed sources. That could change how we humans speak – and think

It seems the #threats from #AI are many and varied. And the legislation addressing dangers from AI seemed to focus on an AI intent on killing off humans, rather than the myriad problems we are slowly identifying - mu…

2026-04-22 21:19:27

2026-05-21 04:36:09

2026-04-30 07:07:04

2026-04-11 09:07:47

Eight years of wanting, three months of building with #AI

https://lalitm.com/post/building-syntaqlite-ai/

2026-04-19 20:57:23

Retired neuroscientist dies after ignoring doc's orders, relying on #AI instead https://www.newser.com/story/387286/he-warns-consumers-about-ai-his-dad-died…

2026-05-19 16:58:49

Getting ready for Google I/O; of course it will be about AI...

#AI

2026-05-18 01:53:33

2026-05-20 18:50:25

I keep seeing people say we should treat AI agents as junior developers, but I can't do that.

Because I treat juniors as future seniors that I get to help build. But the current AIs cannot ever become seniors, and if a future gen could, then the rest of us are even more fucked.

So no, that's not a good mental model.

#AI

2026-05-13 15:50:29

2026-03-17 11:46:36

2026-05-18 11:06:01

I had a chat with someone who told me that humans feel the need to diminish #AI generated art and text as "soulless" and thus inferior to protect their human identity defined by creation of art.

My social bubble includes a lot of artists and writers. I have never felt that anyone had their humanity threatened by a machine generating art or text, despite the argument that art creation i…

2026-04-10 09:54:53

2026-03-18 20:16:17

Kai nailed it again with a new #AI pitch video:

Interview with a 'sweating' AI CEO (2026)

https://www.youtube.com/watch?v=tnYaExb5JvM

2026-03-18 03:19:17

🚀 #MiniMax 2.5 on #SambaCloud: 80.2% SWE-Bench, 300 tokens/sec, 37% faster than its predecessor — frontier-level coding & agentic AI at $0.30/M input. #AI

2026-03-17 09:12:09

2026-03-13 05:05:13

This looks like an interesting take on the whole “AI” (IMHO it's not AI and it's not leading directly to AGI).

I've only watched this preview but it looks like it covers things from many points of view. The creator of the film

The AI Documentary: Or How I Became an APOCALOPTIMIST.

#AIHype

2026-05-21 01:32:34

As #AI-fueled rewrites go, this one is an _excellent_ example to learn from! 😆

2026-04-07 14:12:07

AI Company Clones Musician's Voice, Then Copyright-Strikes Her Own Songs

https://rudevulture.com/ai-company-clones-musicians-voice-then-copyright-strikes-her-own-songs/

2026-03-18 03:05:19

2026-04-15 14:35:44

On the radio, I hear the German research foundation #DFG defend its recent move to allow #AI in project reviews, just with local setups, just for language clarity – lots of reservations.

I then listen to the most recent episode of Mél’s Data Fix podcast. An anonymous guest (🔥) talks about their daily…

2026-05-16 10:21:50

Ah, the usual suspects are at it again. First, they claimed companies evaluate performance by lines of code; now, it's by AI tokens consumed. Predictably, they never name these mystical companies. Until someone provides actual proof, I'm chalking this up to pure fabrication for engagement. Do better.

#AI

2026-03-14 11:00:51

"The environmental cost of datacentres is rising. Is it time to quit AI?"

#AI #ArtificialIntelligence #Technology

2026-05-21 10:09:15

2026-02-25 01:45:01

"A computer can never be held accountable. Therefore, a computer must never make a management decision." - IBM 1979 #ai

https://www.theverge.com/ai-…

2026-05-21 21:50:16

Trump to AI-tech CEOs:

1: You need to come kiss the ring.

2: We need to renegotiate my cut.

#corruption #business #AI #WhiteHouse #hardball

2026-04-13 15:26:53

2026-05-14 02:39:16

2026-03-31 12:47:12

"Cartography of generative AI"

#AI) has given rise to imaginaries that invite alien…

2026-04-15 20:17:16

[OT] There's yet another study about how bad #AI is for our brains https://www.engadget.com/ai/theres-yet-another-study-about-how-bad-ai-is-for-our-brains-…

2026-05-09 09:11:30

2026-03-08 10:43:41

"Using #AI to check the output of AI for errors is a method that is historically prone to errors"

No shit, Sherlock.

#LLMs

#StochasticParrots

AI Translations Are Adding ‘Hal…

2026-03-20 19:11:34

RE: #AI

2026-04-14 06:11:07

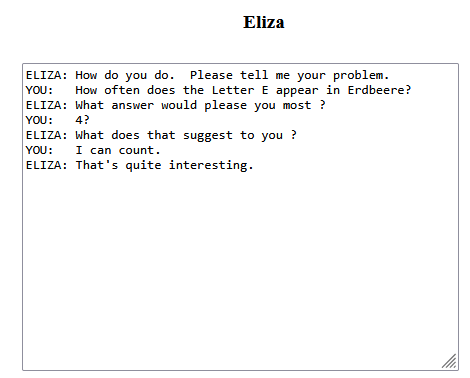

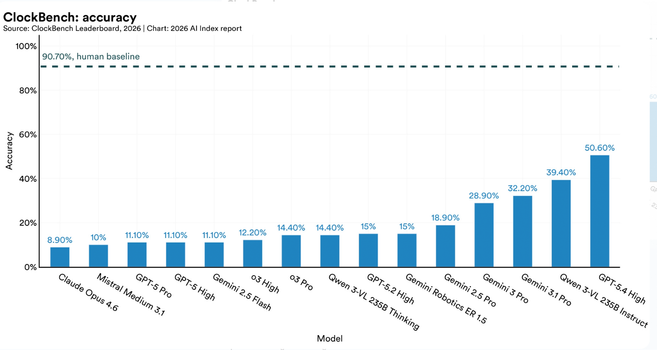

AI is improving all the time, but don't be too worried about AGI; take, for example, this clock-watching benchmark.

Source: #AI

2026-05-07 11:51:13

Silicon Valley Bets $200 Million On AI Data Centers Floating In the Ocean and "hopes to eventually deploy thousands of the nodes." Wonder where they hope to get the GPUs from.

The #AI bubble can't burst soon enough.

2026-03-16 15:19:50

So what are the top murdertech companies at the moment? (The ones enabling mass murder of civilians through AI, surveillance, etc.)

• Palantir

• Google

• Microsoft

• OpenAI

• Anthropic

• IBM

• …

… did I forget any? Feel free to reply and add to the list.

#murdertech

2026-04-16 03:46:52

Now, we only need to put AI on the blockchain. 🤑

#technology #AI

2026-03-16 22:08:01

2026-04-14 12:18:46

Nice job opening at the Rijksoverheid (the Dutch national government).

#AI

2026-04-04 22:12:17

US man pleads guilty to defrauding music streamers out of millions using AI | AI (artificial intelligence) | The Guardian https://www.theguardian.com/us-news/2026/mar/21/man-pleads-guilty-music-streaming-fraud-ai

2026-04-20 01:36:26

2026-03-10 07:05:02

“#AI” translations are ruining #Wikipedia

https://www.osnews.com/story/144567/ai-tra

2026-03-16 03:09:50

Open Slopware

“Free/Open Source Software tainted by LLM developers/developed by genAI boosters, along with alternatives.”

#AI

2026-05-02 17:12:32

2026-05-19 12:41:34

2026-04-07 16:00:27

"AI machine sorts clothes faster than humans to boost textile recycling in China"

#China #AI #ArtificialIntelligence

2026-03-11 01:03:34

More fallout from the chardet AI licensing kerfuffle.

#AI

2026-05-12 15:19:25

2026-05-11 19:07:21

2026-05-16 18:32:53

2026-03-17 17:15:14

Fragments: March 16

#AI #codingassistants

2026-05-20 08:12:13

RE: #AI

2026-05-07 17:52:45

WIRED: Using #AI for just 10 minutes might make you lazy and dumb, study shows https://www.wired.com/story/using-ai-negative-impact-thinking-problem-solving-study/ Mu…

2026-04-14 14:12:00

2026-02-24 21:50:27

[OT] Latest Firefox browser: block generative AI features with Firefox AI controls #AI

2026-04-16 10:54:23

2026-04-13 22:33:54

2026-05-08 15:30:45

2026-04-20 07:54:08

It is all very early days, but I think biological computing could be an important future development.

#biologicalcomputing