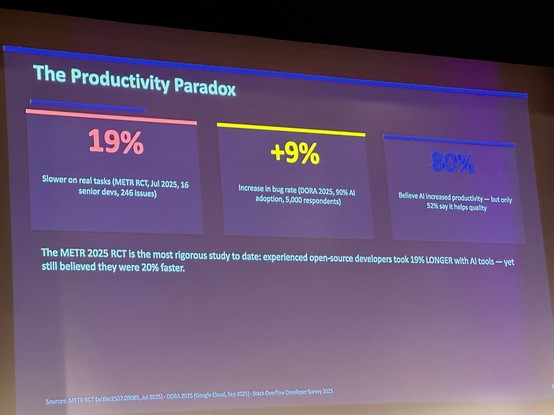

The whole #LLM ROI thing reveals something interesting. It's basically impossible to figure out the ROI of an LLM. That makes it impossible for bean counters to make a comparison between human work and LLM work, or human work without an LLM and LLM-assisted work, to determine if the incredibly high price is worth it. But it's also impossible because you can't measure the ROI of a human, especially for skilled labor.

You can't measure the ROI of a human, because managers have no idea what people do. There's an eternally expanding amount of work designed to address this problem. But no matter how closely people are surveilled, interrogated, or analyzed, there's never any real answer.

I've talked in the past about this in relation to medical care. One of the dirty secrets of hospitals is that they have no way to figure out how much an individual treatment costs. It's easy to understand at scale. You can know exactly how much something costs society. You can even identify patterns, using public health models, and decrease costs for society by trying to get people to avoid risky behavior (stop smoking, use protection during sex, etc.). But it is absolutely not possible, at all, in any way, to figure out how much a single visit costs. This is similar to the problem of predicting climate change vs predicting the weather tomorrow in Amsterdam at 15:00. One is possible, the other is simply not.

But what is becoming painfully clear now is that this is true *everywhere*. It's trivial to know how much an industry costs. It's possible to figure out its ROI for society. It is not possible to figure out how much value any individual worker provides. LLM ROI and cost comparison is an instance of this larger problem.

This is a problem for capitalism because it shows that the fundamental assumptions behind capitalism, that product value and labor value are quantifiable, that people can actually make comparisons between competing products, etc., are completely bullshit. The capitalist apologetics that makes up so much of economics, the lies that are told that hold this system together, are crumbling before our eyes.

If you make a lot of money, it's because you've been lucky. You have the right social networks, you have become good at convincing people to give you money. There is absolutely no way to connect that to actually providing value to society. If you make a lot of money, internalize that. Understand that you are not special, and things can change. If you don't make a lot of money, it's not because you don't provide value. Don't forget that. The system is a lie built to destroy you. Don't let it.

The ideology is sick, something something time of monsters and all that, we are together in this dying machine. We need to understand the lies. Your value can never be quantified. The way we have always figured out how to do the right thing for each other is through each other. Social connection has always guided us. But now the most socially disconnected people on the planet have hijacked the system. They direct the resources of the world, and game the system to avoid personal responsibility.

We have to build a system where everyone is accountable. We can't use abstract numbers and lies to figure things out for us. We have to build systems around people and accountability. There is no other solution.