2026-04-23 19:03:17

The Most AI-Exposed Counties in America Are Not Where You Think

https://jakeprokopets.substack.com/p/why-the-most-ai-exposed-counties

Here is a county-level AI displacement model across all 3,204 US counties.

The Top 5 most exposed counties are a…

2026-02-24 15:30:54

Kraken launches what it claims are the first regulated perpetual futures contracts based on tokenized stocks, available to non-US users and trading 24/7 (Krisztian Sandor/CoinDesk)

https://www.coindesk.com/business/2026/02/

2026-04-23 14:45:28

Contextualizing the Proposed SECURE Data Act in the State Privacy Landscape

https://fpf.org/blog/contextualizing-the-proposed-secure-data-act-in-the-state-privacy-landscape/

@…

2026-04-24 16:00:04

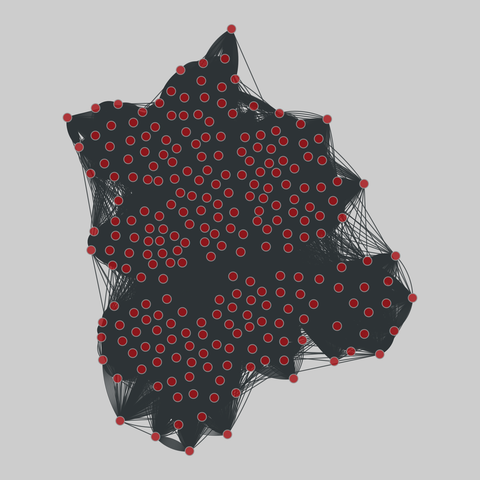

sp_primary_school: Primary school dynamic contacts (2009)

Two temporal networks of contacts among students and teachers at a primary school in Lyon, France, on consecutive days in October 2009. Each network accumulates all contacts over the course of a single day; contacts were sampled at 20-second intervals.

This network has 242 nodes and 125773 edges.

Tags: Social, Offline, Weighted, Temporal, Metadata
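The per-day accumulation described above can be sketched roughly as follows. This is a minimal illustration, not the dataset's actual processing pipeline: the `(t, i, j)` record layout, the assumption that `t` counts seconds from the start of the first day, and the function name `accumulate_daily` are all hypothetical, and the toy records are made up.

```python
from collections import Counter

SECONDS_PER_DAY = 24 * 60 * 60
SAMPLE_INTERVAL = 20  # seconds between samples, as stated in the description


def accumulate_daily(contacts):
    """Group (t, i, j) contact records by day.

    Each edge weight is the number of sampled intervals in which the
    pair was in contact on that day. Assumes t is in seconds measured
    from the start of the first day (an assumption for this sketch).
    """
    days = {}
    for t, i, j in contacts:
        day = t // SECONDS_PER_DAY
        edge = (min(i, j), max(i, j))  # treat contacts as undirected
        days.setdefault(day, Counter())[edge] += 1
    return days


# Made-up records for illustration only (not taken from the dataset).
toy_contacts = [
    (0, 1, 2), (20, 1, 2), (40, 2, 1), (60, 3, 1),
    (86400, 1, 2), (86420, 2, 3),
]
daily = accumulate_daily(toy_contacts)

# Contact duration in seconds for one pair on day 0.
seconds_1_2 = daily[0][(1, 2)] * SAMPLE_INTERVAL
```

Multiplying a weight by `SAMPLE_INTERVAL` gives an approximate contact duration in seconds, which is one common way to read weights in this kind of network.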

2026-03-24 08:19:44

Liquid ass: now with 100% more context menu.

#apple

2026-02-25 10:45:11

Untied Ulysses: Memory-Efficient Context Parallelism via Headwise Chunking

Ravi Ghadia, Maksim Abraham, Sergei Vorobyov, Max Ryabinin

https://arxiv.org/abs/2602.21196 https://arxiv.org/pdf/2602.21196 https://arxiv.org/html/2602.21196

arXiv:2602.21196v1 Announce Type: new

Abstract: Efficiently processing long sequences with Transformer models usually requires splitting the computations across accelerators via context parallelism. The dominant approaches in this family of methods, such as Ring Attention or DeepSpeed Ulysses, enable scaling over the context dimension but do not focus on memory efficiency, which limits the sequence lengths they can support. More advanced techniques, such as Fully Pipelined Distributed Transformer or activation offloading, can further extend the possible context length at the cost of training throughput. In this paper, we present UPipe, a simple yet effective context parallelism technique that performs fine-grained chunking at the attention head level. This technique significantly reduces the activation memory usage of self-attention, breaking the activation memory barrier and unlocking much longer context lengths. Our approach reduces intermediate tensor memory usage in the attention layer by as much as 87.5% for 32B Transformers, while matching previous context parallelism techniques in terms of training speed. UPipe can support the context length of 5M tokens when training Llama3-8B on a single 8×H100 node, improving upon prior methods by over 25%.
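To make the general idea of chunking at the attention head level concrete, here is a minimal single-process sketch. It is not UPipe and involves no context parallelism or accelerators; it only illustrates, under toy assumptions, that processing a few heads at a time bounds how many intermediate score entries are alive at once while leaving the output unchanged. All function names and sizes are hypothetical.

```python
import math
import random


def head_probs(q, k):
    """Materialize the full [seq][seq] softmax score matrix for one head."""
    scale = 1.0 / math.sqrt(len(q[0]))
    probs = []
    for qi in q:
        row = [scale * sum(a * b for a, b in zip(qi, kj)) for kj in k]
        m = max(row)  # subtract the max for numerical stability
        exps = [math.exp(x - m) for x in row]
        total = sum(exps)
        probs.append([e / total for e in exps])
    return probs


def apply_probs(p, v):
    """Weighted sum of value rows; the result has shape [seq][dim]."""
    dim = len(v[0])
    return [[sum(w * vj[d] for w, vj in zip(row, v)) for d in range(dim)]
            for row in p]


def attention_by_head_chunks(Q, K, V, heads_per_chunk):
    """Attention over [heads][seq][dim] inputs, a few heads at a time.

    Returns the per-head outputs and the peak number of score entries
    held simultaneously (a stand-in for intermediate tensor memory).
    """
    outputs, peak = [], 0
    for start in range(0, len(Q), heads_per_chunk):
        stop = start + heads_per_chunk
        # Score matrices exist only for the heads in the current chunk.
        probs = [head_probs(q, k) for q, k in zip(Q[start:stop], K[start:stop])]
        peak = max(peak, sum(len(p) * len(p[0]) for p in probs))
        outputs.extend(apply_probs(p, v) for p, v in zip(probs, V[start:stop]))
    return outputs, peak


random.seed(0)
H, S, D = 4, 6, 3  # toy sizes: heads, sequence length, head dimension


def rand_tensor():
    return [[[random.gauss(0, 1) for _ in range(D)] for _ in range(S)]
            for _ in range(H)]


Q, K, V = rand_tensor(), rand_tensor(), rand_tensor()
full_out, full_peak = attention_by_head_chunks(Q, K, V, heads_per_chunk=H)
chunk_out, chunk_peak = attention_by_head_chunks(Q, K, V, heads_per_chunk=1)
```

With these toy sizes, handling all heads together holds 4 × 6 × 6 = 144 score entries at once, while handling one head at a time holds 36, and both runs produce the same outputs. The trade-off in a real system is between chunk size and throughput, which this sketch does not model.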

toXiv_bot_toot

2026-02-24 15:06:57

Sources: Meta aims to enter the stablecoin space in H2, with plans to integrate a vendor to administer stablecoin-backed payments and implement a new wallet (Ian Allison/CoinDesk)

https://www.coindesk.com/business/2026/02/

2026-03-24 17:01:57

Circle shares fell as much as 18%, and Coinbase dropped about 8%, after a draft of the US Clarity Act raised the prospect of strict limits on stablecoin yield (CoinDesk)

https://www.coindesk.com/markets/2026/03/2

2026-02-25 00:00:05

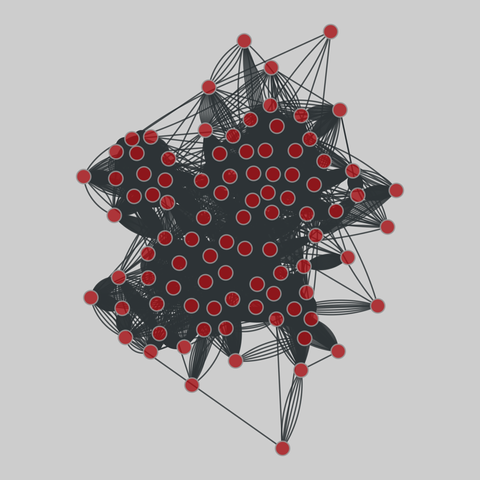

sp_office: Workplace contacts (2013)

A temporal network of contacts between individuals, measured in an office building in France, from June 24 to July 3, 2013.

This network has 92 nodes and 9827 edges.

Tags: Social, Offline, Weighted, Temporal, Metadata

https://networks.skewed.de/net/sp_offi