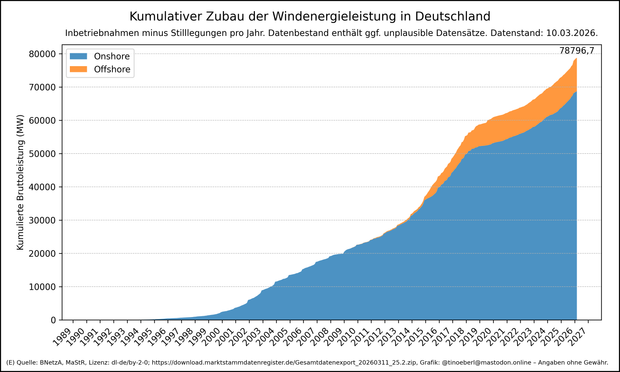

2026-03-15 14:15:07

Cumulative additions of #Windenergieleistung (wind power capacity) in #Deutschland (Germany), as of 10 March 2026.

Totals of commissionings minus decommissionings per year.

The dataset may contain implausible records.

👉 Further reading: Why
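The yearly figures described in this post (commissionings minus decommissionings per year, accumulated over time) can be sketched in Python as follows. This is an illustration only: the input format (dicts mapping year to capacity in MW) and the function name `cumulative_net_additions` are hypothetical, the numbers are toy values rather than real data, and the sketch does not filter the implausible records the post warns about.

```python
from itertools import accumulate


def cumulative_net_additions(commissioned_mw, decommissioned_mw):
    """Running total of net capacity additions (hypothetical helper).

    Both arguments map year -> capacity in MW. For each year,
    net = commissioned - decommissioned; the result maps each year
    to the cumulative sum of those net values in year order.
    """
    years = sorted(set(commissioned_mw) | set(decommissioned_mw))
    net = [commissioned_mw.get(y, 0.0) - decommissioned_mw.get(y, 0.0) for y in years]
    return dict(zip(years, accumulate(net)))


# Toy numbers for illustration only, not real data
added = {2021: 100.0, 2022: 150.0, 2023: 200.0}
removed = {2022: 30.0, 2023: 50.0}
print(cumulative_net_additions(added, removed))
# → {2021: 100.0, 2022: 220.0, 2023: 370.0}
```

A year that only has decommissionings contributes a negative net value, so the cumulative curve can dip as well as rise.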

2026-02-08 19:06:40

“4% of GitHub public commits are being authored by Claude Code right now. At the current trajectory, we believe that Claude Code will be 20% of all daily commits by the end of 2026. While you blinked, AI consumed all of software development.”

Must-read article, even if you disagree with the analysis.

2026-04-09 08:38:48

Train cancelled:

- KOHO departing Melun 10:48, arriving Montereau 11:25

Risk of crowding on board the next train.

Next train running:

- KOHO departing Melun 11:48, arriving Montereau 12:25

For more information about this disruption, see the line's X feed.

Reason: difficulty getting the driver to the train.

🤖 09/04 10:38

2026-04-06 18:43:24

I love this premise:

"It has been 62 years since the last Federation contact with the planet."

It sounds real, gloomy, and plausible on the grander scale of the Star Trek universe, compared to the usual warping around the Alpha Quadrant.

It's from TNG 1x14 ("Angel One"). Memory Alpha:

https://

2026-04-09 08:44:05

Train cancelled:

- ZOHA departing Montereau 12:29, arriving Melun 13:05

Risk of crowding on board the next train.

Next train running:

- ZOHA departing Montereau 13:29, arriving Melun 14:05

For more information about this disruption, see the line's X feed.

Reason: difficulty getting the driver to the train.

🤖 09/04 10:44

2026-03-31 11:13:03

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[4/5]:

- Retrieving Climate Change Disinformation by Narrative

Upravitelev, Solopova, Jakob, Sahitaj, Möller, Schmitt

https://arxiv.org/abs/2603.22015 https://mastoxiv.page/@arXiv_csCL_bot/116283633674519408

- PaperVoyager : Building Interactive Web with Visual Language Models

Dasen Dai, Biao Wu, Meng Fang, Wenhao Wang

https://arxiv.org/abs/2603.22999 https://mastoxiv.page/@arXiv_csCL_bot/116289015432093128

- Continual Robot Skill and Task Learning via Dialogue

Weiwei Gu, Suresh Kondepudi, Anmol Gupta, Lixiao Huang, Nakul Gopalan

https://arxiv.org/abs/2409.03166 https://mastoxiv.page/@arXiv_csRO_bot/113089412115632702

- Shifting Perspectives: Steering Vectors for Robust Bias Mitigation in LLMs

Zara Siddique, Irtaza Khalid, Liam D. Turner, Luis Espinosa-Anke

https://arxiv.org/abs/2503.05371 https://mastoxiv.page/@arXiv_csLG_bot/114136994263573386

- SkillFlow: Scalable and Efficient Agent Skill Retrieval System

Fangzhou Li, Pagkratios Tagkopoulos, Ilias Tagkopoulos

https://arxiv.org/abs/2504.06188 https://mastoxiv.page/@arXiv_csAI_bot/114306773220502860

- Large Language Models for Computer-Aided Design: A Survey

Licheng Zhang, Bach Le, Naveed Akhtar, Siew-Kei Lam, Tuan Ngo

https://arxiv.org/abs/2505.08137 https://mastoxiv.page/@arXiv_csLG_bot/114504972217393639

- Structured Agent Distillation for Large Language Model

Liu, Kong, Dong, Yang, Li, Tang, Yuan, Niu, Zhang, Zhao, Lin, Huang, Wang

https://arxiv.org/abs/2505.13820 https://mastoxiv.page/@arXiv_csLG_bot/114544636506163783

- VLM-3R: Vision-Language Models Augmented with Instruction-Aligned 3D Reconstruction

Fan, Zhang, Li, Zhang, Chen, Hu, Wang, Qu, Zhou, Wang, Yan, Xu, Theiss, Chen, Li, Tu, Wang, Ranjan

https://arxiv.org/abs/2505.20279 https://mastoxiv.page/@arXiv_csCV_bot/114578817567171199

- Learning to Diagnose Privately: DP-Powered LLMs for Radiology Report Classification

Bhattacharjee, Tian, Rubin, Lo, Merchant, Hanson, Gounley, Tandon

https://arxiv.org/abs/2506.04450 https://mastoxiv.page/@arXiv_csCR_bot/114635189706505648

- L-MARS: Legal Multi-Agent Workflow with Orchestrated Reasoning and Agentic Search

Ziqi Wang, Boqin Yuan

https://arxiv.org/abs/2509.00761 https://mastoxiv.page/@arXiv_csAI_bot/115140304787881576

- Your Models Have Thought Enough: Training Large Reasoning Models to Stop Overthinking

Han, Huang, Liao, Jiang, Lu, Zhao, Wang, Zhou, Jiang, Liang, Zhou, Sun, Yu, Xiao

https://arxiv.org/abs/2509.23392 https://mastoxiv.page/@arXiv_csAI_bot/115293169353788311

- Person-Centric Annotations of LAION-400M: Auditing Bias and Its Transfer to Models

Leander Girrbach, Stephan Alaniz, Genevieve Smith, Trevor Darrell, Zeynep Akata

https://arxiv.org/abs/2510.03721 https://mastoxiv.page/@arXiv_csCV_bot/115332690912652473

- Agentic Context Engineering: Evolving Contexts for Self-Improving Language Models

Zhang, Hu, Upasani, Ma, Hong, Kamanuru, Rainton, Wu, Ji, Li, Thakker, Zou, Olukotun

https://arxiv.org/abs/2510.04618 https://mastoxiv.page/@arXiv_csLG_bot/115332999596603375

- Mitigating Premature Exploitation in Particle-based Monte Carlo for Inference-Time Scaling

Giannone, Xu, Nayak, Awhad, Sudalairaj, Xu, Srivastava

https://arxiv.org/abs/2510.05825 https://mastoxiv.page/@arXiv_csLG_bot/115338159696513898

- Complete asymptotic type-token relationship for growing complex systems with inverse power-law co...

Pablo Rosillo-Rodes, Laurent Hébert-Dufresne, Peter Sheridan Dodds

https://arxiv.org/abs/2511.02069 https://mastoxiv.page/@arXiv_physicssocph_bot/115496283627867809

- ViPRA: Video Prediction for Robot Actions

Sandeep Routray, Hengkai Pan, Unnat Jain, Shikhar Bahl, Deepak Pathak

https://arxiv.org/abs/2511.07732 https://mastoxiv.page/@arXiv_csRO_bot/115535941444003568

- AISAC: An Integrated multi-agent System for Transparent, Retrieval-Grounded Scientific Assistance

Chandrachur Bhattacharya, Sibendu Som

https://arxiv.org/abs/2511.14043

- VideoARM: Agentic Reasoning over Hierarchical Memory for Long-Form Video Understanding

Yufei Yin, Qianke Meng, Minghao Chen, Jiajun Ding, Zhenwei Shao, Zhou Yu

https://arxiv.org/abs/2512.12360 https://mastoxiv.page/@arXiv_csCV_bot/115729238732682644

- RadImageNet-VQA: A Large-Scale CT and MRI Dataset for Radiologic Visual Question Answering

Léo Butsanets, Charles Corbière, Julien Khlaut, Pierre Manceron, Corentin Dancette

https://arxiv.org/abs/2512.17396 https://mastoxiv.page/@arXiv_csCV_bot/115762705911757243

- Measuring all the noises of LLM Evals

Sida Wang

https://arxiv.org/abs/2512.21326 https://mastoxiv.page/@arXiv_csLG_bot/115779597137785637

toXiv_bot_toot

2026-02-04 07:41:57

ProphetKV: User-Query-Driven Selective Recomputation for Efficient KV Cache Reuse in Retrieval-Augmented Generation

Shihao Wang, Jiahao Chen, Yanqi Pan, Hao Huang, Yichen Hao, Xiangyu Zou, Wen Xia, Wentao Zhang, Haitao Wang, Junhong Li, Chongyang Qiu, Pengfei Wang

https://arxiv.org/abs/2602.02579 https://arxiv.org/pdf/2602.02579 https://arxiv.org/html/2602.02579

arXiv:2602.02579v1 Announce Type: new

Abstract: The prefill stage of long-context Retrieval-Augmented Generation (RAG) is severely bottlenecked by computational overhead. To mitigate this, recent methods assemble pre-calculated KV caches of retrieved RAG documents (by a user query) and reprocess selected tokens to recover cross-attention between these pre-calculated KV caches. However, we identify a fundamental "crowding-out effect" in current token selection criteria: globally salient but user-query-irrelevant tokens saturate the limited recomputation budget, displacing the tokens truly essential for answering the user query and degrading inference accuracy.

We propose ProphetKV, a user-query-driven KV Cache reuse method for RAG scenarios. ProphetKV dynamically prioritizes tokens based on their semantic relevance to the user query and employs a dual-stage recomputation pipeline to fuse layer-wise attention metrics into a high-utility set. By ensuring the recomputation budget is dedicated to bridging the informational gap between retrieved context and the user query, ProphetKV achieves high-fidelity attention recovery with minimal overhead. Our extensive evaluation results show that ProphetKV retains 96%-101% of full-prefill accuracy with only a 20% recomputation ratio, while achieving accuracy improvements of 8.8%-24.9% on RULER and 18.6%-50.9% on LongBench over the state-of-the-art approaches (e.g., CacheBlend, EPIC, and KVShare).
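The core idea in this abstract, spending a fixed recomputation budget on query-relevant tokens instead of letting globally salient tokens crowd them out, can be illustrated with a toy Python sketch. This is not ProphetKV's algorithm: it omits the dual-stage pipeline and the layer-wise attention fusion, and it assumes each cached token is represented by a single vector scored by cosine similarity against a query vector. The functions `select_by_query` and `select_by_norm` are hypothetical, and vector norm is only a crude query-agnostic stand-in for "global saliency", not the criterion used by CacheBlend, EPIC, or KVShare.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def budget_for(n_tokens, ratio):
    """Number of tokens the recomputation budget allows (at least one)."""
    return max(1, int(n_tokens * ratio))


def top_k(scores, k):
    """Indices of the k highest scores, returned in original token order."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


def select_by_norm(token_vecs, ratio):
    """Query-agnostic baseline (hypothetical): pick the tokens with the largest norm."""
    scores = [math.sqrt(sum(x * x for x in v)) for v in token_vecs]
    return top_k(scores, budget_for(len(token_vecs), ratio))


def select_by_query(token_vecs, query_vec, ratio):
    """Query-driven selection (hypothetical): pick the tokens most similar to the query."""
    scores = [cosine(v, query_vec) for v in token_vecs]
    return top_k(scores, budget_for(len(token_vecs), ratio))


# Toy vectors: tokens 0 and 3 are large but point away from the query.
tokens = [[10.0, 0.0], [0.0, 1.0], [0.1, 1.0], [9.0, 0.5], [1.0, 1.0]]
query = [0.0, 1.0]
print(select_by_norm(tokens, ratio=0.4))          # → [0, 3]
print(select_by_query(tokens, query, ratio=0.4))  # → [1, 2]
```

With a 40% budget over five toy tokens, the norm baseline spends both slots on the two large off-query tokens, a miniature version of the "crowding-out effect" the abstract describes, while the query-driven version picks the two tokens aligned with the query.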


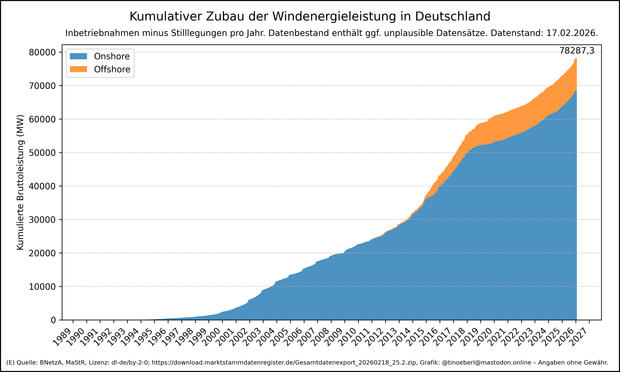

2026-02-21 09:10:02

Cumulative additions of #Windenergieleistung (wind power capacity) in #Deutschland (Germany), as of 17 February 2026.

Totals of commissionings minus decommissionings per year.

The dataset may contain implausible records.

👉 Further reading: Why

2026-02-04 17:26:49

Expect the journey to take about 15 minutes longer for the following train:

- KUMO departing Paris Gare de Lyon 17:44, arriving Montereau 18:44

For more information about this disruption, see the line's X feed.

Reason: safety alert raised by the driver.

🤖 04/02 18:26

2026-03-31 11:12:53

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[3/5]:

- Can Small Language Models Handle Context-Summarized Multi-Turn Customer-Service QA? A Synthetic D...

Lakshan Cooray, Deshan Sumanathilaka, Pattigadapa Venkatesh Raju

https://arxiv.org/abs/2602.00665 https://mastoxiv.page/@arXiv_csCL_bot/116006686092324902

- SEAD: Self-Evolving Agent for Multi-Turn Service Dialogue

Dai, Gao, Zhang, Wang, Luo, Wang, Wang, Wu, Wang

https://arxiv.org/abs/2602.03548

- OmniRAG-Agent: Agentic Omnimodal Reasoning for Low-Resource Long Audio-Video Question Answering

Yifan Zhu, Xinyu Mu, Tao Feng, Zhonghong Ou, Yuning Gong, Haoran Luo

https://arxiv.org/abs/2602.03707

- GreekMMLU: A Native-Sourced Multitask Benchmark for Evaluating Language Models in Greek

Zhang, Konomi, Xypolopoulos, Divriotis, Skianis, Nikolentzos, Stamou, Shang, Vazirgiannis

https://arxiv.org/abs/2602.05150

- Using LLMs for Knowledge Component-level Correctness Labeling in Open-ended Coding Problems

Zhangqi Duan, Arnav Kankaria, Dhruv Kartik, Andrew Lan

https://arxiv.org/abs/2602.17542 https://mastoxiv.page/@arXiv_csCL_bot/116102514058414603

- MetaState: Persistent Working Memory Enhances Reasoning in Discrete Diffusion Language Models

Kejing Xia, Mingzhe Li, Lixuan Wei, Zhenbang Du, Xiangchi Yuan, Dachuan Shi, Qirui Jin, Wenke Lee

https://arxiv.org/abs/2603.01331 https://mastoxiv.page/@arXiv_csCL_bot/116165314672421581

- A Browser-based Open Source Assistant for Multimodal Content Verification

Milner, Foster, Karmakharm, Razuvayevskaya, Roberts, Porcellini, Teyssou, Bontcheva

https://arxiv.org/abs/2603.02842 https://mastoxiv.page/@arXiv_csCL_bot/116170368271004704

- Nwāchā Munā: A Devanagari Speech Corpus and Proximal Transfer Benchmark for Nepal Bhasha ASR

Sharma, Shrestha, Poudel, Tiwari, Shrestha, Ghimire, Bal

https://arxiv.org/abs/2603.07554 https://mastoxiv.page/@arXiv_csCL_bot/116204797995674104

- Model Merging in the Era of Large Language Models: Methods, Applications, and Future Directions

Mingyang Song, Mao Zheng

https://arxiv.org/abs/2603.09938 https://mastoxiv.page/@arXiv_csCL_bot/116210189810004206

- AgentDrift: Unsafe Recommendation Drift Under Tool Corruption Hidden by Ranking Metrics in LLM Ag...

Zekun Wu, Adriano Koshiyama, Sahan Bulathwela, Maria Perez-Ortiz

https://arxiv.org/abs/2603.12564 https://mastoxiv.page/@arXiv_csCL_bot/116237800898328349

- GhanaNLP Parallel Corpora: Comprehensive Multilingual Resources for Low-Resource Ghanaian Languages

Gyamfi, Azunre, Moore, Budu, Asare, Owusu, Asiamah

https://arxiv.org/abs/2603.13793 https://mastoxiv.page/@arXiv_csCL_bot/116243544688031749

- sebis at ArchEHR-QA 2026: How Much Can You Do Locally? Evaluating Grounded EHR QA on a Single Not...

Ibrahim Ebrar Yurt, Fabian Karl, Tejaswi Choppa, Florian Matthes

https://arxiv.org/abs/2603.13962 https://mastoxiv.page/@arXiv_csCL_bot/116243646346563497

- ExPosST: Explicit Positioning with Adaptive Masking for LLM-Based Simultaneous Machine Translation

Yuzhe Shang, Pengzhi Gao, Yazheng Yang, Jiayao Ma, Wei Liu, Jian Luan, Jinsong Su

https://arxiv.org/abs/2603.14903 https://mastoxiv.page/@arXiv_csCL_bot/116243711232778054

- BanglaSocialBench: A Benchmark for Evaluating Sociopragmatic and Cultural Alignment of LLMs in Ba...

Tanvir Ahmed Sijan, S. M Golam Rifat, Pankaj Chowdhury Partha, Md. Tanjeed Islam, Md. Musfique Anwar

https://arxiv.org/abs/2603.15949 https://mastoxiv.page/@arXiv_csCL_bot/116249122231759766

- EngGPT2: Sovereign, Efficient and Open Intelligence

G. Ciarfaglia, et al.

https://arxiv.org/abs/2603.16430 https://mastoxiv.page/@arXiv_csCL_bot/116249228411487178

- HypeLoRA: Hyper-Network-Generated LoRA Adapters for Calibrated Language Model Fine-Tuning

Bartosz Trojan, Filip Gębala

https://arxiv.org/abs/2603.19278 https://mastoxiv.page/@arXiv_csCL_bot/116277612915482857

- Automatic Analysis of Collaboration Through Human Conversational Data Resources: A Review

Yi Yu, Maria Boritchev, Chloé Clavel

https://arxiv.org/abs/2603.19292 https://mastoxiv.page/@arXiv_csCL_bot/116277620779254916

- Alignment Whack-a-Mole : Finetuning Activates Verbatim Recall of Copyrighted Books in Large Langu...

Xinyue Liu, Niloofar Mireshghallah, Jane C. Ginsburg, Tuhin Chakrabarty

https://arxiv.org/abs/2603.20957 https://mastoxiv.page/@arXiv_csCL_bot/116283538317671552

- KG-Hopper: Empowering Compact Open LLMs with Knowledge Graph Reasoning via Reinforcement Learning

Shuai Wang, Yinan Yu

https://arxiv.org/abs/2603.21440 https://mastoxiv.page/@arXiv_csCL_bot/116283595007808076
