2025-08-05 07:59:42

2025-07-04 20:14:31

Long; central Massachusetts colonial history

Today on a whim I visited a site in Massachusetts marked as "Huguenot Fort Ruins" on OpenStreetMap. I drove out with my 4-year-old through increasingly rural central Massachusetts forests & fields to end up on a narrow street near the top of a hill beside a small field. The neighboring houses had huge lawns, some with tractors.

Appropriately for this day and this moment in history, the history of the site turns out to be a microcosm of America. Across the field beyond a cross-shaped stone memorial stood an info board with a few diagrams and some text. The text of the main sign (including typos/misspellings) read:

"""

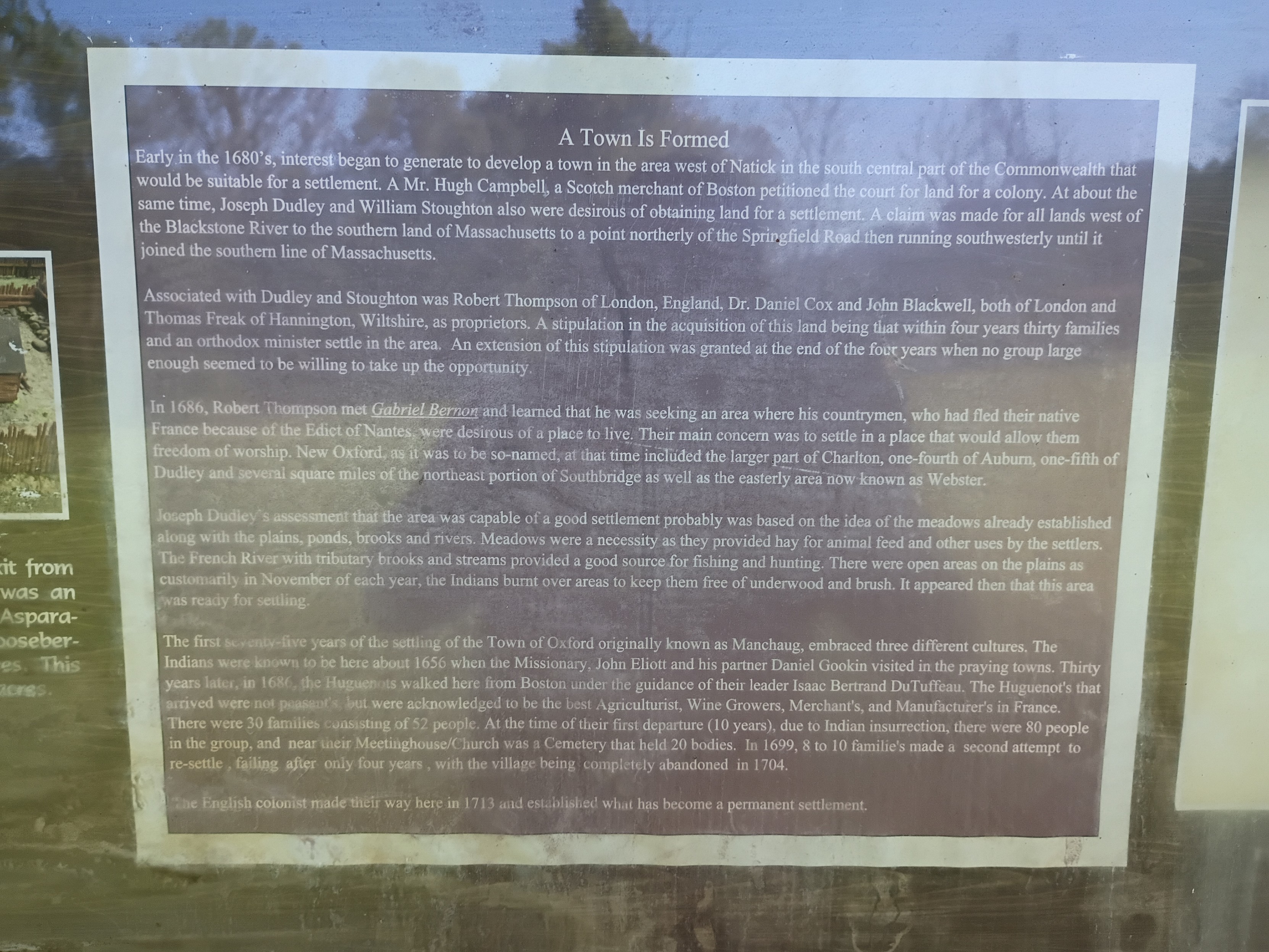

Town Is Formed

Early in the 1680's, interest began to generate to develop a town in the area west of Natick in the south central part of the Commonwealth that would be suitable for a settlement. A Mr. Hugh Campbell, a Scotch merchant of Boston petitioned the court for land for a colony. At about the same time, Joseph Dudley and William Stoughton also were desirous of obtaining land for a settlement. A claim was made for all lands west of the Blackstone River to the southern land of Massachusetts to a point northerly of the Springfield Road then running southwesterly until it joined the southern line of Massachusetts.

Associated with Dudley and Stoughton was Robert Thompson of London, England, Dr. Daniel Cox and John Blackwell, both of London and Thomas Freak of Hannington, Wiltshire, as proprietors. A stipulation in the acquisition of this land being that within four years thirty families and an orthodox minister settle in the area. An extension of this stipulation was granted at the end of the four years when no group large enough seemed to be willing to take up the opportunity.

In 1686, Robert Thompson met Gabriel Bernor and learned that he was seeking an area where his countrymen, who had fled their native France because of the Edict of Nantes, were desirous of a place to live. Their main concern was to settle in a place that would allow them freedom of worship. New Oxford, as it was the so-named, at that time included the larger part of Charlton, one-fourth of Auburn, one-fifth of Dudley and several square miles of the northeast portion of Southbridge as well as the easterly ares now known as Webster.

Joseph Dudley's assessment that the area was capable of a good settlement probably was based on the idea of the meadows already established along with the plains, ponds, brooks and rivers. Meadows were a necessity as they provided hay for animal feed and other uses by the settlers. The French River tributary books and streams provided a good source for fishing and hunting. There were open areas on the plains as customarily in November of each year, the Indians burnt over areas to keep them free of underwood and brush. It appeared then that this area was ready for settling.

The first seventy-five years of the settling of the Town of Oxford originally known as Manchaug, embraced three different cultures. The Indians were known to be here about 1656 when the Missionary, John Eliott and his partner Daniel Gookin visited in the praying towns. Thirty years later, in 1686, the Huguenots walked here from Boston under the guidance of their leader Isaac Bertrand DuTuffeau. The Huguenot's that arrived were not peasants, but were acknowledged to be the best Agriculturist, Wine Growers, Merchant's, and Manufacter's in France. There were 30 families consisting of 52 people. At the time of their first departure (10 years), due to Indian insurrection, there were 80 people in the group, and near their Meetinghouse/Church was a Cemetery that held 20 bodies. In 1699, 8 to 10 familie's made a second attempt to re-settle, failing after only four years, with the village being completely abandoned in 1704.

The English colonist made their way here in 1713 and established what has become a permanent settlement.

"""

All that was left of the fort was a crumbling stone wall that would have been the base of a higher wooden wall according to a picture of a model (I didn't think to get a shot of that myself). Only trees and brush remain where the multi-story main wooden building was.

This story has so many echoes in the present:

- The rich colonialists from Boston & London agree to settle the land, buying/taking land "rights" from the colonial British court that claimed jurisdiction without actually having control of the land. Whether the sponsors ever actually visited the land themselves I don't know. They surely profited somehow, whether from selling on the land rights later or collecting taxes/rent or whatever, but they needed poor laborers to actually do the work of developing the land (& driving out the original inhabitants, who had no say in the machinations of the Boston court).

- The land deal was on the condition that the capital-holders who stood to profit would find settlers to actually do the work of colonizing. The British crown wanted more territory to be controlled in practice, not just in theory, but they weren't going to be the ones to do the hard work.

- The capital-holders actually failed to find enough poor suckers to do their dirty work for 4 years, until the Huguenots, fleeing religious persecution in France, were desperate enough to accept their terms.

- Of course, the land was only so ripe for settlement because of careful tending over centuries by the natives who were eventually driven off, and whose land management practices have since been abandoned. Given the mention of praying towns (& dates), this was after King Philip's War, which resulted in at least some forced resettlement of native tribes around the area, but the descendants of those "Indians" mentioned in this sign are still around. For example, this is the site of one local band of Nipmuck, whose namesake lake is about 5 miles south of the fort site: #LandBack.

2025-07-05 12:23:39

Like other large #FreeSoftware projects, #Gentoo developers have varying degrees of activity. There are some people who dedicate a lot of their free time to Gentoo, maintain hundreds of packages, and participate in multiple areas. Then there are people with narrower interests and lower commit counts, but they are still putting in effort and making Gentoo a better distribution, and that matters. But then, there is the tail.

There are a few developers whose main talents seem to be 1) finding packages that require absolutely minimal maintenance effort, and 2) justifying their developer status with long essays. I mean, this is getting beyond absurd. It is not just "my packages are all up-to-date". It is not even "my packages require very low maintenance, that's why I'm not doing much". It is literally "I deliberately choose low-maintenance packages, so I don't have to do anything". But of course, all these people definitely need commit access to Gentoo, and show off their Gentoo developer badges, and it's *so damn unfair*.

And in the meantime, other developers are overburdened, and getting burned out. And they step down from more things. And who takes these things over? Of course, not the developers who just admitted to not having much to do…

2025-08-04 16:42:20

Right, it’s time we started an initiative to find and call out (with receipts) accounts on the fediverse engaged in genocide denial and genocide apologism in support of Israel’s ongoing genocide of the Palestinian people.

There are accounts (and even whole instances, it would seem) that are engaged in this and, further, attempts to silence the voices, not to mention threaten the livelihoods of, those of us speaking out against Israel’s genocide of the Palestinian people and attempting …

2025-07-05 02:36:10

Okay, so while I don't like the "Democrats need to do more" because it's stupid

I think there's room for "Democrats need to SAY more."

Like the clip of Maxwell Frost. But y'all can go even harder. Call them names, remind the people of how absolutely scummy they are right now

#uspol

2025-06-06 02:56:35

Folks in NZ are starting to realise the implications of the impending EOL of Microsoft Windows 10 for our education system. In its finite wisdom, our MinOfEd has effectively locked all schools into either the use of MS Windows or Google ChromeOS. Both have fundamental issues like endless license hassles, EOLing OSs, ending support for Windows or ChromeOS on specific computer models, etc. And that doesn't even start to talk about digital colonisation & sovereignty (see

2025-06-02 20:28:08

Confusing episode. Let me clear it all up.

The world is sinking into the doubt needed to rescue Omega, remember, and The Doctor is falling with a balcony that's separated from the building.

How does he get out of that?

Well, saved by a literal magic door that pops out of nowhere, leading back to the time hotel. 🤨

Anita, who he spent a year with once a couple of Christmases ago, has been popping around the Doctor's entire long life, peeping on him with the Daleks and stuff. Trying to find him on the Earth's last day. Today.

And now he's rescued, today turns into a groundhog day. Same day over and over again. 😆

There's another woman that's been stalking him through time lately, Mrs Flood. She was following him everywhere, but she had Xmas off she reckons, so didn't see the Time Hotel bit. Thus the element of surprise in the deus ex machina rescue. 😀

The Doctor has broken free of the wish spell now anyway, popped his conditioning, and can use the time hotel's door to recall Unit and break them all out of the wish too.

The Rani pops in to say hi and explain her plans. 😝

How did the Rani survive the end of the Timelords? She flipped her DNA to sidestep the genetic bomb apparently? Well that makes no sense, but nor does anything else so no time to ponder.

The end of the Time Lords made them all Barons... No, made them barren. There can be no more children of the time-lords.

She's popping Omega back out of the underworld for his DNA because the timelords are all barren and she wants to recreate Gallifrey.

But wait a minute: Poppy is the Doctor's kid in wish world! So she should have Timelord DNA too! Maybe that could work?

No. The Rani is a nazi, doesn't like the kid's contaminated blood. She's got human all over her DNA. Eww.

Rani pops off back to her Bone Palace, and makes the bone beasts attack.

The Doctor explains that the Giant dinosaur skeletons are beasts that pop in to clean up the world when there's a reality flux, and the Rani has turned them on Unit HQ.

So the UNIT HQ turns into some kinda ship? Like the Crimson Permanent Assurance. Lol. It's blasting lasers at the bone beasts and turning around, and has a steering wheel like a pirate ship now. 🤣

During the battle, the Doctor pops out to take a ride on the sky-bike, looking like something from Flash Gordon, and crashes into the Bone Palace.

Too late though! Omega is pretty much here now. He's a giant boney CGI zombie, become his own legend. Looks great but doesn't really seem like Omega, who ought to be held together by pure will.

Omega eats the Rani! One of the Ranis anyway. Mrs Flood avoids being eaten. She pops off with the time bracelet. "So much for the Two Rani's. It's a goodnight from me!" as she disappears off into time. Great gag. 😁

The Doctor just shoots Omega to get him back into his box. Pops a rifle off the wall. The Vindicator has apparently also got a built in laser as well as locator beacons. So that's handy. The Doctor doesn't use guns but some of his devices work like one. 🔫

So all is well! The day is saved and the wish is over and baby Poppy survives in a time box! 🍻

They're going to take the space baby off to do space adventures. Ruby is jealous of seeing The Doctor and Belinda vibing like that, as they plan a life in space with the space baby. Aww. Poor Ruby. 😭

But then Poppy pops off! Disappears entirely, and everyone other than Ruby forgets. Ruby remembers because she's disappeared from time herself in the past they say.

Okay: to save his child and on Ruby's word alone, the Doctor will sacrifice himself to turn reality one degree.

He goes off to commit suicide by Regeneration, but Thirteen is here! She's popped out of her timeline to stop him! Or maybe to help, with a motivational chat instead. Gives him a pep talk then pops back off again.

The Doctor zaps reality with his Tardis, dying but holding off on the actual regeneration for a few moments to go check on the kid.

The kid is safe! But isn't his own kid any more. Poppy has popped all her Timelord DNA and is just all human now. Poppy's pop isn't the doc, it's someone called Richie.

And Belinda has been so keen to get home all this time in order to get back to her Baby! Who isn't a timelord, and definitely didn't exist until she was wished into being.

This may not be the most ethical action The Doctor has ever taken: To bend the whole universe in order to recreate a baby that was accidentally wished into being out of nothing. Twisting time to give a child to a nurse who didn't previously have a child, or even remember the wish. Then it's not even the same child that disappeared, coz this one is all human. 🤷

But the doc is popping off to regenerate with Joy in the stars, and... Turns blonde: "oh. Hello?" 🤯

It's Rose! Billie Piper is back? Fantastic!

Is Rose doing a David Tennant Impression there?

Billie playing the Doctor, doing a Tennant impression as Bad Wolf? Amazing. Can't wait.

#doctorWho

2025-08-05 10:47:06

For Two Raiders' Teammates, It's All in the Family https://www.si.com/nfl/raiders/las-vegas-devin-white-decamerion-richardson-pete-carroll-training-camp

2025-06-05 19:46:09

If prices keep rising out of reach for so many people, and businesses and even whole countries are in huge amounts of debt, what is the end game here?

Who exactly are we owing all this money to anyway?

#Money

2025-08-04 15:49:00

Should we teach vibe coding? Here's why not.

Should AI coding be taught in undergrad CS education?

1/2

I teach undergraduate computer science labs, including for intro and more-advanced core courses. I don't publish (non-negligible) scholarly work in the area, but I've got years of craft expertise in course design, and I do follow the academic literature to some degree. In other words, I'm not the world's leading expert, but I have spent a lot of time thinking about course design, and consider myself competent at it, with plenty of direct experience in what knowledge & skills I can expect from students as they move through the curriculum.

I'm also strongly against most uses of what's called "AI" these days (specifically, generative deep neural networks as supplied by our current cadre of techbros). There are a surprising number of completely orthogonal reasons to oppose the use of these systems, and a very limited number of reasonable exceptions (overcoming accessibility barriers is an example). On the grounds of environmental and digital-commons-pollution costs alone, using specifically the largest/newest models is unethical in most cases.

But as any good teacher should, I constantly question these evaluations, because I worry about the impact on my students should I eschew teaching relevant tech for bad reasons (and even for good reasons). I also want to make my reasoning clear to students, who should absolutely question me on this. That inspired me to ask a simple question: ignoring for one moment the ethical objections (which we shouldn't, of course; they're very stark), at what level in the CS major could I expect to teach a course about programming with AI assistance, and expect students to succeed at a more technically demanding final project than a course at the same level where students were banned from using AI? In other words, at what level would I expect students to actually benefit from AI coding "assistance?"

To be clear, I'm assuming that students aren't using AI in other aspects of coursework: the topic of using AI to "help you study" is a separate one (TL;DR its gross value is not negative, but it's mostly not worth the harm to your metacognitive abilities, which AI-induced changes to the digital commons are making more important than ever).

So what's my answer to this question?

If I'm being incredibly optimistic, senior year. Slightly less optimistic, second year of a masters program. Realistic? Maybe never.

The interesting bit for you-the-reader is: why is this my answer? (Especially given that students would probably self-report significant gains at lower levels.) To start with, [this paper where experienced developers thought that AI assistance sped up their work on real tasks when in fact it slowed it down](https://arxiv.org/abs/2507.09089) is informative. There are a lot of differences in task between experienced devs solving real bugs and students working on a class project, but it's important to understand that we shouldn't have a baseline expectation that AI coding "assistants" will speed things up in the best of circumstances, and we shouldn't trust self-reports of productivity (or the AI hype machine in general).

Now we might imagine that coding assistants will be better at helping with a student project than at helping with fixing bugs in open-source software, since it's a much easier task. For many programming assignments that have a fixed answer, we know that many AI assistants can just spit out a solution based on prompting them with the problem description (there's another elephant in the room here to do with learning outcomes regardless of project success, but we'll ignore this one too; my focus here is on project complexity reach, not learning outcomes). My question is about more open-ended projects, not assignments with an expected answer. Here's a second study (by one of my colleagues) about novices using AI assistance for programming tasks. It showcases how difficult it is to use AI tools well, and some of the stumbling blocks that novices in particular face.

But what about intermediate students? Might there be some level where the AI is helpful because the task is still relatively simple and the students are good enough to handle it? The problem with this is that as task complexity increases, so does the likelihood of the AI generating (or copying) code that uses more complex constructs which a student doesn't understand. Let's say I have second-year students writing interactive websites with JavaScript. Unless the prompting is done with a lot of care, which those students don't yet know how to exercise, the AI is likely to suggest code that depends on several different frameworks, from React to jQuery, without actually setting up or including those frameworks, and of course those students would be way out of their depth trying to do that. This is a general problem: each programming class carefully limits the specific code frameworks and constructs it expects students to know based on the material it covers. There is no feasible way to limit an AI assistant to a fixed set of constructs or frameworks, using current designs. There are alternate designs where this would be possible (like AI search through adaptation from a controlled library of snippets) but those would be entirely different tools.

So what happens on a sizeable class project where the AI has dropped in buggy code, especially if it uses code constructs the students don't understand? Best case, they understand that they don't understand and re-prompt, or quickly ask an instructor or TA for help, who helps them get rid of the stuff they don't understand and re-prompt or manually add stuff they do. Average case: they waste several hours and/or sweep the bugs partly under the rug, resulting in a project with significant defects. Students in their second and even third years of a CS major still have a lot to learn about debugging, and usually have significant gaps in their knowledge of even their most comfortable programming language. I do think regardless of AI we as teachers need to get better at teaching debugging skills, but the knowledge gaps are inevitable because there's just too much to know. In Python, for example, the LLM is going to spit out yields, async functions, try/finally, maybe even something like a while/else, or with recent training data, the walrus operator. I can't expect even a fraction of 3rd year students who have worked with Python since their first year to know about all these things, and based on how students approach projects where they have studied all the relevant constructs but have forgotten some, I'm not optimistic that seeing these things will magically turn them into learning opportunities. Student projects are better off working with a limited subset of full programming languages that the students have actually learned, and using AI coding assistants as currently designed makes this impossible. Beyond that, even when the "assistant" just introduces bugs using syntax the students understand, even through their 4th year many students struggle to understand the operation of moderately complex code they've written themselves, let alone code written by someone else. Having access to an AI that will confidently offer incorrect explanations for bugs will make this worse.
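To make that list of Python constructs concrete, here is a small, hypothetical snippet of the kind an assistant might produce. It is an illustration I wrote, not output from any particular tool; every construct is legal in Python 3.8+, but each is easy to never meet in a typical intro sequence.

```python
import asyncio


def read_chunks(lines, size):
    """A generator: `yield` hands back one chunk at a time instead of returning a list."""
    chunk = []
    for line in lines:
        chunk.append(line)
        if len(chunk) == size:
            yield chunk
            chunk = []
    if chunk:
        yield chunk


def find_first_negative(values):
    """while/else: the else branch runs only if the loop finishes without `break`."""
    i = 0
    while i < len(values):
        if values[i] < 0:
            break
        i += 1
    else:
        return None  # no negative value found
    return i


async def total_length(lines):
    """An async function: calling it returns a coroutine that must be awaited or run."""
    total = 0
    try:
        for chunk in read_chunks(lines, 2):
            # Walrus operator (Python 3.8+): assign and test in one expression.
            if (n := len(chunk)) > 0:
                total += n
            await asyncio.sleep(0)  # give control back to the event loop
    finally:
        # try/finally: this runs whether or not an exception was raised above.
        print("done counting")
    return total


if __name__ == "__main__":
    print(list(read_chunks(["a", "b", "c"], 2)))  # [['a', 'b'], ['c']]
    print(find_first_negative([3, 1, -2, 5]))  # 2
    print(asyncio.run(total_length(["a", "b", "c"])))  # prints "done counting", then 3
```

None of this is exotic to an experienced Python programmer, which is exactly the point: a student who has only seen `for` loops, lists, and functions is suddenly debugging generators, coroutines, and loop `else` branches at once.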

To be sure, a small minority of students will be able to overcome these problems, but that minority is the group that has a good grasp of the fundamentals and has broadened their knowledge through self-study, which earlier AI-reliant classes would make less likely to happen. In any case, I care about the average student, since we already have plenty of stuff about our institutions that makes life easier for a favored few while being worse for the average student (note that our construction of that favored few as the "good" students is a large part of this problem).

To summarize: because AI assistants introduce excess code complexity and difficult-to-debug bugs, they'll slow down rather than speed up project progress for the average student on moderately complex projects. On a fixed deadline, they'll result in worse projects, or necessitate less ambitious project scoping to ensure adequate completion, and I expect this remains broadly true through 4-6 years of study in most programs (don't take this as an endorsement of AI "assistants" for masters students; we've ignored a lot of other problems along the way).

There's a related problem: solving open-ended project assignments well ultimately depends on deeply understanding the problem, and AI "assistants" allow students to put a lot of code in their file without spending much time thinking about the problem or building an understanding of it. This is awful for learning outcomes, but also bad for project success. Getting students to see the value of thinking deeply about a problem is a thorny pedagogical puzzle at the best of times, and allowing the use of AI "assistants" makes the problem much much worse. This is another area I hope to see (or even drive) pedagogical improvement in, for what it's worth.

1/2