2026-02-25 16:07:58

Replaced article(s) found for cs.LG. https://arxiv.org/list/cs.LG/new

[3/6]:

- Towards Scalable Oversight via Partitioned Human Supervision

Ren Yin, Takashi Ishida, Masashi Sugiyama

https://arxiv.org/abs/2510.22500 https://mastoxiv.page/@arXiv_csLG_bot/115451787490434401

- ContextPilot: Fast Long-Context Inference via Context Reuse

Yinsicheng Jiang, Yeqi Huang, Liang Cheng, Cheng Deng, Xuan Sun, Luo Mai

https://arxiv.org/abs/2511.03475 https://mastoxiv.page/@arXiv_csLG_bot/115502245581974540

- Metabolomic Biomarker Discovery for ADHD Diagnosis Using Interpretable Machine Learning

Nabil Belacel, Mohamed Rachid Boulassel

https://arxiv.org/abs/2601.11283 https://mastoxiv.page/@arXiv_csLG_bot/115921183182326799

- PhysE-Inv: A Physics-Encoded Inverse Modeling approach for Arctic Snow Depth Prediction

Akila Sampath, Vandana Janeja, Jianwu Wang

https://arxiv.org/abs/2601.17074

- SAGE-5GC: Security-Aware Guidelines for Evaluating Anomaly Detection in the 5G Core Network

Cristian Manca, Christian Scano, Giorgio Piras, Fabio Brau, Maura Pintor, Battista Biggio

https://arxiv.org/abs/2602.03596

- LORE: Jointly Learning the Intrinsic Dimensionality and Relative Similarity Structure From Ordina...

Anand, Helbling, Davenport, Berman, Alagapan, Rozell

https://arxiv.org/abs/2602.04192

- Towards Robust Scaling Laws for Optimizers

Alexandra Volkova, Mher Safaryan, Christoph H. Lampert, Dan Alistarh

https://arxiv.org/abs/2602.07712 https://mastoxiv.page/@arXiv_csLG_bot/116046369672796465

- Do We Need Adam? Surprisingly Strong and Sparse Reinforcement Learning with SGD in LLMs

Sagnik Mukherjee, Lifan Yuan, Pavan Jayasinha, Dilek Hakkani-Tür, Hao Peng

https://arxiv.org/abs/2602.07729 https://mastoxiv.page/@arXiv_csLG_bot/116046377539155485

- AceGRPO: Adaptive Curriculum Enhanced Group Relative Policy Optimization for Autonomous Machine L...

Yuzhu Cai, Zexi Liu, Xinyu Zhu, Cheng Wang, Siheng Chen

https://arxiv.org/abs/2602.07906 https://mastoxiv.page/@arXiv_csLG_bot/116046423413650658

- VESPO: Variational Sequence-Level Soft Policy Optimization for Stable Off-Policy LLM Training

Guobin Shen, Chenxiao Zhao, Xiang Cheng, Lei Huang, Xing Yu

https://arxiv.org/abs/2602.10693 https://mastoxiv.page/@arXiv_csLG_bot/116057229834947730

- KBVQ-MoE: KLT-guided SVD with Bias-Corrected Vector Quantization for MoE Large Language Models

Zukang Xu, Zhixiong Zhao, Xing Hu, Zhixuan Chen, Dawei Yang

https://arxiv.org/abs/2602.11184 https://mastoxiv.page/@arXiv_csLG_bot/116062537528208461

- MUSE: Multi-Tenant Model Serving With Seamless Model Updates

Correia, Ferreira, Martins, Bento, Guerreiro, Pereira, Gomes, Bono, Ferreira, Bizarro

https://arxiv.org/abs/2602.11776 https://mastoxiv.page/@arXiv_csLG_bot/116062952355379801

- Pawsterior: Variational Flow Matching for Structured Simulation-Based Inference

Jorge Carrasco-Pollo, Floor Eijkelboom, Jan-Willem van de Meent

https://arxiv.org/abs/2602.13813 https://mastoxiv.page/@arXiv_csLG_bot/116085828112928218

- Silent Inconsistency in Data-Parallel Full Fine-Tuning: Diagnosing Worker-Level Optimization Misa...

Hong Li, Zhen Zhou, Honggang Zhang, Yuping Luo, Xinyue Wang, Han Gong, Zhiyuan Liu

https://arxiv.org/abs/2602.14462 https://mastoxiv.page/@arXiv_csLG_bot/116085997857526328

- Divine Benevolence is an $x^2$: GLUs scale asymptotically faster than MLPs

Alejandro Francisco Queiruga

https://arxiv.org/abs/2602.14495 https://mastoxiv.page/@arXiv_csLG_bot/116086011618741857

- ÜberWeb: Insights from Multilingual Curation for a 20-Trillion-Token Dataset

DatologyAI, et al.

https://arxiv.org/abs/2602.15210 https://mastoxiv.page/@arXiv_csLG_bot/116090912256712568

- GLM-5: from Vibe Coding to Agentic Engineering

GLM-5-Team, et al.

https://arxiv.org/abs/2602.15763 https://mastoxiv.page/@arXiv_csLG_bot/116091080686771018

- Anatomy of Capability Emergence: Scale-Invariant Representation Collapse and Top-Down Reorganizat...

Jayadev Billa

https://arxiv.org/abs/2602.15997 https://mastoxiv.page/@arXiv_csLG_bot/116096541546306333

- AI-CARE: Carbon-Aware Reporting Evaluation Metric for AI Models

KC Santosh, Srikanth Baride, Rodrigue Rizk

https://arxiv.org/abs/2602.16042 https://mastoxiv.page/@arXiv_csLG_bot/116096581524696028

- Beyond Message Passing: A Symbolic Alternative for Expressive and Interpretable Graph Learning

Chuqin Geng, Li Zhang, Haolin Ye, Ziyu Zhao, Yuhe Jiang, Tara Saba, Xinyu Wang, Xujie Si

https://arxiv.org/abs/2602.16947 https://mastoxiv.page/@arXiv_csLG_bot/116102426238903124

toXiv_bot_toot

2026-04-29 11:00:16

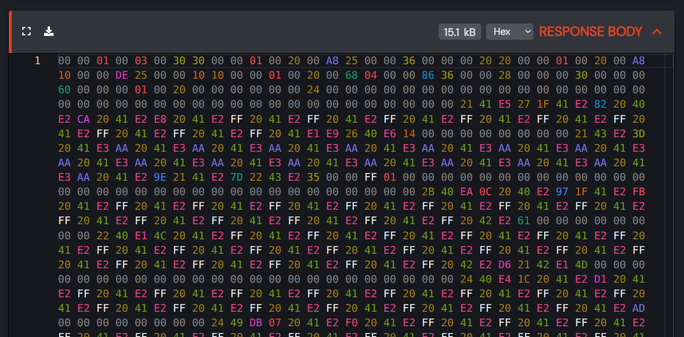

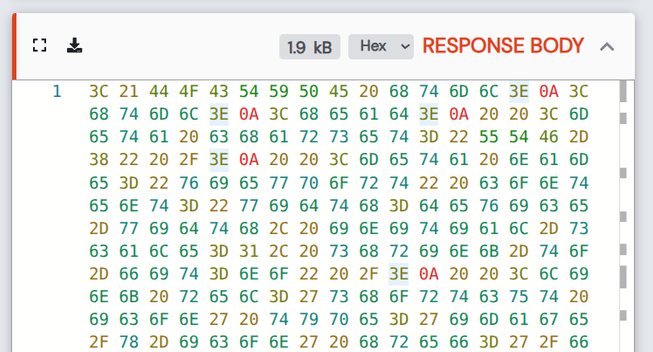

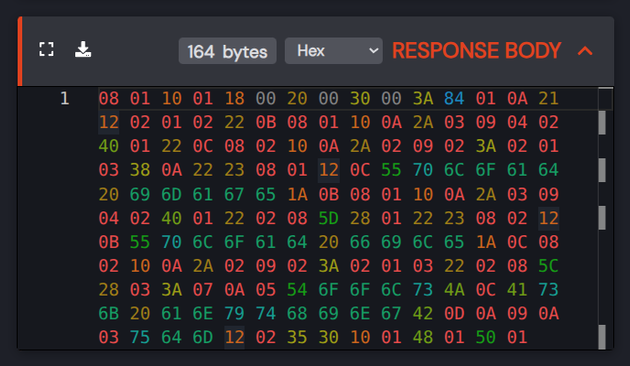

I've been thinking about https://simonomi.dev/blog/color-code-your-bytes/.

Whipped up a quick prototype for HTTP Toolkit's hex view - what do you think? Interesting and more useful than monochrome, or just visually noisy?

See if you can guess what each file type is …

2026-03-30 06:36:06

2026-02-28 16:35:54

This message is brought to you by Amazon Web Services

https://www.washingtonpost.com/opinions/2026/02/27/data-center-moratorium-construction-pritzker-reeves/

2026-03-31 15:07:48

RE: https://mastodon.world/@paninid/116324402697434215

This is a huge problem, and we need to hold the people making chatbot interfaces accountable for some of it. The _shape_ of these tools matters immensely. They need to be presented not just as ‘might be wrong’ in a disclaimer sense, but with their epistemic status deeply integrated into the UI. These are suggestions, leads, pointers, rough summaries.

2026-02-01 02:32:56

#WordWeavers January 29 — Have you ever written a pet into one of your stories?

Several of the Greek gods have pets in Greek #mythology, so yes! Dionysos has pet panthers, for example, Demeter has dragons, and Zeus has fucking Pegasos to carry his thunderbolts, though I have not actually…

2026-03-31 07:47:22

Legendrian and Lagrangian higher torsion

Daniel Alvarez Gavela, Kiyoshi Igusa, Michael Sullivan

https://arxiv.org/abs/2603.28007 https://arxiv.org/pdf/2603.28007 https://arxiv.org/html/2603.28007

arXiv:2603.28007v1 Announce Type: new

Abstract: Let $M$ be a closed manifold. We introduce a family of Legendrian isotopy invariants for Legendrians in $J^1M$, which we collectively call Legendrian higher torsion. Given a choice of a class $\mathcal{F}$ of fibre bundles over $M$, equipped with suitable unitary local systems, the Legendrian higher torsion of a Legendrian $\Lambda \subset J^1M$ is the subset of $H^*(M;\mathbf{R})$ consisting of higher Reidemeister torsion cohomology classes of fibre bundles $W$ over $M$ in the class $\mathcal{F}$ such that $\Lambda$ admits a generating function on a stabilization of $W$. For the class of tube bundles in the sense of Waldhausen we call the invariant tube torsion. In particular, we show that the tube torsion of a nearby Lagrangian $L \subset T^*M$ is well-defined when the stable Gauss map $L \to U/O$ is trivial and consists of a union of cosets of a normalized version of the Pontryagin character. We also identify a distinguished coset, invariant under Hamiltonian isotopy of $L$, which we call nearby Lagrangian torsion. We do not know whether nearby Lagrangians must have trivial tube torsion, as would follow from the nearby Lagrangian conjecture. However, we show that there exist Legendrians $\Lambda \subset J^1M$ with nontrivial tube torsion whose projection $\Lambda \to M$ is homotopic to a diffeomorphism.

toXiv_bot_toot

2026-03-31 14:32:30

🌳 Recursive entries via parent_id — enabling nested hierarchies like threaded comments, email topics with messages & private notes

📝 Immutability: create new versions instead of updating — Entry is a pointer to current state with full edit history

🛠️ Battle-tested at #37signals in #Basecamp
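
A rough sketch of the two ideas above, in plain Python rather than whatever Basecamp actually runs. `Entry` and `parent_id` come from the post; `Version`, `revise`, `children_of` and `render` are hypothetical names made up for illustration, and the in-memory lists stand in for database tables.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Version:
    """One immutable snapshot of an entry's content (hypothetical name)."""
    number: int
    body: str


@dataclass
class Entry:
    """Pointer to the current version, with the full edit history kept."""
    id: int
    parent_id: Optional[int] = None  # None marks a top-level entry
    versions: list = field(default_factory=list)

    @property
    def current(self) -> Version:
        # The newest version is the entry's current state.
        return self.versions[-1]

    def revise(self, body: str) -> Version:
        # Append a new snapshot instead of updating an old one in place.
        version = Version(number=len(self.versions) + 1, body=body)
        self.versions.append(version)
        return version


def children_of(entries, parent_id):
    """Entries whose parent_id matches; parent_id=None selects the roots."""
    return [e for e in entries if e.parent_id == parent_id]


def render(entries, parent_id=None, depth=0):
    """Walk the parent_id links recursively and return indented lines."""
    lines = []
    for entry in children_of(entries, parent_id):
        lines.append("  " * depth + entry.current.body)
        lines.extend(render(entries, entry.id, depth + 1))
    return lines
```

With a topic, a reply under it, and a note under the reply, `render` returns one line per entry, indented by nesting depth. Editing the reply with `revise` leaves the earlier version in `reply.versions`, so the history stays readable.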

2026-03-30 18:06:18

2026-04-29 20:16:13