2025-06-05 14:18:45

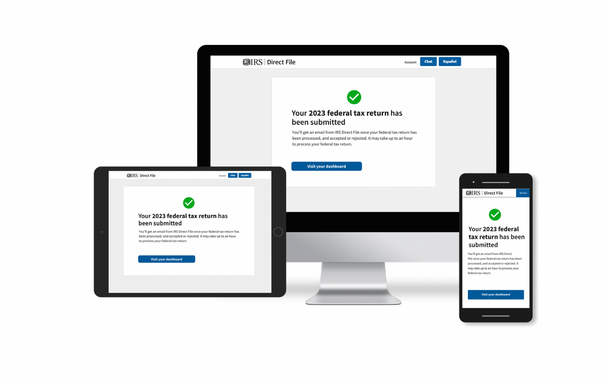

The IRS open sourced much of its incredibly popular Direct File software as the future of the free tax filing program is at risk of being killed by Intuit’s lobbyists and Donald Trump’s megabill.

Meanwhile, several top developers who worked on the software have left the government and joined a project to explore the “future of tax filing” in the private sector

2025-06-04 14:31:02

The IRS open sourced most of its Direct File tax software on GitHub last week, fulfilling a legal requirement under the SHARE IT Act, despite Intuit pressure (Jason Koebler/404 Media)

https://www.404media.co/directfile-open-source-…

2025-07-08 16:44:15

San Leandro teen charged with shooting & killing 17 yr old girlfriend in her bedroom, is freed from custody by empathetic judge who rejects #prosecution theory of intentional #murder.

#Prosecutors have refiled murder …

2025-06-04 15:49:36

The IRS Tax Filing Software TurboTax Is Trying To Kill Just Got Open Sourced - Slashdot

https://news.slashdot.org/story/25/06/04/1447205/the-irs-tax-filing-software-turbotax-is-trying-to-kill-just-got-open-sourced

2025-06-05 09:33:14

In episode 184, I talked about feeling the feelings - why it's so terrifying, what is actually good about it, and the things I'm doing to try to feel the scariest feelings at all. Here I talk about some of the unexpectedly positive things about allowing myself to feel stuff deeply #FeelingTheFeelings

2025-06-05 02:44:29

2025-07-08 04:37:38

2025-06-04 14:07:29

The IRS Tax Filing Software TurboTax Is Trying to Kill Just Got Open Sourced https://www.404media.co/directfile-open-source-irs-tax-filing-software-turbotax-is-trying-to-kil/

2025-05-15 23:40:15

#ZenBrowser is considering to change the behaviour of windows in the following way:

- Two windows of the same workspace show the exact same tabs.

- Tabs are not lost when closing a window.

- In order to create a new, empty window, you first need to create a new workspace.

Any opinions? I find that quite weird and don't understand the purpose. 🤔

2025-06-17 06:53:04