2026-03-12 18:37:15

The Chatbot Moment: Mapping the Emerging 2026 U.S. Chatbot Legislative Landscape

https://fpf.org/blog/the-chatbot-moment-mapping-the-emerging-2026-u-s-chatbot-legislative-landscape/

@…

2026-03-12 10:10:57

PitchBook: VC dealmaking in the media sector fell 69% YoY to $165M across 47 deals in the first two months of 2026, as investors take an "AI-first" approach (Lucas Manfredi/The Wrap)

https://www.thewrap.com/industry-news/deal

2026-04-09 20:14:28

One advantage of being an artistic weirdo who makes completely commercially non-viable music is that I have a •lot• of practice forging that sense of purpose and meaning for myself when the world is aggressively not handing it to me.

Software development has been coasting on a wave of profitability / employability for several decades, and as a discipline perhaps has an underdeveloped sense of intrinsic purpose. Now is a good time for us to redevelop that as a community, regardless of future job market prospects.

2026-04-06 22:25:40

Filing: Broadcom agrees to produce future versions of Google's TPUs and expands its Anthropic deal to give the startup access to ~3.5 GW of computing capacity (Jordan Novet/CNBC)

https://www.cnbc.com/2026/04/06/broadcom-agrees-to-expanded…

2026-03-02 15:26:35

The Jackson Hole region has long been a refuge for the rich,

but an explosion of new affluence has allowed a growing cadre of extraordinarily wealthy people to ⛔️dominate both the local economy and Wyoming state politics.

Teton County is not merely the richest county in the country, per capita -- by far;

⭐️it is a window into America’s near future, as the country enters a new #gilded

2026-04-03 19:08:16

Lakes forming next to Greenland's melting ice sheet are speeding up glacier flow #Greenland

2026-04-28 04:58:20

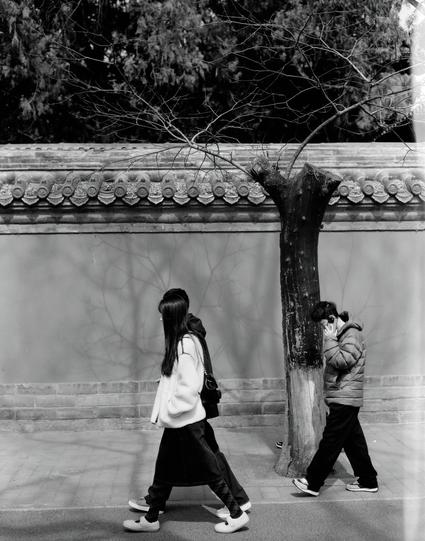

Urban Illusions and Fallacies II 🏙️


📷 Pentax 6x7

🎞️Kentmere Pan 200 (6x7)

If you like my work, support me by buying me a coffee or a roll of film via PayPal https://paypal.com/paypalme/ydcdingsite

2026-04-27 03:44:54

Led by Pope Leo but extending across Christian denominations, the rise of a genuine and global theological debate

is producing the sudden recognition that a kind of progressive Christianity long given up for dead seems to be stirring.

Christ is risen, as it were

– and if people of good faith push hard, the future could be redefined in powerful ways.

2026-02-15 19:19:57

Hundreds of thousands of people have taken part in rallies around the world

to show their solidarity with anti-government demonstrators in Iran

whose continued protests have been met with brutal and deadly repression.

On Saturday, Reza Pahlavi, the exiled son of Iran’s last shah,

addressed a crowd of 200,000 people in Munich,

telling them he was ready to lead the country to a “secular democratic future”.

Pahlavi urged Iranians at home and abroad to continu…

2026-02-27 17:54:53

Health care, new cars and new homes feel unaffordable to most Americans,

a Washington Post-ABC News-Ipsos poll shows.

Most Americans say that they can afford basic necessities like their current housing costs, groceries, utilities and gasoline.

But large numbers across income levels also say larger expenses and the cost of things associated with an enjoyable life

— including taking a weeklong vacation

— are out of reach.

Overall, 53 percent of adults say…