2026-02-27 13:02:02

Tether says it has frozen $4.2B of its crypto token over links to "illicit activity", including $3.5B since 2023 and $61M linked to pig-butchering scams (Elizabeth Howcroft/Reuters)

https://www.reuters.com/sustainabi…

2026-03-27 14:15:28

RE: #drones

2026-02-27 16:53:22

I'm absolutely floored by how cool this video turned out!

I had the honour of working with Wild Blue Studios on a couple of the illustrations in this cinematic trailer.

And that song is a proper banger.

Very excited for this project.

https://www.youtube.com/watch?v=uxZXjHZ45t0

2026-01-28 15:58:04

“Crypto Cash Fusion Cell”

https://ofsi.blog.gov.uk/2026/01/28/ofsi-and-partners-clamp-down-on-the-abuse-of-cryptoassets/

2026-02-28 11:36:15

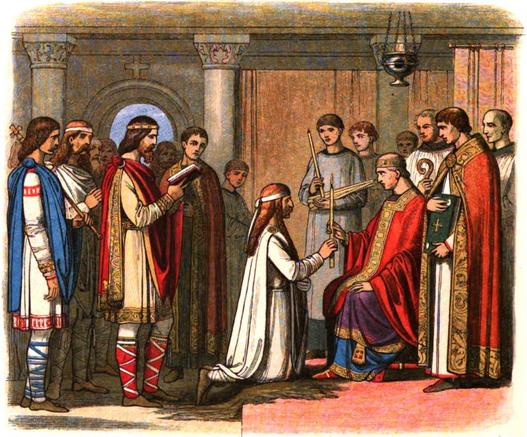

Alfred, Guthrum & Danelaw: The making of England. Dr Toby Purser, University of Northampton, takes us on a tour of the Viking invasion of England. FREE Zoom talk https://www.ticketsource.co.uk/fostp 25 Mar 2026, 19.30 GMT. No Guthrum, no Alfred; no Alfred, no England…

2026-04-27 04:03:39

Unlike the race to the moon between the Soviet Union and the US,

the 21st-century competition is shaping up to be more like a marathon,

with a gargantuan effort to launch multiple missions over many years.

“What this is really illustrating is that it doesn’t matter who gets to the moon next.

It matters who gets to the moon the next 10 times,”

said Scott Manley, a Scottish astrophysicist and expert on rocket engineering.

“The nation that keeps going is goi…

2026-03-27 08:44:52

Automating Computational Chemistry Workflows via OpenClaw and Domain-Specific Skills

Mingwei Ding, Chen Huang, Yibo Hu, Yifan Li, Zitian Lu, Xingtai Yu, Duo Zhang, Wenxi Zhai, Tong Zhu, Qiangqiang Gu, Jinzhe Zeng

https://arxiv.org/abs/2603.25522 https://arxiv.org/pdf/2603.25522 https://arxiv.org/html/2603.25522

arXiv:2603.25522v1 Announce Type: new

Abstract: Automating multistep computational chemistry tasks remains challenging because reasoning, workflow specification, software execution, and high-performance computing (HPC) execution are often tightly coupled. We demonstrate a decoupled agent-skill design for computational chemistry automation leveraging OpenClaw. Specifically, OpenClaw provides centralized control and supervision; schema-defined planning skills translate scientific goals into executable task specifications; domain skills encapsulate specific computational chemistry procedures; and DPDispatcher manages job execution across heterogeneous HPC environments. In a molecular dynamics (MD) case study of methane oxidation, the system completed cross-tool execution, bounded recovery from runtime failures, and reaction network extraction, illustrating a scalable and maintainable approach to multistep computational chemistry automation.

toXiv_bot_toot

2026-03-27 08:50:49

epublibre recently published Stephen King's saga "La torre oscura" (The Dark Tower) in a single volume, illustrations and all.

If I disappear from here for a while, you now know one of the possible reasons. #mastolibros #mastobooks

2026-03-28 15:13:29

Prokofiev - "Cinderella" (Cleveland Orch., V. Ashkenazy cond.) (1983)

#NowPlaying

2026-03-28 07:17:39

Plant specimens and teaching materials that inspired Charles Darwin

and qualified him to work as a naturalist on HMS Beagle

have been unearthed from an archive in Cambridge

and will be used for the first time to teach contemporary students about botany.

The fragile specimens, ink drawings and watercolour illustrations of plants belonged to

Darwin’s teacher and mentor,

Prof John Stevens Henslow,

and have been stored in Cambridge University’s herbarium…