2026-06-08 11:47:12

RE: https://tldr.nettime.org/@tante/116713587055769588

I was actually using LLMs a couple of years ago for text classification. Before that I pushed hard for resources to support automation. I helped build an automation team where none existed. I pushed to use transformer models to solve problems before LLMs existed. I used simple ML to make predictions and classify issues. I've been on the cutting edge for a long time.

I *should* be an early adopter of the modern LLM thing. But tech oligarchs transformed themselves into reverse ouroboros (infinitely shoving their heads up their own asses). The oligarchs never really believed that other people had free will, but I think they had been too scared to let that slip. After the lockdowns, I think they just snapped and here we are.

As much as I trash LLMs, I've had a nuanced critique for a while (writing about this now). The lack of consent is so huge. The oligarchs have plans for us, and they don't involve our consent.

Under different conditions, I do actually think there could be revolutionary elements to this tech (not so much LLMs themselves as LLM MCP, and not for the things they think). But without consent, I think (and hope) the friction will overcome the momentum.

"AI" is already more expensive than humans. It will never be profitable as long as people resist. There are multiplying problems, and I don't see those being fixed without collective effort. I have a lot of thoughts, but "consent" (or the real or implied lack thereof) is central to so many aspects of LLMs.

The oligarchs want to force LLM tech onto all of us without our consent. Ultimately, they want to replace us all with obedient machines. The mistake they made was assuming we would be as obedient as the LLMs with which they have become so obsessed.

2026-05-10 19:23:38

I made a game called Fediquest but

There were some design flaws in it that I mistakenly introduced in the beginning and that became very annoying later

I confess, I used Claude to slopify it

and, maybe most importantly,

so I have revised my set of rules and will just restart from scratch...

2026-05-08 23:42:31

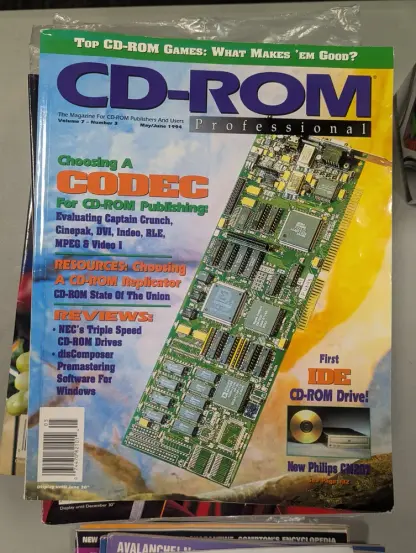

I thought I was familiar with a lot of codecs, but Captain Crunch and DVI are names I've never heard. #avpreservation

2026-04-09 15:59:33

Like…if we are concerned about humanity, if we believe that lives matter, then this war is an absolute horror, just a terrible mistake in every moral and ethical sense, period.

But if we are only concerned about the battle of nation-states in some cold-blooded realpolitik frame of mind…then this is •still• a terrible mistake, one of the greatest unforced errors of statecraft in modern history.

2026-06-08 18:11:55

Recharged my gravid Aedes traps (GATs) with water that was infested with mosquitoes. This will likely increase their catch rate substantially because mosquitoes love to lay eggs in water that already has mosquitoes. But don't worry, I plopped a bit of a Mosquito Dunk into each one, so nothing will mature to adulthood. PLUS, these traps are designed with screening on the top part, so even if adults emerged, they couldn't get out. They are made by Biogents (Regensburg, Germany). #mosquitos #aedes #biogents

2026-06-05 23:24:43

One of the biggest advantages I find with ApplePay is that if a site supports AP, it bypasses all that shit.

It has encouraged a LOT of impulse purchases that I might have bailed on if a site bugged me for all that shit.

*Disclaimer: yes, Apple, I know….

https://bsky.app/profile/di…

2026-04-08 02:42:43

I bet it's really difficult to get a profile pic like that when you lack a spine. Probably a whole elaborate system behind him holding his head upright.

https://bsky.app/profile/did:plc:jl6bnuiemddpm3orm2kcedxh/post/3miw3zs3dak2f

2026-05-08 03:22:27

ok, I've got some questions for users of #MeshCore

How do you handle custom sensors and modules? In Meshtastic they generally "just work" after setting the pin configuration, but I'm having zero luck. Currently fighting with trying to get a NEO-6M GPS module working with a Heltec V3 radio.

On Meshtastic it worked as soon as I set the pin configuration, but in MeshCore, it's…

2026-04-07 22:51:18

🇺🇦 #NowPlaying on KEXP's #AfternoonShow

IDLES:

🎵 I'm Scum

#IDLES

https://idlesband.bandcamp.com/track/im-scum

https://open.spotify.com/track/0mXDySw7m2OXK6Wd9hc9Js

2026-06-10 02:17:00

For a moment, I mistakenly thought two posts were the same thread, and my brain was busy trying to parse what cattle smuggling had to do with choosing the astronauts for the Artemis mission.