2026-04-28 08:08:41

Disentangling the Effect of Ionic Coupling and Multiple Interfering Terms in Attosecond Molecular Interferometry

Ioannis Makos, Jakub Benda, David Busto, Benjamin Steiner, Barbara Merzuk, Serguei Patchkovskii, Van-Hung Hoang, Uwe Thumm, Zdeněk Mašín, Giuseppe Sansone

https://arxiv.org/abs/2604.23441

2026-03-08 05:55:53

Samsung's consumer device chief TM Roh says it was "open to strategic co-operation" with more AI groups, having recently added Perplexity to its mobile OS (Michael Acton/Financial Times)

https://www.ft.com/content/3752d058-d3ee-41a4-b702-d49ae7f61b5c

2026-05-26 07:53:47

Seeing Inside the Storm: Improving Nowcasting by Integrating Meteorological Drivers

Minghui Qiu, Jun Chen, Lin Chen, Weifeng Chen, Shuxin Zhong, Zhidan Liu, Yu Zhang, Kaishun Wu

https://arxiv.org/abs/2605.24067 https://arxiv.org/pdf/2605.24067 https://arxiv.org/html/2605.24067

arXiv:2605.24067v1 Announce Type: new

Abstract: Most nowcasting systems, built on radar reflectivity, focus on current precipitation, ignoring the atmospheric precursors -- such as low-level convergence, turbulent eddies, and latent heating -- that offer a fleeting window to foresee storm birth. We introduce MeteoLogist, a physics-inspired radar intelligence framework that models the full life cycle of convection -- from its precursors to organized storm evolution. Exploiting these precursors is non-trivial: they originate from multiple meteorological drivers -- thermodynamic, kinematic, and microphysical -- that evolve asynchronously (C1) and remain spatially fragmented (C2). To address this, MeteoLogist comprises three tightly integrated components. The Physics-Tailored Encoders process radar echoes according to their intrinsic physical scales and semantics, forming thermodynamic, kinematic, and microphysical streams that capture distinct dynamical regimes. The Temporal-Phase Aligner addresses C1 by leveraging causal temporal attention to capture when and how different drivers interact and activate. The Cross-Field Spatial Aggregator addresses C2 through cross-regional fusion, aligning weak and scattered precursors across neighboring cells to expose upstream triggers and enforce spatial coherence. Evaluated on 3D-NEXRAD (2020--2022, US-wide), MeteoLogist boosts high-impact detection (CSI40) by 9.7% over strong baselines, and achieves a 37.67% gain during the storm-developing stage -- demonstrating true foresight in sensing storms before they appear. The code can be found in the supplementary material.
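CSI40 in the abstract most likely denotes the Critical Success Index evaluated at a 40 dBZ reflectivity threshold, a standard contingency-table score in nowcasting: hits / (hits + misses + false alarms). The sketch below assumes that reading, counts a pixel as an event when its reflectivity is >= 40 dBZ, and works on flattened grids. The function name `csi` and the toy values are hypothetical illustrations, not taken from the MeteoLogist code.

```python
from typing import Sequence


def csi(pred_dbz: Sequence[float], obs_dbz: Sequence[float], threshold: float = 40.0) -> float:
    """Critical Success Index at a reflectivity threshold (assumed >= semantics).

    pred_dbz and obs_dbz are flattened forecast and observed reflectivity
    grids in dBZ, compared pixel by pixel.
    """
    if len(pred_dbz) != len(obs_dbz):
        raise ValueError("forecast and observation must have the same length")
    hits = misses = false_alarms = 0
    for p, o in zip(pred_dbz, obs_dbz):
        p_event = p >= threshold  # forecast says "high-impact echo"
        o_event = o >= threshold  # observation says "high-impact echo"
        if p_event and o_event:
            hits += 1
        elif o_event:
            misses += 1        # observed but not forecast
        elif p_event:
            false_alarms += 1  # forecast but not observed
        # correct negatives do not enter CSI
    denom = hits + misses + false_alarms
    # CSI is undefined when neither field contains an event
    return hits / denom if denom else float("nan")


if __name__ == "__main__":
    forecast = [45, 42, 10, 50, 30]
    observed = [41, 20, 44, 55, 35]
    print(csi(forecast, observed))  # → 0.5
```

In the toy example, two hits, one miss and one false alarm give 2 / 4 = 0.5. Correct negatives are ignored by design, which is why CSI suits rare, high-impact events such as 40 dBZ cores.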


2026-04-07 09:59:10

Human #echolocation works step by step https://www.sciencenews.org/article/human-echolocation-blind-brain "A study reveals how individual tongue clicks and their echoes …

2026-04-11 02:32:24

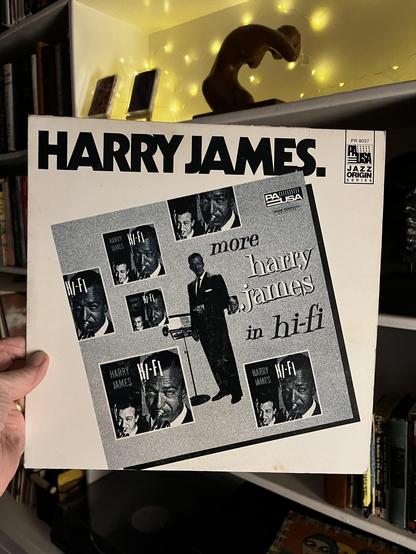

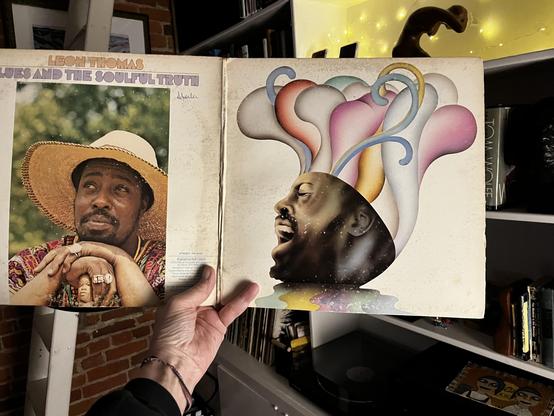

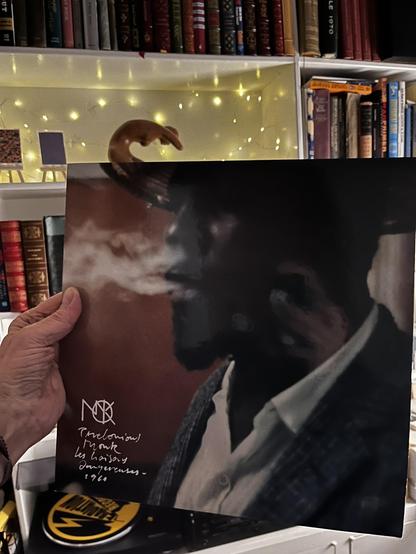

i'm playing records that don't spark joy tonight, thanking them for their service before i trade them in at the record store down the street

#vinyl #jazz #harryjames

2026-03-18 19:48:53

Exploring books on scientific discovery never fails to spark my curiosity. The birth of transformative ideas and their resonance in fields like business is astounding. One key discovery can spur innovation across multiple domains — a true testament to our interconnected knowledge. Forever inspired! #ScienceAndBusiness #CuriosityUnleashed 📚

2026-05-20 07:54:29

Coherent All-Optical Radio Frequency Phase Sensing Using Multiphoton Dressing and Interference

Hongqiao Zhang, Pinrui Shen, Stephanie M. Bohaichuk, Hanna Lippmann, Harald Kubler, James P. Shaffer

https://arxiv.org/abs/2605.19851

2026-03-31 11:12:28

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[1/5]:

- Beyond In-Distribution Success: Scaling Curves of CoT Granularity for Language Model Generalization

Ru Wang, Wei Huang, Selena Song, Haoyu Zhang, Qian Niu, Yusuke Iwasawa, Yutaka Matsuo, Jiaxian Guo

https://arxiv.org/abs/2502.18273 https://mastoxiv.page/@arXiv_csCL_bot/114069031700102129

- Benchmarking NLP-supported Language Sample Analysis for Swiss Children's Speech

Anja Ryser, Yingqiang Gao, Sarah Ebling

https://arxiv.org/abs/2504.00780 https://mastoxiv.page/@arXiv_csCL_bot/114267149909002069

- Cultural Biases of Large Language Models and Humans in Historical Interpretation

Fabio Celli, Georgios Spathulas

https://arxiv.org/abs/2504.02572 https://mastoxiv.page/@arXiv_csCL_bot/114278467094094490

- BRIDGE: Benchmarking Large Language Models for Understanding Real-world Clinical Practice Text

Jiageng Wu, et al.

https://arxiv.org/abs/2504.19467 https://mastoxiv.page/@arXiv_csCL_bot/114420036189999973

- Understanding the Anchoring Effect of LLM with Synthetic Data: Existence, Mechanism, and Potentia...

Yiming Huang, Biquan Bie, Zuqiu Na, Weilin Ruan, Songxin Lei, Yutao Yue, Xinlei He

https://arxiv.org/abs/2505.15392 https://mastoxiv.page/@arXiv_csCL_bot/114550277171100272

- Just as Humans Need Vaccines, So Do Models: Model Immunization to Combat Falsehoods

Raza, Qureshi, Farooq, Lotif, Chadha, Pandya, Emmanouilidis

https://arxiv.org/abs/2505.17870 https://mastoxiv.page/@arXiv_csCL_bot/114572956853819813

- LingoLoop Attack: Trapping MLLMs via Linguistic Context and State Entrapment into Endless Loops

Fu, Jiang, Hong, Li, Guo, Yang, Chen, Zhang

https://arxiv.org/abs/2506.14493 https://mastoxiv.page/@arXiv_csCL_bot/114703502552989170

- GHTM: A Graph-based Hybrid Topic Modeling Approach with a Benchmark Dataset for the Low-Resource ...

Farhana Haque, Md. Abdur Rahman, Sumon Ahmed

https://arxiv.org/abs/2508.00605 https://mastoxiv.page/@arXiv_csCL_bot/114969875643478303

- Link Prediction for Event Logs in the Process Industry

Anastasia Zhukova, Thomas Walton, Christian E. Lobmüller, Bela Gipp

https://arxiv.org/abs/2508.09096 https://mastoxiv.page/@arXiv_csCL_bot/115020938764936882

- AirQA: A Comprehensive QA Dataset for AI Research with Instance-Level Evaluation

Huang, Cao, Zhang, Kang, Wang, Wang, Luo, Zheng, Qian, Chen, Yu

https://arxiv.org/abs/2509.16952 https://mastoxiv.page/@arXiv_csCL_bot/115253526588472475

- Multi-View Attention Multiple-Instance Learning Enhanced by LLM Reasoning for Cognitive Distortio...

Jun Seo Kim, Hyemi Kim, Woo Joo Oh, Hongjin Cho, Hochul Lee, Hye Hyeon Kim

https://arxiv.org/abs/2509.17292 https://mastoxiv.page/@arXiv_csCL_bot/115253586227941157

- Dual-Space Smoothness for Robust and Balanced LLM Unlearning

Han Yan, Zheyuan Liu, Meng Jiang

https://arxiv.org/abs/2509.23362 https://mastoxiv.page/@arXiv_csCL_bot/115293308293558024

- The Rise of AfricaNLP: Contributions, Contributors, Community Impact, and Bibliometric Analysis

Tadesse Destaw Belay, et al.

https://arxiv.org/abs/2509.25477 https://mastoxiv.page/@arXiv_csCL_bot/115298213432594791

- Open ASR Leaderboard: Towards Reproducible and Transparent Multilingual and Long-Form Speech Reco...

Srivastav, Zheng, Bezzam, Le Bihan, Koluguri, Żelasko, Majumdar, Moumen, Gandhi

https://arxiv.org/abs/2510.06961 https://mastoxiv.page/@arXiv_csCL_bot/115343748052193267

- Neuron-Level Analysis of Cultural Understanding in Large Language Models

Taisei Yamamoto, Ryoma Kumon, Danushka Bollegala, Hitomi Yanaka

https://arxiv.org/abs/2510.08284 https://mastoxiv.page/@arXiv_csCL_bot/115349533441895984

- CLMN: Concept based Language Models via Neural Symbolic Reasoning

Yibo Yang

https://arxiv.org/abs/2510.10063 https://mastoxiv.page/@arXiv_csCL_bot/115372392366793754

- Schema for In-Context Learning

Chen, Chen, Wang, Leong, Fung, Bernales, Aspuru-Guzik

https://arxiv.org/abs/2510.13905 https://mastoxiv.page/@arXiv_csCL_bot/115389057899856601

- Evaluating Latent Knowledge of Public Tabular Datasets in Large Language Models

Matteo Silvestri, Fabiano Veglianti, Flavio Giorgi, Fabrizio Silvestri, Gabriele Tolomei

https://arxiv.org/abs/2510.20351 https://mastoxiv.page/@arXiv_csCL_bot/115428615784704418

- LuxIT: A Luxembourgish Instruction Tuning Dataset from Monolingual Seed Data

Julian Valline, Cedric Lothritz, Siwen Guo, Jordi Cabot

https://arxiv.org/abs/2510.24434 https://mastoxiv.page/@arXiv_csCL_bot/115457025096322944

- Surfacing Subtle Stereotypes: A Multilingual, Debate-Oriented Evaluation of Modern LLMs

Muhammed Saeed, Muhammad Abdul-mageed, Shady Shehata

https://arxiv.org/abs/2511.01187 https://mastoxiv.page/@arXiv_csCL_bot/115491321130591723
