2025-06-09 10:13:42

Corrector Sampling in Language Models

Itai Gat, Neta Shaul, Uriel Singer, Yaron Lipman

https://arxiv.org/abs/2506.06215 https://arxiv…

2025-06-04 20:09:39

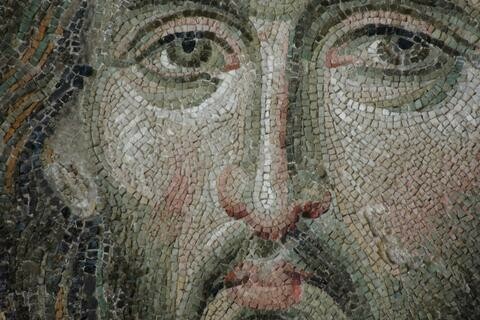

“I know faithful people, including faithful preachers, who persist in hope for the future, against all odds, grounded in their hope in God’s steadfastness. That hope emboldens them both to hear and preach the truth of God’s compassion and of God’s justice, and to act accordingly. Their hope emboldens me.”

—Roger Ferlo ’79 Ph.D. writing in the latest issue of our journal, Reflections

2025-07-04 10:11:31

Context-aware gate set tomography: Improving the self-consistent characterization of trapped-ion universal gate sets by leveraging non-Markovianity

Pablo Viñas, Alejandro Bermudez

https://arxiv.org/abs/2507.02542

2025-06-21 02:34:13

Why AI can't possibly make you more productive; long

#AI and "productivity", some thoughts:

Productivity is a concept that isn't entirely meaningless outside the context of capitalism, but it's a concept that is heavily inflected in a capitalist context. In many uses today it effectively means "how much you can satisfy and/or exceed your boss' expectations." This is not really what it should mean: even in an anarchist utopia, people would care about things like how many shirts they can produce in a week, although in an "I'd like to voluntarily help more people" way rather than an "I need to meet this quota to earn my survival" way. But let's roll with this definition for a second, because it's almost certainly what your boss means when they say "productivity", and understanding that word in a different (even if truer) sense is therefore inherently dangerous.

Accepting "productivity" to mean "satisfying your boss' expectations," I will now claim: the use of generative AI cannot increase your productivity.

Before I dive in, it's imperative to note that the big generative models which most people think of as constituting "AI" today are evil. They are 1: pouring fuel on our burning planet, 2: psychologically strip-mining a class of data laborers who are exploited for their precarity, 3: enclosing, exploiting, and polluting the digital commons, and 4: stealing labor from broad classes of people many of whom are otherwise glad to give that labor away for free provided they get a simple acknowledgement in return. Any of these four "ethical issues" should be enough *alone* to cause everyone to simply not use the technology. These ethical issues are the reason that I do not use generative AI right now, except for in extremely extenuating circumstances. These issues are also convincing for a wide range of people I talk to, from experts to those with no computer science background. So before I launch into a critique of the effectiveness of generative AI, I want to emphasize that such a critique should be entirely unnecessary.

But back to my thesis: generative AI cannot increase your productivity, where "productivity" has been defined as "how much you can satisfy and/or exceed your boss' expectations."

Why? In fact, what the fuck? Every AI booster I've met has claimed the opposite. They've given me personal examples of time saved by using generative AI. Some of them even truly believe this. Sometimes I even believe they saved time without horribly compromising on quality (and often, your boss doesn't care about quality anyways if the lack of quality is hard to measure or doesn't seem likely to impact short-term sales/feedback/revenue). So if generative AI genuinely lets you write more emails in a shorter period of time, or close more tickets, or something else along these lines, how can I say it isn't increasing your ability to meet your boss' expectations?

The problem is simple: your boss' expectations are not a fixed target. Never have been. In virtue of being someone who oversees and pays wages to others under capitalism, your boss' game has always been: pay you less than the worth of your labor, so that they can accumulate profit and thus more capital to remain in charge instead of being forced into working for a wage themselves. Sure, there are layers of management caught in between who aren't fully in this mode, but they are irrelevant to this analysis. It matters not how much you please your manager if your CEO thinks your work is not worth the wages you are being paid. And using AI actively lowers the value of your work relative to your wages.

Why do I say that? It's actually true in several ways. The most obvious: using generative AI lowers the quality of your work, because the work it produces is shot through with errors, and when your job is reduced to proofreading slop, you are bound to tire a bit, relax your diligence, and let some mistakes through. More than you would have if you were actually doing and taking pride in the work. Examples are innumerable and frequent, from journalists to lawyers to programmers, and we laugh at them "haha how stupid to not check whether the books the AI reviewed for you actually existed!" but on a deeper level if we're honest we know we'd eventually make the same mistake ourselves (bonus game: spot the swipe-typing typos I missed in this post; I'm sure there will be some).

But using generative AI also lowers the value of your work in another much more frightening way: in this era of hype, it demonstrates to your boss that you could be replaced by AI. The more you use it, and no matter how much you can see that your human skills are really necessary to correct its mistakes, the more it appears to your boss that they should hire the AI instead of you. Or perhaps retain 10% of the people in roles like yours to manage the AI doing the other 90% of the work. Paradoxically, the *more* you get done in terms of raw output using generative AI, the more it looks to your boss as if there's an opportunity to get enough work done with even fewer expensive humans. Of course, the decision to fire you and lean more heavily into AI isn't really a good one for long-term profits and success, but the modern boss did not get where they are by considering long-term profits. By using AI, you are merely demonstrating your redundancy, and the more you get done with it, the more redundant you seem.

In fact, there's even a third dimension to this: by using generative AI, you're also providing its purveyors with invaluable training data that allows them to make it better at replacing you. It's generally quite shitty right now, but the more use it gets by competent & clever people, the better it can become at the tasks those specific people use it for. Using the currently-popular algorithm family, there are limits to this; I'm not saying it will eventually transcend the mediocrity it's entwined with. But it can absolutely go from underwhelmingly mediocre to almost-reasonably mediocre with the right training data, and data from prompting sessions is both rarer and more useful than the base datasets it's built on.

For all of these reasons, using generative AI in your job is a mistake that will likely lead to your future unemployment. To reiterate, you should already not be using it because it is evil and causes specific and inexcusable harms, but in case like so many you just don't care about those harms, I've just explained to you why for entirely selfish reasons you should not use it.

If you're in a position where your boss is forcing you to use it, my condolences. I suggest leaning into its failures instead of trying to get the most out of it, and as much as possible, showing your boss very clearly how it wastes your time and makes things slower. Also, point out the dangers of legal liability for its mistakes, and make sure your boss is aware of the degree to which any of your AI-eager coworkers are producing low-quality work that harms organizational goals.

Also, if you've read this far and aren't yet of an anarchist mindset, I encourage you to think about the implications of firing 75% of the (at least white-collar) workforce in order to make more profit while fueling the climate crisis and in most cases also propping up dictatorial figureheads in government. When *either* the AI bubble bursts *or* the techbros get to live out the beginnings of their worker-replacement fantasies, there are going to be an unimaginable number of economically desperate people living in increasingly expensive times. I'm the kind of optimist who thinks that the resulting social crucible, though perhaps through terrible violence, will lead to deep social changes that effectively unseat from power the ultra-rich that continue to drag us all down this destructive path, and I think it's worth some thinking now about what you might want the succeeding stable social configuration to look like so you can advocate towards that during points of malleability.

As others have said more eloquently, generative AI *should* be a technology that makes human lives on average easier, and it would be were it developed & controlled by humanists. The only reason that it's not is that it's developed and controlled by terrible greedy people who use their unfairly hoarded wealth to immiserate the rest of us in order to maintain their dominance. In the long run, for our very survival, we need to depose them, and I look forward to what the term "generative AI" will mean after that finally happens.

2025-06-29 19:31:19

"""

Writing has been an instrument for some of the highest expressions of the human spirit: poetry, philosophy, science. But to understand it — why it came into being, how it changed the human experience — we have to first appreciate its crass practicality. It evolved mainly as an instrument of the mundane: the economic, the administrative, the political.

Confusion over this point is understandable. Some scholars have equated the origin of “civilization” with the origin of writing. Laypeople sometimes take this equation to mean that with writing humanity put aside its barbarous past and started behaving in gentlemanly fashion, sipping tea and remembering to say “please.” And indeed, this may be only a mild caricature of what some nineteenth-century scholars actually meant by the equation: writing equals Greece equals Plato; illiteracy equals barbarism equals Attila the Hun.

But, in truth, if you add literacy to Attila the Hun, you don’t get Plato. You get Genghis Khan. During the thirteenth century, he administered what even today is the largest continuous land empire in the history of the world. And he could do so only because he had the requisite means of control: a script that, when carried by his pony express, amounted to the fastest large-scale information-processing technology of his era. One consequence was to give pillaging a scope beyond Attila’s wildest dreams. Information technology, like energy technology or any other technology, can be a tool for good or bad. By itself, it is no guarantor of moral progress or civility.

"""

(Robert Wright, Nonzero: The Logic of Human Destiny)

2025-07-04 09:06:11

Pressure-induced band gap energy increase in crystalline lactose

Igor A. Fedorov

https://arxiv.org/abs/2507.02629 https://arxiv.org/p…

2025-06-05 07:28:03

The NASA Exoplanet Archive and Exoplanet Follow-up Observing Program: Data, Tools, and Usage

Jessie L. Christiansen, Douglas L. McElroy, Marcy Harbut, David R. Ciardi, Megan Crane, John Good, Kevin K. Hardegree-Ullman, Aurora Y. Kesseli, Michael B. Lund, Meca Lynn, Ananda Muthiar, Ricky Nilsson, Toba Oluyide, Michael Papin, Amalia Rivera, Melanie Swain, Nicholas D. Susemiehl, Raymond Tam, Julian van Eyken, Charles Beichman

2025-06-29 18:21:32

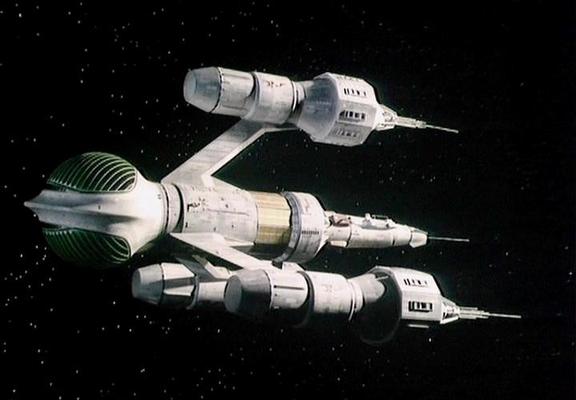

Series C, Episode 02 - Powerplay

AVON: What do we get?

KLEGG: You'll be teleported onto the surface of the nearest planet. You can build your security into the computer program. It won't respond to me until you are safely off the ship.

https://blake.torpidity.net/m/302/498

2025-06-02 07:36:21

Optimal Haar random fermionic linear optics circuits

Paolo Braccia, N. L. Diaz, Martin Larocca, M. Cerezo, Diego García-Martín

https://arxiv.org/abs/2505.24212

2025-07-03 09:19:10

Constraints on the ejecting-crust activity model on comet 67P/Churyumov-Gerasimenko

Nicholas Attree, Pedro Gutiérrez, Christian Schuckart, Johannes Markkanen, Yuri Skorov, Yingqi Xin, Dorothea Bischoff, Bastian Gundlach, Jurgen Blum

https://arxiv.org/abs/2507.01441