2026-04-08 15:10:56

Alibaba and China Telecom launch a data center in southern China that is powered by 10,000 of Alibaba's Zhenwu chips designed for AI training and inferencing (Arjun Kharpal/CNBC)

https://www.cnbc.com/2026/04/08/china-alibaba-data-center-ai-chips-zhenwu.html…

2026-06-08 20:13:36

Did I ever mention that my little brother Arthur makes really cool glass art? And that, as of today, his work is on show at the Royal Academy of Art Summer Exhibition?

https://www.instagram.com/p/DY65z5isFG1/

2026-04-08 03:04:42

Thinking about buying a seismic sensor for my Raspberry Pi.

"Yeah, I know we're moving away from the seismically active zone of Switzerland, but you could record the shockwaves when he-who-must-not-be-named uses the nukes, you'd have first hand data! You'd be among the first to know that mankind is gonna end!"

Some days I'm not sure about the mental health of some of my inner voices. Especially since it said "when", not "if".

2026-06-06 11:56:43

I gave the Strangers in a Tangled Wilderness crew the significant challenge of trying to make me sound coherent in this interview with Inmn Neruin. I was managing some pretty significant sleep deprivation and a mild cold, but I think it turned out pretty good (despite my best efforts).

But I did talk a lot about my trauma related to some of my experience living in #rural areas, and in doing that I was definitely not as careful as I could have been to talk about that as a trauma experience rather than as reality. Some people get trapped in rural areas, but other folks live there because they find beautiful things.

Not only is #RuralOrganizing critical (Trumpism grew out of areas neglected by "the left"), but rural living can be beautiful and rewarding. I briefly mentioned growing up throwing knives. A friend of mine lived way out in the woods, and there's something special about having a playground that spans several square miles. Intertwined with the old settler colonialism, antisemitism, *phobias, and isolation that are at the heart of a lot of my personal rural misery, there's also a joyous and feral thing that taught me a lot and, I think, helped me organize more fearlessly. That thing is both individualist and collectivist, in different ways, and I don't think it's well understood without experiencing it. There's far more nuance than I was able to offer (and I definitely could have been more careful not to play into anti-rural stereotypes).

I think I said, "no one wants to live there." People do. You do. So let me try to fix that by giving you a chance to talk about that. I also talked about how slow things move, how nothing changes, but there are also sometimes opportunities to change things in huge ways specifically because structures don't exist to stop those changes. So while I'm bumping this zine and interview, also I want to use this as an opportunity to welcome my rural comrades to help fill in the gaps:

What draws you to where you are?

Do you choose to live in a rural area vs urban, and why?

Is there any other thing I've said that you would like to correct?

What other things should folks know?

https://www.tangledwilderness.org/features/near-death

@dawid@social.craftknight.com

2026-05-05 20:55:40

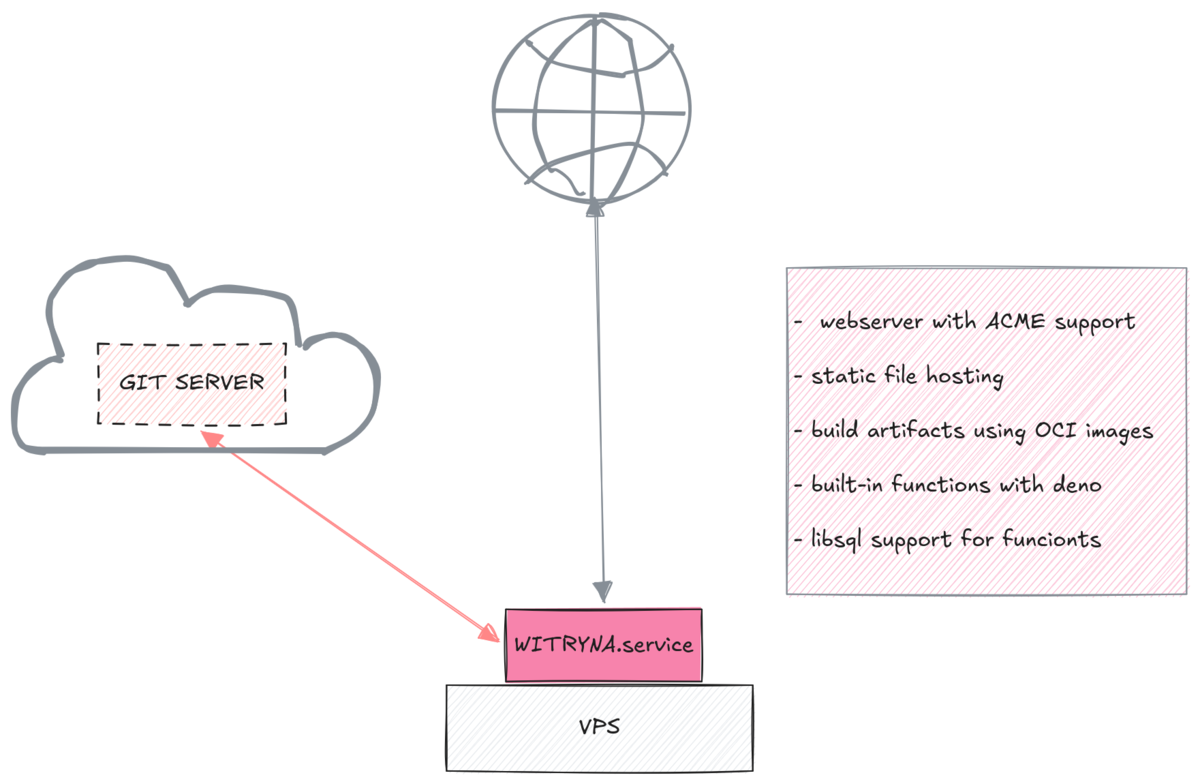

What if it didn't have to be hard? What if a single config took care of all of it? Life would be beautiful :D

Literally: we point it at a git repo, and the service tracks changes and does everything for us. No Docker, no external dependencies, one static binary running as a service. It builds the site, compiles the functions, and serves everything at the given address, handling ACME, redirects, compression, and headers: out of the box, everything that was on the diagram from the previous post. It would also be nice if it supported _redirects …
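To make the `_redirects` wish a bit more concrete, here is a minimal sketch of reading such a file. It assumes the Netlify-style line format (`from to [status]`, with `#` comment lines) and a default status of 301; the names `Redirect` and `parse_redirects` are hypothetical and not part of any tool mentioned in the post.

```python
from typing import NamedTuple


class Redirect(NamedTuple):
    source: str
    target: str
    status: int


def parse_redirects(text: str, default_status: int = 301) -> list[Redirect]:
    """Parse `_redirects`-style content: one `from to [status]` rule per line."""
    rules = []
    for raw in text.splitlines():
        line = raw.strip()
        # Skip blank lines and comment lines.
        if not line or line.startswith("#"):
            continue
        parts = line.split()
        if len(parts) < 2:
            continue  # Malformed rule: no target given.
        # Use the third field as the status only if it is a plain number.
        status = int(parts[2]) if len(parts) >= 3 and parts[2].isdigit() else default_status
        rules.append(Redirect(parts[0], parts[1], status))
    return rules
```

A server could load these rules once per deploy and check incoming paths against them before falling back to the static files. Wildcards and placeholders are kept as plain strings here; matching them is left out of this sketch.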

2026-04-08 06:02:17

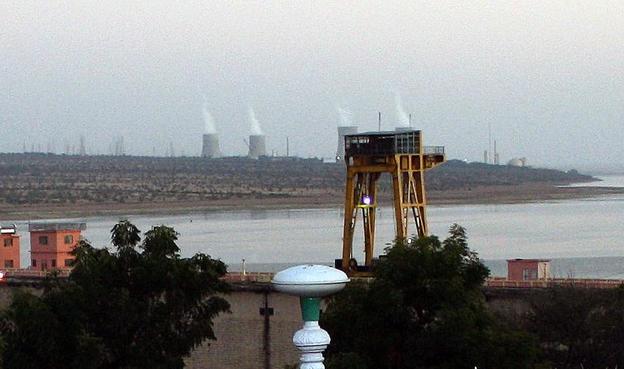

35 years ago today: on 8 April 1991, a leak occurred at the #Rajasthan-2 nuclear power plant (India) during refuelling because of a defective mechanical seal, and 600 kg of heavy water escaped.

2026-05-31 19:28:04

After reading this piece I updated my DNS test zone to include a similar textual phrase.

Test tools should be able to test things, even if that discomforts AI crawlers that are trying to steal the intellectual property created by others.

"Fed up with vibe coders, dev sneaks data-nuking prompt injection into their code"

2026-06-01 08:00:27

Things you don't want to find in your basement... 🫢

The discovery of an old smoke detector bearing a radioactivity warning sticker caused a stir in Hasenkrug, in the Segeberg district.

Read the article: https://heise.de/-10419905?wt_mc=sm.re

@dawid@social.craftknight.com

2026-06-07 17:57:29

Recently I heard a strange grinding noise from the fan in my Framework 13 laptop. Probably dog hair again.

I powered the laptop off, took it apart with the little screwdriver that came in the box, lifted the heatsink off the CPU, and even unscrewed the fan. The same screwdriver fit, just the other end with the small Phillips bit.

Inside, of course, was a heap of husky hair mixed with balls of dust. While I was at it, I replaced the thermal paste. The whole thing took me 15 min, 10 min of which I spent looking for the thermal paste in…

@dawid@social.craftknight.com

2026-03-29 10:37:32

If there were a tool that could expose `./functions` the way Netlify/Vercel/Cloudflare do, my problem from the other day would be solved.

What's more, it could be a genuinely simple way to self-host static sites with a simple backend. And more still: nothing I have been building would be needed. A platform that brings "serverless" architecture to self-hosting.

We have a repo with a static site, say Astro/Hugo/Gatsby, whatever you like, and in the ./functions folder…
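As a rough illustration of what "exposing `./functions`" could mean, here is a small sketch of file-based routing. It assumes a simplified rule (the file path under the functions folder becomes the URL path, and an `index` file maps to its directory); the hosted platforms named above each have their own exact rules, and the helper name `route_for` is hypothetical.

```python
from pathlib import PurePosixPath


def route_for(function_file: str, root: str = "functions") -> str:
    """Map a file under the functions folder to the URL path that would serve it."""
    # Path relative to the functions folder, e.g. "api/hello.js".
    rel = PurePosixPath(function_file).relative_to(root)
    # Drop the file extension and split into path segments.
    parts = list(rel.with_suffix("").parts)
    # Assumed convention: "index" files answer for their parent directory.
    if parts and parts[-1] == "index":
        parts.pop()
    return "/" + "/".join(parts)
```

With this rule, `functions/api/hello.js` would be served at `/api/hello` and `functions/index.js` at `/`.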