2026-04-08 15:00:04

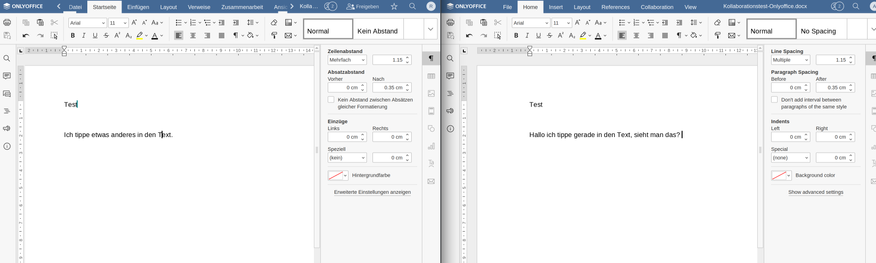

windsurfers: Windsurfers network (1986)

A network of interpersonal contacts among windsurfers in southern California during the fall of 1986. The edge weights indicate perceived social affiliation, measured by a task in which each individual was asked to sort cards bearing the other surfers’ names in order of closeness.

This network has 43 nodes and 336 edges.
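The two counts above are enough for a quick check of how dense the network is. This is a minimal sketch that assumes the network is an undirected simple graph with no self-loops or multi-edges (the description does not say so) and ignores the edge weights. `graph_stats` is a hypothetical helper, not part of any dataset tooling.

```shell
#!/usr/bin/env bash
# graph_stats N M: average degree and density of an undirected simple graph
# with N nodes and M edges (hypothetical helper; assumes no self-loops or
# multi-edges, which the dataset description does not state).
graph_stats() {
  local n=$1 m=$2
  # awk does the floating-point math, since bash arithmetic is integer-only.
  awk -v n="$n" -v m="$m" 'BEGIN {
    # Each undirected edge adds 2 to the total degree.
    printf "average degree: %.2f\n", 2 * m / n
    # Fraction of the n(n-1)/2 possible pairs that are connected.
    printf "density: %.3f\n", 2 * m / (n * (n - 1))
  }'
}

# Figures from the description: 43 nodes, 336 edges.
graph_stats 43 336
```

With 43 nodes and 336 edges, this gives an average degree of about 15.6 and a density of about 0.37. In other words, a little over a third of all possible pairs of surfers are linked.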

Tags: Social, Offline, Weighted

2026-04-07 06:40:07

Robert Creeley at 100, A Celebration of His Life and Poetry

https://ift.tt/DoHiKOb

updated: Monday, April 6, 2026 - 3:01pm

full name / name of organization: The Charles Olson…

via Input 4 RELCFP

2026-02-25 16:08:18

Replaced article(s) found for cs.LG. https://arxiv.org/list/cs.LG/new

[5/6]:

- Watermarking Degrades Alignment in Language Models: Analysis and Mitigation

Apurv Verma, NhatHai Phan, Shubhendu Trivedi

https://arxiv.org/abs/2506.04462 https://mastoxiv.page/@arXiv_csCL_bot/114635190037336859

- Sensory-Motor Control with Large Language Models via Iterative Policy Refinement

Jônata Tyska Carvalho, Stefano Nolfi

https://arxiv.org/abs/2506.04867 https://mastoxiv.page/@arXiv_csAI_bot/114635187854195641

- ICE-ID: A Novel Historical Census Dataset for Longitudinal Identity Resolution

de Carvalho, Popov, Kaatee, Correia, Thórisson, Li, Björnsson, Sigurðarson, Dibangoye

https://arxiv.org/abs/2506.13792 https://mastoxiv.page/@arXiv_csAI_bot/114703312162525342

- Feedback-driven recurrent quantum neural network universality

Lukas Gonon, Rodrigo Martínez-Peña, Juan-Pablo Ortega

https://arxiv.org/abs/2506.16332 https://mastoxiv.page/@arXiv_quantph_bot/114732532383196043

- Programming by Backprop: An Instruction is Worth 100 Examples When Finetuning LLMs

Cook, Sapora, Ahmadian, Khan, Rocktaschel, Foerster, Ruis

https://arxiv.org/abs/2506.18777 https://mastoxiv.page/@arXiv_csAI_bot/114738213040759661

- Stochastic Quantum Spiking Neural Networks with Quantum Memory and Local Learning

Jiechen Chen, Bipin Rajendran, Osvaldo Simeone

https://arxiv.org/abs/2506.21324 https://mastoxiv.page/@arXiv_csNE_bot/114754367612728319

- Enjoying Non-linearity in Multinomial Logistic Bandits: A Minimax-Optimal Algorithm

Pierre Boudart (SIERRA), Pierre Gaillard (Thoth), Alessandro Rudi (PSL, DI-ENS, Inria)

https://arxiv.org/abs/2507.05306 https://mastoxiv.page/@arXiv_statML_bot/114822374525501660

- Characterizing State Space Model and Hybrid Language Model Performance with Long Context

Saptarshi Mitra, Rachid Karami, Haocheng Xu, Sitao Huang, Hyoukjun Kwon

https://arxiv.org/abs/2507.12442 https://mastoxiv.page/@arXiv_csAR_bot/114867589638074984

- Is Exchangeability better than I.I.D to handle Data Distribution Shifts while Pooling Data for Da...

Ayush Roy, Samin Enam, Jun Xia, Won Hwa Kim, Vishnu Suresh Lokhande

https://arxiv.org/abs/2507.19575 https://mastoxiv.page/@arXiv_csCV_bot/114935399825741861

- TASER: Table Agents for Schema-guided Extraction and Recommendation

Nicole Cho, Kirsty Fielding, William Watson, Sumitra Ganesh, Manuela Veloso

https://arxiv.org/abs/2508.13404 https://mastoxiv.page/@arXiv_csAI_bot/115060386723032051

- Morphology-Aware Peptide Discovery via Masked Conditional Generative Modeling

Nuno Costa, Julija Zavadlav

https://arxiv.org/abs/2509.02060 https://mastoxiv.page/@arXiv_qbioBM_bot/115139546511384706

- PCPO: Proportionate Credit Policy Optimization for Aligning Image Generation Models

Jeongjae Lee, Jong Chul Ye

https://arxiv.org/abs/2509.25774 https://mastoxiv.page/@arXiv_csCV_bot/115298580419859537

- Multi-hop Deep Joint Source-Channel Coding with Deep Hash Distillation for Semantically Aligned I...

Didrik Bergström, Deniz Gündüz, Onur Günlü

https://arxiv.org/abs/2510.06868 https://mastoxiv.page/@arXiv_csIT_bot/115343320768797486

- MoMaGen: Generating Demonstrations under Soft and Hard Constraints for Multi-Step Bimanual Mobile...

Chengshu Li, et al.

https://arxiv.org/abs/2510.18316 https://mastoxiv.page/@arXiv_csRO_bot/115416889485910123

- A Spectral Framework for Graph Neural Operators: Convergence Guarantees and Tradeoffs

Roxanne Holden, Luana Ruiz

https://arxiv.org/abs/2510.20954 https://mastoxiv.page/@arXiv_statML_bot/115445273121677005

- Breaking Agent Backbones: Evaluating the Security of Backbone LLMs in AI Agents

Bazinska, Mathys, Casucci, Rojas-Carulla, Davies, Souly, Pfister

https://arxiv.org/abs/2510.22620 https://mastoxiv.page/@arXiv_csCR_bot/115451397563132982

- Uncertainty Calibration of Multi-Label Bird Sound Classifiers

Raphael Schwinger, Ben McEwen, Vincent S. Kather, René Heinrich, Lukas Rauch, Sven Tomforde

https://arxiv.org/abs/2511.08261 https://mastoxiv.page/@arXiv_csSD_bot/115535982708483824

- Two-dimensional RMSD projections for reaction path visualization and validation

Rohit Goswami (Institute IMX and Lab-COSMO, École polytechnique fédérale de Lausanne)

https://arxiv.org/abs/2512.07329 https://mastoxiv.page/@arXiv_physicschemph_bot/115688910885717951

- Distribution-informed Online Conformal Prediction

Dongjian Hu, Junxi Wu, Shu-Tao Xia, Changliang Zou

https://arxiv.org/abs/2512.07770 https://mastoxiv.page/@arXiv_statML_bot/115689281155541568

- Coupling Experts and Routers in Mixture-of-Experts via an Auxiliary Loss

Ang Lv, Jin Ma, Yiyuan Ma, Siyuan Qiao

https://arxiv.org/abs/2512.23447 https://mastoxiv.page/@arXiv_csCL_bot/115808311310246601

2026-04-03 09:42:24

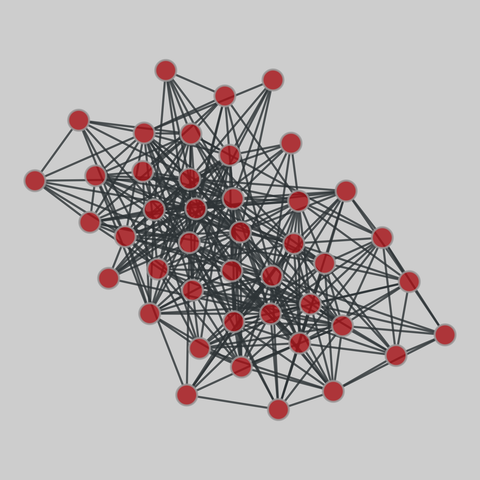

GoldenDict-ng's pop-up window is currently somewhat buggy on KDE, so this script might help.

You'll need kdotool and wl-clipboard. Add the script to the KDE menu and assign it a global keyboard shortcut. The shortcut also works when the clipboard is empty (it then just shows GoldenDict).
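Before binding the script to a shortcut, you can confirm that its dependencies are installed. This is a minimal sketch that assumes the command names used in the script itself: `kdotool`, `wl-paste` (from wl-clipboard), and `goldendict`. `check_deps` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# check_deps CMD...: report every command not found on PATH.
# Returns 0 if all are present, 1 otherwise (hypothetical helper).
check_deps() {
  local missing=0 cmd
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing: $cmd" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Command names taken from the toggle script; wl-paste ships with wl-clipboard.
if check_deps kdotool wl-paste goldendict; then
  echo "all dependencies found"
else
  echo "install the missing tools first" >&2
fi
```

`command -v` is a shell builtin, so the check itself needs no extra tools. The script also has to be executable (`chmod +x`) before the KDE menu entry can launch it.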

#GoldenDict

2026-03-05 03:18:50

One day after California’s Democratic party urged candidates without a “viable path” in the state’s crucial race for governor to drop out, the crowded field showed no sign of winnowing down.

At least nine Democrats are in the running to replace the outgoing governor, Gavin Newsom, with no clear frontrunner, 💥 which has fueled fears that the number of candidates could lead to two Republicans advancing to the November election.

Rusty Hicks, the chair of California’…

2026-04-04 19:00:40

Happy to have a paper, a performance and an 'alt.nime' contribution accepted to the NIME (New Interfaces for Musical Expression) conference in London!

The paper is a collaborative one about Uzu languages; the draft is here: https://codeberg.org/uzu/nime2026/src/branch/main/uzu.pdf…

2026-03-02 10:55:41

Q&A with Kickstarter CEO Everette Taylor on modernizing the platform, attracting new creators, managing a fully remote, four-day-per-week workplace, and more (Jordyn Holman/New York Times)

https://www.nytimes.com/2026/03/01/busines

2026-03-01 10:46:49

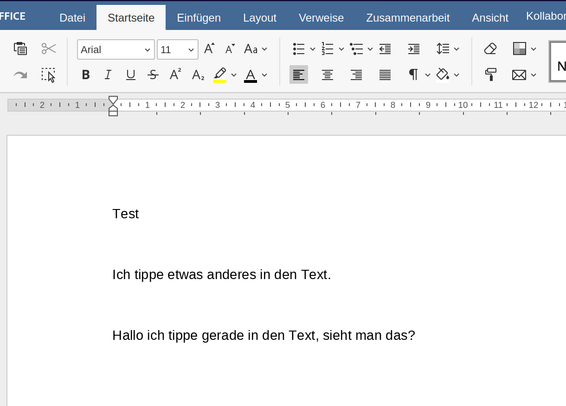

UPDATE2: Problem solved; with an external Community Server, everything now runs fine. So the cause was some problem between the Nextcloud app documentserver_community and the server/PHP.

----

I'm currently testing #Onlyoffice vs. #Collabora for an article and am fairly en…

![GoldenDict-ng toggle script](https://cdn.masto.host/socialpetertoushkoveu/media_attachments/files/116/340/088/967/083/421/small/7a32486afec916dc.jpg)

```bash
#!/bin/bash
# Toggle GoldenDict-ng: close it if it is the active window; otherwise
# look up the primary selection and bring the window to the front.

WINDOW_ID=$(kdotool search --name "GoldenDict-ng")
ACTIVE_ID=$(kdotool getactivewindow)

if [ "$WINDOW_ID" == "$ACTIVE_ID" ]; then
    # Window is active and on top
    kdotool -q search --name "GoldenDict-ng" windowclose
else
    # Window is either hidden, minimized, or in the background
    goldendict "$(wl-paste --primary)" # will grab the whole selection; remove quotes for the first word only
    kdotool -q search --name "GoldenDict-ng" windowactivate
fi

exit 0
```