2025-09-12 05:36:11

I was googling whether there was a logo for the Open Source A.I. definition (OSAID: https://opensource.org/ai), and I ended up on one of those jolly logo generator websites. I think this one has delightful stalefish/surströmming vibes.

... and while you're here: Why not submit a talk proposal for the Every…

2025-06-12 03:08:48

2025-08-03 14:35:10

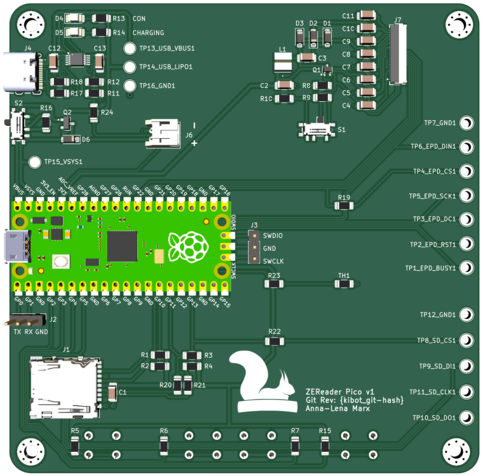

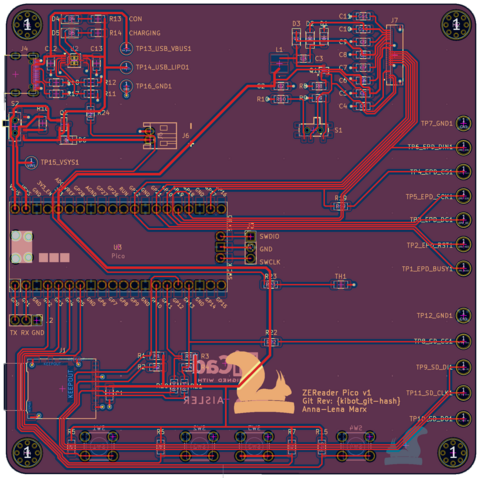

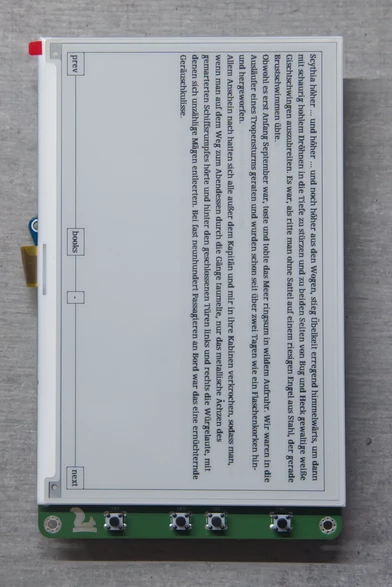

Did you know I studied electrical engineering alongside my job? On Thursday, I finished my bachelor's thesis with the final presentation, and it's finally time to present my project: ZEReader, a microcontroller-based e-reader.

Inspired by the Open Book Project by Joey Castillo, I designed my own platform from scratch. My focus was on building a reader usable in everyday life that is capable of handling books in the EPUB format. The project is still in a very early phase, but it shows…

2025-09-05 13:15:19

"""

In melancholy, the spirits are carried away by an agitation, but a weak agitation that lacks power or violence, a sort of impotent upset that follows neither a particular path nor the aperta opercula [open ways], but traverses the cerebral matter constantly creating new pores. Yet the spirits do not wander far on the new paths they create, and their agitation dies down rapidly, as their strength is quickly spent and motion comes to a halt: ‘non longe perveniunt’ [they do not reach far]. A trouble of this nature, common to all delirium, does not have the power to produce on the surface of the body the violent movements or the cries to be observed in mania and frenzy. Melancholy never attains frenzy; it is a madness always at the limits of its own impotence. That paradox is explained by the secret alterations in the spirits. Ordinarily, they travel with the speed and instantaneous transparency of rays of light, but in melancholy they become weighed down with night, becoming ‘obscure, thick and dark’, and the images of things that they bring before consciousness are ‘in a shadow, or covered with darkness’. As a result they move more slowly, and are more like a dark, chemical vapour than pure light. This chemical vapour is acid in nature, rather than sulphurous or alcoholic, for in acid vapours the particles are mobile and incapable of repose, but their activity is weak and without consequence. When they are distilled, all that remains in the still is a kind of insipid phlegm. Acid vapours, therefore, are taken to have the same properties as melancholy, whereas alcoholic vapours, which are always ready to burst into flames, are more related to frenzy, and sulphurous vapours bring on mania, as they are agitated by continuous, violent movement. If the ‘formal reason and causes’ of melancholy were to be sought, it made sense to look for them in the vapours that rose up from the blood to the head, and which had degenerated into ‘an acetous or sharp distillation’. 
A cursory glance seems to indicate that a melancholy of spirits and a whole chemistry of humours lies behind Willis’ analyses, but in fact his guiding principle mostly reflects the immediate qualities of the melancholic illness: an impotent disorder, and the shadow that comes over the spirit with an acrid acidity that slowly corrodes the heart and the mind. The chemistry of acids is not an explanation of the symptoms, but a qualitative option: a whole phenomenology of melancholic experience.

"""

(Michel Foucault, History of Madness)

2025-09-01 08:45:00

Spahn and Miersch open to using Russian assets

The parliamentary group leaders of the CDU/CSU and the SPD, Jens Spahn and Matthias Miersch, have signaled that they are open to using frozen Russian assets to support Ukraine. Miersch pointed to the ongoing talks among the Europeans about further sanctions against Russia and said: "In that respect, all options are on the table."


2025-08-04 15:49:00

Should we teach vibe coding? Here's why not.

Should AI coding be taught in undergrad CS education?

1/2

I teach undergraduate computer science labs, including for intro and more-advanced core courses. I don't publish (non-negligible) scholarly work in the area, but I've got years of craft expertise in course design, and I do follow the academic literature to some degree. In other words, I'm not the world's leading expert, but I have spent a lot of time thinking about course design, and consider myself competent at it, with plenty of direct experience in what knowledge & skills I can expect from students as they move through the curriculum.

I'm also strongly against most uses of what's called "AI" these days (specifically, generative deep neural networks as supplied by our current cadre of techbros). There are a surprising number of completely orthogonal reasons to oppose the use of these systems, and a very limited number of reasonable exceptions (overcoming accessibility barriers is an example). On the grounds of environmental and digital-commons-pollution costs alone, using specifically the largest/newest models is unethical in most cases.

But as any good teacher should, I constantly question these evaluations, because I worry about the impact on my students should I eschew teaching relevant tech for bad reasons (and even for good reasons). I also want to make my reasoning clear to students, who should absolutely question me on this. That inspired me to ask a simple question: ignoring for one moment the ethical objections (which we shouldn't, of course; they're very stark), at what level in the CS major could I expect to teach a course about programming with AI assistance, and expect students to succeed at a more technically demanding final project than a course at the same level where students were banned from using AI? In other words, at what level would I expect students to actually benefit from AI coding "assistance?"

To be clear, I'm assuming that students aren't using AI in other aspects of coursework: the topic of using AI to "help you study" is a separate one (TL;DR its gross value is not negative, but it's mostly not worth the harm to your metacognitive abilities, which AI-induced changes to the digital commons are making more important than ever).

So what's my answer to this question?

If I'm being incredibly optimistic, senior year. Slightly less optimistic, second year of a master's program. Realistic? Maybe never.

The interesting bit for you-the-reader is: why is this my answer? (Especially given that students would probably self-report significant gains at lower levels.) To start with, [this paper where experienced developers thought that AI assistance sped up their work on real tasks when in fact it slowed it down](https://arxiv.org/abs/2507.09089) is informative. There are a lot of differences in task between experienced devs solving real bugs and students working on a class project, but it's important to understand that we shouldn't have a baseline expectation that AI coding "assistants" will speed things up in the best of circumstances, and we shouldn't trust self-reports of productivity (or the AI hype machine in general).

Now we might imagine that coding assistants will be better at helping with a student project than at helping with fixing bugs in open-source software, since it's a much easier task. For many programming assignments that have a fixed answer, we know that many AI assistants can just spit out a solution based on prompting them with the problem description (there's another elephant in the room here to do with learning outcomes regardless of project success, but we'll ignore this one too; my focus here is on project complexity reach, not learning outcomes). My question is about more open-ended projects, not assignments with an expected answer. Here's a second study (by one of my colleagues) about novices using AI assistance for programming tasks. It showcases how difficult it is to use AI tools well, and some of the stumbling blocks that novices in particular face.

But what about intermediate students? Might there be some level where the AI is helpful because the task is still relatively simple and the students are good enough to handle it? The problem with this is that as task complexity increases, so does the likelihood of the AI generating (or copying) code that uses more complex constructs which a student doesn't understand. Let's say I have second year students writing interactive websites with JavaScript. Without a lot of care that those students don't know how to deploy, the AI is likely to suggest code that depends on several different frameworks, from React to jQuery, without actually setting up or including those frameworks, and of course these students would be way out of their depth trying to do that. This is a general problem: each programming class carefully limits the specific code frameworks and constructs it expects students to know based on the material it covers. There is no feasible way to limit an AI assistant to a fixed set of constructs or frameworks, using current designs. There are alternate designs where this would be possible (like AI search through adaptation from a controlled library of snippets) but those would be entirely different tools.

So what happens on a sizeable class project where the AI has dropped in buggy code, especially if it uses code constructs the students don't understand? Best case, they understand that they don't understand and re-prompt, or quickly ask for help from an instructor or TA, who helps them get rid of the stuff they don't understand and re-prompt or manually add stuff they do. Average case: they waste several hours and/or sweep the bugs partly under the rug, resulting in a project with significant defects. Students in their second and even third years of a CS major still have a lot to learn about debugging, and usually have significant gaps in their knowledge of even their most comfortable programming language. I do think regardless of AI we as teachers need to get better at teaching debugging skills, but the knowledge gaps are inevitable because there's just too much to know. In Python, for example, the LLM is going to spit out yields, async functions, try/finally, maybe even something like a while/else, or with recent training data, the walrus operator. I can't expect even a fraction of 3rd year students who have worked with Python since their first year to know about all these things, and based on how students approach projects where they have studied all the relevant constructs but have forgotten some, I'm not optimistic that seeing these things will magically become learning opportunities. Student projects are better off working with a limited subset of full programming languages that the students have actually learned, and using AI coding assistants as currently designed makes this impossible. Beyond that, even when the "assistant" just introduces bugs using syntax the students understand, even through their 4th year many students struggle to understand the operation of moderately complex code they've written themselves, let alone code written by someone else. Having access to an AI that will confidently offer incorrect explanations for bugs will make this worse.
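To make that Python list concrete, here's a minimal sketch of what those constructs look like side by side. This is purely illustrative: the function names (`read_chunks`, `first_negative`, `double_later`) and the `cleanup_log` list are hypothetical, made up for this example, and not taken from any student project or actual LLM output.

```python
import asyncio

# Hypothetical log used only to make the try/finally behaviour visible.
cleanup_log = []


def read_chunks(values, size):
    """Generator: `yield` hands back one slice at a time and pauses in between."""
    try:
        for i in range(0, len(values), size):
            yield values[i:i + size]
    finally:
        # try/finally: runs when the generator is exhausted or closed early.
        cleanup_log.append("done")


def first_negative(numbers):
    """Return the first negative number, or None if there is none."""
    it = iter(numbers)
    # Walrus operator: assign to n and test it in the same expression.
    while (n := next(it, None)) is not None:
        if n < 0:
            break
    else:
        # while/else: this branch runs only if the loop ended WITHOUT break.
        return None
    return n


async def double_later(x):
    """Async function: calling it returns a coroutine, not a result."""
    await asyncio.sleep(0)  # yield control to the event loop once
    return 2 * x
```

Each piece is only a few lines, but a student who hasn't met `yield`, the `else` branch on a loop, or `:=` has very little to go on when something like this shows up in the middle of their project and misbehaves. Note too that `double_later(21)` on its own computes nothing; it has to be driven by something like `asyncio.run(double_later(21))`.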

To be sure, a small minority of students will be able to overcome these problems, but that minority is the group that has a good grasp of the fundamentals and has broadened their knowledge through self-study, which earlier AI-reliant classes would make less likely to happen. In any case, I care about the average student, since we already have plenty of stuff about our institutions that makes life easier for a favored few while being worse for the average student (note that our construction of that favored few as the "good" students is a large part of this problem).

To summarize: because AI assistants introduce excess code complexity and difficult-to-debug bugs, they'll slow down rather than speed up project progress for the average student on moderately complex projects. On a fixed deadline, they'll result in worse projects, or necessitate less ambitious project scoping to ensure adequate completion, and I expect this remains broadly true through 4-6 years of study in most programs (don't take this as an endorsement of AI "assistants" for masters students; we've ignored a lot of other problems along the way).

There's a related problem: solving open-ended project assignments well ultimately depends on deeply understanding the problem, and AI "assistants" allow students to put a lot of code in their file without spending much time thinking about the problem or building an understanding of it. This is awful for learning outcomes, but also bad for project success. Getting students to see the value of thinking deeply about a problem is a thorny pedagogical puzzle at the best of times, and allowing the use of AI "assistants" makes the problem much much worse. This is another area I hope to see (or even drive) pedagogical improvement in, for what it's worth.

1/2

2025-09-03 09:04:11

How priorities change:

My #framework12 arrived on Monday. I have hardly opened the package, not even properly inspected the device. Why? Because the children want to help me build a laptop, and we did not find the time yet to do it together. 😅

A few years ago, the package would have been open before the door from the delivery was closed. 🤷‍♂️

2025-07-06 03:29:15

Joanna Macy, one of the visionaries, has entered hospice this week. https://open.substack.com/pub/therapysocialchange/p/joanna-macy-prophet-of-the-great?r=1o5n19&utm_campaign=pos…

2025-06-18 17:42:44

Is this a parody?

I don’t do #InfoSec or other cons so I don’t have a strong sense of whether the “Open Space” concept is brilliant or uproariously absurd. I lean towards the latter because it just seems to me like a recipe for people standing around.

2025-07-30 17:56:35

Just read this post by @… on an optimistic AGI future, and while it had some interesting and worthwhile ideas, it's also in my opinion dangerously misguided, and plays into the current AGI hype in a harmful way.

https://social.coop/@eloquence/114940607434005478

My criticisms include:

- Current LLM technology has many layers, but the biggest, most capable models are all tied to corporate datacenters and require inordinate amounts of energy and water to run. Trying to use these tools to bring about a post-scarcity economy will burn up the planet. We urgently need more-capable but also vastly more efficient AI technologies if we want to use AI for a post-scarcity economy, and we are *not* nearly on the verge of this despite what the big companies pushing LLMs want us to think.

- I can see that permacommons.org claims a small level of expenses on AI equates to low climate impact. However, given the deep subsidies currently put in place by the big companies to attract users, that isn't a great assumption. The fact that their FAQ dodges the question about which AI systems they use isn't a great look.

- These systems are not free in the same way that Wikipedia or open-source software is. To run your own model you need a data harvesting & cleaning operation that costs millions of dollars minimum, and then you need millions of dollars worth of storage & compute to train & host the models. Right now, big corporations are trying to compete for market share by heavily subsidizing these things, but if you go along with that, you become dependent on them, and you'll be screwed when they jack up the price to a profitable level later. I'd love to see open dataset initiatives and the like, and there are some of these things, but not enough yet, and many of the initiatives focus on one problem while ignoring others (fine for research but not the basis for a society yet).

- Between the environmental impacts, the horrible labor conditions and undercompensation of data workers who filter the big datasets, and the impacts of both AI scrapers and AI commons pollution, the developers of the most popular & effective LLMs have a lot to answer for. This project only really mentions environmental impacts, which makes me think that they're not serious about ethics, which in turn makes me distrustful of the whole enterprise.

- Their language also ends up encouraging AI use broadly while totally ignoring several entire classes of harm, so they're effectively contributing to AI hype, especially with such casual talk of AGI and robotics as if embodied AGI were just around the corner. To be clear about this point: we are several breakthroughs away from AGI under the most optimistic assumptions, and giving the impression that those will happen soon plays directly into the hands of the Sam Altmans of the world who are trying to make money off the impression of impending huge advances in AI capabilities. Adding to the AI hype is irresponsible.

- I've got a more philosophical criticism that I'll post about separately.

I do think that the idea of using AI & other software tools, possibly along with robotics and funded by many local cooperatives, in order to make businesses obsolete before they can do the same to all workers, is a good one. Get your local library to buy a knitting machine alongside their 3D printer.

Lately I've felt too busy criticizing AI to really sit down and think about what I do want the future to look like, even though I'm a big proponent of positive visions for the future as a force multiplier for criticism, and this article is inspiring to me in that regard, even if the specific project doesn't seem like a good one.