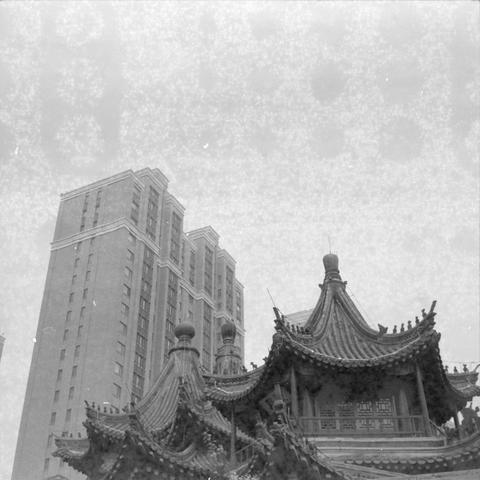

2026-02-26 00:30:04

Urban Demons VII 👻

城市鬼魂 VII 👻

📷 Zeiss IKON Super Ikonta 533/16

🎞️ Ilford HP5 400 Plus, expired 1993

If you like my work, buy me a coffee from PayPal https://www.paypal.com/paypalme/ydcdingsite

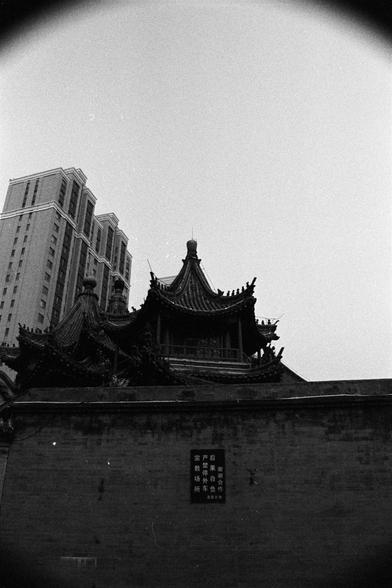

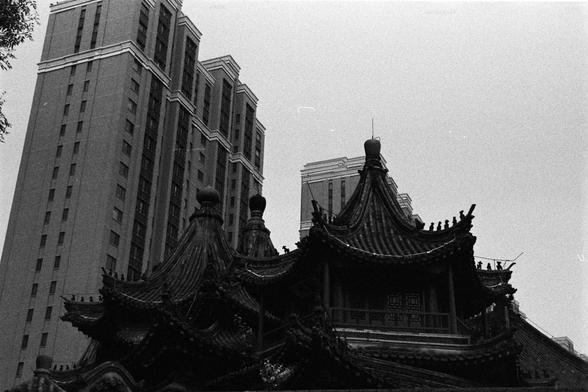

2026-02-23 00:30:06

Urban Demons IV 👻

城市鬼魂 IV 👻

📷 Zeiss IKON Super Ikonta 533/16

🎞️ Ilford HP5 400 Plus, expired 1993

If you like my work, buy me a coffee from PayPal https://www.paypal.com/paypalme/ydcdingsite

2026-04-25 20:42:02

from my link log —

Google Chrome reduces its root DNS traffic.

https://blog.verisign.com/domain-names/chromiums-reduction-of-root-dns-traffic/

saved 2021-01-07

2026-03-25 04:52:43

Ronnie Taylor is a Karen that forgot that "you do not mess with Reacher & the Special Investigators".

Here's Alan's helmetcam video of the whole encounter.

▶️ Police Conclude Alan Ritchson Acted in Self Defense in Neighbor Fight

https://www.

2026-02-25 13:08:21

Oh sweet memory. This is where I spend 3 years during my apprenticeship at SIEMENS-Nixdorf. Sad to see this place being abandoned

https://www.radiohochstift.de/aktionen/lokale-aktionen-und…

2026-02-25 10:37:41

Transcoder Adapters for Reasoning-Model Diffing

Nathan Hu, Jake Ward, Thomas Icard, Christopher Potts

https://arxiv.org/abs/2602.20904 https://arxiv.org/pdf/2602.20904 https://arxiv.org/html/2602.20904

arXiv:2602.20904v1 Announce Type: new

Abstract: While reasoning models are increasingly ubiquitous, the effects of reasoning training on a model's internal mechanisms remain poorly understood. In this work, we introduce transcoder adapters, a technique for learning an interpretable approximation of the difference in MLP computation before and after fine-tuning. We apply transcoder adapters to characterize the differences between Qwen2.5-Math-7B and its reasoning-distilled variant, DeepSeek-R1-Distill-Qwen-7B. Learned adapters are faithful to the target model's internal computation and next-token predictions. When evaluated on reasoning benchmarks, adapters match the reasoning model's response lengths and typically recover 50-90% of the accuracy gains from reasoning fine-tuning. Adapter features are sparsely activating and interpretable. When examining adapter features, we find that only ~8% have activating examples directly related to reasoning behaviors. We deeply study one such behavior -- the production of hesitation tokens (e.g., "wait"). Using attribution graphs, we trace hesitation to only ~2.4% of adapter features (5.6k total) performing one of two functions. These features are necessary and sufficient for producing hesitation tokens; removing them reduces response length, often without affecting accuracy. Overall, our results provide insight into reasoning training and suggest transcoder adapters may be useful for studying fine-tuning more broadly.

toXiv_bot_toot

2026-03-24 03:06:19

RE: https://detmi.social/@coreyjrowe/116184654882228125

I made the jump and I’ve been readjusting over the past week. It’s definitely nice having iMessage again, a more stable video recording experience, etc. but… this keyboard man. I want Gboard back. Wha…

2026-02-25 10:45:11

Untied Ulysses: Memory-Efficient Context Parallelism via Headwise Chunking

Ravi Ghadia, Maksim Abraham, Sergei Vorobyov, Max Ryabinin

https://arxiv.org/abs/2602.21196 https://arxiv.org/pdf/2602.21196 https://arxiv.org/html/2602.21196

arXiv:2602.21196v1 Announce Type: new

Abstract: Efficiently processing long sequences with Transformer models usually requires splitting the computations across accelerators via context parallelism. The dominant approaches in this family of methods, such as Ring Attention or DeepSpeed Ulysses, enable scaling over the context dimension but do not focus on memory efficiency, which limits the sequence lengths they can support. More advanced techniques, such as Fully Pipelined Distributed Transformer or activation offloading, can further extend the possible context length at the cost of training throughput. In this paper, we present UPipe, a simple yet effective context parallelism technique that performs fine-grained chunking at the attention head level. This technique significantly reduces the activation memory usage of self-attention, breaking the activation memory barrier and unlocking much longer context lengths. Our approach reduces intermediate tensor memory usage in the attention layer by as much as 87.5$\%$ for 32B Transformers, while matching previous context parallelism techniques in terms of training speed. UPipe can support the context length of 5M tokens when training Llama3-8B on a single 8$\times$H100 node, improving upon prior methods by over 25$\%$.

toXiv_bot_toot

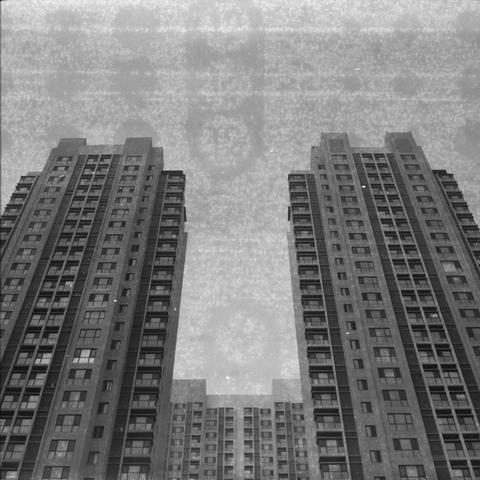

2026-02-24 00:30:01

Urban Demons V 👻

城市鬼魂 V 👻

📷 Nikon F4E

🎞️ Rollei RPX 400

If you like my work, buy me a coffee from PayPal #filmphotography

2026-03-18 00:30:00

Earthy Retouches 💄

地标的装潢 💄

📷 Nikon FE

🎞️ Ilford FP4 Plus 125, expired 1993

If you like my work, buy me a coffee from PayPal #filmphotography