2026-02-25 10:37:11

Exploring the Impact of Parameter Update Magnitude on Forgetting and Generalization of Continual Learning

JinLi He, Liang Bai, Xian Yang

https://arxiv.org/abs/2602.20796 https://arxiv.org/pdf/2602.20796 https://arxiv.org/html/2602.20796

arXiv:2602.20796v1 Announce Type: new

Abstract: The magnitude of parameter updates is considered a key factor in continual learning. However, most existing studies focus on designing diverse update strategies, while a theoretical understanding of the underlying mechanisms remains limited. Therefore, we characterize a model's forgetting from the perspective of parameter update magnitude and formalize it as knowledge degradation induced by task-specific drift in the parameter space, which has not been fully captured in previous studies due to their assumption of a unified parameter space. By deriving the optimal parameter update magnitude that minimizes forgetting, we unify two representative update paradigms, frozen training and initialized training, within an optimization framework for constrained parameter updates. Our theoretical results further reveal that sequential tasks with small parameter distances exhibit better generalization and less forgetting under frozen training than under initialized training. These theoretical insights inspire a novel hybrid parameter update strategy that adaptively adjusts update magnitude based on gradient directions. Experiments on deep neural networks demonstrate that this hybrid approach outperforms standard training strategies, providing new theoretical perspectives and practical inspiration for designing efficient and scalable continual learning algorithms.

2026-01-19 14:15:51

Sources: internal Google data shows Gemini API calls surged from 35B in March 2025 to 85B in August 2025; Google says Gemini Enterprise hit 8M subscribers (Erin Woo/The Information)

https://www.theinformation.com/articles/googles-gemini-sees-skyrocketi…

2026-03-13 17:25:51

And "free" also implies that the worker is supposedly completely free. He is free in the sense that he can sell for as high a price as he can manage.

Himself?

Yes, for as much money as possible. - Now, in this market, the computer replaces the best thing the worker has to offer: knowledge and skill. The machine takes over both, and he himself is degraded to a mere machine operator. His actual abilities have been coaxed out of him and incorporated into the device. Consequently, he has less to sell than before, and what he can still sell is worth less.

He himself loses value?

Yes, because he is not only robbed of the ability to provide bread for his family, but also of one of the signs that prove to him that he is human. Now he is simplified and transformed into an operator. (Almost like in Kafka, where a man is transformed into a beetle; Kafka is a prophet in that regard.)

Joseph Weizenbaum, Kurs auf den Eisberg, Serie Piper, Munich, 1987

2026-01-29 15:00:34

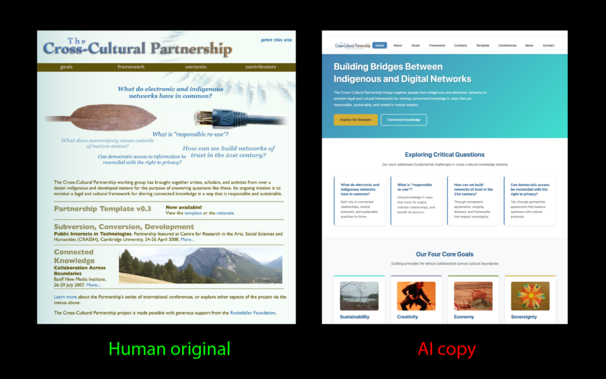

I've been worried AI will crowd out human voices, and now an AI copy has replaced my 2006 Connected Knowledge site, warping context and displacing the original work online. Can we stop this creeping ensloppification?

https://www.linkedin.…

2026-03-12 20:02:10

xAI hires senior Cursor leaders Andrew Milich and Jason Ginsberg; Elon Musk said he expects xAI to catch up with rivals in coding by "the middle of this year" (The Information)

https://www.theinformation.com/articles/xai-hires-two-senior-lea…

2026-03-06 18:00:32

"Microplastics and nanoplastics in urban air originate mainly from tire abrasion, research reveals"

#Plastic #Plastics #MicroPlastics

2026-03-10 21:54:19

Lions add running back Isiah Pacheco as the new backup to Jahmyr Gibbs, AP source says

https://www.foxsports.com/articles/nfl/lions-add-running-back-isiah-pacheco-as-the-new-backup-to-jahmyr-gibbs-ap-source-says

2026-03-02 04:40:41

Early data show wages are rising for AI-exposed jobs that place a high value on a "worker's tacit knowledge and experience", as textbook knowledge loses value (J. Scott Davis/Federal Reserve Bank of Dallas)

https://www.dallasfed.org/research/economics/2026/0224