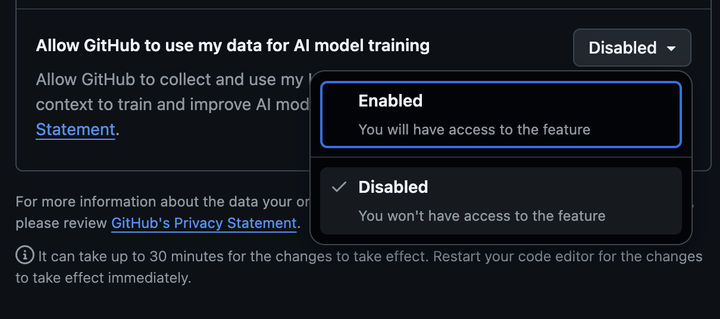

Quick reminder, especially if you’re a freelancer or developer using Free/Pro/Pro+ plans for client work: opt out of GitHub using your data for AI model training before April 24 (seriously, wtf that this isn’t opt-in!).

https://github.com/settings/copilot/features#copilo…

FBI director Kash Patel and UFC CEO Dana White announced:

Mixed martial arts fighters are set to host a two-day "training program" for FBI agents.

Current and former UFC fighters will host an “exclusive training seminar” at the FBI’s Special Agent Academy in Quantico, Virginia, this weekend, according to a statement released Wednesday.

Academy students and senior FBI staff are expected to attend 🍿

GraphWalker: Agentic Knowledge Graph Question Answering via Synthetic Trajectory Curriculum

Shuwen Xu, Yao Xu, Jiaxiang Liu, Chenhao Yuan, Wenshuo Peng, Jun Zhao, Kang Liu

https://arxiv.org/abs/2603.28533 https://arxiv.org/pdf/2603.28533 https://arxiv.org/html/2603.28533

arXiv:2603.28533v1 Announce Type: new

Abstract: Agentic knowledge graph question answering (KGQA) requires an agent to iteratively interact with knowledge graphs (KGs), posing challenges in both training data scarcity and reasoning generalization. Specifically, existing approaches often restrict agent exploration: prompting-based methods lack autonomous navigation training, while current training pipelines usually confine reasoning to predefined trajectories. To this end, this paper proposes GraphWalker, a novel agentic KGQA framework that addresses these challenges through Automated Trajectory Synthesis and Stage-wise Fine-tuning. GraphWalker adopts a two-stage SFT training paradigm: first, the agent is trained on structurally diverse trajectories synthesized from constrained random-walk paths, establishing a broad exploration prior over the KG; second, the agent is further fine-tuned on a small set of expert trajectories to develop reflection and error recovery capabilities. Extensive experiments demonstrate that our stage-wise SFT paradigm unlocks a higher performance ceiling for a lightweight reinforcement learning (RL) stage, enabling GraphWalker to achieve state-of-the-art performance on CWQ and WebQSP. Additional results on GrailQA and our constructed GraphWalkerBench confirm that GraphWalker enhances generalization to out-of-distribution reasoning paths. The code is publicly available at https://github.com/XuShuwenn/GraphWalker
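
For readers wondering what "trajectories synthesized from constrained random-walk paths" could look like in practice, here is a minimal sketch. It is not the authors' pipeline: the toy graph `TOY_KG`, the function name `constrained_random_walk`, and the two constraints (a hop limit and no revisited entities) are all assumptions made for illustration.

```python
import random

# Hypothetical toy knowledge graph: head entity -> list of (relation, tail) edges.
TOY_KG = {
    "Berlin": [("capital_of", "Germany"), ("located_in", "Europe")],
    "Germany": [("member_of", "EU"), ("located_in", "Europe")],
    "EU": [("headquartered_in", "Brussels")],
    "Europe": [],
    "Brussels": [("capital_of", "Belgium")],
    "Belgium": [("member_of", "EU")],
}


def constrained_random_walk(kg, start, max_hops, rng):
    """Sample one path of (head, relation, tail) triples from `start`.

    Assumed constraints: at most `max_hops` steps, and no entity is
    visited twice. The walk stops early at a dead end.
    """
    path = []
    visited = {start}
    node = start
    for _ in range(max_hops):
        # Only edges leading to entities not yet on the path are allowed.
        candidates = [(rel, tail) for rel, tail in kg.get(node, []) if tail not in visited]
        if not candidates:
            break
        rel, tail = rng.choice(candidates)
        path.append((node, rel, tail))
        visited.add(tail)
        node = tail
    return path


rng = random.Random(0)  # fixed seed so the sampled paths are reproducible
paths = [constrained_random_walk(TOY_KG, "Berlin", 3, rng) for _ in range(5)]
for p in paths:
    print(" -> ".join([p[0][0]] + [f"[{rel}] {tail}" for _, rel, tail in p]))
```

Each sampled path could then be turned into a question plus a step-by-step exploration trace for supervised fine-tuning; how GraphWalker actually does that conversion is described in the paper, not here.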

toXiv_bot_toot

Radu Gheorghe and Rafał Kuć are joining #bbuzz26 to talk about ways to untangle it: lexical search, significant terms, training an embedder from scratch, etc.

Learn more about their amazing session: https://2026.berlinbuzzwords.de/session/circular-dependency-fixes-when-bootstrapping-a-golden-set/

Join us for Berlin Buzzwords on June 7-9 at Kulturbrauerei or online

Can't help but wonder how this strategy introduces bias into the training set, and misses the distribution tails. Actual Incompetence?

Thousands of people are selling their identities to train AI – but at what cost?

https://www.theguardian.com/technology/202…

Training data generation for context-dependent rubric-based short answer grading

Pavel Šindelář, Dávid Slivka, Christopher Bouma, Filip Prášil, Ondřej Bojar

https://arxiv.org/abs/2603.28537 https://arxiv.org/pdf/2603.28537 https://arxiv.org/html/2603.28537

arXiv:2603.28537v1 Announce Type: new

Abstract: Every 4 years, the PISA test is administered by the OECD to test the knowledge of teenage students worldwide and allow for comparisons of educational systems. However, having to avoid language differences and annotator bias makes the grading of student answers challenging. For these reasons, it would be interesting to compare methods of automatic student answer grading. To train some of these methods, which require machine learning, or to compute parameters or select hyperparameters for those that do not, a large amount of domain-specific data is needed. In this work, we explore a small number of methods for creating a large-scale training dataset using only a relatively small confidential dataset as a reference, leveraging a set of very simple derived text formats to preserve confidentiality. Using these methods, we successfully created three surrogate datasets that are, at the very least, superficially more similar to the reference dataset than purely the result of prompt-based generation. Early experiments suggest one of these approaches might also lead to improved model training.

Autotuning T-PaiNN: Enabling Data-Efficient GNN Interatomic Potential Development via Classical-to-Quantum Transfer Learning

Vivienne Pelletier, Vedant Bhat, Daniel J. Rivera, Steven A. Wilson, Christopher L. Muhich

https://arxiv.org/abs/2603.24752 https://arxiv.org/pdf/2603.24752 https://arxiv.org/html/2603.24752

arXiv:2603.24752v1 Announce Type: new

Abstract: Machine-learned interatomic potentials (MLIPs), particularly graph neural network (GNN)-based models, offer a promising route to achieving near-density functional theory (DFT) accuracy at significantly reduced computational cost. However, their practical deployment is often limited by the large volumes of expensive quantum mechanical training data required. In this work, we introduce a transfer learning framework, Transfer-PaiNN (T-PaiNN), that substantially improves the data efficiency of GNN-MLIPs by leveraging inexpensive classical force field data. The approach consists of pretraining a PaiNN MLIP architecture on large-scale datasets generated from classical molecular simulations, followed by fine-tuning (dubbed autotuning) using a comparatively small DFT dataset. We demonstrate the effectiveness of autotuning T-PaiNN on both gas-phase molecular systems (QM9 dataset) and condensed-phase liquid water. Across all cases, T-PaiNN significantly outperforms models trained solely on DFT data, achieving order-of-magnitude reductions in mean absolute error while accelerating training convergence. For example, using the QM9 data set, error reductions of up to 25 times are observed in low-data regimes, while liquid water simulations show improved predictions of energies, forces, and experimentally relevant properties such as density and diffusion. These gains arise from the model's ability to learn general features of the potential energy surface from extensive classical sampling, which are subsequently refined to quantum accuracy. Overall, this work establishes transfer learning from classical force fields as a practical and computationally efficient strategy for developing high-accuracy, data-efficient GNN interatomic potentials, enabling broader application of MLIPs to complex chemical systems.

Visibility nowcasting in South Korea: a machine learning approach to class imbalance and distribution shift

Bong Gyun Shin, Chan Sik Lee, Hyesun Suh

https://arxiv.org/abs/2605.21507 https://arxiv.org/pdf/2605.21507 https://arxiv.org/html/2605.21507

arXiv:2605.21507v1 Announce Type: new

Abstract: Atmospheric visibility is a critical variable for transportation safety and air quality management; however, accurate prediction remains challenging due to the complex interactions between meteorological conditions and air pollutants, as well as the rarity of low-visibility events. This study introduces a machine learning framework to nowcast visibility in six major South Korean cities. To handle the imbalance in the 2018-2020 training data, we applied the Synthetic Minority Over-sampling Technique with Nominal and Continuous (SMOTENC) and Conditional Tabular Generative Adversarial Network (CTGAN). An ensemble approach combining machine learning and deep learning models was then used and evaluated on a 2021 test dataset. The results revealed a marked decline in predictive performance in the test set compared to the cross-validation phase. This degradation was attributed to a distributional shift between training and testing periods, which was quantitatively confirmed by measuring the Wasserstein distance of the most influential feature identified by SHAP analysis. In general, this study presents a methodology that aims to simultaneously address the dual challenges of data imbalance and temporal distributional shifts, and emphasizes the necessity of accounting for evolving external environmental factors when implementing nowcasting models on time-series data.
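
The shift check mentioned in the abstract, a Wasserstein distance between the training-period and test-period values of one feature, can be sketched in a few lines. This is an illustration only, not the paper's code: the function name `wasserstein_1d` and the humidity-like numbers are made up, and the paper's actual feature and data are not reproduced here.

```python
from bisect import bisect_right


def wasserstein_1d(a, b):
    """First-order Wasserstein distance between two 1D empirical samples.

    Computed as the area between the two empirical CDFs, which works
    for samples of different sizes.
    """
    a_sorted, b_sorted = sorted(a), sorted(b)
    grid = sorted(a_sorted + b_sorted)
    total = 0.0
    for left, right in zip(grid, grid[1:]):
        # Both empirical CDFs are constant on [left, right).
        cdf_a = bisect_right(a_sorted, left) / len(a_sorted)
        cdf_b = bisect_right(b_sorted, left) / len(b_sorted)
        total += abs(cdf_a - cdf_b) * (right - left)
    return total


# Made-up values of one feature in a "training" and a "test" period.
train_feature = [55.0, 60.0, 62.0, 70.0, 71.0, 80.0]
test_feature = [65.0, 72.0, 75.0, 83.0, 85.0, 90.0]

print("train vs train:", wasserstein_1d(train_feature, train_feature))
print("train vs test: ", wasserstein_1d(train_feature, test_feature))
```

A distance near zero means the two periods look alike for that feature; a large value relative to the feature's spread hints at the kind of temporal shift the authors report.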

SF-Flow: Sound field magnitude estimation via flow matching guided by sparse measurements

Ege Erdem, Shoichi Koyama, Tomohiko Nakamura, Orchisama Das, Zoran Cvetković

https://arxiv.org/abs/2605.10398 https://arxiv.org/pdf/2605.10398 https://arxiv.org/html/2605.10398

arXiv:2605.10398v1 Announce Type: new

Abstract: Reconstructing a 3D sound field from sparse microphone measurements is a fundamental yet ill-posed problem, which we address through Acoustic Transfer Function (ATF) magnitude estimation. ATF magnitude encapsulates key perceptual and acoustic properties of a physical space with applications in room characterization and correction. Although recent generative paradigms such as Flow Matching (FM) have achieved state-of-the-art performance in speech and music generation, their potential in spatial audio remains underexplored. We propose a novel framework for 3D ATF magnitude reconstruction as a guided generation task, with a 3D U-Net conditioned by a permutation-invariant set encoder. This architecture enables reconstruction from an arbitrary number of sparse inputs while leveraging the stable and efficient training properties of FM. Experimental results demonstrate that SF-Flow achieves accurate reconstruction up to 1 kHz, trains substantially faster than the autoencoder baseline, and improves significantly with dataset size.
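
Why would a permutation-invariant set encoder allow "an arbitrary number of sparse inputs"? A rough sketch of the general idea, using mean pooling over per-measurement features, is below. It is not the SF-Flow encoder: `embed`, `encode_set`, the feature map, and the microphone values are all hypothetical, and the real model presumably uses learned layers instead of a hand-written function.

```python
import math


def embed(measurement):
    """Hypothetical per-measurement feature map: (x, y, z, magnitude) -> features."""
    x, y, z, mag = measurement
    return [mag, mag * x, mag * y, mag * z, math.sin(x + y + z)]


def encode_set(measurements):
    """Mean-pool the per-measurement features.

    Averaging ignores input order and yields a fixed-size vector
    regardless of how many measurements are given.
    """
    feats = [embed(m) for m in measurements]
    n = len(feats)
    return [sum(column) / n for column in zip(*feats)]


# Made-up microphone readings: (x, y, z, magnitude).
mics = [(0.0, 0.0, 1.0, 0.8), (1.0, 0.5, 1.2, 0.3), (2.0, 1.5, 0.9, 0.6)]

code_a = encode_set(mics)
code_b = encode_set(list(reversed(mics)))  # same microphones, different order
code_c = encode_set(mics[:2])              # fewer microphones, same output size

print(code_a)
print(code_b)
print(len(code_c))
```

The two orderings give the same vector up to floating-point rounding, and dropping a microphone changes the values but not the vector length, which is what lets a fixed-size conditioning input feed a downstream network.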