2026-03-17 08:10:35

RE: https://todon.eu/@CrimethInc/116240955986507756

We have a revolutionary moment in our hands. I cannot express strongly enough how important it is to seize this moment.

We must *build* an alternative society, starting by drawing people in and giving them opportunities to create a better future. We cannot win with logical arguments, because people are not logical. We must demonstrate, through experience, what we mean when we talk about liberation, autonomy, and mutual aid. We must create the new world in the shell of the old, here and now, so people can understand what it is we are fighting for. This is our best opportunity, right now, to reach out while the window is wide open.

If we miss this opportunity, we may not get another.

2026-02-12 14:49:34

Following federal cuts to history-focused organizations, the president of the Canadian Historical Association, Colin Coates, sent this letter to Marc Miller, the Minister of Canadian Identity and Culture.

One thing might not be obvious: Coates's reference to Carney's recent Quebec City speech is there to show why Canadians need historical context right now. Coates doesn't agree with Carney's claims. In fact, most Canadian historians would dispute them.

2026-03-15 14:01:51

I used to live in Waukesha County and, like others, I never imagined it could turn blue, but… there’s a chance.

https://www.therecombobulationarea.news/p/a-certain-shade-of-purple-can-these

2026-03-09 14:38:16

The US succeeds at regime change in Iran as Khamenei replaces Khamenei.

165 dead schoolgirls is sometimes the price you have to pay for such things.

https://www.aljazeera.com/features/2026/3/8/who-is-mojtaba-khamenei-a-conte…

2026-03-04 23:25:41

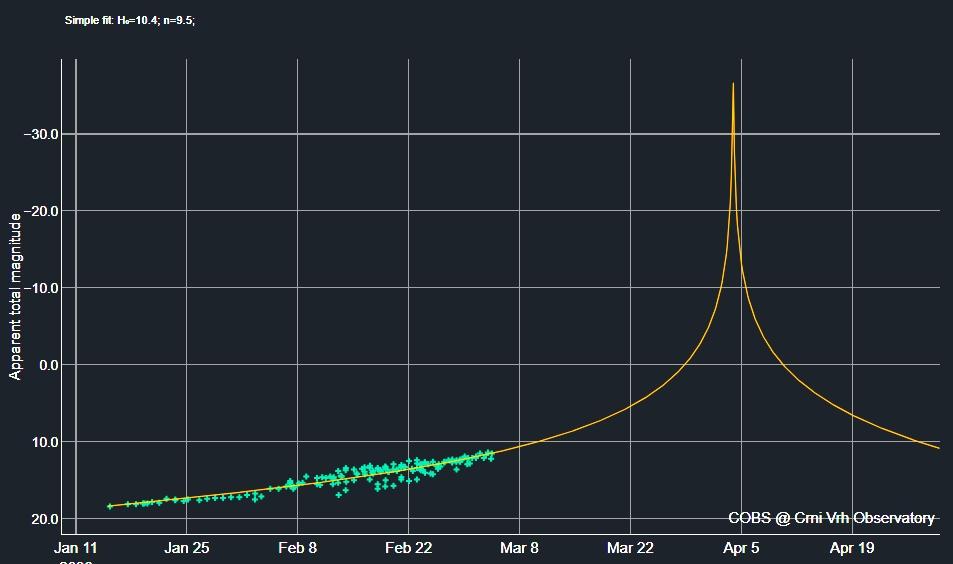

So the new #Kreutz #comet #MAPS is *still* following the constant rapid rise in brightness it has shown since discovery: a dumb extrapolation - https://cobs.si/analysis/?comet=2688&from_date=2026-01-15 00:00&to_date=2026-04-30 00:00&observation_type=V&observation_type=C&plot_x_value=1&plot_y_value=1&fit_option=1&exclude_faint=on&exclude_issue=on&observer=&association=&country=&compare_values=compare - has it getting 10,000 times brighter than the Sun at its extremely close perihelion, which of course makes no physical sense at all.

"It must therefore be assumed that this increase in activity will level off significantly in the near future," writes https://fg-kometen.vdsastro.de/koj_2026/c2026a1/26a1eaus.htm: "More likely are parameters m m0=12.0 mag / n=4 (or even lower), which would still result in a (very short-term) maximum brightness of about –9 mag (but this would probably still be significantly too bright) – always assuming that the comet survives its perihelion passage unscathed."

For other views see http://www.cbat.eps.harvard.edu/iau/cbet/005600/CBET005663.txt and https://arxiv.org/abs/2602.17626 and https://www.facebook.com/photo?fbid=10236580364221799 and https://cometografia.es/cometa-kreutz-2026-a1-maps-analisis/ - and the actual brightness is tracked at https://cobs.si/obs_list?id=2688 where it has reached ~11.5 mag. now.
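
For anyone who wants to play with the numbers: a minimal sketch, assuming the quoted m0 and n are the parameters of the usual comet power law m = m0 + 5 log10(Δ) + 2.5 n log10(r), with r and Δ in au. The function names and the distances plugged in at the bottom are my own placeholders, not the comet's actual orbital values.

```python
import math

def comet_magnitude(m0, n, r_au, delta_au):
    """Apparent magnitude from the standard comet power law.

    m0       -- absolute magnitude (brightness at r = delta = 1 au)
    n        -- activity index (how steeply brightness rises near the Sun)
    r_au     -- comet-Sun distance in au
    delta_au -- comet-Earth distance in au
    """
    return m0 + 5.0 * math.log10(delta_au) + 2.5 * n * math.log10(r_au)

def brightness_ratio(mag_faint, mag_bright):
    """How many times brighter the second object is than the first."""
    return 10.0 ** (0.4 * (mag_faint - mag_bright))

if __name__ == "__main__":
    # Hypothetical geometry, NOT the real perihelion distance of the comet.
    r, delta = 0.01, 1.0
    print(comet_magnitude(12.0, 4, r, delta))
    # A 10-magnitude difference corresponds to a factor of 10,000 in brightness.
    print(brightness_ratio(0.0, -10.0))
```

With a small r, the 2.5 n log10(r) term dominates, which is why the choice of n changes the predicted peak so dramatically.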

2026-02-06 23:17:41

From Open Media

Sign Canada’s Digital Sovereignty Charter

Canadian democracy is under threat. Algorithms and deceptive content are shaping what we see, think, and share, while foreign tech giants collect our data without limits.

Our current laws and digital systems aren’t strong enough to protect us. This leaves Canadian voices, our choices, and our digital identities at risk. Parliament must step up and champion Canada’s Digital Sovereignty Charter now.

2026-02-26 06:38:49

“Big Tech’s AI hype is distracting users from the rapid and dangerous expansion of giant, energy and water-intensive data centres […].

There is simply no evidence that AI will help the climate more than it will harm it.

Rather than relying on credible and substantiated data, Big Tech companies are writing themselves a blank cheque to pollute on the empty promise of future salvation. We cannot bet the climate on these baseless claims.”

2026-02-05 10:24:10

2026-02-04 12:55:40

Every year at TNC, ideas big and small take the stage, sparking collaboration and creativity.

#TNC26 Programme Committee is now looking for contributions that showcase bold ideas & lessons learned.

Here are 3 ways you could make it on the programme:

🔹 Present a Lightning Talk

🔹 Lead a Bird of a Feather (BoF) session

🔹 Host a Community Hub session

🎤 Submit …

2026-02-25 20:48:57

"En el caso de las izquierdas, hay algunas izquierdas que cuando se trata de Cuba miran para otro lado sin entender que Cuba tiene muchas funciones en la batalla cultural de las izquierdas del hemisferio occidental."

"In the case of the left, there are certain left sections that, when Cuba comes up, look the other way, without understanding that Cuba has multiple functions in the cultural battle of the Western hemisphere left."

Iramis R. Cárdenas: