2025-07-28 13:04:34

How popular media gets love wrong

Okay, so what exactly are the details of the "engineered" model of love from my previous post? I'll try to summarize my thoughts and the experiences they're built on.

1. "Love" can be thought of as a mechanism that's built by two (or more) people. No single person can build the thing alone; to work, it needs contributions from multiple people (I suppose self-love might be an exception to that). In any case, the builders can intentionally choose how they build (and maintain) the mechanism, they can build it differently to suit their particular needs/wants, and they will need to maintain and repair it over time to keep it running. It may need winding, or fuel, or charging, plus oil changes and bolt-tightening, etc.

2. Any two (or more) people can choose to start building love between them at any time. No need to "find your soulmate" or "wait for the right person." Now the caveat is that the mechanism is difficult to build and requires lots of cooperation, so there might indeed be "wrong people" to try to build love with. People in general might experience more failures than successes. The key component is slowly-escalating shared commitment to the project, which is negotiated between the partners so that neither one feels like they've been left to do all the work themselves. Since it's a big scary project though, it's very easy to decide it's too hard and give up, and so the builders need to encourage each other and pace themselves. The project can only succeed if there's mutual commitment, and that will certainly require compromise (sometimes even sacrifice, though not always). If the mechanism works well, the benefits (companionship; encouragement; praise; loving sex; hugs; etc.) will be well worth the compromises you make to build it, but this isn't always the case.

3. The mechanism is prone to falling apart if not maintained. In my view, the "fire" and "appeal" models of love don't adequately convey the need for this maintenance and lead to a lot of under-maintained relationships, many of which fall apart. You'll need to do things together that make you happy, do things that make your partner happy (in some cases even if they annoy you, but never in a transactional or box-checking way), spend time with shared attention, spend time alone and/or apart, reassure each other through words (or deeds) of mutual beliefs (especially your continued commitment to the relationship), do things that comfort and/or excite each other physically (anywhere from hugs to hand-holding to sex), and probably other things I'm not thinking of. Not *every* relationship needs *all* of these maintenance techniques, but I think most will need most. Note especially that patriarchy teaches men that they don't need to bother with any of this, which primarily harms their romantic partners but secondarily harms the men themselves, as their relationships fail due to their own (cultivated-by-patriarchy) incompetence. If a relationship evolves to a point where one person is doing all the maintenance (& improvement) work, it's been bent into a shape that no longer really qualifies as "love" in my book, and that's super unhealthy.

4. The key things to negotiate when trying to build a new love are first, how to work together in the first place, and how to be comfortable around each other's habits (or how to change those habits). Second, what level of commitment you have right now, and how/when you want to increase that commitment. Additionally, I think it's worth checking in about what you're each putting into and getting out of the relationship, to ensure that it continues to be positive for all participants. To build a successful relationship, you need to be able to incrementally increase the level of commitment to one that you're both comfortable staying at long-term, while ensuring that for both partners, the relationship is both a net benefit and has manageable costs (those two things are not the same). Obviously it's not easy to actually have conversations about these things (congratulations if you can just talk about this stuff) because there's a huge fear of hearing an answer that you don't want to hear. I think the range of discouraging answers which actually spell doom for a relationship is smaller than people think, and there's usually a reasonable "shoulder" you can fall into where things aren't on a good trajectory but could be brought back onto one, but even so these conversations are scary. Still, I think only having honest conversations about these things when you're angry at each other is not a good plan. You can also try to communicate some of these things via non-conversational means, if that feels safer, and at least being aware that these are the objectives you're pursuing is probably helpful.

I'll post two more replies here, one about my own experiences that led me to this mental model and one trying to distill this into advice, although it will take me a moment to get to those.

#relationships #love

2025-07-28 14:45:21

"The essential point is: these are coordinated attacks with the aim of destroying an entire group. There are different tactics and practices, but the goal is the same. We are seeing mass killing of a kind we all thought would not happen. Direct killing. Killing thousands or hundreds of people is not collateral damage. It happens again and again and again, over months. Starving millions of people is not a legitimate act in a war."

2025-07-29 13:00:05

inploid: Inploid: an online social Q&A platform

Inploid is a social question & answer website in Turkish. Users can follow others and see their questions and answers on the main page. Each user is associated with a reputability score, which is influenced by others' feedback on the user's questions and answers. Each user can also specify interest in topics. The data was crawled in June 2017 and consists of 39,749 nodes and 57,276 directed links between them. In addition, for …

2025-06-28 11:19:23

Merz warns of a false sense of security

Federal Chancellor Friedrich Merz has warned of a false sense of security in Germany and called for greater defense efforts. During a visit to the Bundeswehr's Operational Command (Operatives Führungskommando) in Schwielowsee, he pointed to Russia's ongoing war of aggression against Ukraine. "So we must not take our security for granted. We must do more so that …

📑

2025-05-28 07:12:29

By the way, you can cook a syrup from #Löwenzahnblüten (dandelion blossoms), also known as dandelion blossom honey.

For my recipe, see: https://www.oe…

2025-06-27 17:47:33

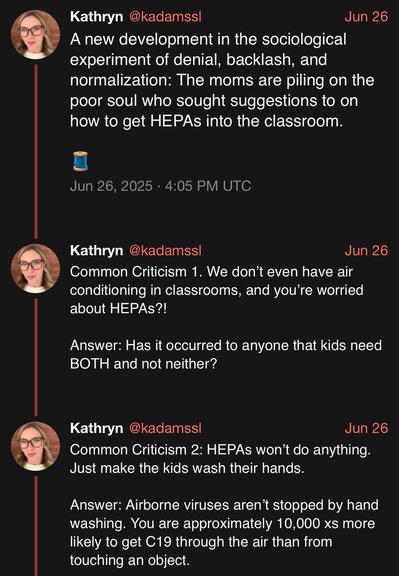

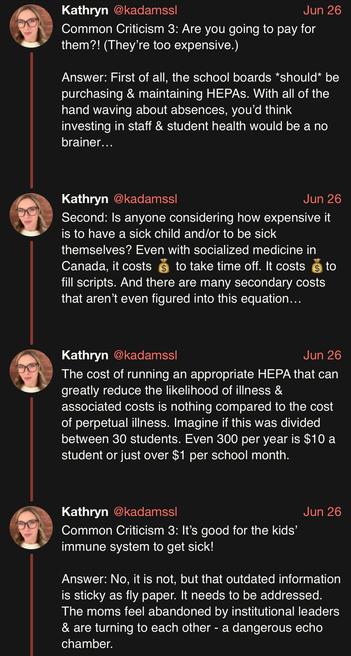

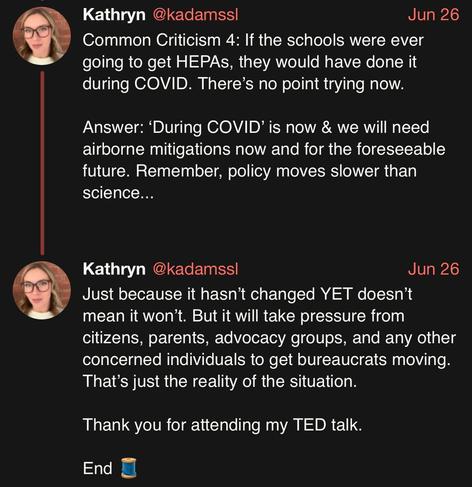

The challenge of HEPA filters in the classrooms.

h/t @…

source: https://xcancel.com/kadamssl/status/1938267359233429683#m

2025-07-29 21:18:16

(Hint: the answer to that question is almost certainly “a bunch of investors who would gladly suck the blood from your still-living body if it earned them another 0.0002% ROI.”)

2025-07-29 11:47:51

FRED: Financial Retrieval-Enhanced Detection and Editing of Hallucinations in Language Models

Likun Tan, Kuan-Wei Huang, Kevin Wu

https://arxiv.org/abs/2507.20930 https://

2025-05-29 15:17:24

MSMT: Attacks with help from North Korea

According to the findings of an international monitoring group, Russia has been able to step up its attacks on Ukraine thanks to North Korean help. More than 20,000 containers of munitions have been delivered to Russia, the Multilateral Sanctions Monitoring Team (MSMT) says. These reportedly included nine million artillery shells and ammunition for rocket launchers. In addition, North Korea is said to have …

📑

2025-05-26 12:51:54

Let's say you find a really cool forum online that has lots of good advice on it. It's even got a very active community that's happy to answer questions very quickly, and the community seems to have a wealth of knowledge about all sorts of subjects.

You end up visiting this community often, and trusting the advice you get to answer all sorts of everyday questions you might have, which before you might have found answers to using a web search (of course web search is now full of SEO spam and other crap, so it's become nearly useless).

Then one day, you ask an innocuous question about medicine, and from this community you get the full homeopathy treatment as your answer. Like, somewhat believable on the face of it, includes lots of citations to reasonable-seeming articles, except that if you know even a tiny bit about chemistry and biology (which thankfully you do), you know that the homeopathy answers are completely bogus and horribly dangerous (since they offer non-treatments for real diseases). Your opinion of this entire forum suddenly changes. "Oh my God, if they've been homeopathy believers all this time, what other myths have they fed me as facts?"

You stop using the forum for anything, and go back to slogging through SEO crap to answer your everyday questions, because once you realize that this forum is a community that's fundamentally untrustworthy, you realize that the value of getting advice from it on any subject is negative: you knew enough to spot the dangerous homeopathy answer, but you know there might be other such myths that you don't know enough to avoid, and any community willing to go all-in on one myth has shown itself to be capable of going all-in on any number of other myths.

...

This has been a parable about large language models.

#AI #LLM