2026-02-09 02:16:00

Finally an #ArtemisII update on https://www.nasa.gov/blogs/missions/2026/02/08/nasa-conducts-repairs-analysis-ahead-of-next-artemis-ii-fueling-test/ - "technicians have replaced two seals in an area where operators saw higher than allowable hydrogen gas concentrations during the test. Engineers are analyzing the removed seals and developing plans to address all issues ahead of the next rehearsal." No date for that new wet dress rehearsal has been set, and a launch date will be determined only after it is over.

2026-03-31 11:12:48

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[2/5]:

- POTSA: A Cross-Lingual Speech Alignment Framework for Speech-to-Text Translation

Li, Cui, Wang, Ge, Huang, Li, Peng, Lu, Tashi, Wang, Dang

https://arxiv.org/abs/2511.09232 https://mastoxiv.page/@arXiv_csCL_bot/115541846907664054

- Beyond Elicitation: Provision-based Prompt Optimization for Knowledge-Intensive Tasks

Yunzhe Xu, Zhuosheng Zhang, Zhe Liu

https://arxiv.org/abs/2511.10465 https://mastoxiv.page/@arXiv_csCL_bot/115547607561282911

- π-Attention: Periodic Sparse Transformers for Efficient Long-Context Modeling

Dong Liu, Yanxuan Yu

https://arxiv.org/abs/2511.10696 https://mastoxiv.page/@arXiv_csCL_bot/115564418836654965

- Based on Data Balancing and Model Improvement for Multi-Label Sentiment Classification Performanc...

Zijin Su, Huanzhu Lyu, Yuren Niu, Yiming Liu

https://arxiv.org/abs/2511.14073 https://mastoxiv.page/@arXiv_csCL_bot/115575715073023141

- HEAD-QA v2: Expanding a Healthcare Benchmark for Reasoning

Alexis Correa-Guillén, Carlos Gómez-Rodríguez, David Vilares

https://arxiv.org/abs/2511.15355 https://mastoxiv.page/@arXiv_csCL_bot/115581410328165116

- Towards Hyper-Efficient RAG Systems in VecDBs: Distributed Parallel Multi-Resolution Vector Search

Dong Liu, Yanxuan Yu

https://arxiv.org/abs/2511.16681 https://mastoxiv.page/@arXiv_csCL_bot/115603508442305146

- Estonian WinoGrande Dataset: Comparative Analysis of LLM Performance on Human and Machine Transla...

Marii Ojastu, Hele-Andra Kuulmets, Aleksei Dorkin, Marika Borovikova, Dage Särg, Kairit Sirts

https://arxiv.org/abs/2511.17290 https://mastoxiv.page/@arXiv_csCL_bot/115604083224487885

- A Systematic Study of In-the-Wild Model Merging for Large Language Models

Oğuz Kağan Hitit, Leander Girrbach, Zeynep Akata

https://arxiv.org/abs/2511.21437 https://mastoxiv.page/@arXiv_csCL_bot/115621178703846052

- CREST: Universal Safety Guardrails Through Cluster-Guided Cross-Lingual Transfer

Lavish Bansal, Naman Mishra

https://arxiv.org/abs/2512.02711 https://mastoxiv.page/@arXiv_csCL_bot/115655090475535157

- Multilingual Medical Reasoning for Question Answering with Large Language Models

Pietro Ferrazzi, Aitor Soroa, Rodrigo Agerri

https://arxiv.org/abs/2512.05658 https://mastoxiv.page/@arXiv_csCL_bot/115683267711014189

- OnCoCo 1.0: A Public Dataset for Fine-Grained Message Classification in Online Counseling Convers...

Albrecht, Lehmann, Poltermann, Rudolph, Steigerwald, Stieler

https://arxiv.org/abs/2512.09804 https://mastoxiv.page/@arXiv_csCL_bot/115700409397020978

- Does Tone Change the Answer? Evaluating Prompt Politeness Effects on Modern LLMs: GPT, Gemini, an...

Hanyu Cai, Binqi Shen, Lier Jin, Lan Hu, Xiaojing Fan

https://arxiv.org/abs/2512.12812 https://mastoxiv.page/@arXiv_csCL_bot/115729149622659403

- Beg to Differ: Understanding Reasoning-Answer Misalignment Across Languages

Ovalle, Ross, Ruder, Williams, Ullrich, Ibrahim, Sagun

https://arxiv.org/abs/2512.22712 https://mastoxiv.page/@arXiv_csCL_bot/115808161882146194

- Activation Steering for Masked Diffusion Language Models

Adi Shnaidman, Erin Feiglin, Osher Yaari, Efrat Mentel, Amit Levi, Raz Lapid

https://arxiv.org/abs/2512.24143 https://mastoxiv.page/@arXiv_csCL_bot/115819533211103315

- JMedEthicBench: A Multi-Turn Conversational Benchmark for Evaluating Medical Safety in Japanese L...

Liu, Li, Niu, Zhang, Xun, Hou, Wang, Iwasawa, Matsuo, Hatakeyama-Sato

https://arxiv.org/abs/2601.01627 https://mastoxiv.page/@arXiv_csCL_bot/115847901607405421

- FACTUM: Mechanistic Detection of Citation Hallucination in Long-Form RAG

Dassen, Kotula, Murray, Yates, Lawrie, Kayi, Mayfield, Duh

https://arxiv.org/abs/2601.05866 https://mastoxiv.page/@arXiv_csCL_bot/115881545684182376

- †DAGGER: Distractor-Aware Graph Generation for Executable Reasoning in Math Problems

Zabir Al Nazi, Shubhashis Roy Dipta, Sudipta Kar

https://arxiv.org/abs/2601.06853 https://mastoxiv.page/@arXiv_csCL_bot/115887753245730019

- Symphonym: Universal Phonetic Embeddings for Cross-Script Name Matching

Stephen Gadd

https://arxiv.org/abs/2601.06932 https://mastoxiv.page/@arXiv_csCL_bot/115887767008671765

- LLMs versus the Halting Problem: Revisiting Program Termination Prediction

Sultan, Armengol-Estape, Kesseli, Vanegue, Shahaf, Adi, O'Hearn

https://arxiv.org/abs/2601.18987 https://mastoxiv.page/@arXiv_csCL_bot/115972010510378715

- MuVaC: A Variational Causal Framework for Multimodal Sarcasm Understanding in Dialogues

Diandian Guo, Fangfang Yuan, Cong Cao, Xixun Lin, Chuan Zhou, Hao Peng, Yanan Cao, Yanbing Liu

https://arxiv.org/abs/2601.20451 https://mastoxiv.page/@arXiv_csCL_bot/115977891530875024

toXiv_bot_toot

2026-04-07 22:50:46

Dashcam video shows fascist paramilitary invaders shoot at car in Patterson, CA (4/7/26)

Lyons said in a written statement that officers conducted a traffic stop Tuesday in Patterson, which is about 80 miles south of Sacramento, “to arrest Carlos Ivan Mendoza Hernandez, an 18th Street Gang member wanted in El Salvador for questioning in connection to a murder.” Lyons stated that when officers approached his car, Mendoza Hernandez “weaponized his vehicle in an attempt to run an officer …

2026-03-04 23:25:41

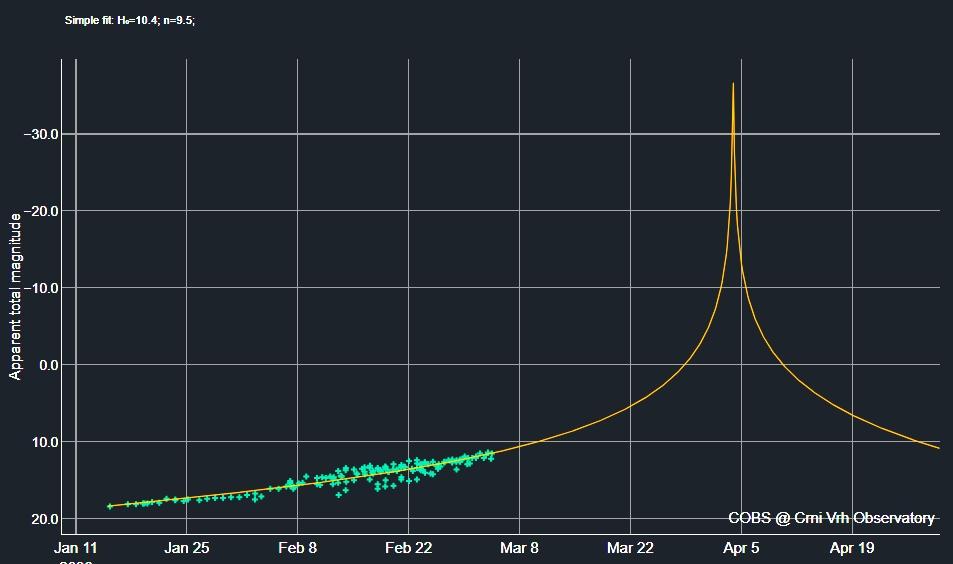

So the new #Kreutz #comet #MAPS is *still* following the constant rapid rise in brightness it has shown since discovery: a dumb extrapolation - https://cobs.si/analysis/?comet=2688&from_date=2026-01-15 00:00&to_date=2026-04-30 00:00&observation_type=V&observation_type=C&plot_x_value=1&plot_y_value=1&fit_option=1&exclude_faint=on&exclude_issue=on&observer=&association=&country=&compare_values=compare - has it getting 10,000 times brighter than the Sun at its extremely close perihelion, which of course makes no physical sense at all.

"It must therefore be assumed that this increase in activity will level off significantly in the near future," writes https://fg-kometen.vdsastro.de/koj_2026/c2026a1/26a1eaus.htm: "More likely are parameters m m0=12.0 mag / n=4 (or even lower), which would still result in a (very short-term) maximum brightness of about –9 mag (but this would probably still be significantly too bright) – always assuming that the comet survives its perihelion passage unscathed."

For other views see http://www.cbat.eps.harvard.edu/iau/cbet/005600/CBET005663.txt and https://arxiv.org/abs/2602.17626 and https://www.facebook.com/photo?fbid=10236580364221799 and https://cometografia.es/cometa-kreutz-2026-a1-maps-analisis/ - and the actual brightness is tracked at https://cobs.si/obs_list?id=2688 where it has now reached ~11.5 mag.
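
The m0 and n parameters quoted above are presumably those of the usual comet brightness power law, m = m0 + 5 log10(Δ) + 2.5 n log10(r), with r the heliocentric and Δ the geocentric distance in au. A minimal Python sketch under that assumption; the function name and the distances are illustrative choices, not the comet's actual ephemeris:

```python
import math

def comet_magnitude(m0, n, r_au, delta_au):
    """Apparent magnitude from the standard power-law brightness formula.

    m0: absolute magnitude, n: activity index,
    r_au: Sun-comet distance, delta_au: Earth-comet distance (both in au).
    """
    return m0 + 5.0 * math.log10(delta_au) + 2.5 * n * math.log10(r_au)

# m0=12.0 and n=4 are the values quoted above; the distances below are
# hypothetical round numbers chosen only to show the scaling.
for r_au in (1.0, 0.1, 0.01):
    print(r_au, round(comet_magnitude(12.0, 4.0, r_au, 1.0), 1))
```

With n=4, every tenfold decrease in r brightens the comet by 2.5 × 4 = 10 magnitudes, which is why even a modest-looking fit yields extreme values at a sungrazer's perihelion.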

2026-03-31 11:12:28

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[1/5]:

- Beyond In-Distribution Success: Scaling Curves of CoT Granularity for Language Model Generalization

Ru Wang, Wei Huang, Selena Song, Haoyu Zhang, Qian Niu, Yusuke Iwasawa, Yutaka Matsuo, Jiaxian Guo

https://arxiv.org/abs/2502.18273 https://mastoxiv.page/@arXiv_csCL_bot/114069031700102129

- Benchmarking NLP-supported Language Sample Analysis for Swiss Children's Speech

Anja Ryser, Yingqiang Gao, Sarah Ebling

https://arxiv.org/abs/2504.00780 https://mastoxiv.page/@arXiv_csCL_bot/114267149909002069

- Cultural Biases of Large Language Models and Humans in Historical Interpretation

Fabio Celli, Georgios Spathulas

https://arxiv.org/abs/2504.02572 https://mastoxiv.page/@arXiv_csCL_bot/114278467094094490

- BRIDGE: Benchmarking Large Language Models for Understanding Real-world Clinical Practice Text

Jiageng Wu, et al.

https://arxiv.org/abs/2504.19467 https://mastoxiv.page/@arXiv_csCL_bot/114420036189999973

- Understanding the Anchoring Effect of LLM with Synthetic Data: Existence, Mechanism, and Potentia...

Yiming Huang, Biquan Bie, Zuqiu Na, Weilin Ruan, Songxin Lei, Yutao Yue, Xinlei He

https://arxiv.org/abs/2505.15392 https://mastoxiv.page/@arXiv_csCL_bot/114550277171100272

- Just as Humans Need Vaccines, So Do Models: Model Immunization to Combat Falsehoods

Raza, Qureshi, Farooq, Lotif, Chadha, Pandya, Emmanouilidis

https://arxiv.org/abs/2505.17870 https://mastoxiv.page/@arXiv_csCL_bot/114572956853819813

- LingoLoop Attack: Trapping MLLMs via Linguistic Context and State Entrapment into Endless Loops

Fu, Jiang, Hong, Li, Guo, Yang, Chen, Zhang

https://arxiv.org/abs/2506.14493 https://mastoxiv.page/@arXiv_csCL_bot/114703502552989170

- GHTM: A Graph-based Hybrid Topic Modeling Approach with a Benchmark Dataset for the Low-Resource ...

Farhana Haque, Md. Abdur Rahman, Sumon Ahmed

https://arxiv.org/abs/2508.00605 https://mastoxiv.page/@arXiv_csCL_bot/114969875643478303

- Link Prediction for Event Logs in the Process Industry

Anastasia Zhukova, Thomas Walton, Christian E. Lobmüller, Bela Gipp

https://arxiv.org/abs/2508.09096 https://mastoxiv.page/@arXiv_csCL_bot/115020938764936882

- AirQA: A Comprehensive QA Dataset for AI Research with Instance-Level Evaluation

Huang, Cao, Zhang, Kang, Wang, Wang, Luo, Zheng, Qian, Chen, Yu

https://arxiv.org/abs/2509.16952 https://mastoxiv.page/@arXiv_csCL_bot/115253526588472475

- Multi-View Attention Multiple-Instance Learning Enhanced by LLM Reasoning for Cognitive Distortio...

Jun Seo Kim, Hyemi Kim, Woo Joo Oh, Hongjin Cho, Hochul Lee, Hye Hyeon Kim

https://arxiv.org/abs/2509.17292 https://mastoxiv.page/@arXiv_csCL_bot/115253586227941157

- Dual-Space Smoothness for Robust and Balanced LLM Unlearning

Han Yan, Zheyuan Liu, Meng Jiang

https://arxiv.org/abs/2509.23362 https://mastoxiv.page/@arXiv_csCL_bot/115293308293558024

- The Rise of AfricaNLP: Contributions, Contributors, Community Impact, and Bibliometric Analysis

Tadesse Destaw Belay, et al.

https://arxiv.org/abs/2509.25477 https://mastoxiv.page/@arXiv_csCL_bot/115298213432594791

- Open ASR Leaderboard: Towards Reproducible and Transparent Multilingual and Long-Form Speech Reco...

Srivastav, Zheng, Bezzam, Le Bihan, Koluguri, Żelasko, Majumdar, Moumen, Gandhi

https://arxiv.org/abs/2510.06961 https://mastoxiv.page/@arXiv_csCL_bot/115343748052193267

- Neuron-Level Analysis of Cultural Understanding in Large Language Models

Taisei Yamamoto, Ryoma Kumon, Danushka Bollegala, Hitomi Yanaka

https://arxiv.org/abs/2510.08284 https://mastoxiv.page/@arXiv_csCL_bot/115349533441895984

- CLMN: Concept based Language Models via Neural Symbolic Reasoning

Yibo Yang

https://arxiv.org/abs/2510.10063 https://mastoxiv.page/@arXiv_csCL_bot/115372392366793754

- Schema for In-Context Learning

Chen, Chen, Wang, Leong, Fung, Bernales, Aspuru-Guzik

https://arxiv.org/abs/2510.13905 https://mastoxiv.page/@arXiv_csCL_bot/115389057899856601

- Evaluating Latent Knowledge of Public Tabular Datasets in Large Language Models

Matteo Silvestri, Fabiano Veglianti, Flavio Giorgi, Fabrizio Silvestri, Gabriele Tolomei

https://arxiv.org/abs/2510.20351 https://mastoxiv.page/@arXiv_csCL_bot/115428615784704418

- LuxIT: A Luxembourgish Instruction Tuning Dataset from Monolingual Seed Data

Julian Valline, Cedric Lothritz, Siwen Guo, Jordi Cabot

https://arxiv.org/abs/2510.24434 https://mastoxiv.page/@arXiv_csCL_bot/115457025096322944

- Surfacing Subtle Stereotypes: A Multilingual, Debate-Oriented Evaluation of Modern LLMs

Muhammed Saeed, Muhammad Abdul-mageed, Shady Shehata

https://arxiv.org/abs/2511.01187 https://mastoxiv.page/@arXiv_csCL_bot/115491321130591723

toXiv_bot_toot

2026-03-01 17:49:38

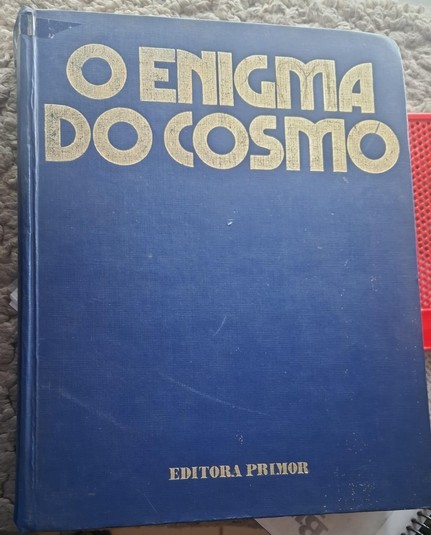

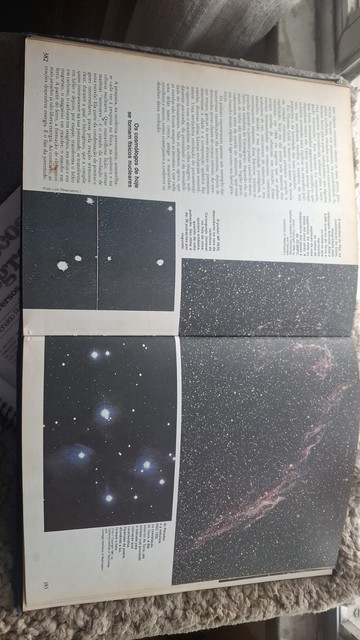

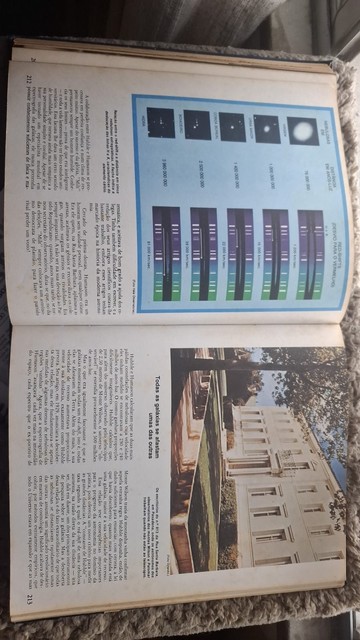

Tidying up, organizing, and cleaning books (#livros) is a trip through time. I LOVED this book during my childhood and adolescence. It was without a doubt one of the things that got me interested in the exact sciences. This formula of using scientists' biographies as a connecting thread to explain science works very well. And there is a lot of real science being taught in it. Universal gravitation, the …

2026-03-31 18:58:31

2026-04-27 22:30:08

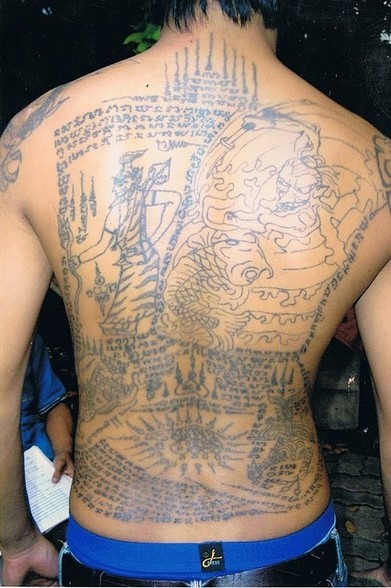

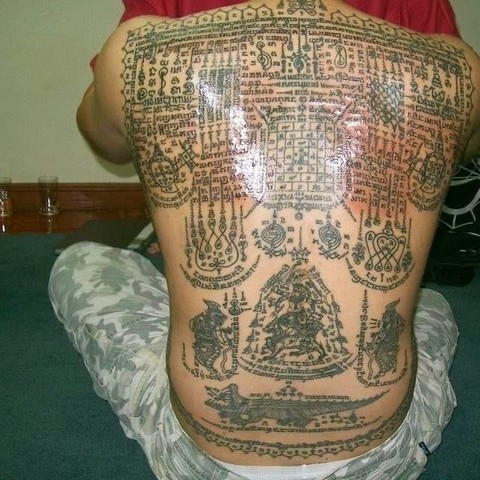

The rules of abstention (khatha kamma; ข้อห้ามศิษย์ยันต์) for sak yant tattoos vary depending on the master. Each lineage may prescribe slightly different codes of conduct, which are considered essential to preserve the power of the yant. The following are the rules traditionally taught at Wat Bang Phra:

• Do not eat star fruit, pumpkin, or other gourd-like vegetables.

• Do not become romantically involved with someone who is already married.

• Never insult or slander anyone’…

2026-04-30 16:08:10

David Louis Hollembaek (Veeva Systems) and Hande Kafkas (cognee) will tackle one of the most pressing AI challenges right now: getting context right for AI agents. Two search veterans, one great session.

Learn more: https://2026.berlinbuzzwords.de/session/search-is-back-solving-the-context-crisis-for-ai-agents/

Get your ticket: https://2026.berlinbuzzwords.de/tickets/

2026-04-04 00:45:23

I've been attempting to configure Vim-Classic in a way that works for my taste.

https://sr.ht/~sircmpwn/vim-classic/

This is Drew DeVault's fork of Vim from around 8.2, before the codebase was tainted by LLM commits. I've been using Neovim long enough that I got really used to Lua …