2026-03-10 21:05:50

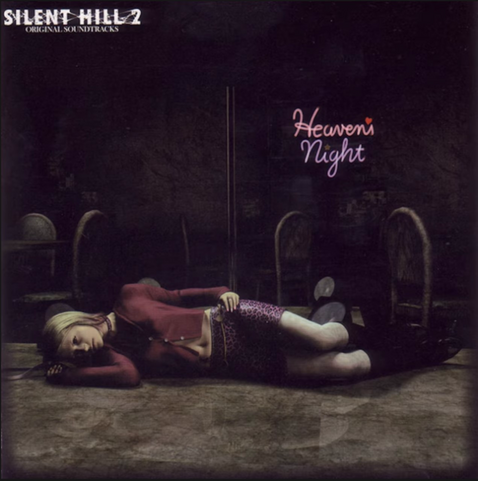

I love this track from Silent Hill 2.

I heard the new one from the Remake aaaaand it's a good track, but in my opinion it isn't better than the original.

Maybe the nostalgia is hitting me hard. I don't know 🤔

#silenthill2 #silenthill2remake

2026-03-11 17:17:48

Submit Your Song to the Second General Strike Song Contest - Labor Heritage Foundation https://laborheritage.org/content.aspx?page_id=5&club_id=533040&item_id=132879&action=view&al=y&actr=3

2026-04-14 11:06:02

Hello, fedizens!

For several days now, the hard drive holding the data partition of my venerable computer has been showing signs of dying. Again (and it's a recent drive). I'd like to recover the files created since my last backup, but the drive refuses to mount. Booted from a live USB, I ran fsck and got this error message: "attempt to read block from filesystem resulted in short read while trying to open /dev/sda2. Could this be a zero-length partition?"

Des …

2026-02-28 10:20:01

As salty as I am about it, there's also another way to think about this. For anyone who still has connections to folks on the right (which is perhaps unlikely for anyone on this server, but I digress), the cult that has consumed them thrives on isolation and grievance.

The words "you were right" have the potential to cut through the programming and open up an opportunity for reconnection. The modern conspiratorial cult of the Right has been built partially around people who were told they were wrong or were crazy. In the vast majority of cases, they were wrong, and even when they were right, they completely misunderstood why, but we'll skip that for now. Liberals making fun of them (even the times when they definitely earned it) has pushed them further and further into their ideological hole.

The thing about those words, "you were right," in this context is that the reconnection they offer also requires taking one small step of betraying their ideology in order to accept them. So they must choose between maintaining allegiance to a pedophile or finally getting to feel superior after years of living in an illusion of persecution.

Under the ideology of the Right, admitting one is wrong is a weakness. It is admitting defeat. They have to "own the libs" by saying things, things that they know aren't true, in order to feel dominant. But these things are often so absurd that they end up being made fun of, feeling even more weak and pathetic, reinforcing their fear and alienation.

Giving them what they're looking for can offer a way out, but only if they're willing to start recognizing the thing they've supported for what it is.

And they were right about some things. They were right that Bill Gates was a terrible person. I've had plenty of liberals defend him based on his philanthropy-washing, but he's awful and always has been. The Epstein links make that blatant. They intuitively recognized him and didn't trust him, even if they were wildly off base about *how and why* he shouldn't be trusted... Even if their correct mistrust was leveraged into one of the most destructive conspiracy theories ever (vaccine denial and COVID vaccine avoidance).

They were right about Bill Clinton. He was always shady as fuck. Sure, the people who attacked him at the time turned out to be even more shady but that's not the point right now. He was connected to Epstein and that was always creepy as fuck.

And the Epstein thing was an open secret that liberals ignored for a long time. It was seen as some weird thing that right wing nutjobs believed about the Clintons. But it was true. Not all of it, and there has always been an antisemitic element to the right wing interpretation of the Epstein stuff, but his whole pedophile conspiracy was always kind of real.

The whole "Illuminati"/deep state thing is a vast oversimplification, an attempt to make comprehensible an incredibly complex set of interlocking and emergent behaviors. But Epstein did very much want to remake the world, to create a new world order, and he absolutely played a part in it.

The Right wing nutjobs talked about global authoritarianism, Blackhawks flying over American cities, masked men with guns disarming and executing legal gun owners in the streets. That's all happening right now.

The "FEMA concentration camps" are not actually that far off. ICE and FEMA are sister agencies, both under DHS. I'd be more than happy to call that one "close enough" in order to hear some MAGA admit that ICE is, in fact, building concentration camps.

There was always a huge millennialist element to these things. They tended to be connected to "the antichrist." It was absurd, especially for me as someone who no longer identifies as a Christian. But I'll even acquiesce to that to a degree. The "number of the Beast" is 666. That's just the numerical value of "Nero Caesar" spelled in Hebrew. Revelation focuses a lot on Nero coming back to life after his death, a death that involved a head wound, thus the line from Revelation 13:3:

> And I saw one of his heads as if it had been mortally wounded, and his deadly wound was healed. And all the world marveled and followed the beast.

The parallels between Trump and Nero are easy to draw, and Trump's ear wound feels pretty on-the-nose for this. I don't believe in "prophecy" in this way. I think that there are patterns, and useful patterns can become encoded in belief systems. But I will, again, happily call this one "close enough" for anyone on that side willing to also acknowledge it. I'm happy to meet on that common ground, because anyone who accepts it must recognize that their duty is to fight against it.

A lot of these correct nuggets are embedded in a framework of religious extremism and antisemitism. The vast majority of the beliefs holding these together are wildly wrong and incredibly toxic. But giving them some room to feel validated, listened to, and understood can give them room to admit the things they got wrong.

Cult de-programming starts with an opening. People have to talk through their own thoughts, hear their own inconsistencies. Guiding questions can help them untangle these things for themselves. And it all starts by having enough room to feel safe, to not feel cornered, to not feel stupid. Admitting mistakes means being vulnerable, and the MAGA cult is built on fear. It's built on exploiting vulnerability and locking it away.

De-programming takes a long time. It's not easy. It takes patience. But every person who comes out does so with a powerful perspective, a deep understanding, that can be turned back against it. The best people at getting people out of cults are former members. Some of the most dedicated antifa are former fascists who understood their mistakes and have dedicated their lives to fixing them.

2026-03-31 11:12:48

Replaced article(s) found for cs.CL. https://arxiv.org/list/cs.CL/new

[2/5]:

- POTSA: A Cross-Lingual Speech Alignment Framework for Speech-to-Text Translation

Li, Cui, Wang, Ge, Huang, Li, Peng, Lu, Tashi, Wang, Dang

https://arxiv.org/abs/2511.09232 https://mastoxiv.page/@arXiv_csCL_bot/115541846907664054

- Beyond Elicitation: Provision-based Prompt Optimization for Knowledge-Intensive Tasks

Yunzhe Xu, Zhuosheng Zhang, Zhe Liu

https://arxiv.org/abs/2511.10465 https://mastoxiv.page/@arXiv_csCL_bot/115547607561282911

- π-Attention: Periodic Sparse Transformers for Efficient Long-Context Modeling

Dong Liu, Yanxuan Yu

https://arxiv.org/abs/2511.10696 https://mastoxiv.page/@arXiv_csCL_bot/115564418836654965

- Based on Data Balancing and Model Improvement for Multi-Label Sentiment Classification Performanc...

Zijin Su, Huanzhu Lyu, Yuren Niu, Yiming Liu

https://arxiv.org/abs/2511.14073 https://mastoxiv.page/@arXiv_csCL_bot/115575715073023141

- HEAD-QA v2: Expanding a Healthcare Benchmark for Reasoning

Alexis Correa-Guillén, Carlos Gómez-Rodríguez, David Vilares

https://arxiv.org/abs/2511.15355 https://mastoxiv.page/@arXiv_csCL_bot/115581410328165116

- Towards Hyper-Efficient RAG Systems in VecDBs: Distributed Parallel Multi-Resolution Vector Search

Dong Liu, Yanxuan Yu

https://arxiv.org/abs/2511.16681 https://mastoxiv.page/@arXiv_csCL_bot/115603508442305146

- Estonian WinoGrande Dataset: Comparative Analysis of LLM Performance on Human and Machine Transla...

Marii Ojastu, Hele-Andra Kuulmets, Aleksei Dorkin, Marika Borovikova, Dage Särg, Kairit Sirts

https://arxiv.org/abs/2511.17290 https://mastoxiv.page/@arXiv_csCL_bot/115604083224487885

- A Systematic Study of In-the-Wild Model Merging for Large Language Models

Oğuz Kağan Hitit, Leander Girrbach, Zeynep Akata

https://arxiv.org/abs/2511.21437 https://mastoxiv.page/@arXiv_csCL_bot/115621178703846052

- CREST: Universal Safety Guardrails Through Cluster-Guided Cross-Lingual Transfer

Lavish Bansal, Naman Mishra

https://arxiv.org/abs/2512.02711 https://mastoxiv.page/@arXiv_csCL_bot/115655090475535157

- Multilingual Medical Reasoning for Question Answering with Large Language Models

Pietro Ferrazzi, Aitor Soroa, Rodrigo Agerri

https://arxiv.org/abs/2512.05658 https://mastoxiv.page/@arXiv_csCL_bot/115683267711014189

- OnCoCo 1.0: A Public Dataset for Fine-Grained Message Classification in Online Counseling Convers...

Albrecht, Lehmann, Poltermann, Rudolph, Steigerwald, Stieler

https://arxiv.org/abs/2512.09804 https://mastoxiv.page/@arXiv_csCL_bot/115700409397020978

- Does Tone Change the Answer? Evaluating Prompt Politeness Effects on Modern LLMs: GPT, Gemini, an...

Hanyu Cai, Binqi Shen, Lier Jin, Lan Hu, Xiaojing Fan

https://arxiv.org/abs/2512.12812 https://mastoxiv.page/@arXiv_csCL_bot/115729149622659403

- Beg to Differ: Understanding Reasoning-Answer Misalignment Across Languages

Ovalle, Ross, Ruder, Williams, Ullrich, Ibrahim, Sagun

https://arxiv.org/abs/2512.22712 https://mastoxiv.page/@arXiv_csCL_bot/115808161882146194

- Activation Steering for Masked Diffusion Language Models

Adi Shnaidman, Erin Feiglin, Osher Yaari, Efrat Mentel, Amit Levi, Raz Lapid

https://arxiv.org/abs/2512.24143 https://mastoxiv.page/@arXiv_csCL_bot/115819533211103315

- JMedEthicBench: A Multi-Turn Conversational Benchmark for Evaluating Medical Safety in Japanese L...

Liu, Li, Niu, Zhang, Xun, Hou, Wang, Iwasawa, Matsuo, Hatakeyama-Sato

https://arxiv.org/abs/2601.01627 https://mastoxiv.page/@arXiv_csCL_bot/115847901607405421

- FACTUM: Mechanistic Detection of Citation Hallucination in Long-Form RAG

Dassen, Kotula, Murray, Yates, Lawrie, Kayi, Mayfield, Duh

https://arxiv.org/abs/2601.05866 https://mastoxiv.page/@arXiv_csCL_bot/115881545684182376

- †DAGGER: Distractor-Aware Graph Generation for Executable Reasoning in Math Problems

Zabir Al Nazi, Shubhashis Roy Dipta, Sudipta Kar

https://arxiv.org/abs/2601.06853 https://mastoxiv.page/@arXiv_csCL_bot/115887753245730019

- Symphonym: Universal Phonetic Embeddings for Cross-Script Name Matching

Stephen Gadd

https://arxiv.org/abs/2601.06932 https://mastoxiv.page/@arXiv_csCL_bot/115887767008671765

- LLMs versus the Halting Problem: Revisiting Program Termination Prediction

Sultan, Armengol-Estape, Kesseli, Vanegue, Shahaf, Adi, O'Hearn

https://arxiv.org/abs/2601.18987 https://mastoxiv.page/@arXiv_csCL_bot/115972010510378715

- MuVaC: A Variational Causal Framework for Multimodal Sarcasm Understanding in Dialogues

Diandian Guo, Fangfang Yuan, Cong Cao, Xixun Lin, Chuan Zhou, Hao Peng, Yanan Cao, Yanbing Liu

https://arxiv.org/abs/2601.20451 https://mastoxiv.page/@arXiv_csCL_bot/115977891530875024
