2026-04-01 13:56:06

US kid safety groups say they didn't know OpenAI had entirely funded the Parents & Kids Safe AI Coalition to promote CA legislation until after it was announced (Emily Shugerman/The San Francisco Standard)

https://sfstandard.com/2026/04/01/openai-ai-kids-safety-coa…

2026-04-02 09:21:35

Imagine:

You are the parent of an adorable 4-year-old kid. They have made a toy airplane out of spare cardboard. Sadly, during play a wing has fallen off. You, a wise parent, produce a piece of duct tape and tape it back on. Your kid asks: "But what if the tape breaks, or the other wing falls off?" Dutifully, and in a completely serious manner, you duct tape the other wing, and then with a sharpie you write "Please DO NOT fall off!" on each wing. "There," you say, "now the wings will not fall off."

Your child happily returns to their play.

Imagine:

You are boarding a Boeing airplane for an intercontinental flight. Just the other day you were reading news about the emergency exit door falling off a Boeing airplane during flight. Thankfully nobody was injured in that incident, but a passenger could have been sucked out through the gap and killed. As you walk down the aisle towards your seat at the back, you notice that around the emergency exit door of this plane, there are some scratch marks. The door looks like it might not be 100% seated in place. You see several rolls' worth of duct tape slapped onto the gaps between the door and the frame. In sharpie, someone has written "Please DO NOT fall off!" on the duct tape.

This is a post about #Agentic #AI.

To clarify: there are a host of reasons why using Claude Code is unethical in the first place, besides the fact that it's a danger to its users. These make it unethical to use even for a child's-toy-like application. But the source code we've just witnessed in the recent leak is *exactly* this level of "engineering." If you see an app that claims to be "programmed with AI" and it has any possibility of failing in a way that could harm you (for example, if it connects to the internet, meaning that poor programming could allow hackers to take over the device you run it on), my advice is: "Do not use it, and warn your friends and family."

P.S. Yes, this advice does apply to Microsoft Windows at this point, although that can be a tougher bullet to bite.

2026-06-02 09:01:29

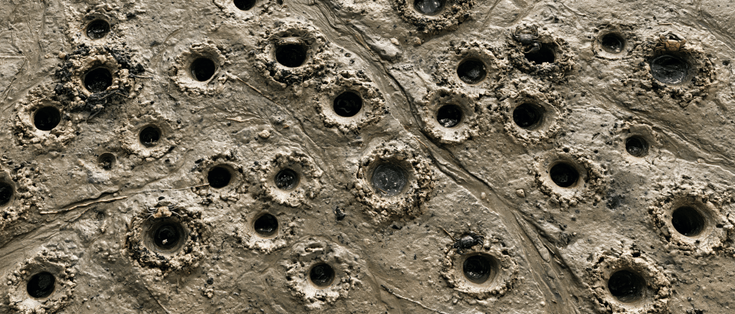

Review article: Restoring vision and touch with cortical microstimulation #BCI

2026-05-01 22:10:54

Servers operated by Ubuntu and its parent company Canonical have been down for more than a day, following a "sustained, cross-border attack" (Dan Goodin/Ars Technica)

https://arstechnica.com/security/2026/05/ubuntu-infrastructure-ha…

2026-05-30 14:57:55

Backstage Collapsed: Universal Recording and the Architecture of Courtship

A panelist on a recent broadcast conversation made the following argument. Young people across the wealthy world are not having children. Before they do not have children, they do not date. Before they do not date, they do not interact at the dances, the parties, the mixers their parents and grandparents used as the primary infrastructure for finding mates. Even when they show up at such…

2026-03-30 12:31:22

Sadly couldn't join myself (wife wasn't feeling good and wanted me to stay home and help parent while she napped) but my SAR team participated in a great inter-agency training this past weekend.

It's always nice to practice alongside volunteers from other teams that we work with on real incidents and get to know each other without the pressure of a real emergency.

One note: I half feel like there should be a CW on this post for mentioning law enforcement in a positiv…

2026-04-29 10:00:01

If you set limits for a scale (e.g. the x-axis) in ggplot, how would you like data outside of that range to be handled? There is the oob parameter for that, and a set of functions to use with it: https://scales.r-lib.org/reference/oob.html
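
For anyone unsure what the choices mean, here is a rough sketch of three of the out-of-bounds behaviours. It is written in plain Python to illustrate the semantics only; it is not the scales API. The function names `censor`, `squish`, and `keep` are hypothetical stand-ins that mimic what `oob_censor()` (replace with a missing value), `oob_squish()` (clamp to the nearest limit), and `oob_keep()` (leave untouched) do, and the sample data and limits are made up.

```python
import math


def censor(values, limits):
    """Mimics oob_censor(): out-of-range values become missing (NaN here)."""
    lo, hi = limits
    return [v if lo <= v <= hi else math.nan for v in values]


def squish(values, limits):
    """Mimics oob_squish(): out-of-range values are clamped to the nearest limit."""
    lo, hi = limits
    return [min(max(v, lo), hi) for v in values]


def keep(values, limits):
    """Mimics oob_keep(): values are left untouched; limits only affect the view."""
    return list(values)


# Made-up example data and limits (assumptions for illustration).
data = [-2.0, 0.5, 3.0, 12.0]
limits = (0.0, 10.0)

censored = censor(data, limits)
squished = squish(data, limits)
kept = keep(data, limits)
```

The practical difference: censoring drops points before statistics are computed, squishing piles them up at the edge of the range, and keeping leaves the data alone so that summaries such as smoothers or boxplots still see every value.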

2026-04-25 19:55:42

An Egyptian family of six believed to be the longest held at the controversial South Texas Family Residential Center in Dilley, the nation’s only federal immigrant facility authorized to imprison parents with their children,

were redetained Saturday

after federal judges this week ordered their release.

They are being sent to Egypt on a private plane, according to one of the family’s lawyers.

2026-04-24 01:55:07

- 7:30pm -

me: "Eat now. I don't want to hear that you're hungry at bed time"

14yo: "I'm full"

8yo: "Me too!"

- 9pm -

me: "Let's go, into bed."

8yo: "But I'm hungry!"

14yo: "Y'know, now that you mention it, I'm feeling a bit peckish.."

#parenting

2026-04-26 02:56:30

Survey of 1,050 Australian teens: ~60% said they retained access to social media accounts after ban; two-thirds say platforms took no action to remove accounts (Sasha Rogelberg/Fortune)

https://fortune.com/2026/04/25/australia-social-me…