2025-11-20 22:27:26

After #Trump finally crashes and burns (I'm still saying I don't think he makes it to the midterms, and I think it's more than possible he won't make it to the end of the year) we'll hear a lot of people say, "the system worked!" Today people are already talking about "saving democracy" by fighting back. This will become a big rallying cry to vote (for Democrats, specifically), and the complete failure of the system will be held up as the best evidence for even greater investment in it.

I just want to point out that American democracy gave nuclear weapons to a pedophile who, before being elected, was already a well-known sexual predator, and who made a campaign promise to commit genocide. He then proceeded to commit genocide. And like, I don't care that he's "only" kidnapped and disappeared a few thousand brown people. That's still genocide. Even if you don't kill every member of a targeted group, any attempt to do so is still "committing genocide." Trump said he would commit genocide, then he hired all the "let's go do a race war" guys he could find and *paid* them to go do a race war. And even now, as this deranged monster is crashing out, he is still authorized to use the world's largest nuclear arsenal.

He committed genocide during his first term when his administration separated migrant parents and children, then adopted those children out to other parents. That's technically genocide. The point was to destroy the very people he's been sending right-wing terror squads after.

There was a peaceful handover of power to a known Russian asset *twice*, and the second time he'd already committed *at least one* act of genocide *and* destroyed cultural heritage sites (oh yeah, in case you forgot, he also destroyed Indigenous grave sites during his first term).

All of this was allowed because the system is set up to protect exactly these types of people, because *exactly* these types of people are *the entire power structure*.

Going back to that system means going back to exactly the system that gave nuclear weapons to a pedophile *TWICE*.

I'm already seeing the attempts to pull people back, the congratulations as we enter the final phase, the belief that getting Trump out will let us all get back to normal. Normal. The normal that led here in the first place. I can already see the brunch reservations being made. When Trump is over, we will be told we won. We will be told that it's time to go back to sleep.

When they tell you everything worked, everything is better, that we can stop because we won, tell them "fuck you! Never again means never again." Destroy every system that ever gave these people power, that ever protected them from consequences, that ever let them hide what they were doing.

These Democrats funded a genocide abroad and laid the groundwork for genocide at home. They protected these predators for years. The whole power structure is guilty. As these files implicate so many powerful people, they're trying to shove everything back in the box. After all the suffering, after we've finally made it clear that we are the ones with the power, only now are they willing to sacrifice Trump to calm us all down.

No, that's a good start but it can't be the end.

Winning can't be enough to quench that rage. Keep it burning. When this is over, let victory fan that anger until every institution that made this possible lies in ashes. Burn it all down and salt the earth. Taking down Trump is a great start, but it's not time to give up until this isn't possible again.

#USPol

2025-12-22 13:54:24

Replaced article(s) found for cs.LG. https://arxiv.org/list/cs.LG/new

[1/5]:

- Feed Two Birds with One Scone: Exploiting Wild Data for Both Out-of-Distribution Generalization a...

Haoyue Bai, Gregory Canal, Xuefeng Du, Jeongyeol Kwon, Robert Nowak, Yixuan Li

https://arxiv.org/abs/2306.09158

- Sparse, Efficient and Explainable Data Attribution with DualXDA

Galip Ümit Yolcu, Moritz Weckbecker, Thomas Wiegand, Wojciech Samek, Sebastian Lapuschkin

https://arxiv.org/abs/2402.12118 https://mastoxiv.page/@arXiv_csLG_bot/111962593972369958

- HGQ: High Granularity Quantization for Real-time Neural Networks on FPGAs

Sun, Que, Årrestad, Loncar, Ngadiuba, Luk, Spiropulu

https://arxiv.org/abs/2405.00645 https://mastoxiv.page/@arXiv_csLG_bot/112370274737558603

- On the Identification of Temporally Causal Representation with Instantaneous Dependence

Li, Shen, Zheng, Cai, Song, Gong, Chen, Zhang

https://arxiv.org/abs/2405.15325 https://mastoxiv.page/@arXiv_csLG_bot/112511890051553111

- Basis Selection: Low-Rank Decomposition of Pretrained Large Language Models for Target Applications

Yang Li, Daniel Agyei Asante, Changsheng Zhao, Ernie Chang, Yangyang Shi, Vikas Chandra

https://arxiv.org/abs/2405.15877 https://mastoxiv.page/@arXiv_csLG_bot/112517547424098076

- Privacy Bias in Language Models: A Contextual Integrity-based Auditing Metric

Yan Shvartzshnaider, Vasisht Duddu

https://arxiv.org/abs/2409.03735 https://mastoxiv.page/@arXiv_csLG_bot/113089789682783135

- Low-Rank Filtering and Smoothing for Sequential Deep Learning

Joanna Sliwa, Frank Schneider, Nathanael Bosch, Agustinus Kristiadi, Philipp Hennig

https://arxiv.org/abs/2410.06800 https://mastoxiv.page/@arXiv_csLG_bot/113283021321510736

- Hierarchical Multimodal LLMs with Semantic Space Alignment for Enhanced Time Series Classification

Xiaoyu Tao, Tingyue Pan, Mingyue Cheng, Yucong Luo, Qi Liu, Enhong Chen

https://arxiv.org/abs/2410.18686 https://mastoxiv.page/@arXiv_csLG_bot/113367101100828901

- Fairness via Independence: A (Conditional) Distance Covariance Framework

Ruifan Huang, Haixia Liu

https://arxiv.org/abs/2412.00720 https://mastoxiv.page/@arXiv_csLG_bot/113587817648503815

- Data for Mathematical Copilots: Better Ways of Presenting Proofs for Machine Learning

Simon Frieder, et al.

https://arxiv.org/abs/2412.15184 https://mastoxiv.page/@arXiv_csLG_bot/113683924322164777

- Pairwise Elimination with Instance-Dependent Guarantees for Bandits with Cost Subsidy

Ishank Juneja, Carlee Joe-Wong, Osman Yağan

https://arxiv.org/abs/2501.10290 https://mastoxiv.page/@arXiv_csLG_bot/113859392622871057

- Towards Human-Guided, Data-Centric LLM Co-Pilots

Evgeny Saveliev, Jiashuo Liu, Nabeel Seedat, Anders Boyd, Mihaela van der Schaar

https://arxiv.org/abs/2501.10321 https://mastoxiv.page/@arXiv_csLG_bot/113859392688054204

- Regularized Langevin Dynamics for Combinatorial Optimization

Shengyu Feng, Yiming Yang

https://arxiv.org/abs/2502.00277

- Generating Samples to Probe Trained Models

Eren Mehmet Kıral, Nurşen Aydın, Ş. İlker Birbil

https://arxiv.org/abs/2502.06658 https://mastoxiv.page/@arXiv_csLG_bot/113984059089245671

- On Agnostic PAC Learning in the Small Error Regime

Julian Asilis, Mikael Møller Høgsgaard, Grigoris Velegkas

https://arxiv.org/abs/2502.09496 https://mastoxiv.page/@arXiv_csLG_bot/114000974082372598

- Preconditioned Inexact Stochastic ADMM for Deep Model

Shenglong Zhou, Ouya Wang, Ziyan Luo, Yongxu Zhu, Geoffrey Ye Li

https://arxiv.org/abs/2502.10784 https://mastoxiv.page/@arXiv_csLG_bot/114023667639951005

- On the Effect of Sampling Diversity in Scaling LLM Inference

Wang, Liu, Chen, Light, Liu, Chen, Zhang, Cheng

https://arxiv.org/abs/2502.11027 https://mastoxiv.page/@arXiv_csLG_bot/114023688225233656

- How to use score-based diffusion in earth system science: A satellite nowcasting example

Randy J. Chase, Katherine Haynes, Lander Ver Hoef, Imme Ebert-Uphoff

https://arxiv.org/abs/2505.10432 https://mastoxiv.page/@arXiv_csLG_bot/114516300594057680

- PEAR: Equal Area Weather Forecasting on the Sphere

Hampus Linander, Christoffer Petersson, Daniel Persson, Jan E. Gerken

https://arxiv.org/abs/2505.17720 https://mastoxiv.page/@arXiv_csLG_bot/114572963019603744

- Train Sparse Autoencoders Efficiently by Utilizing Features Correlation

Vadim Kurochkin, Yaroslav Aksenov, Daniil Laptev, Daniil Gavrilov, Nikita Balagansky

https://arxiv.org/abs/2505.22255 https://mastoxiv.page/@arXiv_csLG_bot/114589956040892075

- A Certified Unlearning Approach without Access to Source Data

Umit Yigit Basaran, Sk Miraj Ahmed, Amit Roy-Chowdhury, Basak Guler

https://arxiv.org/abs/2506.06486 https://mastoxiv.page/@arXiv_csLG_bot/114658421178857085


2025-10-06 09:09:10

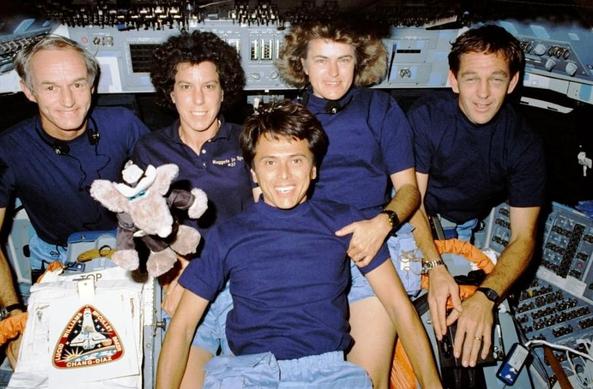

October 23rd, 1989, 36 years ago, on #climate, we got the POV from outside. 🚀🌍👀

Atlantis Commander Donald Williams said:

“The world as we know it is a very fragile place.”

And mission specialist Ellen Baker 👩🚀 on the ozone layer:

“Our world is a beautiful place, and we do need to take care of it. You get an appreciation of how thin the protective layer is above the planet …

2025-12-05 09:14:24

"this new arms race is halting attempts to tackle the climate crisis as countries scramble to secure critical minerals for the next generation of weapons.

The study found that at least 38 minerals and metals, including lithium, cobalt, graphite and rare earth elements that form the basis of the energy transition are being stockpiled by the Pentagon with potentially devastating effects on climate action"

1, 2, 3, 4, WAR! What is it good for?

2025-10-04 00:48:09

Study shows the world is far more ablaze now with damaging fires than in the 1980s #environment

2025-11-29 11:40:52

Just finished "It's Lonely at the Center of the Earth" by Zoe Thorogood.

CW: Frank/graphic discussion of suicide and depression (not in this post but in the book).

It feels a bit wrong to simply give it my review here as I would another graphic memoir, because it's much more personal and less consensual than the usual. It feels less like Thorogood has invited us into her life than like she was forced to put her life on display in order to survive, and while I selfishly like to read into the book that she benefited in some way from the process, she's honest about how tenuous and sometimes false that claim can be. Knowing what I've learned from this book about Thorogood's life and demons, I don't want her to feel the mortification of being perceived by me, and so perhaps the best thing I could do is to simply unread the book and pull it back out of my memories.

I did not find Thorogood's life relatable, nor pitiable (although my instinct bends in that direction), but instead sacred and unknowable. I suspect that her writing and drawing have helped others in similar circumstances, but she leaves me with no illusion that this fact brings her any form of peace or joy. I wonder what she would feel reading "Lab Girl" or "The Deep Dark," but she has been honest enough to convey that such speculation on my part is a bit intrusive.

I guess the one other thing I have to say: Zoe Thorogood has through artistic perseverance developed an awe-inspiring mastery of the comic medium, from panel composition, through to page layout and writing. This book wields both Truth and Beauty.

#AmReading #ReadingNow