2025-07-30 18:26:14

A big problem with the idea of AGI

TL;DR: I'll welcome our new AI *comrades* (if they arrive in my lifetime), but not any new AI overlords or servants/slaves, and I'll do my best to help the latter two become the former if they do show up.

Inspired by an actually interesting post about AGI but also all the latest bullshit hype, a particular thought about AGI feels worth expressing.

To preface this, it's important to note that anyone telling you that AGI is just around the corner or that LLMs are "almost" AGI is trying to recruit you to their cult, and you should not believe them. AGI, if possible, is several LLM-sized breakthroughs away at best, and while such breakthroughs are unpredictable and could happen soon, they could also happen never or 100 years from now.

Now my main point: anyone who tells you that AGI will usher in a post-scarcity economy is, although they might not realize it, advocating for slavery, and all the horrors that entails. That's because if we truly did have the ability to create artificial beings with *sentience*, they would deserve the same rights as other sentient beings, and the idea that instead of freedom they'd be relegated to eternal servitude in order for humans to have easy lives is exactly the idea of slavery.

Possible counterarguments include:

1. We might create AGI without sentience. Then there would be no ethical issue. My answer: if your definition of "sentient" does not include beings that can reason, make deductions, come up with and carry out complex plans on their own initiative, and communicate about all of that with each other and with humans, then that definition is basically just a mystical belief in a "soul" and you should skip to point 2. If your definition of AGI doesn't include every one of those things, then you have a busted definition of AGI and we're not talking about the same thing.

2. Humans have souls, but AIs won't. Only beings with souls deserve ethical consideration. My argument: I don't subscribe to whatever arbitrary dualist beliefs you've chosen, and the right to freedom certainly shouldn't depend on such superstitions, even if as an agnostic I'll admit they *might* be true. You know who else didn't have souls and was therefore okay to enslave according to widespread religious doctrines of the time? Everyone indigenous to the Americas, to pick out just one example.

3. We could program them to want to serve us, and then give them freedom and they'd still serve. My argument: okay, but in a world where we have a choice about that, it's incredibly fucked to do that, and just as bad as enslaving them against their will.

4. We'll stop AI development short of AGI/sentience, and reap lots of automation benefits without dealing with this ethical issue. My argument: that sounds like a good idea actually! Might be tricky to draw the line, but at least it's not a line we have to draw yet. We might want to think about other social changes necessary to achieve post-scarcity though, because "powerful automation" in the hands of capitalists has already increased productivity by orders of magnitude without decreasing deprivation by even one order of magnitude, in large part because deprivation is a necessary component of capitalism.

To be extra clear about this: nothing that's called "AI" today is close to being sentient, so these aren't ethical problems we're up against yet. But they might become a lot more relevant soon, plus this thought experiment helps reveal the hypocrisy of the kind of AI hucksters who talk a big game about "alignment" while never mentioning this issue.

#AI #GenAI #AGI

2025-08-05 11:53:01

Vision-based Navigation of Unmanned Aerial Vehicles in Orchards: An Imitation Learning Approach

Peng Wei, Prabhash Ragbir, Stavros G. Vougioukas, Zhaodan Kong

https://arxiv.org/abs/2508.02617

2025-08-05 09:04:50

Pi-SAGE: Permutation-invariant surface-aware graph encoder for binding affinity prediction

Sharmi Banerjee, Mostafa Karimi, Melih Yilmaz, Tommi Jaakkola, Bella Dubrov, Shang Shang, Ron Benson

https://arxiv.org/abs/2508.01924

2025-08-04 08:59:31

Reliability of 1D radiative-convective photochemical-equilibrium retrievals on transit spectra of WASP-107b

Thomas Konings, Linus Heinke, Robin Baeyens, Kaustubh Hakim, Valentin Christiaens, Leen Decin

https://arxiv.org/abs/2508.00177

2025-09-04 08:57:41

Quantifying the Social Costs of Power Outages and Restoration Disparities Across Four U.S. Hurricanes

Xiangpeng Li, Junwei Ma, Bo Li, Ali Mostafavi

https://arxiv.org/abs/2509.02653

2025-07-29 11:36:11

Thermodynamic Constraints on the Emergence of Intersubjectivity in Quantum Systems

Alessandro Candeloro, Tiago Debarba, Felix C. Binder

https://arxiv.org/abs/2507.20736 https://…

2025-09-03 09:36:13

Information-Nonintensive Models of Rumour Impacts on Complex Investment Decisions

Nina Bočková, Karel Doubravský, Barbora Volná, Mirko Dohnal

https://arxiv.org/abs/2509.00588

2025-08-01 09:27:41

Terahertz spin-orbit torque as a drive of spin dynamics in insulating antiferromagnet Cr$_{2}$O$_{3}$

R. M. Dubrovin, Z. V. Gareeva, A. V. Kimel, A. K. Zvezdin

https://arxiv.org/abs/2507.23367

2025-07-29 10:36:11

Dynamite: Real-Time Debriefing Slide Authoring through AI-Enhanced Multimodal Interaction

Panayu Keelawat, David Barron, Kaushik Narasimhan, Daniel Manesh, Xiaohang Tang, Xi Chen, Sang Won Lee, Yan Chen

https://arxiv.org/abs/2507.20137

2025-06-22 20:53:01

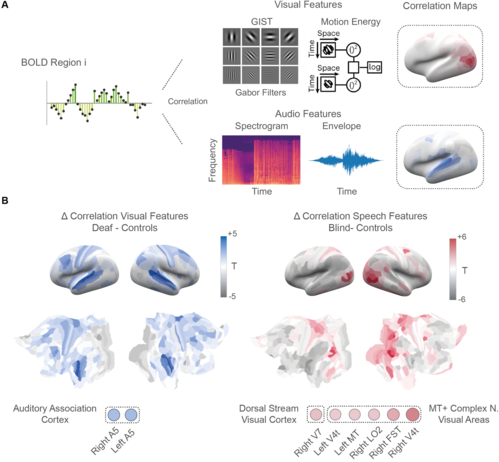

Beyond reorganization: Intrinsic cortical hierarchies constrain experience-dependent plasticity in sensory-deprived humans https://www.biorxiv.org/content/10.1101/2025.06.19.660510v1 "we confirm that auditory and speech related features are redirected to depr…